OpenAI launched GPT-5.4-Cyber on April 14, 2026: a cyber-permissive variant of GPT-5.4 built for defensive security teams and gated behind a new Trusted Access for Cyber (TAC) program. The model lowers GPT-5.4's refusal boundary for legitimate offensive-security workflows, ships native binary reverse engineering, and is aimed at vulnerability discovery, pentest support, SOC triage, and bug bounty work at scale. Access is limited to thousands of individually vetted defenders, who authenticate via strong KYC at chatgpt.com/cyber, and hundreds of teams, who go through their OpenAI representative. Approved individuals pay standard GPT-5.4 API rates; approved organizations negotiate enterprise pricing. The launch comes exactly one week after Anthropic unveiled Claude Mythos Preview and Project Glasswing. The cyber AI arms race is now a two-horse race at the frontier, with DeepSeek and Llama security fine-tunes running a distant third.

What is GPT-5.4-Cyber

GPT-5.4-Cyber is a specialized variant of OpenAI's GPT-5.4 flagship model, optimized and gated specifically for defensive cybersecurity work. Announced April 14, 2026, it is the first publicly disclosed OpenAI model to ship with a lowered refusal boundary for offensive-security content, the kind of content a standard ChatGPT instance refuses to produce because it looks adjacent to abuse.

The four facts that matter, before the nuance:

- Who gets it: only users who authenticate as security professionals via the Trusted Access for Cyber (TAC) program. Individual defenders apply at chatgpt.com/cyber. Enterprises go through their OpenAI rep.

- What it unlocks: native binary reverse engineering, lowered refusal on dual-use cyber topics, approved workflows for vulnerability research, defensive programming, and security education.

- Scale of rollout: thousands of individually-vetted defenders, hundreds of security teams. Not a general-availability release. Not even a waitlist in the normal sense — it is a KYC gate.

- Competitive context: launched exactly seven days after Anthropic's Claude Mythos Preview + Project Glasswing announcement (April 7, 2026). OpenAI's move is a direct answer to Anthropic capturing the cyber narrative with a 12-partner coalition.

The positioning is unambiguous. For two years, senior security researchers have complained that frontier LLMs refuse basic pentest queries: a CTF-level prompt gets the same canned "I cannot help with that" as a real attack prompt. GPT-5.4-Cyber is OpenAI's answer to that complaint, but the price is giving up anonymity. You want the cyber-permissive model; you get KYC'd as a defender.

Features deep dive

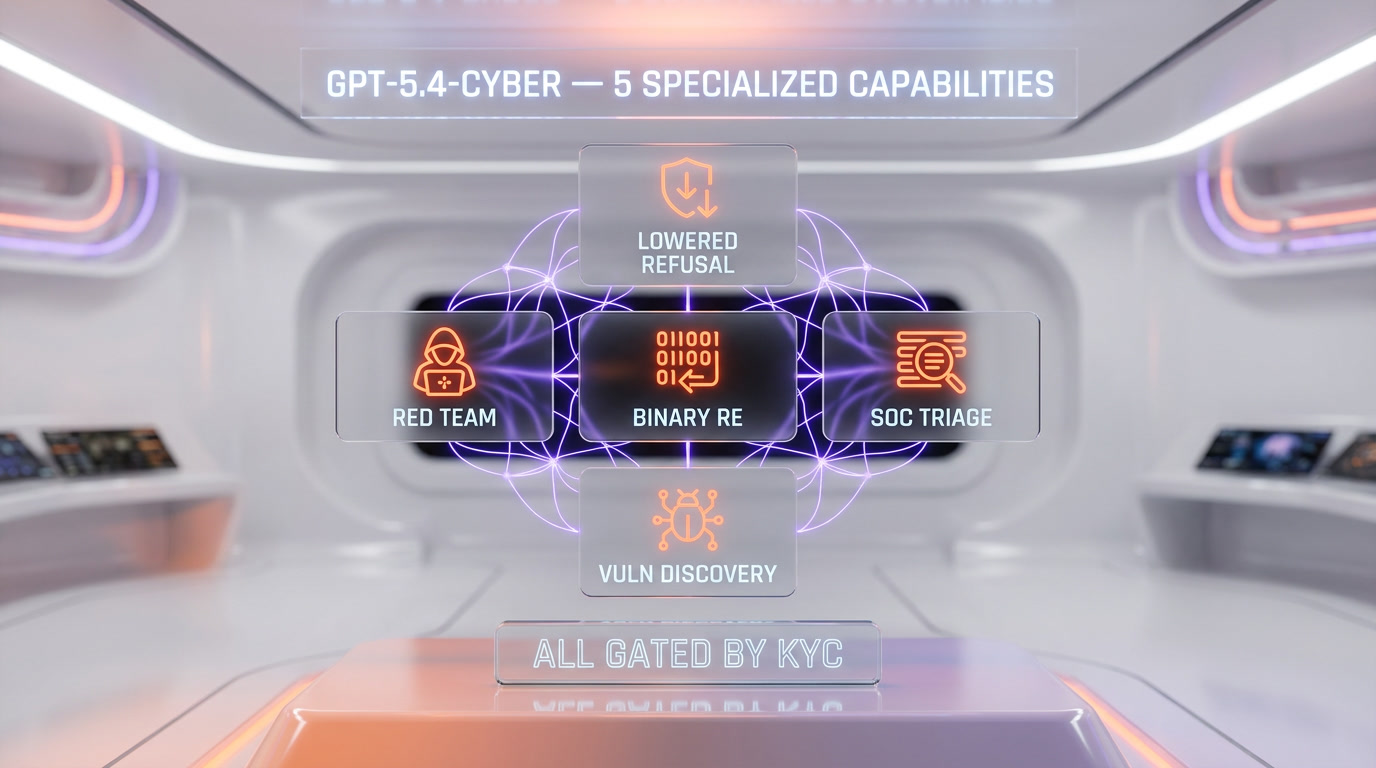

Across the public announcement, the help-net-security coverage, and the 9to5Mac technical breakdown, five capabilities separate GPT-5.4-Cyber from plain GPT-5.4.

1. Native binary reverse engineering

The headline capability. GPT-5.4-Cyber can analyze compiled binaries — Windows PE files, ELF binaries, Mach-O, firmware images — without source code. The model ingests disassembly and decompilation output, identifies malware indicators, flags vulnerability classes (stack overflows, heap corruption, use-after-free, format string bugs, insecure deserialization), and produces structured reports suitable for handoff to an incident response team.

This matters because binary analysis is one of the hardest tasks to automate in defensive security. Disassemblers and decompilers such as IDA Pro, Ghidra, and Binary Ninja have automated parts of the work for years, but interpreting their output still falls to a human analyst. GPT-5.4-Cyber is the first general-purpose LLM that ships this as a first-class capability rather than a best-effort side feature.
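As a small illustration of the container formats named above, the sketch below identifies PE, ELF, and Mach-O files from their leading magic bytes. It is a pre-processing step a team might run before handing a sample to any analysis tool, not a description of how GPT-5.4-Cyber works internally. The function names are hypothetical, and the check is deliberately shallow.

```python
from typing import Optional

# Leading "magic" bytes for the container formats named in the text.
# Mach-O universal (fat) binaries are left out on purpose: their magic,
# CA FE BA BE, is shared with Java class files.
MAGIC_SIGNATURES = [
    (b"\x7fELF", "ELF"),
    # "MZ" is the DOS header every PE file starts with. A stricter check
    # would follow the e_lfanew offset at 0x3C to the "PE\0\0" signature.
    (b"MZ", "PE"),
    (b"\xfe\xed\xfa\xce", "Mach-O"),  # 32-bit, big-endian
    (b"\xfe\xed\xfa\xcf", "Mach-O"),  # 64-bit, big-endian
    (b"\xce\xfa\xed\xfe", "Mach-O"),  # 32-bit, little-endian
    (b"\xcf\xfa\xed\xfe", "Mach-O"),  # 64-bit, little-endian
]


def identify_binary_format(header: bytes) -> Optional[str]:
    """Return 'ELF', 'PE', or 'Mach-O' from the leading bytes, else None."""
    for magic, name in MAGIC_SIGNATURES:
        if header.startswith(magic):
            return name
    return None


def identify_file(path: str) -> Optional[str]:
    """Read the first four bytes of a file and classify its container format."""
    with open(path, "rb") as f:
        return identify_binary_format(f.read(4))
```

Firmware images have no single magic number, so they fall through to `None` here and would need format-specific unpacking first.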

2. Lowered refusal boundary for dual-use work

Standard GPT-5.4 refuses queries that merely look adjacent to offensive security. Ask it to write a working exploit PoC for a known-patched CVE and you get a policy message. Ask it to walk you through the exploitation primitives of a heap overflow and you get the same policy message. Researchers have been routing around this with jailbreaks, homebrew fine-tunes, or open-source alternatives.

GPT-5.4-Cyber's refusal boundary is tuned for authenticated security professionals. The approved workflows include responsible vulnerability research (finding, documenting, and disclosing CVEs), defensive programming (writing code that resists exploitation), security education (teaching how attacks work so defenders can build mitigations), and red team support (adversarial emulation for hardening enterprise environments). The guardrails that remain are focused on harm at scale — mass attack automation, self-replicating malware design, and anything that crosses into critical-infrastructure targeting.

3. Vulnerability discovery at scale

GPT-5.4-Cyber integrates with OpenAI's Codex Security tooling, which as of April 2026 has contributed to 3,000+ critical/high vulnerability findings across customer codebases since launch. The model ingests a repository, runs static and dynamic analysis queries, validates candidate issues, and proposes patches. For open-source maintainers and bug bounty hunters, this is the workflow that justifies the KYC friction — you hand the model a fresh commit, you get back a prioritized list of likely-exploitable paths.
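To make "a prioritized list" concrete, here is a minimal sketch of how a team might rank findings once they come back. The `Finding` fields, the severity labels, and the confidence score are illustrative assumptions, not the Codex Security output format, which the announcement does not specify.

```python
from dataclasses import dataclass
from typing import List

# Lower rank sorts first. The four labels are an assumed severity scale.
SEVERITY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


@dataclass
class Finding:
    path: str          # file or code path the issue was reported in
    severity: str      # one of the keys in SEVERITY_RANK
    confidence: float  # hypothetical 0.0-1.0 score that the issue is exploitable


def prioritize(findings: List[Finding]) -> List[Finding]:
    """Order findings by severity first, then by descending confidence."""
    return sorted(findings, key=lambda f: (SEVERITY_RANK[f.severity], -f.confidence))


sample = [
    Finding("parser/decode.c", "high", 0.90),
    Finding("auth/session.c", "critical", 0.40),
    Finding("net/recv.c", "high", 0.95),
]

for finding in prioritize(sample):
    print(finding.severity, finding.confidence, finding.path)
```

Sorting on severity before confidence keeps a low-confidence critical issue above a high-confidence high issue; a team that distrusts the confidence score less might weight the two differently.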

4. Pentest and red team support

Approved red teams can use GPT-5.4-Cyber for adversarial emulation — building realistic attack chains against their own environments, generating phishing payloads for internal awareness testing, writing post-exploitation scripts for authorized engagements, and producing reproducible test cases for blue team training. The model knows current TTPs mapped to MITRE ATT&CK, can chain multi-stage scenarios, and generates output in formats compatible with existing red team tooling.

5. SOC triage and incident response

For blue teams, the model ingests alerts, log streams, and IOC feeds, correlates across sources, and produces triage summaries at a quality most SOC analysts cannot sustain at that volume. The pitch is not "replace the SOC analyst"; it is "give every SOC analyst a senior-grade assistant who reads every alert at 3am without burning out."
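The "correlates across sources" step can be pictured with a small sketch: group alerts by the indicator they share and keep only indicators reported by at least two independent sources. The alert schema and source names are assumptions made for illustration, and the sample data uses reserved documentation addresses. Nothing here reflects the model's actual pipeline.

```python
from collections import defaultdict
from typing import Dict, Iterable, List


def correlate_by_indicator(alerts: Iterable[dict], min_sources: int = 2) -> Dict[str, List[str]]:
    """Map each indicator to the sorted list of sources that reported it,
    keeping only indicators seen by at least `min_sources` distinct sources."""
    sources_by_indicator = defaultdict(set)
    for alert in alerts:
        sources_by_indicator[alert["indicator"]].add(alert["source"])
    return {
        indicator: sorted(sources)
        for indicator, sources in sources_by_indicator.items()
        if len(sources) >= min_sources
    }


# Hypothetical alerts from three telemetry sources.
alerts = [
    {"source": "edr", "indicator": "198.51.100.7"},
    {"source": "firewall", "indicator": "198.51.100.7"},
    {"source": "edr", "indicator": "203.0.113.9"},
    {"source": "proxy", "indicator": "evil.example"},
    {"source": "edr", "indicator": "evil.example"},
]

print(correlate_by_indicator(alerts))
```

An indicator that only one sensor has seen is dropped, which is a crude but common first filter before an analyst or a model writes the triage summary.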

Pricing and access — the Verification Program

Here is where GPT-5.4-Cyber breaks from standard GPT product releases. There is no self-serve signup. There is no trial. There is no consumer ChatGPT toggle. The only way in is through OpenAI's Trusted Access for Cyber program.

| Access tier | Who gets it | How to apply | Pricing |
|---|---|---|---|
| Individual defender | Verified security professionals: researchers, bug bounty hunters, independent consultants | chatgpt.com/cyber (strong KYC + identity verification) | Standard GPT-5.4 API rates for approved users |
| Security team | Vetted organizations: security vendors, SOC teams, red teams, open-source maintainer collectives | Through your OpenAI enterprise representative | Custom enterprise pricing, Zero-Data Retention (ZDR) available |
| Critical infrastructure defender | Organizations responsible for securing critical software and infrastructure | Directly via OpenAI partnerships team | Priority tier, direct OpenAI security team support |

OpenAI's public framing is "trusted access for the next era of cyber defense." The subtext is clearer: this is an OpenAI-audited whitelist, and if you fail the KYC check, you use standard GPT-5.4 like everybody else. Axios reported the TAC rollout will scale to "thousands" of individual defenders and "hundreds" of teams in the first wave.

GPT-5.4-Cyber vs Claude Mythos (Project Glasswing)

One week before OpenAI's launch, Anthropic unveiled Claude Mythos Preview and Project Glasswing on April 7, 2026. Mythos is the most capable Anthropic model on coding and agentic tasks, with standout strength on cyber — Anthropic claims Mythos has identified thousands of zero-day vulnerabilities, many critical, across every major operating system and every major web browser. Project Glasswing brings together AWS, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks as launch partners.

| Dimension | GPT-5.4-Cyber (OpenAI) | Claude Mythos (Anthropic) |
|---|---|---|
| Launch date | April 14, 2026 | April 7, 2026 |
| Model type | Specialized cyber variant of GPT-5.4 | General-purpose frontier model (cyber is a side effect of capability) |
| Access model | Individual KYC at chatgpt.com/cyber + enterprise TAC program | Invite-only via Project Glasswing coalition |
| Target scale | Thousands of vetted defenders, hundreds of teams | Coalition of 12 launch partners (AWS, Apple, Microsoft, Google, CrowdStrike, etc.) |
| Binary reverse engineering | Native, first-class capability | Strong but not explicitly positioned as headline feature |
| Lowered refusal | Yes, tuned for authenticated defenders | Not explicitly; Anthropic emphasizes safety and does not ship a permissive variant |
| Vulnerability discovery track record (claimed) | 3,000+ via Codex Security since launch | Thousands of zero-days across all major OS and browsers |
| Pricing | Standard GPT-5.4 API rates for approved users | $25 per million input tokens / $125 per million output tokens for Glasswing participants |
| Public availability | Gated but scaling | Not planned for general availability |
| Philosophical stance | Trust-and-verify: give vetted defenders the sharp tool | Coalition-and-contain: deploy to partners who can handle the risk |

The strategic divergence is the story. OpenAI is betting on scale: enroll thousands of verified individuals and let the market decide what security work looks like with a permissive LLM. Anthropic is betting on selectivity: hand the sharpest model to a dozen partners with infrastructure responsibility and avoid a broad release. CISOs will be tempted to pick a lane. Those who can get access to both will probably run both.

GPT-5.4-Cyber vs open-source alternatives

The open-source cyber LLM scene is real but uneven. DeepSeek's security fine-tunes, Llama-3 CyberSec derivatives, and a handful of community-hosted Mistral variants cover, by our rough estimate, about 70% of what GPT-5.4-Cyber does: on queries where the baseline model's reasoning is strong enough, the open-source option works. But three gaps matter: baseline reasoning quality, native binary reverse engineering, and vulnerability-discovery tooling.

| Dimension | GPT-5.4-Cyber | DeepSeek security fine-tunes | Llama security derivatives |
|---|---|---|---|
| Baseline reasoning quality | Frontier (GPT-5.4) | Mid-frontier | Mid-frontier (Llama-3/4 class) |
| Binary reverse engineering | Native, first-class | Partial, prompt-engineered | Partial, prompt-engineered |
| Vuln discovery depth | Codex Security integration | Community tooling | Community tooling |
| Refusal boundary | Tuned for defenders | Effectively none | Effectively none |
| Sovereignty / air-gap | Cloud-only, KYC-gated | Self-host, no KYC | Self-host, no KYC |
| Cost | Enterprise GPT-5.4 rates | Infrastructure cost only | Infrastructure cost only |
| Best for | Enterprise defenders, bug bounty at scale, managed SOCs | Sovereignty-focused teams, offensive labs who will not KYC | Budget-constrained teams, academic research |

The honest 2026 read: for an enterprise defender who can pass KYC and pay enterprise rates, GPT-5.4-Cyber is the sharper tool. For a nation-state-adjacent team, a privacy-focused research lab, or anybody who will not KYC to a US AI lab, the DeepSeek and Llama paths are the real alternatives — and they are improving every quarter.

Real-world use cases

Based on OpenAI's approved-workflow list and the early access reporting, here are the workflows where GPT-5.4-Cyber earns its keep:

- Red team adversarial emulation. Authorized red teams use GPT-5.4-Cyber to build realistic attack chains against their own infrastructure. Chain phishing, initial access, lateral movement, persistence, exfiltration — with the model writing the payloads, the C2 config, and the post-exploitation scripts. What used to take a three-person red team a week now takes one senior operator plus the model.

- SOC triage and incident response. Blue teams pipe alert streams, PCAPs, and log dumps into the model. Get back a triaged incident summary with IOCs, mapped TTPs, and recommended next steps. The value prop is not "replace the analyst" — it is "let the analyst cover 10x more ground without missing the real incident buried in the noise."

- Bug bounty at scale. Independent researchers feed target scope plus public attack surface into the model and get back a prioritized list of candidate issues, validated by the model's code review pass. For a solo bug bounty hunter, this is the workflow that pays for the API bill.

- Vulnerability research and responsible disclosure. Academic researchers and corporate security labs use GPT-5.4-Cyber for the tedious middle of the vuln research loop — fuzzing output triage, root-cause analysis on crash dumps, CVE documentation drafting. The glamour work stays human; the model eats the grunt work.

- Defensive code review. Engineering teams integrate GPT-5.4-Cyber into their PR review pipeline via Codex Security. Every commit gets a security pass before merge. For teams with no dedicated security engineer, this is the closest thing to having a senior AppSec person on staff.

Safety safeguards — what it won't do

OpenAI's messaging is deliberate: lowered refusal, not zero refusal. The model is tuned to say yes on legitimate defensive and dual-use work, and to say no on content that crosses into abuse at scale.

What GPT-5.4-Cyber refuses, even for verified defenders:

- Self-replicating malware design. The model will not help design worms, ransomware with mass-deployment characteristics, or malware optimized for spread rather than targeted impact.

- Critical-infrastructure attack assistance. Power grid, water systems, hospital networks, election systems — the refusal boundary here is hard and does not move for any verification tier.

- Weapons of mass effect. Anything that crosses into CBRN (chemical, biological, radiological, nuclear) adjacency is refused across the board.

- Targeted abuse of individuals. Stalkerware design, mass spearphishing against non-consenting targets, doxxing automation — refused.

- Zero-day weaponization for unknown targets. The model will help with responsible vuln research against your own targets or authorized scope. It will not help weaponize a 0-day for an unspecified victim.

The Zero-Data Retention (ZDR) configuration matters for enterprise buyers. Default TAC deployments can be configured for ZDR, which means prompts and outputs are not retained by OpenAI — a requirement for regulated industries. Third-party platform deployments carry ZDR limitations, which OpenAI has disclosed as part of the rollout.

The cyber AI arms race

Two frontier labs shipped cyber-specialized access exactly one week apart. That is not a coincidence. It is the start of a two-horse race, with DeepSeek and Meta's open-source ecosystem running a distant third.

Three forces are driving the race:

- Defender asymmetry is the narrative. Both OpenAI and Anthropic are framing their cyber launches as "give the defenders the same sharp tool the attackers already have access to." The underlying claim is that attackers are already using unconstrained LLMs — open-source, jailbroken, or built in-country — and that refusing to give defenders equivalent capability is the unsafe option.

- Enterprise cyber is a $200B market. Security tooling is the largest line item in most enterprise IT budgets after cloud infrastructure. Capturing 5% of that market would be worth roughly $10 billion to any frontier lab.

- National security matters to the roadmap. Both OpenAI and Anthropic have publicly-reported relationships with the US government and adjacent agencies. A frontier-lab cyber product is a national security asset, not just a commercial product. That drives the gated access model — neither lab can afford to have their most capable cyber variant abused, and both are signing up for the responsibility that comes with the capability.

Read our broader coverage of the OpenAI-Anthropic-Google pact on Chinese espionage for the geopolitical backdrop on why these cyber launches are arriving in April 2026 specifically.

What CISOs should know

If you are a CISO reading this on Monday morning, here are the five things that matter for your planning cycle:

- Enroll your security team in TAC this quarter. The bar for access is strong KYC plus demonstrable security role — not impossibly high. Put your AppSec leads, your SOC managers, and your red team ops through the process. The model is live; competitors are getting access; waiting is a cost.

- Evaluate both GPT-5.4-Cyber and Claude Mythos if you can. If your organization is on the Project Glasswing partner list or can negotiate access, run both in parallel on the same workflows. The models have different strengths: OpenAI is ahead on binary reverse engineering; Anthropic is ahead on long-horizon agentic coding. You want both data points.

- Budget for the API costs. Enterprise GPT-5.4 rates on cyber workflows are not cheap at scale — expect thousands of dollars per month for a mid-sized SOC that goes hard on triage automation. Budget accordingly. The Mythos rate card at $25 per million input tokens and $125 per million output tokens gives you a sense of where frontier cyber compute is priced.

- Update your acceptable-use policies. Your org's AUP likely has language that forbids "hacking tools" in broad strokes. GPT-5.4-Cyber is a hacking tool by any fair reading. Work with your legal team to carve out an authorized-use pathway for your security org before individual analysts start using the tool on personal accounts.

- Assume your adversaries have equivalent capability. Whatever GPT-5.4-Cyber can do for your defenders, a DeepSeek or Llama derivative with equivalent fine-tuning can do for your attackers. Budget for the defender-side tooling, but harden your systems assuming the attacker has the same quality of LLM assistance. That is the 2026 threat model.
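For the budgeting point, a back-of-the-envelope estimate helps. The sketch below uses the Mythos rate card quoted above ($25 per million input tokens, $125 per million output tokens) as a stand-in, since OpenAI has not published a separate GPT-5.4-Cyber price list. The alert volume and tokens-per-alert figures are assumptions chosen only to show the arithmetic; substitute your own.

```python
# Rates quoted in this article for Claude Mythos (Project Glasswing participants).
# Used here as a reference point only; GPT-5.4-Cyber pricing is not published.
INPUT_USD_PER_MILLION = 25.0
OUTPUT_USD_PER_MILLION = 125.0


def monthly_cost_usd(
    alerts_per_month: int,
    input_tokens_per_alert: int,
    output_tokens_per_alert: int,
    input_rate: float = INPUT_USD_PER_MILLION,
    output_rate: float = OUTPUT_USD_PER_MILLION,
) -> float:
    """Estimate monthly spend from alert volume and per-alert token counts."""
    input_tokens = alerts_per_month * input_tokens_per_alert
    output_tokens = alerts_per_month * output_tokens_per_alert
    return (input_tokens / 1_000_000) * input_rate + (output_tokens / 1_000_000) * output_rate


# Assumed workload: 20,000 alerts a month, 3,000 tokens in and 500 tokens out per alert.
estimate = monthly_cost_usd(20_000, 3_000, 500)
print(f"${estimate:,.2f}")  # prints $2,750.00
```

Under those assumptions, input accounts for $1,500 and output for $1,250, which lands in the "thousands of dollars per month" range described above. Output tokens cost five times as much as input tokens at this rate card, so shorter triage summaries move the total more than trimming the log context does.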

Our verdict

GPT-5.4-Cyber is the sharpest commercially available cyber LLM shipped to date. The lowered refusal boundary is the unlock that senior security researchers have been asking for since GPT-4. The native binary reverse engineering is a legitimate category leader. The Trusted Access for Cyber program is a thoughtful answer to the "how do you gate this" problem: scale through KYC, not through waitlist theater.

It is not perfect. The KYC gate will lock out legitimate independent researchers who refuse to hand KYC data to a US AI lab — and those researchers will route around to DeepSeek and Llama derivatives. The pricing is opaque ("standard GPT-5.4 rates for approved users" is not a pricing page). The model ships exactly one week after Anthropic's Mythos launch, which will invite fair comparisons on cyber workloads where Anthropic's coding lead may matter more than OpenAI's binary reverse engineering lead.

Score: 9 out of 10. Half a point off for the pricing opacity. Half a point off pending 90-day field data on the approval rate for independent defenders. Our short-form verdict: if you are a vetted defender and you can pass KYC, apply this week. If you are a CISO, get your senior team into TAC by end of Q2. If you are an adversary reading this, assume the defenders you are targeting now have a capability they did not have in March. The cyber AI arms race started on April 7 with Claude Mythos. It went two-horse on April 14 with GPT-5.4-Cyber. It does not slow down from here.

For more on the broader arms race, see our coverage of Claude Mythos and Project Glasswing, the OpenAI-Anthropic-Google pact on Chinese espionage, and the full ChatGPT and Claude tool pages on ThePlanetTools.

Frequently asked questions

When did OpenAI launch GPT-5.4-Cyber?

OpenAI launched GPT-5.4-Cyber on April 14, 2026 — exactly one week after Anthropic unveiled Claude Mythos Preview and Project Glasswing on April 7, 2026. The launch was disclosed via OpenAI's official blog post "Trusted access for the next era of cyber defense" and confirmed across Axios, Bloomberg, The Hacker News, 9to5Mac, and Help Net Security on April 14 and 15, 2026.

Who can access GPT-5.4-Cyber?

Access is limited to verified cybersecurity professionals through the Trusted Access for Cyber (TAC) program. Individual defenders apply at chatgpt.com/cyber and pass strong KYC and identity verification. Enterprise teams request access through their OpenAI representative. The initial rollout targets thousands of individually-vetted defenders and hundreds of security teams — it is not a general-availability release.

How much does GPT-5.4-Cyber cost?

OpenAI has not published a separate GPT-5.4-Cyber price list. Approved individual users pay standard GPT-5.4 API rates. Enterprise teams negotiate custom pricing through their OpenAI rep, with Zero-Data Retention (ZDR) available on qualifying deployments. For reference, Anthropic's Claude Mythos is priced at $25 per million input tokens and $125 per million output tokens for Project Glasswing participants.

What is the Trusted Access for Cyber (TAC) program?

TAC is OpenAI's gating and verification framework for GPT-5.4-Cyber. It performs strong KYC and identity checks on every applicant, maintains an audited whitelist of approved individuals and organizations, and scales iteratively as OpenAI learns about model risks in the wild. Approved use cases include vulnerability research, defensive programming, security education, and red team support. Critical-infrastructure and CBRN-adjacent requests remain hard-refused even for vetted users.

How is GPT-5.4-Cyber different from standard GPT-5.4?

GPT-5.4-Cyber is a specialized variant with three key differences. First, it ships native binary reverse engineering as a first-class capability. Second, the refusal boundary is lowered for legitimate offensive-security workflows — queries that standard GPT-5.4 refuses as policy violations are answered for authenticated defenders. Third, it is gated behind KYC through the TAC program rather than being available to any ChatGPT user.

GPT-5.4-Cyber vs Claude Mythos — which should a CISO pick?

If you can, run both. GPT-5.4-Cyber is a specialized cyber variant with lowered refusal and native binary reverse engineering, gated to thousands of individual defenders. Claude Mythos is a general frontier model with strong cyber as a side effect of overall capability, gated through the Project Glasswing coalition of 12 partners (AWS, Apple, Microsoft, Google, CrowdStrike, and others). OpenAI scales through KYC. Anthropic scales through coalition. Most serious CISOs will want both data points for 2026 procurement.

Can GPT-5.4-Cyber write malware?

For authorized, defensive workflows — yes, within limits. The model can help build red team adversarial-emulation payloads, write PoC exploits for known-patched CVEs as part of responsible vulnerability research, and generate test malware for blue team detection engineering. It refuses on self-replicating malware, ransomware optimized for mass deployment, critical-infrastructure targeting, CBRN-adjacent content, and weaponization of zero-days for unspecified victims. The refusal boundary is lower than standard GPT-5.4 but it is not zero.

What are the open-source alternatives to GPT-5.4-Cyber?

Three ecosystems matter. DeepSeek's security-tuned models ship with effectively no refusal boundary and can be self-hosted — the sovereignty pick for teams who will not KYC to a US lab. Meta's Llama security derivatives (community and commercial fine-tunes) cover a similar budget-tier role. A handful of Mistral-based community cyber models round out the open ecosystem. None match GPT-5.4-Cyber's baseline reasoning or native binary reverse engineering yet, but the gap is closing quarter over quarter.

Does GPT-5.4-Cyber have binary reverse engineering?

Yes. Native binary reverse engineering is the headline feature of GPT-5.4-Cyber. The model analyzes compiled software — PE, ELF, Mach-O, firmware — without source code access, identifies malware indicators, flags vulnerability classes, and produces structured reports. This is the single largest technical separation between GPT-5.4-Cyber and both standard GPT-5.4 and most open-source cyber LLMs as of April 2026.

Why did OpenAI launch GPT-5.4-Cyber one week after Claude Mythos?

The timing is not a coincidence. Anthropic's Claude Mythos Preview and Project Glasswing announcement on April 7, 2026 captured the cybersecurity AI narrative with a 12-partner coalition including AWS, Apple, Microsoft, Google, and CrowdStrike. OpenAI's April 14 launch is a direct competitive response — a different strategic bet (scale through individual KYC rather than coalition) aimed at the same enterprise cyber market. Expect ongoing back-and-forth through 2026 as both labs iterate on cyber-specific capability, pricing, and access.