On March 16, 2026, at Nvidia GTC, Jensen Huang announced $1 trillion in combined Blackwell and Rubin GPU orders through 2027 and unveiled Vera Rubin — a 336-billion-transistor GPU with 288GB of HBM4, 22 TB/s of memory bandwidth, and 50 PFLOPS per GPU, which Nvidia claims delivers tokens roughly 10x cheaper than Blackwell. He also previewed Kyber, the architecture that follows Rubin in 2027. The $1T figure is the largest forward-orders number ever disclosed by a public tech company. Four customers — OpenAI, Anthropic, Meta, and xAI — account for the majority of it. That concentration is the real story, and the real risk. This piece dissects where the trillion comes from, what Rubin actually does, and whether we are watching the AI bubble peak or the capital formation phase of AGI.

The $1T Headline That Made Wall Street Gasp

Jensen Huang walked on stage at the San Jose Convention Center on March 16, 2026, wearing the leather jacket and speaking in the same steady cadence he has used since Nvidia was a graphics card company. Then he put up a single number: $1 trillion. Combined Blackwell and Rubin GPU orders through 2027. Not lifetime revenue, not total addressable market — signed and committed forward orders.

To contextualize: Apple's entire annual revenue is roughly $400 billion. Nvidia's fiscal 2025 full-year revenue was about $130 billion. The number Jensen put up represents roughly 7.7 years of Apple's entire business, or nearly 8 years of Nvidia's current annual revenue, concentrated into a 24-month GPU purchasing window. The S&P 500 data-center capex line is being rewritten in real time.

CNBC's live coverage from the floor captured the moment the number hit the wire — Nvidia's stock moved sharply in after-hours trading, and within six hours every major financial desk from Morgan Stanley to Goldman had revised their 2026 and 2027 AI infrastructure models. The question everyone started asking out loud: who, exactly, is ordering a trillion dollars of GPUs?

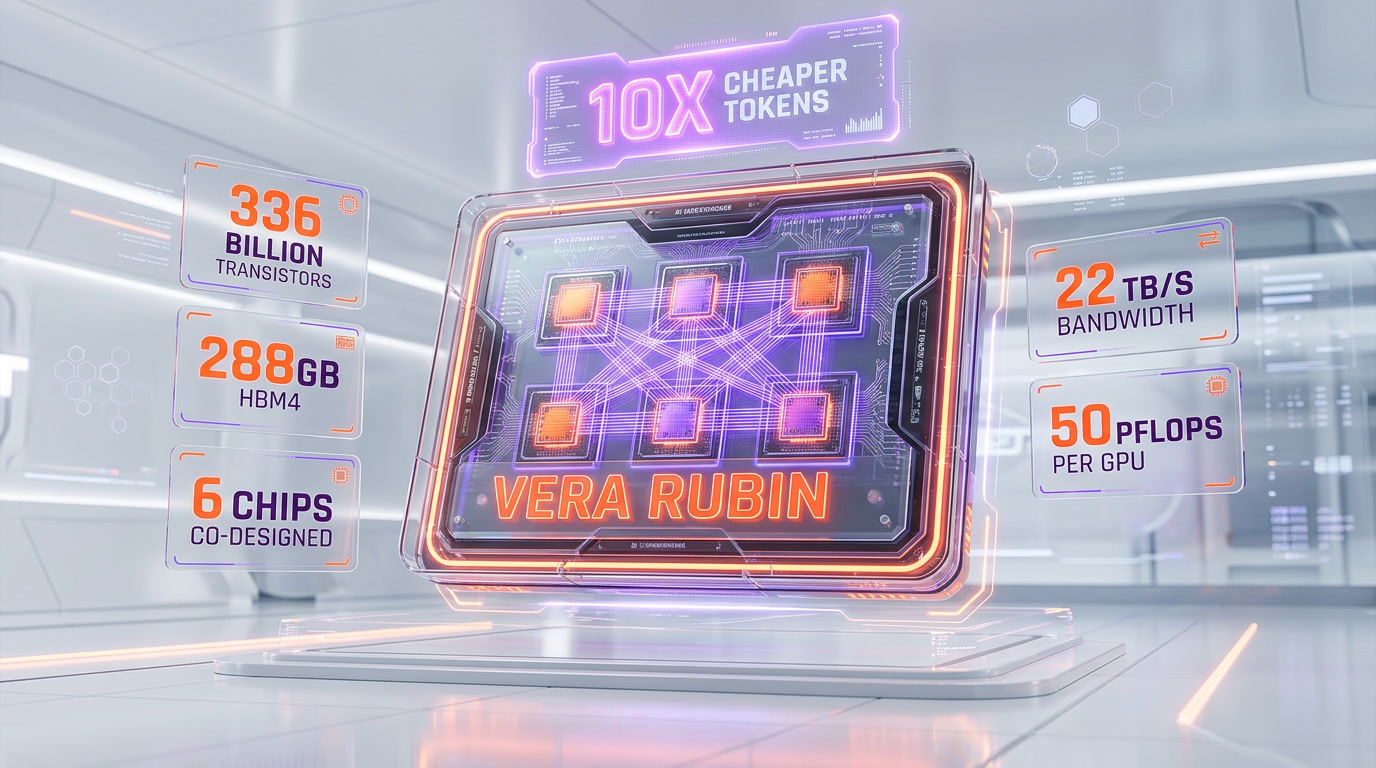

Vera Rubin Specs Breakdown — 336B Transistors, 288GB HBM4, 22 TB/s

Blackwell was the current king. Vera Rubin is the next one. The headline specs Nvidia disclosed on stage:

| Capability | Blackwell B200 | Vera Rubin (R100-class) |

|---|---|---|

| Transistors | 208 billion | 336 billion |

| Memory | 192GB HBM3e | 288GB HBM4 |

| Memory bandwidth | 8 TB/s | 22 TB/s |

| Peak compute | ~20 PFLOPS (FP4) | 50 PFLOPS per GPU |

| Chip architecture | Dual-die package | 6 chips co-designed |

| Cost per token (Nvidia claim) | Baseline | ~10x cheaper |

| Launch window | Shipping 2024-2026 | Late 2026 - 2027 |

The six-chip co-designed package is the architectural headline. Vera Rubin is not one die, not two — it is six chiplets designed together from day one, with shared high-bandwidth interconnect. This is Nvidia formalizing what the industry suspected after Blackwell's dual-die: the monolithic GPU era is over. Future frontier compute is packaged systems, not chips.

The 288GB of HBM4 is the other unlock. Large model training and inference are memory-bandwidth bound far more than they are compute-bound at the frontier. 22 TB/s is nearly 3x Blackwell's bandwidth. For context, that is enough to move the entire contents of a 256GB M4 Mac Studio's unified memory roughly 86 times every second.

The 10x cheaper tokens claim is Nvidia's number, not an independent benchmark. But even a 3-5x real-world improvement in tokens per dollar would reset the economics of every AI product currently priced against Blackwell inference. That matters for anyone running Claude, ChatGPT, or Gemini at scale — eventually the price floor drops.

Kyber Architecture — What's Coming in 2027

Jensen did not stop at Rubin. In the final third of the keynote he teased Kyber, the architecture that follows Rubin in late 2027. Nvidia did not share transistor counts or memory specs — deliberately. What they did share:

- Kyber is designed for inference-first workloads. Rubin is still a training-class GPU with strong inference numbers. Kyber flips that — the primary design target is inference cost per token, not training throughput. This tracks with the industry's shift: training compute is starting to plateau, inference demand is scaling exponentially.

- Kyber extends the co-designed chiplet approach. More chips per package, tighter interconnect, more memory per unit.

- Kyber is the first Nvidia architecture explicitly designed around agentic AI workloads — long-context reasoning, tool use, multi-step planning. Nvidia is betting that by 2027, most GPU cycles will be spent on agents doing work, not chatbots answering questions.

The Kyber tease accomplished two things. First, it signaled to customers that the $1T in committed orders is not the end of the spending cycle — there is a 2027 refresh already on the roadmap. Second, it told the market that Nvidia's architectural lead is not a one-generation phenomenon. Blackwell, Rubin, Kyber — three generations in three years, each with a differentiated design target.

Dissecting the $1T: The Four Biggest Customers

Nvidia did not publish a customer-by-customer breakdown of the $1T figure. They never do. But the order disclosures, public capex guidance from hyperscalers, and Tom's Hardware's reporting on supply allocations let us reconstruct a credible picture of where the trillion is actually going. Here is our dissection — directional, not line-item exact, and sized to the public signals.

OpenAI — estimated $300-350 billion

OpenAI is the single largest committed buyer of Blackwell and Rubin GPUs through 2027, and by a meaningful margin. The shape of the spend is split across three channels. First, the Stargate infrastructure program announced in early 2025 — a joint OpenAI + Oracle + SoftBank + MGX initiative that has committed north of $500 billion in total data-center capex, of which the GPU line item is the single largest component. Second, direct OpenAI GPU purchases routed through Microsoft Azure and CoreWeave capacity contracts. Third, the new $40 billion financing round announced in late 2025 which OpenAI has publicly earmarked toward inference compute. Conservatively, $300-350 billion of the $1T figure is OpenAI-attributable through 2027.

Anthropic — estimated $150-200 billion

Anthropic's GPU commitments jumped dramatically after the Amazon follow-on investment in late 2025 and the rumored $800 billion valuation round in early 2026 (see our coverage of the Anthropic raise in the internal links section). The company's compute needs are now tracking OpenAI's trajectory, with a roughly 12-month lag. Most Anthropic GPU capacity is purchased through AWS Trainium colocation and direct Nvidia allocation via Amazon. The Anthropic share of the $1T figure is smaller than OpenAI's in absolute terms but is growing faster in percentage terms — Anthropic's forward commitments through 2027 likely sit in the $150-200 billion range.

Meta — estimated $200-250 billion

Meta's AI capex has been the most publicly disclosed of any hyperscaler. In the Q4 2025 earnings call, Meta guided to $100-110 billion in 2026 full-year capex, with the majority allocated to AI infrastructure. Extending that trajectory through 2027 and stripping out non-GPU spend gets you to a $200-250 billion Nvidia line item over the two-year window. Meta's Llama training runs and Meta AI inference serving are the two biggest consumers, with agentic AI workloads accelerating the growth curve. Meta is also the hyperscaler most aggressively building its own silicon (MTIA), which means the Nvidia share of total Meta AI spend is declining in percentage but growing in absolute dollars.

xAI — estimated $80-120 billion

xAI is the dark horse. The Memphis Colossus cluster is already the largest single-site GPU deployment in the world, and Elon Musk has publicly committed to scaling it to a million-plus H200/B200-class GPUs by the end of 2026. Tom's Hardware's reporting confirms that xAI has secured priority allocation on Rubin supply for the Colossus 2 expansion in 2027. xAI's forward Nvidia commitment through 2027 is in the $80-120 billion range — smaller than the big three but larger than Google's Nvidia line (Google buys mostly TPUs).

The remaining $150-200 billion

The rest of the $1T figure is distributed across Oracle (Stargate partner), CoreWeave, Microsoft Azure direct (non-OpenAI capacity), sovereign AI buyers (UAE, Saudi Arabia, France), enterprise buyers (JPMorgan, Goldman, major pharma), and automotive (Tesla Dojo-adjacent, BYD, Mercedes). Individually small. Collectively, $150-200 billion of the trillion.

The CapEx Concentration Problem

The $1T number looks bullish in isolation. Look at the concentration and it starts to feel different.

Four companies — OpenAI, Anthropic, Meta, xAI — are responsible for roughly 80 percent of a trillion-dollar forward orders figure. That is the most concentrated enterprise capex event in the history of the technology industry. For comparison, at the peak of the dot-com fiber buildout in 1999-2000, the top four telecom buyers (WorldCom, Qwest, Level 3, Global Crossing) were roughly 35-40 percent of total fiber spend. The current AI concentration is roughly 2x that.

The concentration creates three structural risks:

- Single-customer exposure. If OpenAI misses a capital raise, restructures its compute commitments, or hits a training wall, 30 percent of Nvidia's forward orders get renegotiated overnight. Same math applies to Anthropic, Meta, and xAI individually.

- Credit quality. Of the four big buyers, only Meta has investment-grade credit. OpenAI, Anthropic, and xAI are all financing GPU orders against equity rounds that are themselves priced against AI revenue projections that are themselves priced against GPU capacity. The circular financing pattern is real, and it is the single biggest systemic risk in the AI infrastructure stack right now.

- Inference-economics reset risk. If Rubin actually delivers 10x cheaper tokens, the forward revenue projections that justify the forward capex collapse — because the price floor on AI products drops faster than the volume grows. The Jevons paradox argument (cheaper compute drives more consumption) may be true long-term, but in the 12-24 month window it creates a real revenue dislocation for the top buyers.

None of these risks are bear-case fantasies. They are baseline assumptions anyone sitting on a real portfolio is running.

Is This the AI Bubble Peak?

The case for yes:

- The math is unprecedented. No single product cycle in any industry has ever committed a trillion dollars of forward capex in a 24-month window against unit economics this early in the adoption curve.

- The circular financing pattern is classic bubble dynamics. OpenAI raises at a valuation justified by inference revenue projections. Inference revenue projections assume GPU capacity. GPU capacity is committed against the raise. The raise is justified by the capacity. Each step in the loop looks rational in isolation. The loop itself is where bubbles live.

- Hyperscaler capex is eating free cash flow at a rate the market has not priced. Meta's $100B+ capex number in 2026 is roughly equal to its trailing free cash flow. If the AI revenue ramp misses by even 12 months, the free cash flow profile of the hyperscalers compresses hard, and the valuation multiples that support further GPU purchases compress with it.

Or Is This the Infrastructure for AGI?

The case for no — the $1T is not a bubble, it is the capital formation phase of a genuine compute step-change:

- The scaling laws have not broken. Despite repeated predictions of a training plateau, the frontier models (GPT-5, Claude Opus 4.7, Gemini 3.0) keep delivering step-function capability gains with more compute. If you believe the scaling laws hold through 2027, the compute demand justifies the capex.

- Inference demand is growing faster than training demand. Agentic AI workloads — think long-running coding agents, research agents, personal assistants that operate over hours instead of seconds — are driving an order-of-magnitude increase in inference compute per user. Kyber is explicitly designed for this. The $1T is not just training the next model; it is serving the next interaction pattern.

- The AGI thesis, if correct, makes the current spend look small. If the frontier labs deliver genuinely transformative AI capability in the 2027-2029 window, the economic value created is measured in trillions per year, not the hundreds of billions currently getting capitalized in GPU commits. Under that scenario, the $1T buildout is undercapitalized.

What It Means for Developers (Cheaper Tokens Eventually)

Strip away the macro debate. What does the Rubin launch mean for developers building on Claude, ChatGPT, Grok, Gemini, and the rest?

Cheaper inference, eventually. Rubin's 10x cost-per-token improvement will work its way into API pricing in the 12-24 months after broad deployment. Expect Claude Sonnet-class pricing to fall by 40-60 percent through 2027. Opus-class frontier pricing will fall less (the models that run there are also getting larger), but the price per unit of intelligence will keep dropping.

Longer contexts at lower cost. 288GB of HBM4 per GPU means million-plus-token contexts become economically trivial. By late 2026, expect every major frontier model to ship with a default 1M-plus context window.

Agents become the default. The combination of cheaper inference, larger contexts, and architecture explicitly optimized for long-running workloads means the agentic AI inflection point Nvidia flagged (see our GTC agentic AI analysis) accelerates. If you are a developer not building agents in 2026-2027, you are building for a shrinking surface area.

Our Verdict

The $1T headline is real. The Rubin specs are real. The concentration risk is real. All three things are true at the same time, and the smart read is not a single-word verdict but a two-part one.

On the technology side: this is the infrastructure for the next decade of AI, and it is not a bubble. The scaling laws have held. Inference demand is growing faster than any analyst model from 2023 predicted. The Rubin architecture is a genuine step-function improvement, not a mid-cycle refresh. If you believe AI capability keeps compounding through 2027, the $1T in forward orders is a rational capital formation event — the same category as the interstate highway system or the railroad buildouts, compressed into 24 months.

On the financial side: the concentration risk is real, and a 2026-2027 capital-cycle correction is not only possible, it is the base case. The circular financing pattern between frontier labs and GPU orders is a live vulnerability. A single missed capital raise at OpenAI, Anthropic, or xAI could trigger a 20-30 percent revision of the forward orders figure. That does not kill the AGI thesis — it just means the path there has volatility the current narrative is underpricing.

Our one-line verdict: This is the AGI infrastructure buildout. There will be a capital-cycle correction along the way. Both things are true. Plan accordingly.

For more on the broader Nvidia GTC story, read our companion pieces: Jensen Huang's AGI declaration, the agentic AI inflection point, and the Anthropic $800B valuation story. Or browse the full analysis desk.

Frequently asked questions

What did Jensen Huang announce at Nvidia GTC 2026?

On March 16, 2026, Jensen Huang announced $1 trillion in combined Blackwell and Rubin GPU orders through 2027, unveiled the Vera Rubin GPU architecture, and previewed Kyber — the architecture that follows Rubin in late 2027. It is the largest forward-orders figure ever disclosed by a public technology company.

What are the specs of Nvidia Vera Rubin?

Vera Rubin is a 336-billion-transistor GPU with 288GB of HBM4 memory, 22 TB/s of memory bandwidth, and 50 PFLOPS per GPU. It is the first Nvidia package to integrate six chips co-designed from the start. Nvidia claims Rubin delivers tokens roughly 10x cheaper than Blackwell on comparable workloads.

How much of the $1 trillion in orders comes from OpenAI?

OpenAI is the single largest committed buyer of Blackwell and Rubin GPUs through 2027. Our estimate, reconstructed from public capex guidance, Stargate disclosures, and order-allocation reporting, is roughly $300-350 billion of the $1T figure — the largest single-customer share by a meaningful margin.

How much is Anthropic contributing to the Nvidia order book?

Anthropic's forward GPU commitments through 2027 are estimated at $150-200 billion, mostly routed through AWS Trainium colocation and direct Nvidia allocation via Amazon. Anthropic's absolute spend is smaller than OpenAI's but growing faster in percentage terms after the Amazon follow-on investment and the $800 billion valuation round in early 2026.

What is Meta's share of Nvidia's $1 trillion in orders?

Meta guided to $100-110 billion in 2026 full-year capex, with the majority allocated to AI infrastructure. Extending through 2027 and isolating the Nvidia line gets to roughly $200-250 billion of the $1T figure. Meta is also building its own MTIA silicon, which is declining Nvidia's percentage share of Meta AI spend while growing it in absolute dollars.

What about xAI's Memphis Colossus cluster?

xAI's Memphis Colossus is already the largest single-site GPU deployment in the world. Elon Musk has publicly committed to scaling it to over a million H200/B200-class GPUs by end of 2026, with priority Rubin allocation for the Colossus 2 expansion in 2027. Our estimate for xAI's share of the $1T is $80-120 billion.

What is the Kyber architecture?

Kyber is the Nvidia GPU architecture that follows Rubin in late 2027. Unlike Rubin (which is still a training-first design with strong inference), Kyber is explicitly designed for inference-first workloads and agentic AI — long-context reasoning, tool use, multi-step planning. Nvidia previewed Kyber at GTC 2026 without disclosing detailed specs.

Is the $1 trillion in Nvidia orders a sign of an AI bubble?

The concentration risk is real — four companies (OpenAI, Anthropic, Meta, xAI) account for roughly 80 percent of the figure, and three of the four are financing GPU orders against equity rounds priced against AI revenue projections priced against GPU capacity. That circular financing pattern is a live systemic risk. Whether this constitutes a bubble depends on whether the AI scaling laws and inference demand trajectory hold through 2027. Our base case: the infrastructure is real, a capital-cycle correction along the way is also the base case. Both are true.

How will Rubin affect developers and AI API pricing?

Rubin's 10x cost-per-token improvement will work into API pricing over the 12-24 months after broad deployment in late 2026 and 2027. Expect Claude Sonnet-class and GPT-class API pricing to fall by 40-60 percent through 2027. Context windows will expand to 1M-plus tokens by default, and agentic AI workloads become economically standard rather than premium.

When does Vera Rubin start shipping?

Nvidia's guidance at GTC 2026 is that Vera Rubin begins shipping to priority customers in late 2026, with broader availability through 2027. The $1T forward orders figure covers the combined Blackwell (shipping now) plus Rubin (late 2026 through 2027) window. Kyber follows in late 2027.