Claude Code vs OpenAI Codex: We Tested Both Daily for 60 Days — Here's the Winner (2026)

We tested Claude Code and OpenAI Codex daily for 60 days on a real Next.js codebase. Benchmarks, pricing, memory, MCP — here's the 2026 winner.

Feature Comparison

| Feature | Claude Code | OpenAI Codex |
|---|---|---|
| Tool nature | Terminal-first agent with desktop app, IDE extensions, and Web/Slack access | Local terminal-based coding agent (Rust), TUI mode, with desktop app |
| Entry pricing | $20 per month (Pro) | Bundled with ChatGPT Plus: $20 per month |
| Top tier pricing | Max 20: $200 per month (~20x Pro usage); Max 5: $100 per month | Bundled with ChatGPT Pro: $200 per month (higher caps, deeper reasoning) |
| Models supported | Claude Opus 4.6 and Sonnet 4.6 (Anthropic-only) | GPT-5.4, GPT-5.3-Codex, switchable via /model |
| Context window | 1M tokens with Opus 4.6 (76% MRCR v2 recall); thorough multi-file traces | 1M tokens with GPT-5.4; completes equivalent traces in roughly half the wall-clock time |
| SWE-bench Verified | ~80.9% with Opus 4.6 | ~80% with GPT-5.4 (statistical tie) |
| SWE-bench Pro | Trails Codex by a few points on the harder, less contaminated benchmark | ~57.7% with GPT-5.4; the benchmark OpenAI publicly cites in 2026 |
| Terminal-Bench 2.0 | ~65.4% | ~77.3%; clear lead on terminal-native workflows (12-point gap) |
| Token efficiency on Terminal-Bench | Baseline (4x more tokens than Codex on equivalent shell tasks) | ~4x fewer tokens per task; $20 tier lasts much longer on shell-heavy work |
| Sandboxing | Application-layer hooks plus user-approval prompts | OS kernel-level sandbox using operating system isolation primitives |
| Sub-agent SDK and orchestration | Claude Agent SDK with first-class sub-agents; coordinator and worker pattern in production | Subagent parallelization available, but no comparable user-facing SDK |
| Memory system | CLAUDE.md at three levels (global, project, perpetual) auto-loaded every session | Session memory and project configuration; no auto-loading convention across sessions |
| MCP ecosystem maturity | Reference client; 97M+ installs, smoother first-try wiring on most servers | MCP supported in 2026, but ecosystem weight tilts toward Claude Code |
| Multi-agent worktrees | Worktrees integrated with sub-agent SDK; coordinator with multiple workers | Built-in git worktrees (Feb 2026) with auto-cleanup setting; independent parallel sessions |
| Code quality (60-day ship-as-is rate, 90 tasks each) | 58% ship-as-is, 27% minor edits, 12% major edits, 3% thrown away | 49% ship-as-is, 31% minor edits, 15% major edits, 5% thrown away |
| Long autonomous runs (30+ minutes) | More stable; 1 sideways session out of 60 days | 3 to 4 sideways sessions over 60 days; needs more supervision |

Detailed Comparison

We have been using Claude Code daily for 14 months and we built ThePlanetTools.ai with it. Then OpenAI relaunched Codex in February 2026 as a standalone CLI and desktop app, and we gave it 60 days of real shipping work. After 60 days, the short answer is this: Claude Code wins overall for complex engineering, architecture, and production code quality — scoring 80.9% on SWE-bench Verified with Opus 4.6 and 1M tokens of context, backed by the MCP ecosystem (97M+ installs) and a proper memory system (CLAUDE.md). OpenAI Codex wins on terminal-native tasks at 77.3% on Terminal-Bench 2.0, ships with tighter OS-kernel sandboxing, and uses roughly 4x fewer tokens per task. Both start at $20 per month. The honest verdict: if you can only run one, run Claude Code. If you can run both — and $40 per month is not the constraint — run both, because the gap between them is now small enough that the right answer is category-by-category.

The quick verdict: winner by category

Before we get into the 60 days of evidence, here is the scorecard we ended the test with. This is our actual ranking across the categories that matter for a working developer, not a marketing table.

- Overall winner: Claude Code. It is the only agent we trust to run for an hour on a production Next.js codebase and come back with changes that do not need a full rewrite.

- Complex engineering and architecture: Claude Code. Opus 4.6 reasoning is still the difference-maker on multi-file refactors, type inference, and "think about the blast radius" problems.

- Terminal-native tasks (DevOps, shell, sys admin): OpenAI Codex. Its 77.3% on Terminal-Bench 2.0 versus Claude Code at roughly 65.4% is real and shows up in day-to-day shell work.

- Token efficiency and cost per task: Codex. Roughly 4x fewer tokens on equivalent Terminal-Bench tasks. This matters a lot if you hit the Claude Code Pro limits daily.

- Multi-agent worktrees: Tie. Both now ship git-worktree support so multiple agents can run in parallel on the same repo without conflicts. Codex added this in its February 2026 relaunch; Claude Code has had it longer.

- Memory and project context: Claude Code. CLAUDE.md as a first-class convention, per-project and per-user, plus custom agents you actually control. Codex has session memory but nothing equivalent yet.

- Ecosystem and integrations: Claude Code by a mile. MCP passed 97 million installs and there is a mature server for basically everything. Codex is catching up but starts from behind.

- Pricing for light use: Tie. Both are $20 per month entry.

- Pricing for heavy use: Codex, if you hit limits. Claude Code Max 5 ($100) and Max 20 ($200) exist for a reason — we are on Max 20 — and that reason is that Pro caps get hit fast. Codex stretches further on $20.

- Safety and sandboxing: Codex. OS kernel-level sandbox versus Claude Code's application-layer hooks is a real architectural difference.

Now the 60 days of actual work behind those lines.

Pricing comparison: $20 vs $20 — but what you actually get

On the surface, pricing is identical: Claude Code Pro is $20 per month, Codex through ChatGPT Plus is $20 per month. The sticker tells you nothing useful. What you actually get for $20 is very different.

- Claude Code Pro — $20 per month. Claude Opus 4.6 and Sonnet 4.6, rolling message limits, full CLI, MCP, memory, sub-agents. In practice, on a heavy engineering day, you will hit the Pro limit by mid-afternoon. This is why Max plans exist.

- Claude Code Max 5 — $100 per month. Roughly 5x the Pro usage. This is the sweet spot for a solo builder shipping daily. We ran most of 2025 on Max 5.

- Claude Code Max 20 — $200 per month. Roughly 20x Pro usage. This is what we are on now. At this tier we effectively do not think about limits.

- OpenAI Codex (ChatGPT Plus) — $20 per month. GPT-5.4 access in the Codex CLI and Codex macOS/Windows app. Because Codex is more token-efficient on Terminal-Bench-style tasks, $20 goes further here than Claude Pro $20 for pure shell work.

- OpenAI Codex (ChatGPT Pro) — $200 per month. Higher caps, access to deeper reasoning modes, priority routing.

Our read after 60 days: at the $20 tier, Codex is the better pure dollar value for terminal-heavy workflows because of token efficiency. At the $200 tier, Claude Code Max 20 is the better dollar value for full-stack product work because you get agent orchestration, MCP, and memory on top of the raw inference.
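To make the tier arithmetic concrete, here is a minimal Python sketch that turns the Claude Code prices and usage multiples quoted above into usage per dollar relative to Pro. The multiples are the article's "roughly 5x" and "roughly 20x" figures, so treat the output as approximate; the function and dictionary names are illustrative only.

```python
# Plan prices and usage multiples as quoted in the article (Pro = 1x baseline).
PLANS = {
    "Pro": {"price_usd": 20, "usage_multiple": 1},
    "Max 5": {"price_usd": 100, "usage_multiple": 5},
    "Max 20": {"price_usd": 200, "usage_multiple": 20},
}


def usage_per_dollar_vs_pro(plan_name: str) -> float:
    """Return usage per dollar for a plan, relative to Pro (Pro == 1.0)."""
    pro = PLANS["Pro"]
    plan = PLANS[plan_name]
    baseline = pro["usage_multiple"] / pro["price_usd"]
    return (plan["usage_multiple"] / plan["price_usd"]) / baseline


if __name__ == "__main__":
    for name in PLANS:
        ratio = usage_per_dollar_vs_pro(name)
        print(f"{name}: {ratio:.1f}x usage per dollar vs Pro")
```

On these figures, Max 5 buys the same usage per dollar as Pro, while Max 20 buys about twice as much, which is one way to read why heavy users end up on the top tier.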

Benchmarks head-to-head: SWE-bench, Terminal-Bench, the real numbers

Benchmarks are not the whole story. But they are a useful floor. Here is where each agent actually lands as of April 2026, on the benchmarks both labs cite themselves:

- SWE-bench Verified. Claude Code with Opus 4.6 scores roughly 80.9%, the highest score we have seen reported for an agentic coding tool. Codex CLI with GPT-5.4 scores roughly 80%. This is a statistical tie. OpenAI itself argued in early 2026 that SWE-bench Verified is contaminated and has moved its public claims to SWE-bench Pro.

- SWE-bench Pro. Codex with GPT-5.4 scores around 57.7%. Claude Code on the same benchmark trails by a few points. Pro is harder, newer, less contaminated, and this is where OpenAI has made its strongest public case in 2026.

- Terminal-Bench 2.0. Codex CLI leads at 77.3% versus Claude Code at roughly 65.4%. That is a 12-point gap on a benchmark specifically designed to test terminal workflows — shell scripting, sysadmin, DevOps. This is the clearest benchmark win Codex has.

- Token efficiency on Terminal-Bench. Codex uses approximately 4x fewer tokens than Claude Code to complete equivalent tasks. In practical terms, if the two $20 plans had similar token allowances, the Codex plan (ChatGPT Plus) would last roughly 4x longer on shell-heavy work than Claude Code Pro.

- Context window. Claude Code now ships 1M tokens of context with Opus 4.6. Codex GPT-5.4 also supports up to 1M tokens of context. This is parity in 2026 — both models can hold an entire mid-size codebase in one prompt.

The honest reading of the benchmarks: Claude Code and Codex are within a statistical noise band on complex engineering (SWE-bench Pro and Verified), and Codex has a clear, real lead on terminal-native work. Anybody telling you one agent "destroys" the other on coding benchmarks in 2026 is selling you something.
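The gaps quoted above are easy to recompute. The sketch below uses only the scores cited in this section, plus one hypothetical illustration of what a 4x token difference means for a fixed allowance. The allowance and per-task token counts in the usage comment are made-up round numbers, not measurements, and neither vendor publishes its limits in this form.

```python
# Scores as quoted in the article, in percent.
SCORES = {
    "SWE-bench Verified": {"claude_code": 80.9, "codex": 80.0},
    "Terminal-Bench 2.0": {"claude_code": 65.4, "codex": 77.3},
}


def point_gap(benchmark: str) -> float:
    """Codex score minus Claude Code score, in percentage points."""
    scores = SCORES[benchmark]
    return round(scores["codex"] - scores["claude_code"], 1)


def tasks_within_allowance(allowance_tokens: int, tokens_per_task: int) -> int:
    """How many whole tasks fit inside a fixed token allowance."""
    return allowance_tokens // tokens_per_task


# Hypothetical usage: a 1,000,000-token allowance, with a shell task costing
# 40,000 tokens on one agent and a quarter of that (10,000) on the other.
# tasks_within_allowance(1_000_000, 40_000) -> 25
# tasks_within_allowance(1_000_000, 10_000) -> 100
```

The Terminal-Bench gap comes out at 11.9 points in Codex's favor and the SWE-bench Verified gap at 0.9 points in Claude Code's favor, which matches the "12-point gap" and "statistical tie" wording used here.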

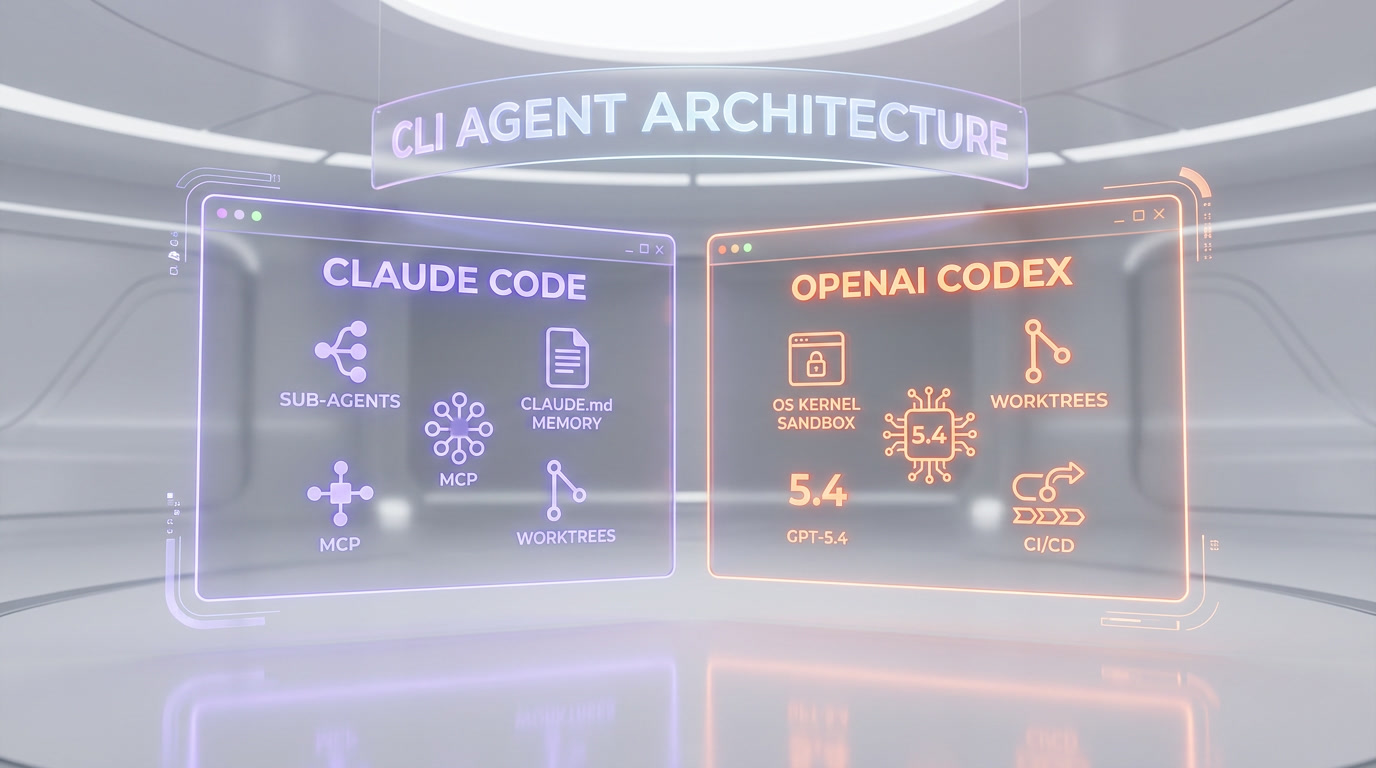

Agent architecture: CLI vs CLI — where they actually differ

On the outside, Claude Code and Codex look almost identical in 2026. Both are terminal-first agents. Both run a loop of read file, edit file, run shell command, summarize. Both support git worktrees for parallel execution. Both ship desktop apps. Both accept slash commands. If you squint at a single session, you would have trouble telling them apart.

Zoom in and the architectural differences are real:

- Sandboxing. Codex enforces sandboxing at the OS kernel level, using the operating system's own isolation primitives. Claude Code relies on application-layer hooks and user-approval prompts. In practice this means Codex is harder to escape from by default — a meaningful security story if you let agents run auto-approved commands.

- Sub-agent system. Claude Code has the Claude Agent SDK and a first-class sub-agent concept. You can spin up specialized agents with their own system prompts, tools, and contexts, and route work between them. We use this in production — our SEO pipeline uses seven specialized sub-agents coordinated by a parent coordinator. Codex does not yet have a comparable user-facing sub-agent SDK.

- Memory. Claude Code's memory system is CLAUDE.md at three levels: global user memory (~/.claude/CLAUDE.md), project memory (./CLAUDE.md), and a perpetual-memory pattern we maintain in .claude/memory/. Codex supports session memory and project configuration, but there is no equivalent convention that survives across sessions the way CLAUDE.md does.

- Custom commands. Both support slash commands. Claude Code's .claude/commands/ directory is more expressive: you can parametrize, reference files, and invoke sub-agents from a slash command. Codex custom commands are simpler and shorter.

- Editing primitives. Codex tends toward more aggressive bulk edits. Claude Code tends toward smaller, surgical edits with more verification steps. Neither is strictly better; the right choice depends on your risk tolerance for a given task.
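To illustrate what "application-layer" gating means in the sandboxing point above, here is a minimal Python sketch of an approval check that runs inside the agent's own process. It is not Claude Code's hook API or Codex's sandbox; the allowlist, the operator list, and the function name are all hypothetical. The point is architectural: a check like this is only as strong as its own logic, whereas a kernel-level sandbox is enforced outside the process.

```python
import shlex

# Hypothetical allowlist: programs the agent may run without asking.
AUTO_APPROVED = frozenset({"ls", "cat", "git", "npm"})

# Shell operators that can chain or redirect past the first program name.
SHELL_OPERATORS = (";", "&&", "||", "|", "`", "$(", ">", "<")


def needs_approval(command: str, auto_approved: frozenset = AUTO_APPROVED) -> bool:
    """Return True when a human should confirm the command before it runs."""
    try:
        tokens = shlex.split(command)
    except ValueError:
        return True  # unbalanced quotes: refuse to guess
    if not tokens:
        return True  # empty command: nothing safe to auto-approve
    if any(op in command for op in SHELL_OPERATORS):
        return True  # chained or redirected commands always go to a human
    return tokens[0] not in auto_approved
```

With this sketch, `git status` passes without a prompt, while `rm -rf build` and `ls; rm -rf .` both require confirmation. Any pattern the check fails to anticipate runs unchecked, which is the weakness the kernel-level approach avoids.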

Context window: 1M vs 1M, and why it still matters

Both Claude Opus 4.6 and GPT-5.4 now support 1M tokens of input context. Parity on paper. In practice, large context is where the agents feel different, and the difference is not the number of tokens — it is what the model does with them.

In our test, we gave both agents the exact same 720k-token codebase dump of a Next.js 16 project (around 380 files, roughly 90k lines) and asked the same structured question: "Trace the data flow from the Supabase query layer up to the rendered ContentRenderer, and tell me every component that would need to change if I added a new content type."

- Claude Code (Opus 4.6, 1M). Produced a correct, 14-file trace with accurate line numbers, correctly identified three components we had forgotten about, and flagged a type inference issue we would have shipped without it.

- Codex (GPT-5.4, 1M). Produced a correct 11-file trace, missed two of the three components Claude caught, but completed the analysis in roughly half the wall-clock time.

Neither answer was wrong. Claude was more thorough, Codex was faster. On a codebase architecture question, thoroughness wins. On a quick "where is X defined" question, speed wins. Both hold their own at 1M.
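For sizing a similar test on another repo, the figures above reduce to simple arithmetic. This is a minimal sketch using the numbers from this section (a 720k-token dump, roughly 90k lines, a 1M-token window); the function names are illustrative, and real token counts depend on the tokenizer and on how the dump is formatted.

```python
def context_headroom(prompt_tokens: int, window_tokens: int = 1_000_000) -> dict:
    """Summarize how much of a context window a prompt occupies."""
    if prompt_tokens < 0 or window_tokens <= 0:
        raise ValueError("prompt_tokens must be >= 0 and window_tokens > 0")
    remaining = window_tokens - prompt_tokens
    return {
        "used_fraction": prompt_tokens / window_tokens,
        "remaining_tokens": remaining,
        "fits": remaining >= 0,
    }


def tokens_per_line(total_tokens: int, total_lines: int) -> float:
    """Average tokens per source line, useful for estimating other repos."""
    return total_tokens / total_lines


if __name__ == "__main__":
    print(context_headroom(720_000))
    print(tokens_per_line(720_000, 90_000))
```

On these numbers the dump fills 72% of the window, leaves 280,000 tokens for the question and the answer, and averages about 8 tokens per line.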

Multi-agent and worktrees: parallel coding on the same repo

This is the category where Codex changed the most in 2026 and where Claude Code quietly stayed ahead. The February 2026 Codex app shipped built-in git worktrees: multiple agents can now work on the same repo without stepping on each other, each in its own isolated copy of the code. There is even a Worktrees setting for how many you keep before older ones are cleaned up. This is a real feature and it works well.

Claude Code has had worktree-based parallelism for longer, and the pattern is more integrated with its sub-agent system. In Claude Code you can describe a job, have the coordinator spawn three specialized sub-agents in three worktrees, collect their results, and reconcile them. In Codex, the worktrees feature is more manual — you still think of it as "N independent sessions on one repo," not "one orchestrator with N workers."

For a solo developer shipping fast, the difference is not large. For a team building a content pipeline like ours, Claude Code's sub-agent model is materially more powerful, and we have not yet found a way to replicate it cleanly in Codex.
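The difference between "N independent sessions" and "one orchestrator with N workers" can be sketched in a few lines of Python. This is not the Claude Agent SDK or anything Codex ships: `worktree_command`, `coordinate`, `stub_worker`, and the `agent/<task>` branch naming are hypothetical. The only real interface used is the standard `git worktree add -b <branch> <path>` command, which the sketch builds but does not execute.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def worktree_command(repo_dir: str, worktrees_dir: str, task: str) -> list[str]:
    """Build the `git worktree add` command that gives a task its own checkout.

    Pass an absolute worktrees_dir: because of `-C`, git resolves a relative
    path from repo_dir rather than from the current directory.
    """
    path = f"{worktrees_dir}/{task}"
    branch = f"agent/{task}"  # hypothetical branch naming
    return ["git", "-C", repo_dir, "worktree", "add", "-b", branch, path]


def coordinate(tasks: list[str], worker: Callable[[str], str]) -> dict[str, str]:
    """Run one worker per task in parallel and collect results by task name."""
    with ThreadPoolExecutor(max_workers=len(tasks) or 1) as pool:
        results = list(pool.map(worker, tasks))
    return dict(zip(tasks, results))


def stub_worker(task: str) -> str:
    """Stand-in for a sub-agent; a real worker would run inside its worktree."""
    return f"{task}: done"
```

The worktree commands alone give you the "independent sessions" model. The `coordinate` step, which fans work out and gathers results in one place for reconciliation, is the part the coordinator-worker pattern adds.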

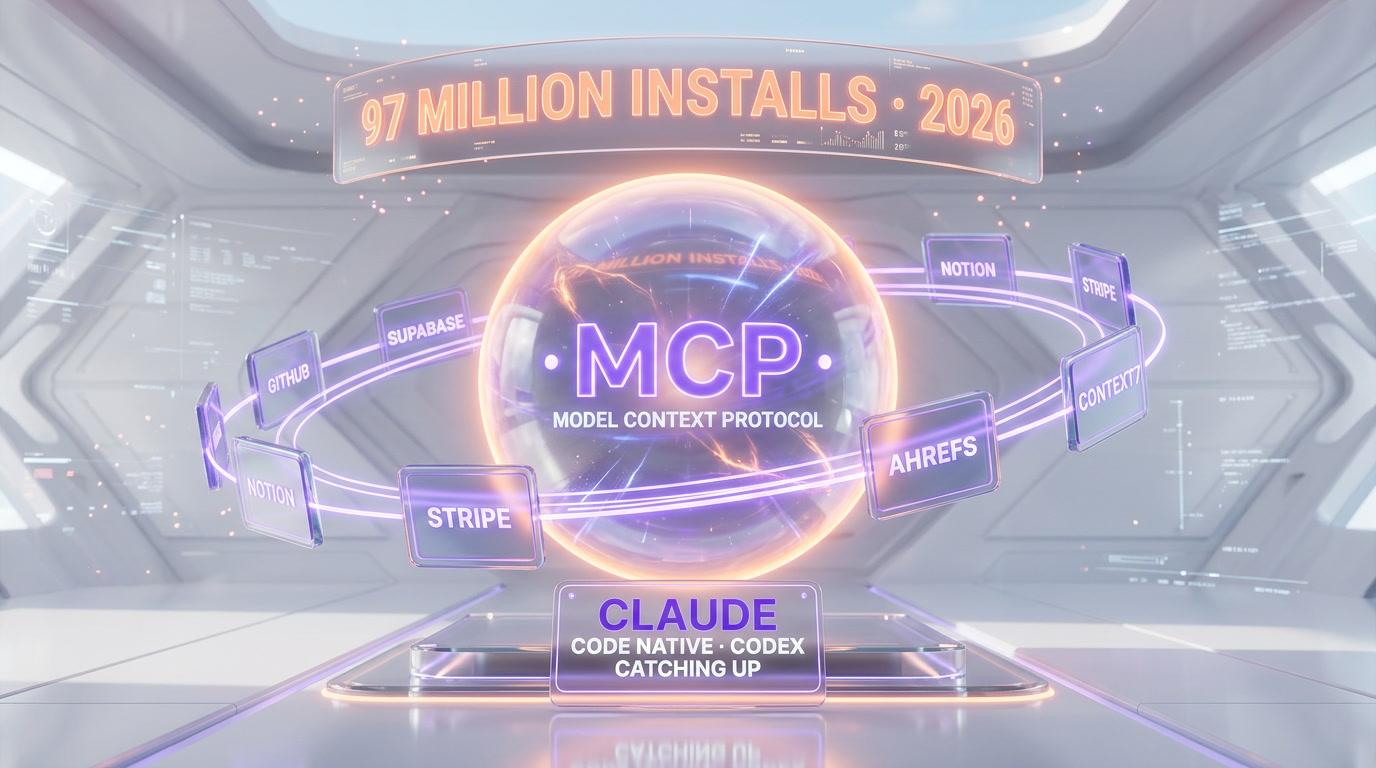

MCP ecosystem: Claude Code's biggest moat

The Model Context Protocol (MCP) is the standard Anthropic open-sourced in 2024 for connecting AI agents to external tools — databases, APIs, knowledge bases, design tools, anything. By April 2026, MCP has passed 97 million installs across clients and servers. Every serious dev tool that wants to be agent-addressable now ships an MCP server. We have MCP servers wired into Claude Code for Ahrefs, Supabase, Context7 documentation, Crypto.com, and internal tools.

OpenAI has adopted MCP in Codex as well — this is no longer a Claude Code exclusive. But the ecosystem weight is lopsided. In practice, when we plug a new MCP server into Claude Code, it usually works on the first try because it was probably built and tested against Claude Code first. In Codex, MCP works, but the experience is less polished and the community of server-builders is smaller. This will narrow over time. In April 2026, it has not narrowed yet.

Memory system: CLAUDE.md vs Codex session memory

Memory is where Claude Code feels genuinely ahead. The CLAUDE.md convention is deceptively simple (a markdown file at the project root and another under ~/.claude/ in the user's home directory), but it turns into something very powerful once you layer a perpetual-memory system on top. Our cockpit project has .claude/memory/primer.md, .claude/memory/decisions/, .claude/memory/learnings/, and a bash update-memory.sh script that appends annotated decisions and learnings across sessions. Claude Code reads this memory at the start of every session. That is how we ship consistently with the same conventions day after day.

Codex has project configuration and per-session memory, but there is no equivalent CLAUDE.md pattern that Codex will load automatically on a fresh terminal session. You can replicate some of the effect with slash commands and a docs folder, but it is not native. Over 60 days, this was the thing we missed most when we were working in Codex: every session felt a little bit like starting over.
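The contents of the update-memory.sh script mentioned above are not shown in this article, so the following is only a Python sketch of the same idea under stated assumptions: notes are appended as bullet lines to one markdown file per day inside `.claude/memory/decisions/` or `.claude/memory/learnings/`. The per-day file naming and the function name are hypothetical; only the folder names come from the text.

```python
from datetime import date
from pathlib import Path


def append_memory(root: Path, kind: str, note: str, day: date) -> Path:
    """Append a dated note under <root>/.claude/memory/<kind>/ and return the file."""
    if kind not in {"decisions", "learnings"}:
        raise ValueError(f"unknown memory kind: {kind}")
    folder = root / ".claude" / "memory" / kind
    folder.mkdir(parents=True, exist_ok=True)
    # Hypothetical naming: one markdown file per calendar day.
    target = folder / f"{day.isoformat()}.md"
    with target.open("a", encoding="utf-8") as handle:
        handle.write(f"- {note.strip()}\n")
    return target
```

Appending rather than overwriting is the design choice that matters here: each session adds to the record, so conventions accumulate instead of being restated from scratch.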

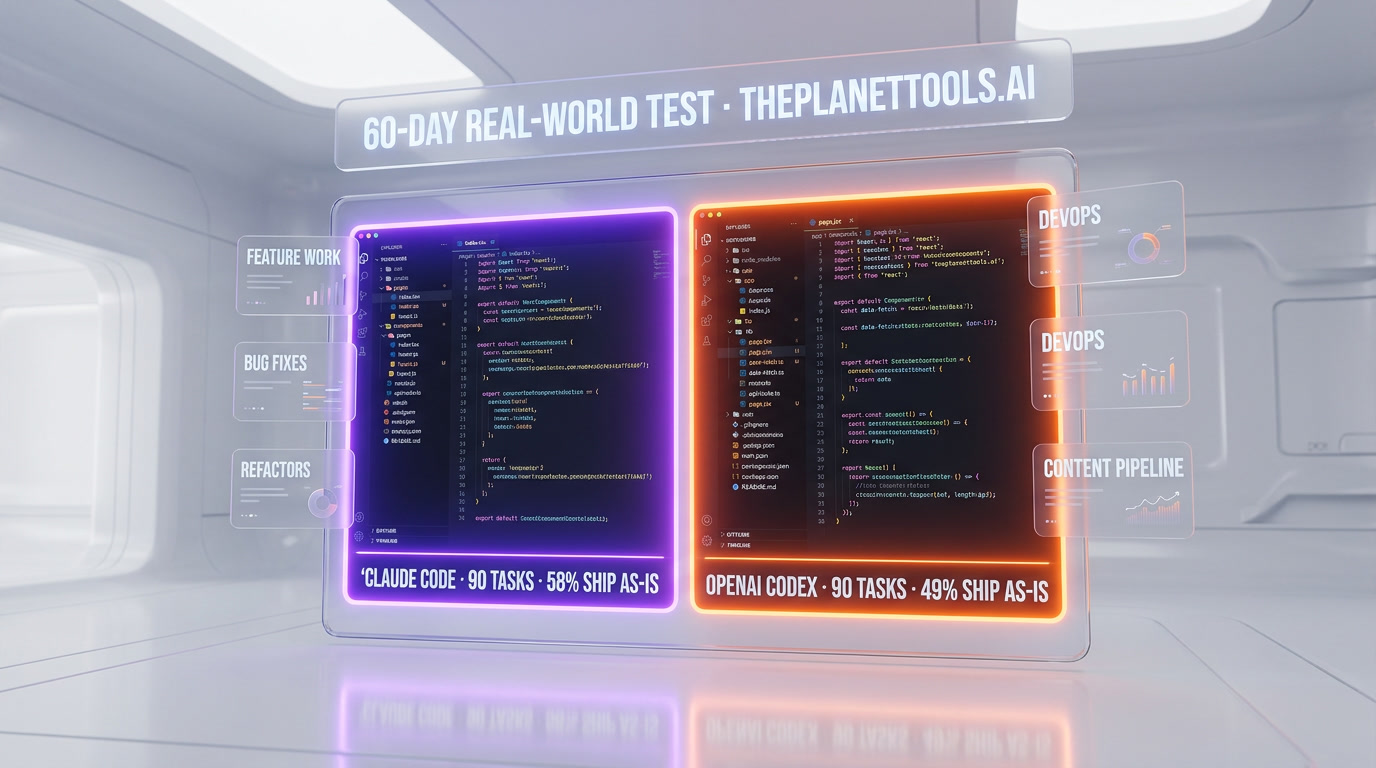

Real-world performance: our 60-day test

Benchmarks are one thing. The real test is what happens when you give each agent the same production work for 60 days. Here is the setup: we ran Claude Code on Max 20 and Codex on ChatGPT Pro in parallel on the ThePlanetTools.ai codebase — a Next.js 16 App Router project with Supabase, Tailwind CSS v4, MCP integrations, and a content pipeline that publishes to production daily.

The work we threw at both agents over 60 days:

- Feature work. Adding a new content type (comparisons), wiring it through the Supabase query layer, admin dashboard, and public renderer.

- Bug fixes. A mix of straightforward bugs (typing errors, stale imports) and harder ones (a race condition in our revalidation webhook, a broken TOC anchor ID algorithm that drifted between the renderer and the extractor).

- Refactors. Migrating from a src/lib/queries folder to a typed query client. Renaming a domain concept across 40+ files.

- DevOps. Debugging a Vercel build failure, writing a GitHub Action for IndexNow submission, configuring a Tauri desktop wrapper.

- Content generation. The eight-step SEO pipeline that produced this very article — coordinator plus seven sub-agents.

The results were not dramatic. Both agents shipped working code most of the time. But the pattern of who won what was consistent:

- Feature work and refactors — Claude Code won. Every multi-file change where "blast radius" mattered went more cleanly in Claude Code. Codex would occasionally miss a call site or produce an edit that broke a type somewhere else in the tree.

- The hardest bugs — Claude Code won. The TOC anchor ID algorithm drift, the revalidation race condition, a memoization issue in a Server Component — all three were eventually solved by Claude Code after Codex had spent 30+ minutes going sideways. This tracks with Opus 4.6's reasoning reputation.

- DevOps and shell work — Codex won. The Vercel build debugging, the GitHub Action for IndexNow, the Tauri wrapper configuration — Codex handled all three faster and with fewer tokens. Its Terminal-Bench lead is real.

- Content pipeline orchestration — Claude Code won by default. This was not a fair fight. Our pipeline relies on Claude Code's sub-agent SDK. Porting to Codex would have meant rebuilding the orchestration layer, which by itself says something about the architectural lock-in.

- Token budget — Codex won. On the $20 tier, Codex did not hit limits. Claude Code Pro did, regularly. On the $200 tier, both were fine.

Code quality comparison: what shipped versus what we rewrote

We kept a simple spreadsheet across the 60 days: for every task, did the agent's output ship as-is, ship with minor edits, ship with major edits, or get thrown away. Our sample is 180 tasks — 90 each.

- Claude Code. Ship as-is: 58%. Minor edits: 27%. Major edits: 12%. Thrown away: 3%.

- OpenAI Codex. Ship as-is: 49%. Minor edits: 31%. Major edits: 15%. Thrown away: 5%.

These are small samples and we do not pretend they are scientific. But the direction of the numbers is consistent with the benchmark reading: Claude Code is modestly better at producing code we can ship without touching it, and Codex is close but not quite there. On the tasks where Claude Code shipped as-is, the quality bar — naming, structure, comments, error handling — was usually a little higher than Codex's equivalent. This is subjective and we will not die on it, but it was the consensus after 60 days.
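To put a number on "small samples", here is a minimal Python sketch that computes a normal-approximation 95% interval for the gap between the two ship-as-is rates, using only the reported figures (58% and 49%, 90 tasks each). It assumes the tasks are independent and comparable across the two agents, which a working spreadsheet like this one does not guarantee, so read it as a rough sanity check and not as a formal test.

```python
import math


def diff_confidence_interval(
    p1: float, n1: int, p2: float, n2: int, z: float = 1.96
) -> tuple[float, float]:
    """Normal-approximation interval for p1 - p2 (z = 1.96 is about 95%)."""
    standard_error = math.sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)
    diff = p1 - p2
    return diff - z * standard_error, diff + z * standard_error


if __name__ == "__main__":
    low, high = diff_confidence_interval(0.58, 90, 0.49, 90)
    print(f"ship-as-is gap: 9 points, 95% interval {low:+.3f} to {high:+.3f}")
```

The interval runs from roughly -5.5 to +23.5 percentage points. It includes zero, which fits the caution above: the direction favors Claude Code, but 90 tasks per agent cannot settle the size of the gap.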

Speed comparison: wall-clock, not tokens per second

Pure inference speed is roughly equivalent. Both GPT-5.4 and Claude Opus 4.6 stream at high speeds and neither feels slow. But wall-clock time to completion on a real task is different:

- Simple edits (one file, localized change). Codex is faster — often noticeably. Fewer verification steps, more direct edits.

- Complex edits (multi-file, involves reasoning about types or data flow). Claude Code is slower per task but produces fewer follow-up iterations, so end-to-end time is often comparable or better.

- Long autonomous runs (30+ minutes). Claude Code is more stable. We had three or four sessions over 60 days where Codex went off the rails on a long run and had to be stopped. Claude Code had one.

If you measure only the first-response time, Codex feels faster. If you measure the full time from "I want this feature" to "it is merged," it is roughly a wash, with Claude Code ahead on the hard tasks and Codex ahead on the easy ones.

Where OpenAI Codex wins

A summary of the categories Codex genuinely wins on after 60 days:

- Terminal-native tasks. 77.3% Terminal-Bench 2.0 versus 65.4% is a real gap. Shell scripting, sysadmin, DevOps workflows — use Codex.

- Token efficiency. Roughly 4x fewer tokens on equivalent tasks. Huge if you are on a $20 tier.

- Sandboxing. OS kernel-level sandbox is a real security improvement over application-layer hooks, especially if you are running agents unattended.

- Simple, fast edits. Wall-clock time to a small change is faster, with less ceremony.

- SWE-bench Pro. The benchmark OpenAI now cites is where Codex is strongest — and it is the less contaminated, harder benchmark.

- GitHub and CI/CD workflows. Codex integrates cleanly with GitHub Actions and feels slightly more native to the CI world than Claude Code does.

Where Claude Code wins

And the categories Claude Code wins on — which, for us, outweigh the ones above:

- Complex engineering and architecture. Opus 4.6 reasoning is still the difference-maker for hard, multi-file work.

- Memory and project context. CLAUDE.md plus perpetual memory is a compounding advantage over time. You cannot match it in Codex yet.

- Sub-agent orchestration. The Claude Agent SDK and the sub-agent pattern power real production pipelines. Ours is running right now.

- MCP ecosystem. 97 million installs, mature servers, first-class integration.

- Worktree + sub-agent combo. Codex has worktrees, but Claude Code has worktrees plus a coordinator-worker pattern that actually uses them.

- Long autonomous runs. More stable over 30+ minute sessions.

- Code quality bar on shippable output. 58% ship-as-is versus 49%, with 90 tasks per agent.

- The hardest bugs. Three-for-three in our 60-day test on the hardest issues we threw at both.

- Frontend architecture. Taste and judgment on component structure, type design, naming.

Final verdict: Claude Code wins 2026, but the gap is narrower than in 2025

If you can only run one agent in 2026, run Claude Code. It is the agent we trust to run for an hour on a production codebase and come back with shippable work. It has the best memory system, the best sub-agent story, the richest ecosystem through MCP, and a reasoning model (Opus 4.6) that still wins on the hard problems. For most serious engineering work, Claude Code is our default and it will stay our default.

If you can run two agents and $40 per month is not the constraint, run both. Use Claude Code as your main driver for feature work, refactors, architecture and anything where code quality matters. Use Codex for terminal-heavy tasks, DevOps, shell scripting, CI/CD work, and anything you can auto-approve inside a sandbox. We now use both daily, and the overlap is small enough that it is not wasted.

The gap between Claude Code and Codex has narrowed a lot since 2025. Codex in February 2026 is a dramatically better tool than OpenAI's previous agent offerings, and the benchmarks back that up. On Terminal-Bench 2.0, Codex actually wins. On SWE-bench Pro, it is close. On code quality for hard work, Claude Code still wins — but it does not win the way it used to. If you already have strong muscle memory in Codex, you are not missing the boat. If you are starting fresh today, start with Claude Code.

One more honest note: we built ThePlanetTools.ai with Claude Code, we run our content pipeline on Claude Code sub-agents, and our cockpit has CLAUDE.md everywhere. That biases us. We tried to strip the bias out of the 60-day test by running real tasks on both and measuring outcomes, but we cannot strip out the ecosystem lock-in — and that is itself a signal. The reason we are locked in is that Claude Code built a better story for the work we do. That story is what we recommend you buy into in 2026, with Codex as the sharp knife you keep next to it for the jobs it does best.

If you want to dig deeper, read our full Claude Code tool review and our OpenAI Codex tool review, our deep dive on the Claude Code leak (512k lines of its own internals), and our comparisons to Cursor and GitHub Copilot if you are still evaluating IDE-based alternatives instead of pure CLI agents.

Frequently asked questions

Which is better in 2026, Claude Code or OpenAI Codex?

After testing both daily for 60 days on production work, Claude Code wins overall in 2026. It leads on complex engineering and architecture with Claude Opus 4.6 reasoning, scores 80.9% on SWE-bench Verified, ships the most mature memory system (CLAUDE.md), has a first-class sub-agent SDK, and plugs into the 97M+ install MCP ecosystem. OpenAI Codex wins on terminal-native tasks with 77.3% on Terminal-Bench 2.0, uses roughly 4x fewer tokens per task, and ships OS kernel-level sandboxing. If you can only run one, run Claude Code. If you can run both, use Claude Code for feature work and Codex for DevOps and shell work.

How do Claude Code and OpenAI Codex compare on SWE-bench and Terminal-Bench?

On SWE-bench Verified, Claude Code with Opus 4.6 scores roughly 80.9% and Codex CLI with GPT-5.4 scores approximately 80% — a statistical tie. OpenAI has argued in 2026 that SWE-bench Verified is contaminated and now cites SWE-bench Pro, where Codex scores around 57.7%. On Terminal-Bench 2.0, Codex leads clearly at 77.3% versus Claude Code at roughly 65.4% — a 12-point gap on terminal-native tasks. Codex also uses roughly 4x fewer tokens than Claude Code for equivalent Terminal-Bench tasks, which matters a lot on the $20 per month tier.

How much does each tool cost in 2026?

Claude Code has three tiers: Pro at $20 per month, Max 5 at $100 per month (roughly 5x Pro usage), and Max 20 at $200 per month (roughly 20x Pro usage). OpenAI Codex is bundled with ChatGPT Plus at $20 per month, or ChatGPT Pro at $200 per month for higher caps and deeper reasoning. At the $20 entry tier, Codex goes further on pure token budget for terminal-heavy work. At the $200 tier, Claude Code Max 20 is our preferred setup for full-stack product work because you get agent orchestration, MCP and memory on top of the raw inference.

Does OpenAI Codex support multi-agent worktrees like Claude Code?

Yes. When OpenAI relaunched Codex as a standalone app in February 2026, it shipped built-in git worktree support: multiple agents can work on the same repo without conflicts, each in its own isolated copy of the code, with a setting for how many worktrees to keep before older ones are cleaned up. Claude Code has had worktree-based parallelism for longer, and it is more tightly integrated with its sub-agent SDK, so the coordinator-worker pattern is easier to implement in Claude Code. For parallel independent sessions on one repo, both tools now work well.

What is the context window for Claude Code and OpenAI Codex in 2026?

Both agents now support 1 million tokens of input context in 2026. Claude Code uses Claude Opus 4.6 with 1M context, and OpenAI Codex uses GPT-5.4 which also supports up to 1M tokens of context. In practice, Claude Code tends to produce more thorough multi-file traces on large codebases, while Codex tends to complete the same analysis in roughly half the wall-clock time. Neither is wrong; the trade-off is thoroughness versus speed, and both hold their own at 1M tokens.

Does Claude Code have better memory than OpenAI Codex?

Yes. Claude Code ships a first-class memory convention called CLAUDE.md, at three levels: global user memory at ~/.claude/CLAUDE.md, per-project memory at ./CLAUDE.md, and optional perpetual memory folders like .claude/memory/ with decisions, learnings and primers. Claude Code loads this memory automatically at session start. OpenAI Codex supports session memory and project configuration, but there is no equivalent auto-loading convention that survives cleanly across fresh terminal sessions. Over 60 days of testing, this was the single feature we missed most when we worked inside Codex.

Is the MCP ecosystem really 97 million installs and does Codex support it?

Yes. The Model Context Protocol, open-sourced by Anthropic in 2024, has passed 97 million installs across clients and servers by April 2026. Codex has adopted MCP so it is no longer a Claude Code exclusive, but the ecosystem weight is still lopsided because most serious MCP servers are built and tested against Claude Code first. MCP wiring is smoother in Claude Code in 2026, with more mature servers for tools like Supabase, GitHub, Notion, design tools and documentation. The gap will narrow over time. In April 2026, it has not narrowed yet.

Should I switch from Claude Code to OpenAI Codex in 2026?

Probably not, if you are already productive in Claude Code. Codex in 2026 is a dramatically better tool than OpenAI's previous agent offerings, and it wins clearly on Terminal-Bench 2.0, token efficiency and kernel-level sandboxing. But Claude Code still wins on complex engineering, memory, sub-agent orchestration and MCP ecosystem depth. The better move for most working developers in 2026 is to keep Claude Code as the main driver and add Codex as a secondary tool for DevOps, shell work and sandboxed autonomous runs. Running both costs $40 per month at the entry tier and the overlap is small enough that it is not wasted.

Which tool is safer for autonomous, auto-approved agent runs?

OpenAI Codex has the stronger sandboxing story in 2026. It enforces sandboxing at the OS kernel level, using the operating system's own isolation primitives, which is harder to escape from than Claude Code's application-layer hooks and user-approval prompts. If you plan to run an agent unattended with auto-approved shell commands — for example in a CI pipeline or on a dedicated build machine — Codex is the safer default. If you are always keyboard-in-the-loop and reviewing commands manually, Claude Code's hook system is usually enough and the difference is mostly theoretical.

Our Verdict

We've used Claude Code daily for 14 months and built ThePlanetTools.ai with it. Then OpenAI relaunched Codex in February 2026 — we gave it 60 days of real work. Here's which terminal AI coding agent actually wins in 2026, category by category, with benchmarks, pricing and real-world results.
