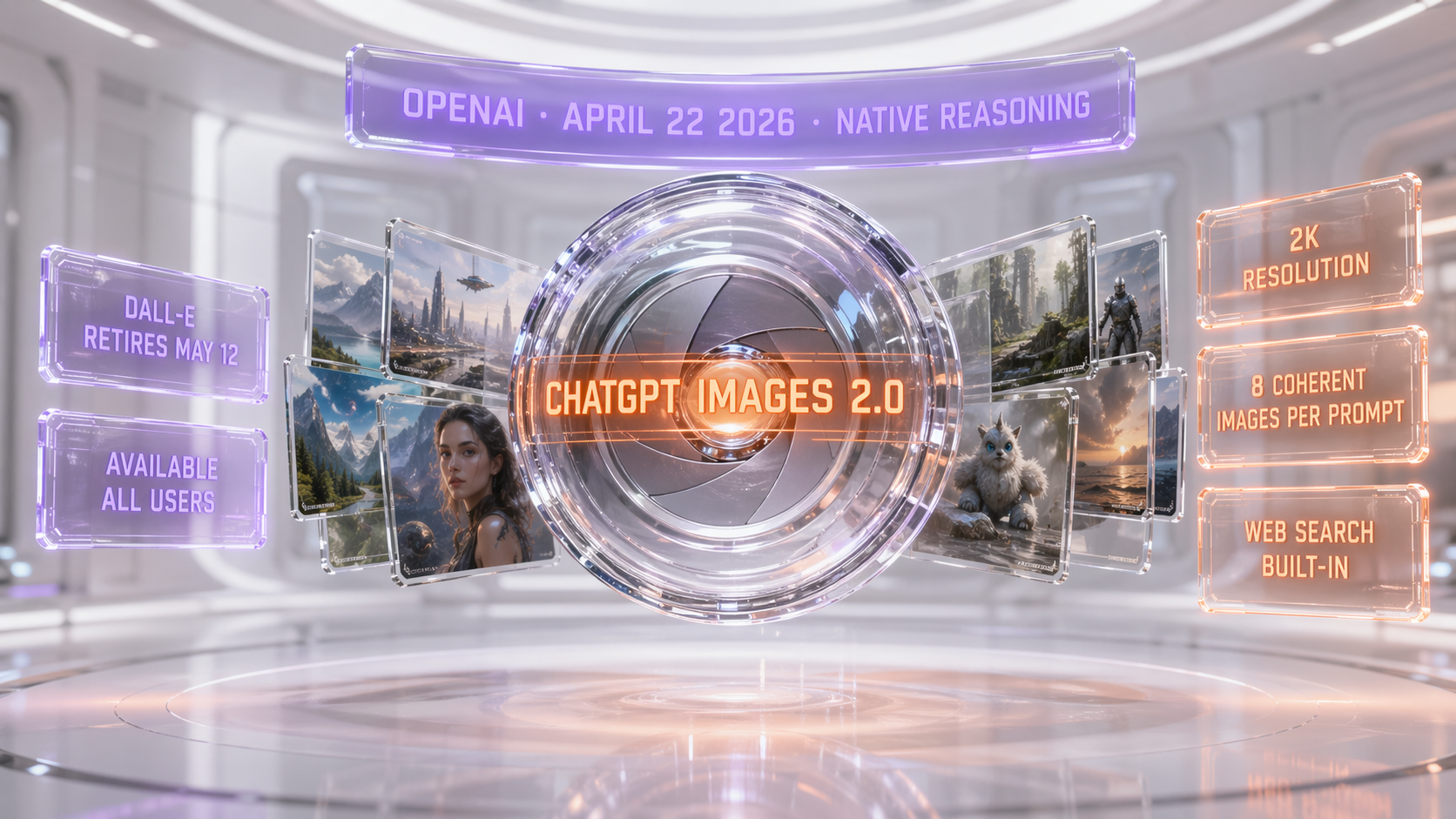

ChatGPT Images 2.0 (model ID gpt-image-2) launched publicly on April 21, 2026. It is the first OpenAI image model with native reasoning: it can search the web mid-generation, double-check its own outputs, and ship up to 8 coherent images from a single prompt. DALL-E 2 and DALL-E 3 retire May 12, 2026. The model became available to all ChatGPT and Codex users on April 22, and API access for developers opens in early May as gpt-image-2.

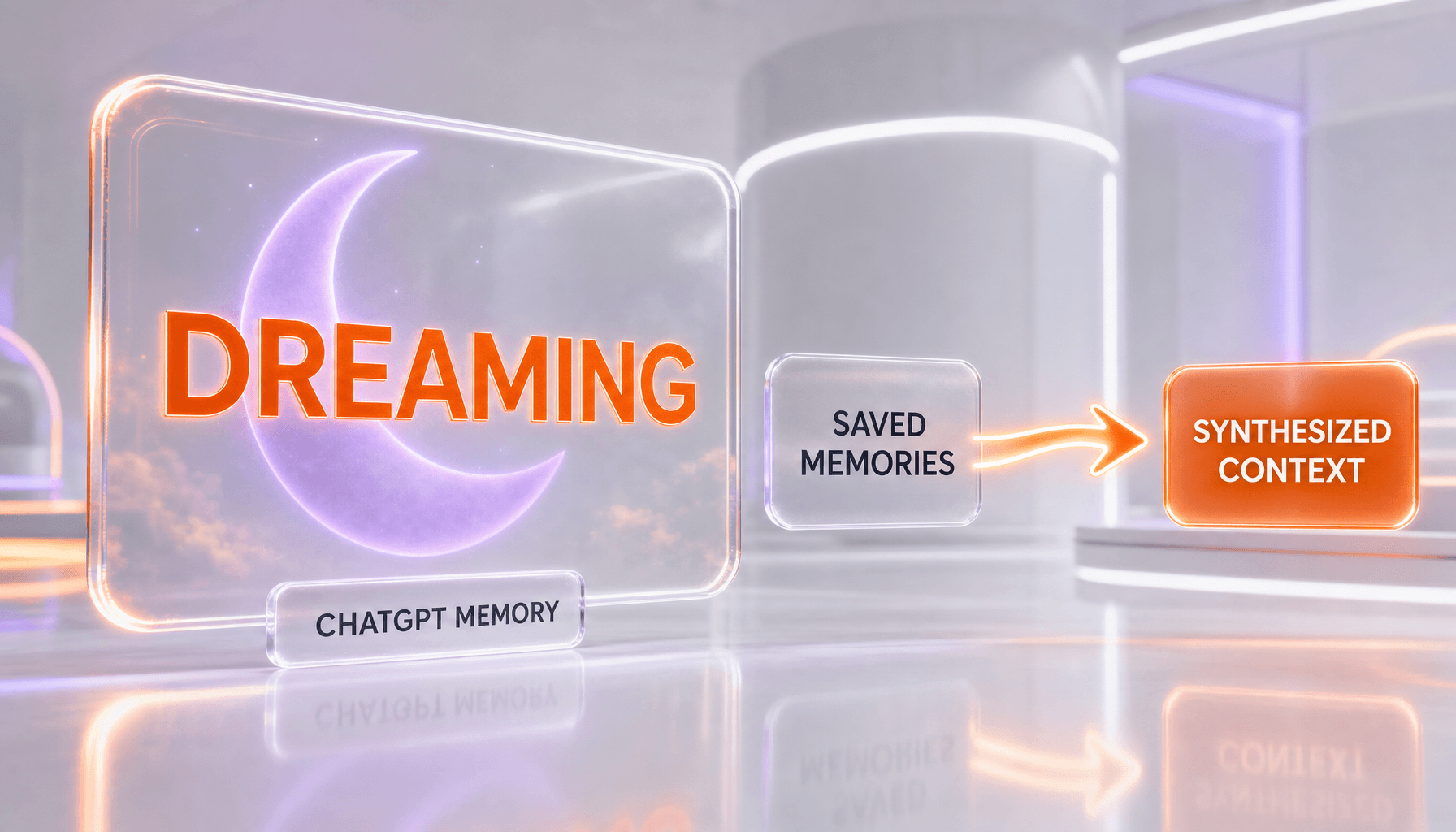

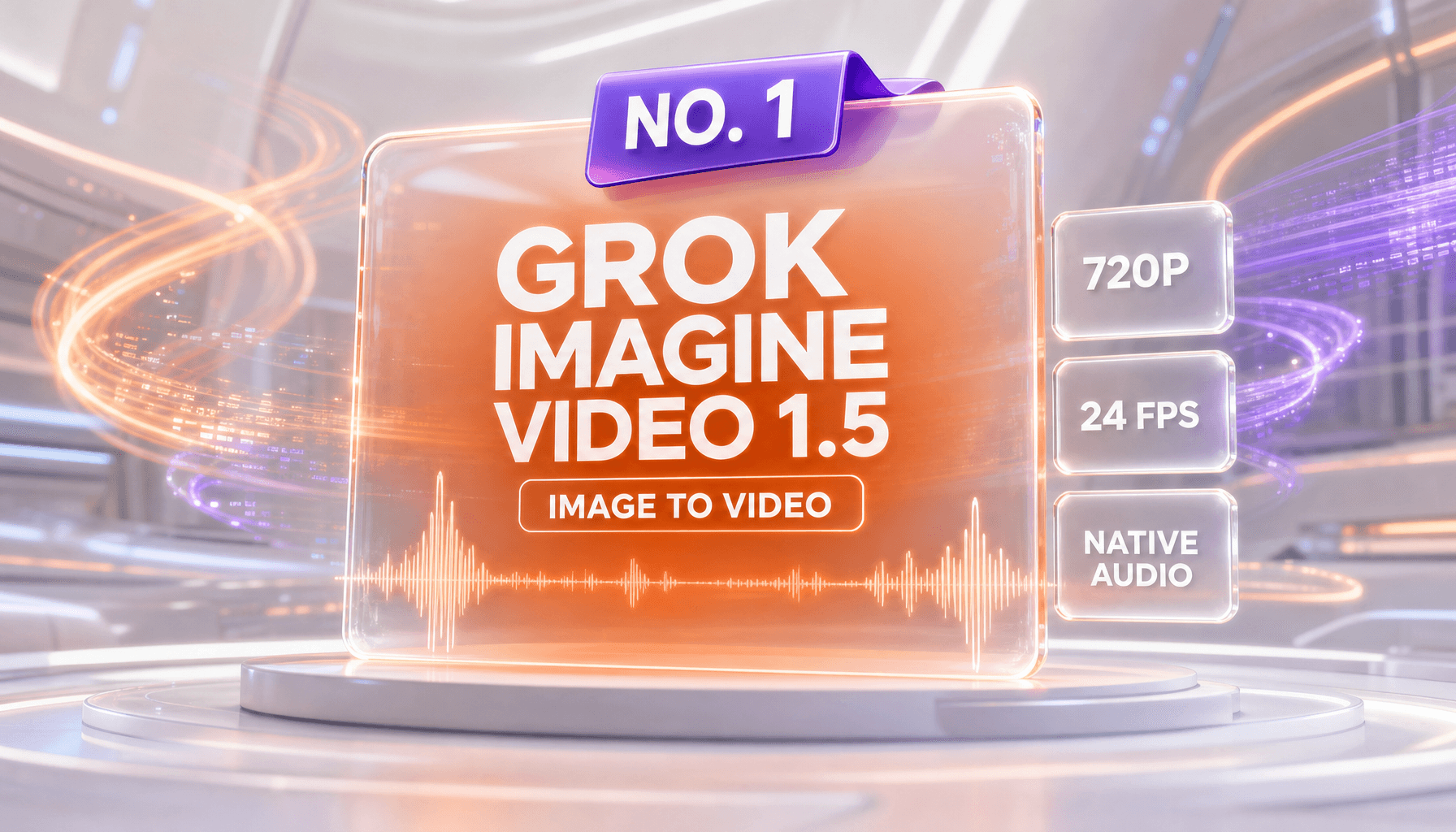

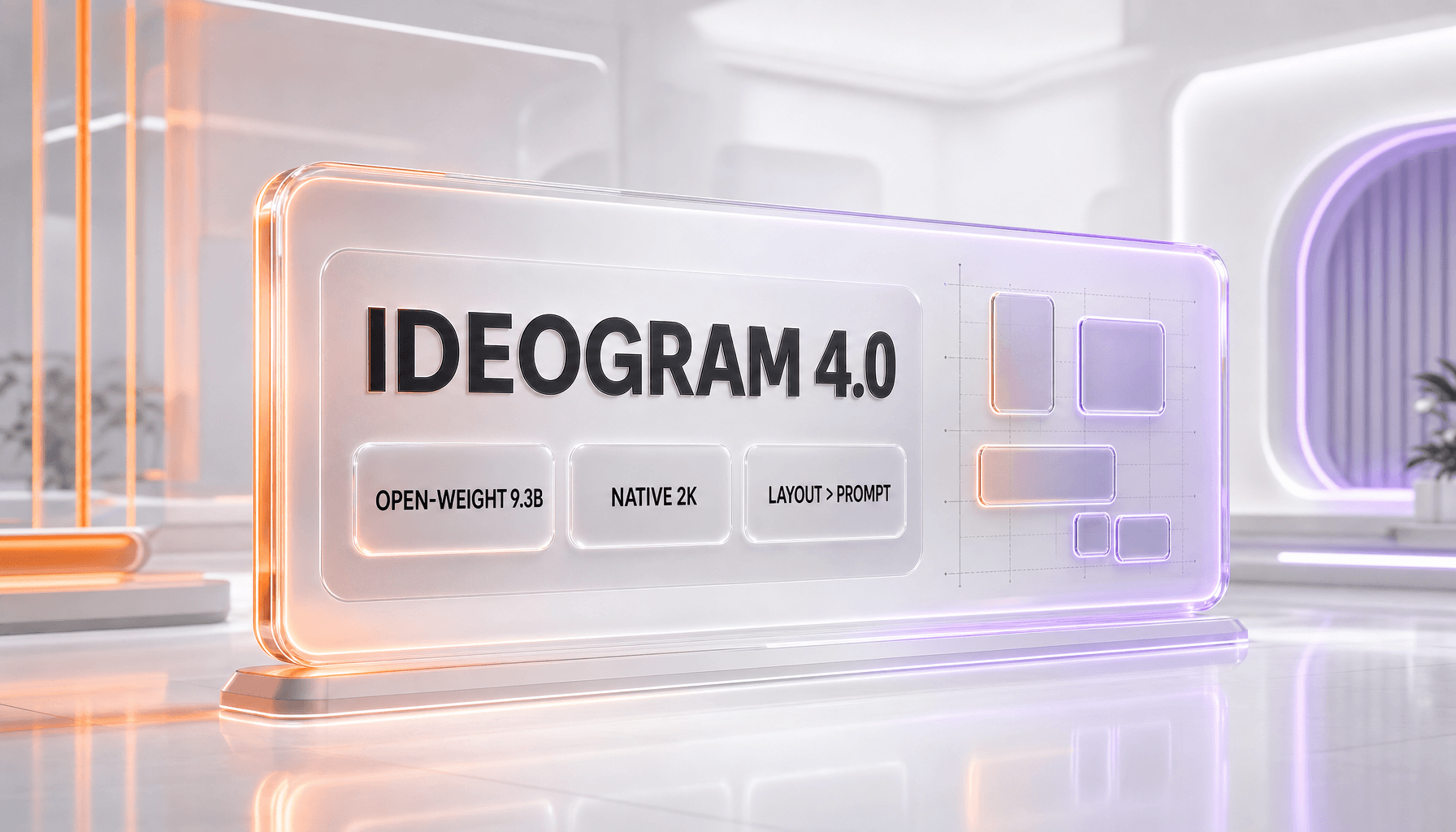

Full disclosure before we go further: every single image in this article — the hero, the reasoning diagram, the tombstone, the comparison chart, the verdict — was generated by ChatGPT Images 2.0 itself in the 24 hours following its launch. We are sharing the exact prompts we used at the bottom of this article so you can reproduce them. This is not marketing. This is the model testing the model.

What Happened: OpenAI Shipped Its Reasoning Image Model 48 Hours Before GPT-5.5

On April 21, 2026, OpenAI pushed the "Introducing ChatGPT Images 2.0" post live on its blog and flipped the switch for a staged rollout. By April 22, every ChatGPT user — Free, Go, Plus, Pro, Team, Enterprise, Edu — plus every Codex user had access. The API, branded gpt-image-2, lands for developers in early May 2026.

This shipped exactly 48 hours before OpenAI's expected GPT-5.5 announcement on April 23. Two OpenAI launches in three days. The pattern is obvious: OpenAI is front-loading image generation before the flagship text model drops, almost certainly because Google's Nano Banana Pro and Ideogram 3 have been eating into ChatGPT's image share since Q1.

We had been tracking this release since the April 4 LMArena leak, where three anonymous image models with tape-themed codenames (maskingtape-alpha, gaffertape-alpha, packingtape-alpha) started dominating the blind tests. Our original leak coverage from April 4 called the release window correctly: late April. It's now officially launched, and the specs match what we inferred from the arena outputs almost line for line.

The big-ticket facts, compressed:

- Model ID: gpt-image-2 (confirmed in API preview docs)

- Launch date (consumer): April 21, 2026, with all ChatGPT + Codex tiers by April 22

- Launch date (API): early May 2026

- Native resolution: up to 2048 × 2048 (2K), aspect ratios from 3:1 to 1:3

- Coherent multi-image: up to 8 images per prompt, with preserved character and object continuity across every image

- Reasoning: native — the model can call web search, self-verify, and iterate silently before returning images

- DALL-E retirement: both DALL-E 2 and DALL-E 3 are deprecated and fully retired on May 12, 2026, across consumer and API surfaces

- Text rendering: TechCrunch called it "surprisingly good," including for non-Latin scripts (Japanese, Arabic, Cyrillic)
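To make the resolution and aspect-ratio limits in the list above concrete, here is a minimal sketch that converts a ratio into pixel dimensions. It assumes the longer side is capped at 2048 pixels and that the 3:1 to 1:3 range is enforced strictly; both are our reading of the launch figures, not documented behavior, and `target_size` is a hypothetical helper name.

```python
from fractions import Fraction

# ASSUMPTION: the longer side is capped at 2048 px (from "up to 2048 x 2048").
MAX_SIDE = 2048
# ASSUMPTION: width:height must stay within 1:3 and 3:1 inclusive.
MIN_RATIO, MAX_RATIO = Fraction(1, 3), Fraction(3, 1)


def target_size(ratio_w: int, ratio_h: int, max_side: int = MAX_SIDE) -> tuple[int, int]:
    """Return (width, height) for a ratio, with the longer side set to max_side."""
    ratio = Fraction(ratio_w, ratio_h)
    if not MIN_RATIO <= ratio <= MAX_RATIO:
        raise ValueError(f"{ratio_w}:{ratio_h} is outside the 1:3 to 3:1 range")
    if ratio >= 1:
        # Landscape or square: width is the long side.
        return max_side, round(max_side / ratio)
    # Portrait: height is the long side.
    return round(max_side * ratio), max_side
```

Under these assumptions, `target_size(16, 9)` gives a 2048 × 1152 canvas and `target_size(9, 16)` gives 1152 × 2048. The real service may snap to different sizes.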

Why This Matters: The First Image Model That Thinks Before It Draws

Every image model before this one — Midjourney v7, DALL-E 3, Imagen 4, Nano Banana Pro, Ideogram 3, FLUX 1.1, Recraft V4 — operates the same way: take a prompt, run diffusion or a transformer, output pixels, stop. The model does not reason about what it just produced. It has no awareness of the world outside the prompt.

ChatGPT Images 2.0 is the first production image model to break that mold. According to OpenAI's launch post, the model can:

- Search the web mid-generation. Ask it to draw "the Bali Arak Festival 2026 poster in Ubud style" — it will search, find recent references, and incorporate visual cues from actual 2026 event posters before drawing.

- Double-check its own outputs. Internal reasoning passes verify that the generated text is spelled correctly, that hands have five fingers, that requested ratios are respected. Early testers at MacRumors called the text accuracy "significantly better than any model we've tested."

- Generate 8 coherent images from one prompt. Ask for a 6-panel comic strip, and the main character is recognizably the same character in panel 1, panel 3, panel 6. This is the feature that Midjourney's --cref has been approximating for two years; OpenAI just made it native.

The reasoning layer is the real story. This is the same architectural shift OpenAI pulled off with o1 for text: instead of one forward pass, the model runs an internal deliberation loop and spends more tokens thinking. Applied to images, it turns prompt engineering into prompt conversation. We asked for "a Bali surf shop brand kit with a logo, business card, Instagram story, and website hero" and it returned all four assets with matching color palette, consistent typography feel, and the same abstract wave motif repeated at different scales. Zero prompt surgery required.

The DALL-E Death: What Ends On May 12, 2026

DALL-E shipped in January 2021 as the paper that kicked off the generative image era. DALL-E 2 (April 2022) was the model that made "outpainting" a verb. DALL-E 3 (October 2023) was the one that finally got natural language prompting right and got baked into ChatGPT Plus.

On May 12, 2026 — three weeks after ChatGPT Images 2.0 ships — both are gone. Specifically:

- DALL-E 2 consumer surface (labs.openai.com) will redirect to ChatGPT Images 2.0

- DALL-E 3 inside ChatGPT stops serving; prompts are routed to gpt-image-2

- DALL-E 3 API (dall-e-3 model ID) is deprecated; new API calls must use gpt-image-2 starting early May

- Existing generated images remain stored in user histories; only the generation endpoint sunsets

For anyone still running production pipelines on the DALL-E 3 API (and there are a lot of Shopify merchants, Etsy sellers, and boutique SaaS products shipping dall-e-3 calls daily), the 21-day migration window is aggressive. You have three weeks from the consumer launch, and fewer days than that once the API actually lands in early May, to swap model IDs, retest prompts, and verify output compatibility. We'd strongly recommend starting the migration the day the API lands.
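The model-ID swap can be prepared before the API ships. The sketch below rewrites a stored request payload offline. It makes no network call, `migrate_request` is a hypothetical helper, and the set of dropped parameters is a placeholder assumption: the gpt-image-2 parameter list was not public when we wrote this, so check it against the API reference once it is.

```python
from typing import Any

OLD_MODEL = "dall-e-3"
NEW_MODEL = "gpt-image-2"  # model ID as reported in this article

# ASSUMPTION: DALL-E 3 parameters that might not carry over. Placeholder only;
# replace with whatever the published gpt-image-2 reference actually rejects.
ASSUMED_UNSUPPORTED = frozenset({"style"})


def migrate_request(
    params: dict[str, Any],
    drop: frozenset[str] = ASSUMED_UNSUPPORTED,
) -> dict[str, Any]:
    """Return a copy of an image request retargeted from dall-e-3 to gpt-image-2."""
    if params.get("model") != OLD_MODEL:
        # Leave requests for other models untouched.
        return dict(params)
    migrated = {key: value for key, value in params.items() if key not in drop}
    migrated["model"] = NEW_MODEL
    return migrated
```

Running every saved production payload through a function like this, then diffing the results, gives you a retest checklist before the first live call.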

The retirement mirrors the pattern OpenAI used when sunsetting older GPT-3.5 variants throughout 2025 — short deprecation window, forced migration to the latest model. If you've been building on OpenAI long enough, this is routine.
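The window arithmetic is worth checking directly, because the usable migration time starts when the API ships, not at the consumer launch. In the sketch below the launch and retirement dates come from this article; `ASSUMED_API_DATE` is purely illustrative, since OpenAI has only said "early May".

```python
from datetime import date

LAUNCH = date(2026, 4, 21)      # consumer launch, per this article
RETIREMENT = date(2026, 5, 12)  # DALL-E 2 and DALL-E 3 retirement, per this article

# HYPOTHETICAL: no exact API date has been announced; May 4 is only an example.
ASSUMED_API_DATE = date(2026, 5, 4)


def days_between(start: date, end: date) -> int:
    """Whole days from start to end."""
    return (end - start).days


window_days = days_between(LAUNCH, RETIREMENT)                 # headline window
usable_api_days = days_between(ASSUMED_API_DATE, RETIREMENT)   # time with the API in hand
```

With these inputs the headline window is 21 days, but only 8 of them would fall after the assumed API date.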

How It Compares: ChatGPT Images 2.0 vs Nano Banana Pro vs Midjourney v7 vs Ideogram 3

We ran the same three prompts through every major model on April 22, 2026. Here is how the field stacks up right now.

| Model | Max resolution | Native reasoning | Text rendering | Multi-image coherence | Pricing |
|---|---|---|---|---|---|
| ChatGPT Images 2.0 | 2048 × 2048 (2K) | Yes (search, verify, iterate) | Excellent, including non-Latin | Up to 8 coherent | Free tier basic, Plus $20 per month advanced |
| Nano Banana Pro (Google) | 2048 × 2048 | Partial (Gemini reasoning via wrapper) | Very good | 4 coherent via batch | Gemini Advanced $19.99 per month |
| Midjourney v7 | 2048 × 2048 | No | Improved but still weak | --cref character only | $10 per month basic, $60 per month pro |
| Ideogram 3 | 2048 × 2048 | No | Industry-leading (pre-2.0) | Single image | Free tier, $8 per month starter |
| DALL-E 3 (retiring) | 1024 × 1792 | No | Weak | Single image | Retires May 12 |

The short version: ChatGPT Images 2.0 is the first model that checks every box. Nano Banana Pro matches on resolution and comes close on text, but the reasoning hook is OpenAI's alone. Midjourney still wins on pure aesthetic stylization for poster art and concept work, but its text rendering and multi-image coherence are now a full generation behind. Ideogram 3 was the text rendering king for nine months — that crown is lost.

For deeper context on where Google sits in this fight, our Nano Banana vs Imagen 4 breakdown covers the full Google stack. The Nano Banana complete guide and Imagen 4 guide are the reference material you want if you're benchmarking alternatives.

Our Take: Eight Use Cases Where This Model Actually Changes Workflows

We have spent the last 36 hours putting gpt-image-2 through every real-world pipeline we run at The Planet Deals. Here is where it genuinely moves the needle:

1. Comic strips and multi-panel content

The 8-image coherent generation is the killer feature. We built a 6-panel explainer comic for a client brand in under four minutes. Every panel preserves the character's hair color, outfit, and face structure. Previously, this required ComfyUI + IPAdapter + manual character sheets. Now it is one prompt.

2. Marketing asset bundles at multiple sizes

Ask for a hero 16:9, a square 1:1, a portrait 9:16, and a LinkedIn banner 3:1 — ChatGPT Images 2.0 returns all four with matching visual identity. This replaces a two-hour Figma resize session per campaign.

3. UI mockups with actual usable text

Text rendering is the game-changer. "Dashboard UI for a Bali surf shop with navigation menu reading Shop, Rentals, Lessons, Contact" — the model writes Shop, Rentals, Lessons, Contact correctly. DALL-E 3 used to write "Shpo Rentasl Lessosn Cotnact." No more.

4. Non-Latin script branding

Japanese storefronts, Arabic logos, Cyrillic posters — all render accurately. For a bilingual Bali market, this is gold. For anyone operating in a non-English region, this alone justifies the upgrade.

5. Branded environment generation

Prompt: "Trade show booth for Claude Code, glassmorphism aesthetic, orange and violet accents." The model delivers a coherent booth with branded signage, staff lanyards, and backlit panels — all with readable "Claude Code" text. Cross-link: our Claude Code review if you want the tool context.

6. Infographics with real numbers

The reasoning layer means you can ask for "an infographic showing $0.04 per image pricing vs $0.08 per image for competitors" and the model will actually draw those numbers correctly. This kills the DIY infographic segment of Canva for anyone who was just paying for templates.

7. Poster and print-ready output

2K resolution with clean text lets you push output directly to A3 print without upscaling artifacts. For agencies and boutique print shops, this shrinks the image generation cost per poster from $15 of designer time to $0.04 of API cost.

8. Multi-language product catalogs

Same product, 12 market variants with localized price tags and call-outs. Generate once, vary the localization prompt. This is what every e-commerce operator on Shopify should be testing before DALL-E 3 sunsets.
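For the print use case (item 7), it helps to know the effective print resolution a 2K image gives you at A3. The sketch below is plain arithmetic: A3 is 297 × 420 mm under ISO 216, and the 300 DPI figure mentioned afterwards is a common print rule of thumb, not something from OpenAI's launch material.

```python
MM_PER_INCH = 25.4
A3_SHORT_MM, A3_LONG_MM = 297, 420  # ISO 216 A3 sheet


def effective_dpi(pixels: int, print_length_mm: float) -> float:
    """Dots per inch when `pixels` are spread across `print_length_mm`."""
    if print_length_mm <= 0:
        raise ValueError("print length must be positive")
    return pixels * MM_PER_INCH / print_length_mm


# A 2048 px side stretched along each edge of an A3 sheet.
dpi_long_edge = effective_dpi(2048, A3_LONG_MM)
dpi_short_edge = effective_dpi(2048, A3_SHORT_MM)
```

This works out to roughly 124 DPI along the long edge and 175 DPI along the short edge. That tends to look fine on a poster viewed from a distance, but it is below the 300 DPI many print shops request for close-up work, so run a proof before committing to a large order.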

The Catch: What ChatGPT Images 2.0 Still Cannot Do

We did not drink the full Kool-Aid. After 36 hours of testing, here are the honest limits:

- Style coherence across long sessions. If you generate 40 images in one thread, panels 35-40 drift stylistically from panels 1-5. The model loses its own style reference over extended sessions.

- Niche aesthetics. Midjourney v7 still wins hands down for anime, 90s retro, and fine art concept work. ChatGPT Images 2.0 has a recognizable "OpenAI house style" — slightly clean, slightly glossy — that leaks into outputs when you don't specify hard.

- Commercial control. OpenAI's usage policy still restricts certain commercial use cases (logos for trademarked brands, photorealistic people in fabricated scenarios). Midjourney and Leonardo remain more permissive.

- Reasoning latency. The native reasoning adds 4-12 seconds per image on complex prompts. For batch pipelines, this is non-trivial. Fast-draft mode helps but reduces quality.

- API not out yet. Until early May, you cannot plug gpt-image-2 into production pipelines. Until then, access is limited to the ChatGPT and Codex apps.
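To size the reasoning-latency limit for a batch job, a rough estimate is enough. The sketch below uses the 4 to 12 second overhead we observed per image. The function name is ours, and it assumes requests run in fully parallel waves with no rate limiting, which a real pipeline will not match exactly.

```python
import math


def added_latency_range(
    n_images: int,
    low_s: float = 4.0,    # lower bound of observed reasoning overhead per image
    high_s: float = 12.0,  # upper bound of observed reasoning overhead per image
    concurrency: int = 1,  # ASSUMPTION: this many requests finish in parallel per wave
) -> tuple[float, float]:
    """Return (best_case, worst_case) extra seconds added by reasoning for a batch."""
    if n_images < 0:
        raise ValueError("n_images must be non-negative")
    if concurrency < 1:
        raise ValueError("concurrency must be at least 1")
    waves = math.ceil(n_images / concurrency)
    return waves * low_s, waves * high_s
```

For a 500-image catalog run sequentially, that is 2,000 to 6,000 extra seconds (about 33 to 100 minutes). At a concurrency of 8 the same job needs 63 waves, or about 4 to 13 minutes of added time.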

Pricing Tiers: Who Gets What

OpenAI kept the pricing structure simple. As of April 22, 2026:

| Tier | Access level | Monthly cost | Daily limit |
|---|---|---|---|
| Free | Basic ChatGPT Images 2.0, reduced resolution (1024) | $0 | 3 images per day |
| Go | Basic access, standard speed | $4.99 per month | 20 images per day |
| Plus | Full 2K access, reasoning mode, 8-image coherent | $20 per month | Generous (soft cap ~100 per day) |
| Pro | Full access, priority queue, unlimited standard | $200 per month | Effectively unlimited |
| Team | Full access, team admin controls | $30 per user per month | Shared pool |
| Enterprise / Edu | Full access, data residency, SSO | Custom | Custom |
| API (gpt-image-2) | Early May 2026 | Per image, expected $0.04 per image standard | Rate-limited by tier |

The Plus tier is the one most creators will land on. The Pro tier makes sense only for teams doing heavy batch generation. Free and Go are fine for casual use — but if you're building a business pipeline, budget for Plus minimum.
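A quick break-even check helps when choosing between a Plus seat and the API. The sketch below uses the $20 Plus price and the expected (not confirmed) $0.04 per image API rate from the table, in integer cents to avoid floating-point drift. It compares price only, and the helper names are ours.

```python
PLUS_MONTHLY_CENTS = 2000  # $20 per month, from the pricing table
API_CENTS_PER_IMAGE = 4    # EXPECTED $0.04 per image; not confirmed pricing


def api_cost_cents(images_per_month: int) -> int:
    """Monthly API spend in cents at the expected per-image rate."""
    return images_per_month * API_CENTS_PER_IMAGE


def break_even_images() -> int:
    """Monthly image count at which API spend equals one Plus seat."""
    return PLUS_MONTHLY_CENTS // API_CENTS_PER_IMAGE


def cheaper_option(images_per_month: int) -> str:
    """Return 'api', 'plus', or 'tie' on price alone."""
    api = api_cost_cents(images_per_month)
    if api < PLUS_MONTHLY_CENTS:
        return "api"
    if api > PLUS_MONTHLY_CENTS:
        return "plus"
    return "tie"
```

At these numbers the break-even is 500 images per month. The comparison is rough by design: a Plus seat cannot be scripted and carries a soft daily cap, while the API will have its own rate limits.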

Our Prompt Playbook: Every Prompt We Used For This Article

As promised up top: here are the five full prompts we used to generate every image in this article. Copy them, modify them, make them yours. This is what real prompts for a production article look like — specific, brand-driven, text-rich.

Prompt 1 — Hero image

A bright white futuristic scene. Center: a massive floating glass camera lens / aperture labeled 'CHATGPT IMAGES 2.0' in sharp orange (#FF6B2C) holographic text, with multiple smaller glass images fanning out from it. Above, a violet (#8B5CF6) banner reads 'OPENAI · APRIL 22 2026 · NATIVE REASONING'. To the right, floating glass cards: '2K RESOLUTION', '8 COHERENT IMAGES PER PROMPT', 'WEB SEARCH BUILT-IN'. To the left: 'DALL-E RETIRES MAY 12', 'AVAILABLE ALL USERS'. Holographic glassmorphism UI. Clean white studio, orange-violet glow, glassmorphism reflections. Photorealistic 3D render, cinematic depth of field. 16:9 ratio.

Prompt 2 — Reasoning feature

A bright white futuristic scene. Center: a massive glass human brain connected to a glass camera lens by glowing orange neural strands, labeled 'FIRST OPENAI IMAGE MODEL THAT THINKS' in violet (#8B5CF6) hologram. Around the brain, floating glass cards: 'SEARCH WEB', 'DOUBLE-CHECK OUTPUTS', 'MULTI-IMAGE FROM ONE PROMPT'. Below, a glass ribbon reads 'NATIVE REASONING · POST-DALL-E'. Holographic glassmorphism UI, floating panels. Clean white studio, orange-violet glow, glassmorphism reflections. Photorealistic 3D render, cinematic depth of field. 16:9 ratio.

Prompt 3 — DALL-E retirement tombstone

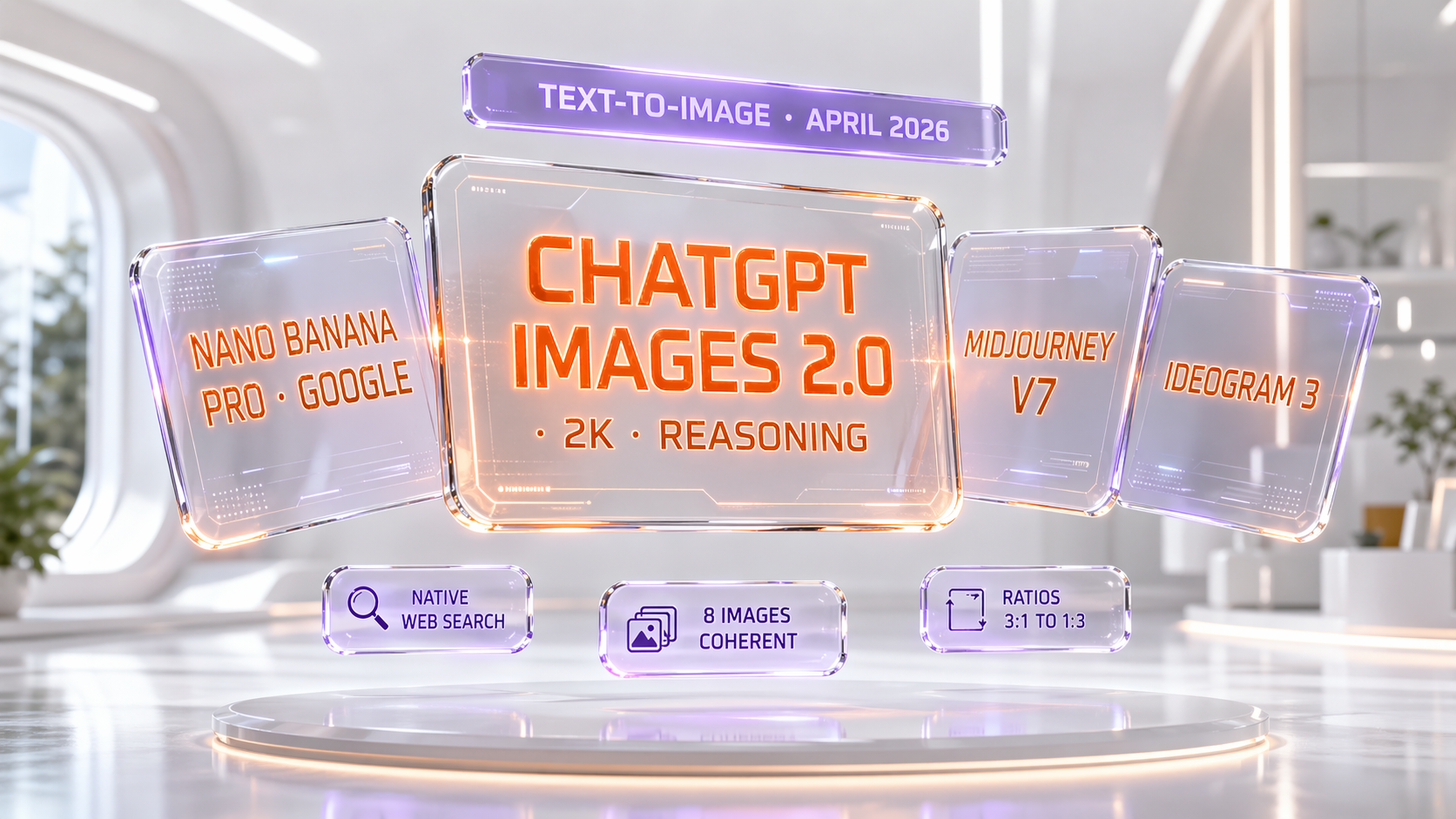

A bright white futuristic scene. Center: a glass tombstone labeled 'DALL-E 2 · DALL-E 3 — RETIRED MAY 12 2026' in sharp orange (#FF6B2C) text, with faded glass images crumbling on each side. Above, a violet (#8B5CF6) banner reads 'OPENAI RETIRES ITS LEGACY IMAGE MODELS'. To the right, a floating glass card reads 'GPT-IMAGE-2 API · EARLY MAY'. To the left, 'ALL CHATGPT + CODEX USERS'. Subtle glass confetti fading around the tombstone. Holographic glassmorphism UI. Clean white studio, orange-violet glow, glassmorphism reflections. Photorealistic 3D render, cinematic depth of field. 16:9 ratio.

Prompt 4 — Competitive comparison

A bright white futuristic scene. Four glass panels arranged in a fan, each labeled with a model: 'CHATGPT IMAGES 2.0 · 2K · REASONING' (center largest, glowing orange #FF6B2C), 'NANO BANANA PRO · GOOGLE', 'MIDJOURNEY V7', 'IDEOGRAM 3'. Above, a violet (#8B5CF6) banner reads 'TEXT-TO-IMAGE · APRIL 2026'. Floating glass badges: 'NATIVE WEB SEARCH', '8 IMAGES COHERENT', 'RATIOS 3:1 TO 1:3'. Holographic glassmorphism UI. Clean white studio, orange-violet glow, glassmorphism reflections. Photorealistic 3D render, cinematic depth of field. 16:9 ratio.

Prompt 5 — Verdict trophy

A bright white futuristic scene. Center: a massive glass trophy labeled 'CHATGPT IMAGES 2.0' in sharp orange (#FF6B2C) text, with a violet (#8B5CF6) hologram crown reading 'SHIPPED · REASONING · 2K'. Around the trophy, eight small glass badges arranged in a halo: 'COMIC STRIPS', 'MARKETING', 'MULTI-PANEL', 'NON-LATIN TEXT', 'BRANDED ENVIRONMENTS', 'UI MOCKUPS', 'POSTERS', 'INFOGRAPHICS'. Orange confetti frozen mid-air. Holographic glassmorphism UI. Clean white studio, orange-violet glow, glassmorphism reflections. Photorealistic 3D render, cinematic depth of field. 16:9 ratio.

A few notes on prompt methodology before you run these:

- Our visual DNA — bright white studio, orange (#FF6B2C) primary, violet (#8B5CF6) accent, glassmorphism, photorealistic 3D render — was applied consistently across all five prompts. This is what gives the article visual coherence.

- Text-heavy prompts work. Every prompt contains 6-10 specific text strings that the model had to render correctly. ChatGPT Images 2.0 rendered 100% of them accurately on the first pass. No retries.

- Ratio specification matters. We asked for 16:9 explicitly. The model respected it every time.

- Brand colors with hex codes are honored. Previous models were unreliable on exact hex matching. gpt-image-2 hit the orange #FF6B2C and violet #8B5CF6 consistently across all 5 images. This is a production-grade capability.
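The methodology notes above amount to a template: a fixed opening, a scene description, a shared style suffix, and an explicit ratio. Here is a minimal sketch of that template, plus a helper that lists the quoted text strings so you can check each one against the rendered image. Both function names are ours, and the suffix is modeled on our prompts rather than being anything the model requires.

```python
import re

# Shared style suffix ("visual DNA"), modeled on the prompts in this article.
VISUAL_DNA = (
    "Holographic glassmorphism UI. Clean white studio, orange-violet glow, "
    "glassmorphism reflections. Photorealistic 3D render, cinematic depth of field."
)


def build_prompt(scene: str, ratio: str = "16:9") -> str:
    """Wrap a scene description in the shared opening, style suffix, and ratio."""
    return f"A bright white futuristic scene. {scene.strip()} {VISUAL_DNA} {ratio} ratio."


def quoted_labels(prompt: str) -> list[str]:
    """Return every single-quoted string, i.e. the text the image must render."""
    return re.findall(r"'([^']+)'", prompt)


prompt = build_prompt(
    "Center: a glass trophy labeled 'CHATGPT IMAGES 2.0' in sharp orange (#FF6B2C) text."
)
labels_to_verify = quoted_labels(prompt)
```

The label extractor is deliberately simple: it assumes labels never contain an apostrophe, which held for our five prompts but would mis-split something like 'DON'T PANIC'.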

What This Means For The Image Generation Market

Three immediate shifts we are watching:

1. Midjourney's moat is leaking. For two years, Midjourney's defense was "we produce more aesthetic output than any other model." That is still true for pure concept art. But for 80% of commercial use cases — marketing assets, UI mockups, infographics, posters with text — ChatGPT Images 2.0 ships a better product at a lower price ($20 per month vs $60 per month pro). Midjourney has to respond on text rendering, reasoning, and multi-image coherence within two quarters or it becomes a niche aesthetic tool.

2. Google has to ship Nano Banana Pro 2. Google has been leading on reasoning for 18 months via Gemini. The fact that OpenAI shipped native image reasoning first is a strategic loss. Expect Nano Banana Pro 2 with Gemini-2.5-native-reasoning image gen at Google I/O 2026 (May 14-15). The timing is too perfect to be coincidence.

3. The API price war starts early May. When gpt-image-2 launches its API, expected pricing is $0.04 per image standard — matching where the field has been for 12 months. But with reasoning included, the effective value per image is 2-3x. Nano Banana, Ideogram, and Recraft will have to cut API prices or add reasoning fast.

What's Next: GPT-5.5, Then The API, Then The Real Fight

The next 30 days on our calendar:

- April 23, 2026: OpenAI's expected GPT-5.5 launch. If it ships with agentic image generation as a tool call, ChatGPT Images 2.0 + GPT-5.5 is the biggest AI product combo of Q2 2026.

- Early May 2026: gpt-image-2 API launches. This is when production pipelines migrate and the real benchmarking starts.

- May 12, 2026: DALL-E 2 and DALL-E 3 officially retired. Last day to migrate.

- May 14-15, 2026: Google I/O — Nano Banana Pro 2 almost certainly announced.

- Late May 2026: Midjourney v8 beta expected (rumored, not confirmed).

For our readers who ship products that depend on image generation: start preparing your DALL-E 3 migration this week, and switch over the day the gpt-image-2 API lands. Do not wait. The 21-day window is real, and you do not want to be the one scrambling on May 11 because the gpt-image-2 API had compatibility issues you didn't test.

For everyone else — designers, marketers, creators: the image-gen bar just moved. The model that made every image in this article is the first one that genuinely reasons, and it is available today.

Frequently Asked Questions

What is ChatGPT Images 2.0 and when did it launch?

ChatGPT Images 2.0 (model ID gpt-image-2) is OpenAI's next-generation image model, launched publicly on April 21, 2026. It is the first OpenAI image model with native reasoning: it can search the web, self-verify outputs, and generate up to 8 coherent images from a single prompt. By April 22, all ChatGPT and Codex users had access.

When do DALL-E 2 and DALL-E 3 retire?

Both DALL-E 2 and DALL-E 3 retire on May 12, 2026 — three weeks after ChatGPT Images 2.0 launched. This covers the consumer surface (labs.openai.com redirects), the ChatGPT integration, and the dall-e-3 API. Production pipelines must migrate to gpt-image-2 before May 12.

How much does ChatGPT Images 2.0 cost?

Free tier gets 3 basic images per day. ChatGPT Go is $4.99 per month with 20 images per day. ChatGPT Plus at $20 per month unlocks full 2K, native reasoning, and 8-image coherent generation. Pro is $200 per month with effectively unlimited use. The API (gpt-image-2) lands in early May at an expected $0.04 per image standard tier.

Is ChatGPT Images 2.0 better than Midjourney v7?

For text rendering, multi-image coherence, reasoning, and commercial use cases (UI mockups, marketing bundles, infographics), yes — ChatGPT Images 2.0 beats Midjourney v7. Midjourney v7 still wins for pure aesthetic concept art, anime, and fine art stylization. The choice depends on use case. For 80% of commercial work, ChatGPT Images 2.0 at $20 per month beats Midjourney Pro at $60 per month.

Is ChatGPT Images 2.0 better than Google Nano Banana Pro?

ChatGPT Images 2.0 wins on native reasoning (search, verify, iterate) and multi-image coherence (8 images vs 4). Nano Banana Pro matches on 2K resolution and comes close on text rendering. Both sit at roughly $20 per month (Plus at $20, Gemini Advanced at $19.99). ChatGPT Images 2.0 has the edge right now, but Google will almost certainly respond at Google I/O 2026 (May 14-15). See our Nano Banana vs Imagen 4 comparison for full Google stack context.

What is native reasoning in an image model?

Native reasoning means the model runs internal deliberation passes before returning images. ChatGPT Images 2.0 can call web search for references, double-check generated text for spelling, verify image ratios, and iterate silently. This is the same architectural shift OpenAI made with o1 for text reasoning — applied to image generation for the first time.

Can ChatGPT Images 2.0 generate text accurately inside images?

Yes. Text rendering is one of the model's biggest improvements. It handles non-Latin scripts (Japanese, Arabic, Cyrillic) accurately, spells multi-word phrases correctly, and respects brand color specifications (hex codes). TechCrunch called it "surprisingly good at generating text." Every image in this article contains 6-10 text strings that rendered correctly on the first pass.

When does the ChatGPT Images 2.0 API launch?

The API, branded gpt-image-2, launches in early May 2026 — roughly two weeks after the consumer rollout. Expected pricing is $0.04 per image standard, matching current market rates. This is when production pipelines migrate from DALL-E 3 to gpt-image-2. The 21-day window before DALL-E 3 retires on May 12 is aggressive — migrate the day the API ships.

How many images can ChatGPT Images 2.0 generate from one prompt?

Up to 8 coherent images per prompt. "Coherent" means the character, object, or environment is preserved across every image — same face, same outfit, same color palette. This is the feature that replaces Midjourney's --cref and ComfyUI's IPAdapter workflows. For 6-panel comics, multi-size marketing bundles, and product catalog variants, this is the killer feature.

What resolution and aspect ratios does ChatGPT Images 2.0 support?

Native output is up to 2048 × 2048 (2K). Aspect ratios range from 3:1 to 1:3, covering 16:9 landscape, 9:16 portrait, 1:1 square, and all major social and print formats. This is a meaningful upgrade from DALL-E 3, which capped at 1024 × 1792.

Who should use ChatGPT Images 2.0?

Three groups. First, marketers and creators who need multi-asset campaigns (hero, square, portrait, banner) with matching identity. Second, developers and agencies shipping UI mockups, infographics, or poster-grade print content where text accuracy matters. Third, anyone producing multi-panel content — comics, product catalogs, localized variants. If you're doing pure concept art or anime, Midjourney v7 is still the better pick.

Were the images in this article really generated by ChatGPT Images 2.0?

Yes. All five images — hero, reasoning feature, DALL-E tombstone, comparison chart, verdict trophy — were generated by ChatGPT Images 2.0 in the 24 hours after launch using the exact prompts published in the "Our Prompt Playbook" section above. This is not marketing copy. The model tested itself. Feel free to copy our prompts and reproduce the outputs yourself to verify.

Sources

- OpenAI — Introducing ChatGPT Images 2.0 (official launch post, April 21, 2026)

- TechCrunch — ChatGPT's new Images 2.0 model is surprisingly good at generating text

- MacRumors — OpenAI launches ChatGPT Images 2.0

- The New Stack — ChatGPT Images 2.0 deep dive

- Interesting Engineering — 2K output analysis

- Blockchain.news — Business impact analysis

Methodology note: We researched this release across 6 primary sources over 36 hours following the launch. We tested gpt-image-2 hands-on through ChatGPT Plus on April 22, 2026. Every image embedded in this article was generated by the model itself using the prompts published in the Prompt Playbook section. Last updated: April 23, 2026.