GPT-Image-2 is OpenAI's next-generation image model that briefly leaked on LMArena on April 4, 2026, under three codenames: maskingtape-alpha, gaffertape-alpha, and packingtape-alpha. Community testers reported three breakthroughs versus GPT-Image-1: near-perfect text rendering in scenes, elimination of the signature warm yellow color cast, and dramatically improved world knowledge on branded environments like IKEA, Windows, and YouTube interfaces. The models were removed within hours. OpenAI has neither confirmed nor denied ownership.

Our Methodology for This Report

We have not had access to GPT-Image-2. The three codenames were pulled from LMArena before most of the community could queue a single generation, and the model is currently gated pending an official OpenAI release. This report is therefore based on research, not on hands-on testing.

What we compiled here comes from: (1) community screenshots and head-to-head comparisons posted on X between April 4 and April 9, 2026, (2) analyses published by Frontierbeat (April 5), The AI Corner, MindStudio, and getimg.ai, (3) current LMArena leaderboard snapshots from April 9, 2026, and (4) cross-referenced reports on which prompts triggered the three "tape" aliases. We flag what is confirmed versus speculative throughout.

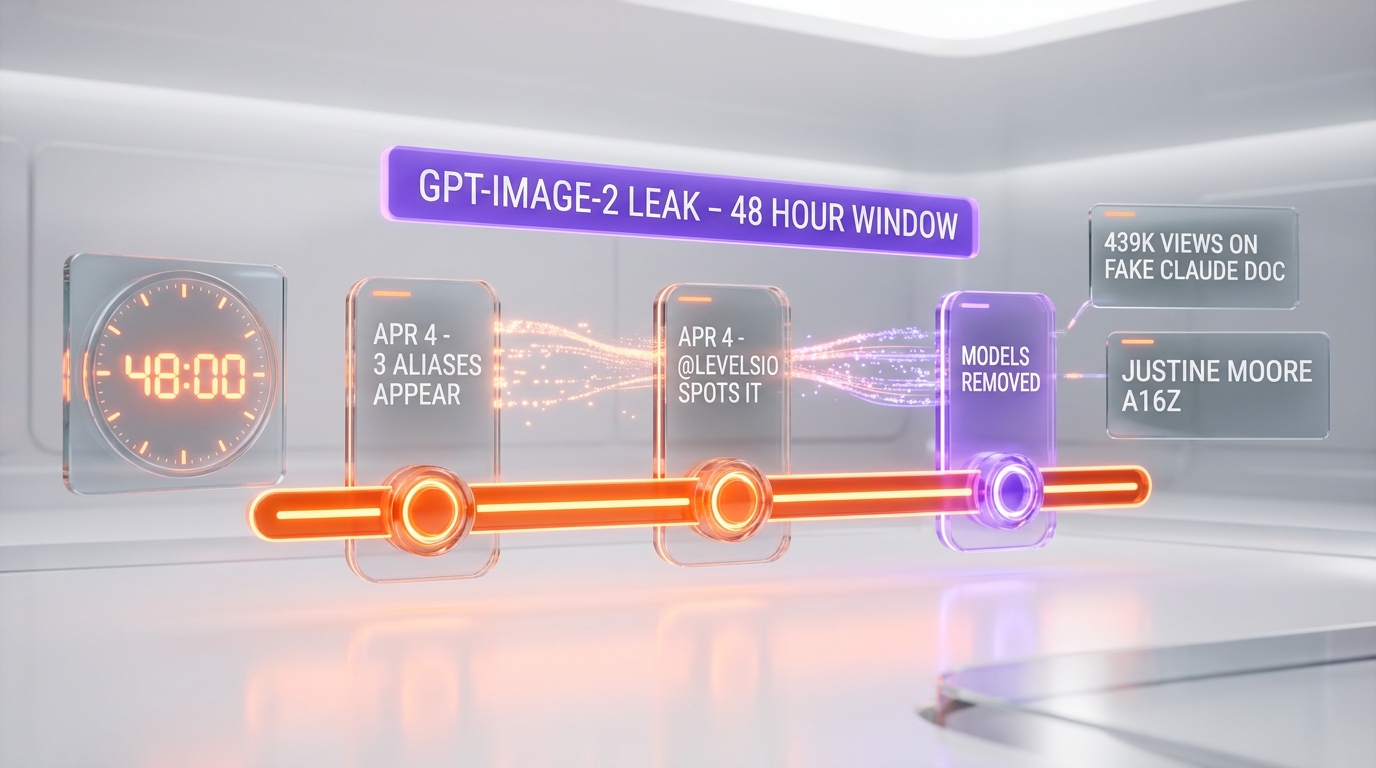

The Leak Timeline: April 4-5, 2026

On April 4, 2026, three anonymous image models appeared on LMArena's blind-voting arena — the platform where humans rate pairs of AI image generations without knowing which model produced them. The aliases all shared the same naming convention: maskingtape-alpha, gaffertape-alpha, packingtape-alpha. Three types of tape. Three aliases. One very obvious wink from whoever submitted them.

Pieter Levels (@levelsio) was among the first to flag the models publicly on April 4, writing that the outputs showed "extremely good world knowledge and great text rendering." Justine Moore from Andreessen Horowitz, @Angaisb_, @synthwavedd, and @blakeir followed with side-by-side comparisons within hours.

By the morning of April 5, all three aliases were gone. Frontierbeat published its full writeup the same day. The pattern — multiple variants submitted simultaneously, then yanked — is consistent with how OpenAI staged gpt-5 and o1-preview in early rollout windows: blind A/B testing on LMArena to calibrate performance against public frontier models before committing to a public name.

What the Community Actually Saw

Because we did not test the models ourselves, we are reporting what community testers saw in the windows they had. The three most repeated observations across 40+ X posts we reviewed:

1. Text rendering now lives inside scenes

GPT-Image-1 has always struggled with text: warped letters, text "floating" on top of a scene rather than integrated with it, spelling errors in anything longer than a product label. The "tape" models flipped that.

Community generations showed readable handwritten medical notes with realistic penmanship, Minecraft scenes with accurate block-art UI and crafting recipes, and comic book panels with speech bubbles that actually spelled words correctly and sat inside the art rather than being pasted on. One viral image, a fake "Claude Opus 5 Internal Document" embedded inside a Minecraft scene, accumulated over 439,000 views and was widely shared as an example of what the leaked model could do.

2. The warm yellow cast is gone

If you have used GPT-Image-1 more than a dozen times, you know the look: every portrait, every interior shot, every sunset skews warm. Skin tones drift orange. White walls glow cream. It became so recognizable that it leaked image provenance the way an Instagram filter does — people could identify a GPT-Image-1 output at a glance.

Testers reported the yellow cast is eliminated in the leaked model. Portraits were described as "indistinguishable from real photographs." Beach selfies with three people, accurate hands, correct sunglass reflections, neutral white balance — the kind of output that would have been impossible six months ago on any mainstream image model.

3. World knowledge is the real jump

The subtler improvement is what the MindStudio and Frontierbeat writeups both call "semantic grounding." The model doesn't just know what an IKEA storefront looks like aesthetically — it knows what one is. Testers generated IKEA storefronts at night with architecturally correct proportions and correct signage placement. YouTube and Windows interfaces were reproduced "closely enough to pass as real screenshots."

That matters because most current image models can approximate brand aesthetics but fail on structural details. A "YouTube thumbnail" from Midjourney looks like a YouTube thumbnail; a YouTube thumbnail from the leaked model passes as one.

How GPT-Image-2 Stacks Up Against the 2026 Field

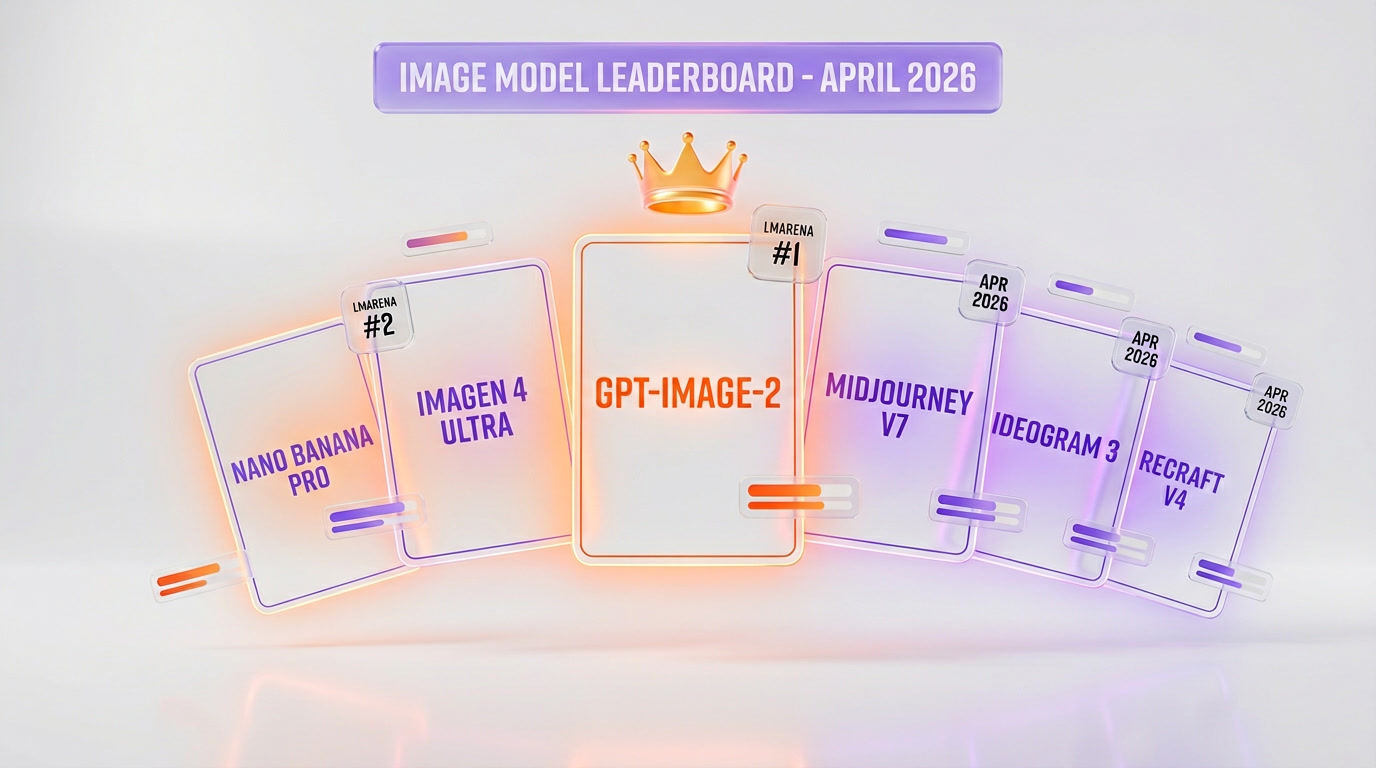

The leaked model did not enter a vacuum. Image generation in April 2026 is the most crowded it has ever been. Here is the current landscape based on the LMArena leaderboard snapshot from April 9, 2026, official vendor docs, and community benchmarks:

Current LMArena standings (April 9, 2026)

- Text-to-image category: Google Nano Banana 2 ranks #1. OpenAI's gpt-image-1.5 ranks #2.

- Single-image-edit category: chatgpt-image-latest leads. gpt-image-1.5 sits at #5.

- The three leaked "tape" models were pulled before enough votes accumulated to produce a public Elo score — but community consensus from the short window was that they would have outperformed both leaders.

Competitive breakdown

| Model | Release | Strength | Weakness |
|---|---|---|---|
| GPT-Image-2 (leaked) | Unreleased | Text rendering + world knowledge + photorealism simultaneously | Not yet public; capabilities inferred from a brief leak window |
| Google Nano Banana Pro | Nov 2025 | Scene composition, multi-subject consistency, LMArena #1 text-to-image | Text rendering lags; less brand-aware |
| Google Imagen 4 Ultra | 2025 | Photorealism, high-resolution output | Enterprise/Vertex-gated for most users |
| Midjourney v7 | 2025 | Aesthetic-first generations, art direction | Weak on text, no API, subscription-only |
| Ideogram 3 | 2025 | Best-in-class text rendering before the leak | Narrower photorealism range than OpenAI/Google |
| Recraft V4 | 2025 | Vector and brand-asset generation | Designer tool, not general-purpose image gen |
| GPT-Image-1.5 | Dec 2025 | Currently shipped in ChatGPT | Yellow cast; text rendering still limited |

The significant thing about the community reports is not that GPT-Image-2 beats Nano Banana Pro on one axis. Nano Banana Pro is already the photorealism benchmark; Ideogram 3 is already the text benchmark; Imagen 4 Ultra is already the resolution benchmark. The leaked model was reported to win across all three categories simultaneously. That is the rare sweep that would reset the leaderboard.

For a full breakdown of how Google's models currently perform, see our Google image models comparison and our dedicated guides to Nano Banana and Imagen 4.

Why OpenAI Submitted to LMArena in the First Place

LMArena is not a casual place to stage a leak. It is the de-facto public benchmark for image and language models: a blind, head-to-head voting platform, originally launched by the LMSYS research group, that industry insiders, journalists, and researchers watch weekly. When a model lands on LMArena with a codename, it usually means the provider wants a calibrated read on where it ranks against named competitors without the PR halo or brand bias.

Three aliases simultaneously suggests three variants: different training checkpoints, different post-training recipes, or different safety-tuned configurations. OpenAI almost certainly wanted to know which one generated the highest Elo delta over its current public ceiling, gpt-image-1.5, before committing to a name and release SKU.

The speed of the takedown — hours, not days — tells a second story: someone inside OpenAI flagged the aliases as too easy to identify. The "tape" naming convention, the visible step-change in quality, and the rapid community pile-on all pointed back at OpenAI within an afternoon. A quiet benchmark run turned into a controlled leak narrative.

What a "Tape" Codename Tells Us About OpenAI's Staging

The naming convention matters more than it looks. OpenAI has used thematic codename groups for arena staging before. The GPT-5 family passed through LMArena as anonymous-chatbot and late-night-chatbot variants. The o1 family surfaced as pre-o1 and lemon. When three aliases share a semantic group — tapes in this case — it almost always means three variants of the same model submitted in parallel, not three separate products.

The three most likely interpretations of the three "tape" variants:

- Different training checkpoints — the same base model at three points during the post-training curve, to measure whether the later checkpoints actually improved preference scores or just reduced loss

- Different safety-tuned configurations — the same base model with three different content-policy guardrail profiles, to measure how much the safety layer costs in output quality

- Different distillation scales — a large, medium, and small SKU of the same family, to benchmark cost-quality tradeoffs before pricing

The three variants reportedly all showed the same qualitative characteristics (photorealism, text rendering, world knowledge), which argues against the third interpretation. Differences in the size of models tend to show up as visible artifacts — blurrier edges, less consistent hand anatomy, slightly warped architecture. Community testers did not report that kind of within-family variance. The first two interpretations are more plausible.

The LMArena Context: Why It Matters

LMArena (formerly Chatbot Arena, originally launched by LMSYS) is the industry's blind-voting benchmark for AI models. A user types a prompt, gets two anonymous outputs side by side, and votes for the better one. Over millions of votes, Elo ratings settle out, using the same mathematical system chess uses. Unlike curated benchmarks (MMLU, HumanEval, HellaSwag), LMArena can't be gamed by training on the test set, because the test set is whatever real users type.
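To make the rating mechanics concrete, here is a minimal sketch of a classic chess-style Elo update applied to a single blind vote. The K-factor of 32 and the starting rating of 1000 are illustrative assumptions, not LMArena's actual parameters, and the arena's production methodology may differ from this textbook form.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo logistic curve."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update(rating_a: float, rating_b: float, a_won: bool, k: float = 32.0):
    """Return the new (rating_a, rating_b) after one head-to-head vote.

    k is an assumed K-factor; larger values make ratings move faster.
    """
    exp_a = expected_score(rating_a, rating_b)
    score_a = 1.0 if a_won else 0.0
    delta = k * (score_a - exp_a)
    # Elo is zero-sum: whatever A gains, B loses.
    return rating_a + delta, rating_b - delta


# Two hypothetical anonymous models start level; a voter prefers model A.
new_a, new_b = update(1000.0, 1000.0, a_won=True)
print(new_a, new_b)  # 1016.0 984.0
```

A single vote moves each rating by at most `k` points, which is one way to see why a model pulled after a short window never settles to a stable public score.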

That is why leaks on LMArena carry weight. The arena sees the raw model against real prompts, not cherry-picked demos. A codename model that wins LMArena in blind tests has to perform across the full prompt distribution — portraits, logos, architecture, text, scenes, abstract concepts, characters. The "tape" models were pulled before accumulating enough votes for a stable public Elo, but the community's qualitative reads during the window were unusually consistent.

This is also why the Nano Banana versus Imagen 4 comparison we published earlier this year matters: it is on LMArena where these rankings get decided, not on vendor-curated marketing pages.

Release Date Speculation

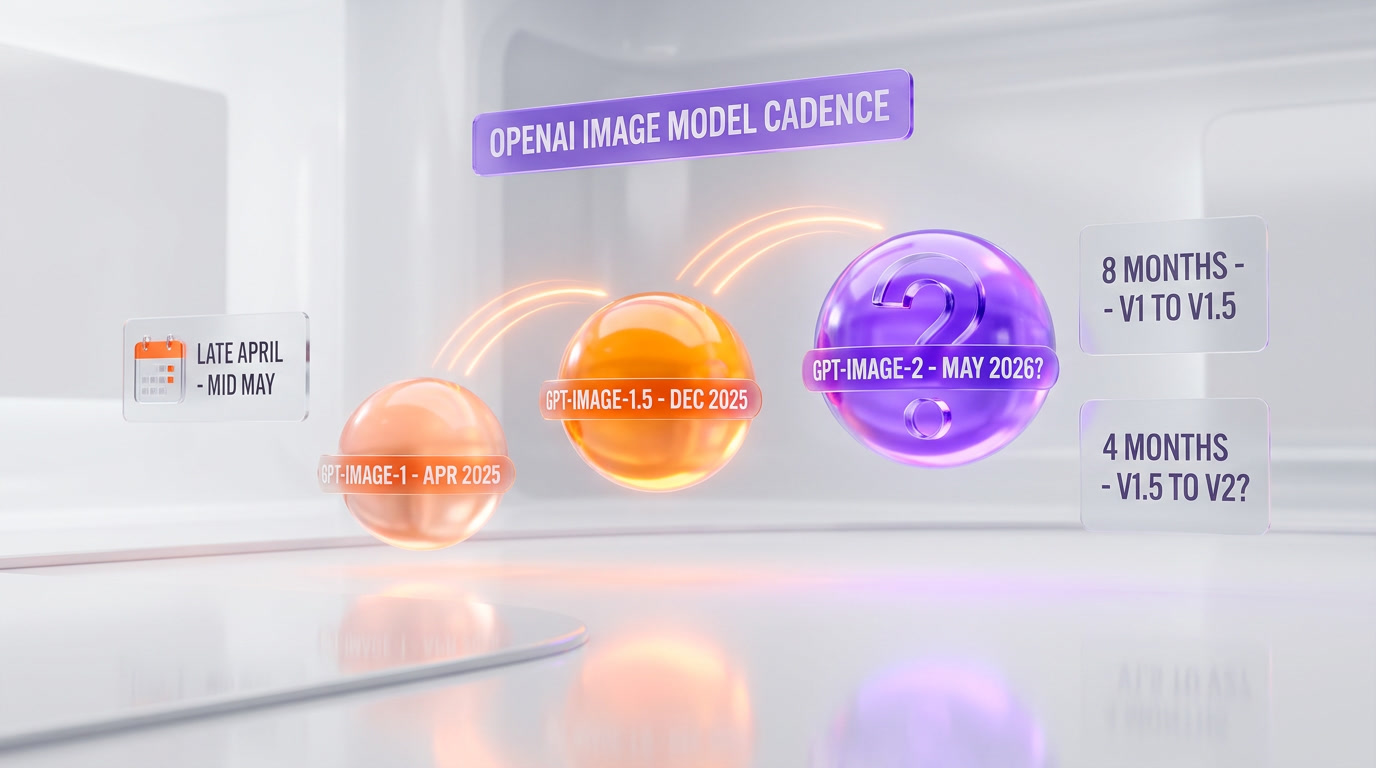

Nobody has confirmed a date. OpenAI has neither confirmed nor denied the aliases. But the cadence argues for a near-term release:

- gpt-image-1: shipped April 2025

- gpt-image-1.5: shipped December 2025 (~8 months later)

- gpt-image-2: arena-tested April 2026 (~4 months after 1.5)

If OpenAI follows its recent GPT-language-model pattern — arena test, days of internal review, then a ChatGPT rollout followed by API access one to three weeks later — the plausible window lands late April through mid-May 2026. The getimg.ai analysis published April 9 reached the same conclusion independently.
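The cadence arithmetic above can be checked with a short sketch. Only the months come from the reporting; the day-of-month values for the two shipped models are placeholders assumed for illustration, and `months_between` is a hypothetical helper that counts calendar months only.

```python
from datetime import date


def months_between(earlier: date, later: date) -> int:
    """Whole calendar months from one date to another (days are ignored)."""
    return (later.year - earlier.year) * 12 + (later.month - earlier.month)


# Day values for the first two entries are assumed; the article gives months only.
milestones = [
    ("gpt-image-1 shipped", date(2025, 4, 1)),
    ("gpt-image-1.5 shipped", date(2025, 12, 1)),
    ("gpt-image-2 arena test", date(2026, 4, 4)),
]

gaps = [
    months_between(prev[1], curr[1])
    for prev, curr in zip(milestones, milestones[1:])
]
print(gaps)  # [8, 4]
```

The gap halves from eight months to four, which is the basis for reading the cadence as accelerating.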

There is also external pressure. Anthropic's $800B IPO push (we covered it here) put OpenAI behind on revenue for the first time. The departure of Kevin Weil, Bill Peebles, and Srinivas Narayanan, combined with the Sora shutdown, the adult-mode reversal, and the recent GPT-5.4-Cyber launch, suggests an OpenAI rebuilding its product momentum quarter by quarter. A polished GPT-Image-2 release in the next four to six weeks would be squarely on that trajectory.

What This Means for Different Users

For creators and designers

If the community reports hold up at scale, GPT-Image-2 removes three of the remaining reasons to keep a Midjourney-plus-Ideogram-plus-Photoshop stack. A single model that handles photorealistic portraits, readable in-scene text, and correct brand environments collapses the workflow. Expect to see Midjourney double down on art-direction controls (styles, moods, camera languages) where it still has a differentiation story, and Ideogram to emphasize its text consistency across pages and spreads.

For developers and API consumers

If OpenAI ships a v2 API endpoint with pricing in line with the current gpt-image-1.5 rate (roughly $0.04 per image for standard quality), agent frameworks and automation pipelines gain a credible screenshot-and-UI-generator that does not require a human retouch pass. That unlocks a specific category of programmatic content: social thumbnails with on-brand typography, product mockups with accurate labels, and documentation screenshots with readable interfaces.
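For a rough sense of what that rate would mean for an automation pipeline, here is a small cost sketch. It assumes the approximate $0.04 per-image figure quoted above carries over unchanged, which is speculation since no GPT-Image-2 pricing has been announced; `batch_cost` is a hypothetical helper, not part of any SDK.

```python
from decimal import Decimal

# Assumed rate: the article's approximate gpt-image-1.5 standard-quality price.
# GPT-Image-2 pricing is unannounced, so treat this as a placeholder.
ASSUMED_RATE_USD = Decimal("0.04")


def batch_cost(num_images: int, rate: Decimal = ASSUMED_RATE_USD) -> Decimal:
    """Estimated spend in USD for generating num_images at a flat per-image rate."""
    if num_images < 0:
        raise ValueError("num_images must be non-negative")
    return rate * num_images


for n in (100, 1_000, 10_000):
    print(f"{n:>6} images -> ${batch_cost(n)}")
```

`Decimal` is used instead of floats so the per-image rate multiplies out exactly. Swapping in a different `rate` shows how sensitive a thumbnail or mockup pipeline would be to the final price.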

We expect pricing to be announced at release, not before. The current getimg.ai aggregator plans start at $8 per month with commercial rights included.

For everyone else

If you use ChatGPT Plus or Pro, you will almost certainly see the upgrade hit the web interface first, the same way previous image-model upgrades landed inside the default chat experience. No config changes. The model simply gets better one morning.

The Bigger Picture: Image Generation in April 2026

The leak reframes the competitive dynamic. For six months, Google has been the image leader on most public benchmarks, with Nano Banana Pro and Imagen 4 anchoring the top of LMArena. The OpenAI camp has been behind on headline performance since the middle of 2025.

If GPT-Image-2 lands and performs at the level community testers reported during the brief LMArena window, the image-generation race moves back to a two-horse format between OpenAI and Google, with Midjourney, Ideogram, and Recraft fighting for specialist verticals rather than frontier-model status. Anthropic, notably, still has no dedicated image generator, which is strategically significant given its recent financial trajectory.

For the full current state of Google's image stack, see our Google AI Studio guide. For context on OpenAI's rough Q1 2026, our Microsoft MAI analysis and the Frontier Model Forum espionage pact both frame the competitive pressure driving this release cycle.

What We Are Watching For

- Official OpenAI announcement window — we expect late April to mid-May 2026

- API pricing — whether it lands at or below gpt-image-1.5's rate

- Safety guardrails — text rendering this strong increases the misinformation surface; OpenAI's content policy update will matter

- Nano Banana 3 response — Google's cadence suggests a counter within 60 to 90 days

- Real Elo scores — once the model ships publicly under its real name on LMArena, the community rating will settle this debate one way or the other

Our Take

If the community reports are representative (and four independent analyses across Frontierbeat, The AI Corner, MindStudio, and getimg.ai point the same direction), GPT-Image-2 is the most significant image-generation jump since DALL-E 3. The text-rendering-inside-scenes capability alone is the kind of upgrade that unlocks new use cases rather than just improving existing ones.

We are skeptical on one axis: LMArena is a blind preference benchmark, so its results reflect the prompts that voters find interesting enough to submit. A model that wins on the kind of prompts community testers choose (viral, complex, text-heavy) might not win at the same rate on the quieter real-world prompts ChatGPT users actually submit. That question gets answered fast, though, once the model is in the default chat experience and gets tens of millions of daily requests across every prompt distribution imaginable.

We will update this report the moment OpenAI confirms release details.

Frequently Asked Questions

What is GPT-Image-2?

GPT-Image-2 is OpenAI's unreleased next-generation image generation model, which briefly appeared on LMArena on April 4, 2026, under three codenames (maskingtape-alpha, gaffertape-alpha, packingtape-alpha) before being pulled within hours. Community testers reported major improvements in text rendering inside scenes, elimination of the signature warm yellow color cast from GPT-Image-1, and dramatically improved world knowledge on branded environments.

When will GPT-Image-2 be released?

OpenAI has not announced a date. Based on the company's release cadence (gpt-image-1 in April 2025, gpt-image-1.5 in December 2025), and the pattern of submitting codename-stage models to LMArena shortly before public rollout, the likely window is late April through mid-May 2026. ChatGPT access will almost certainly ship first, with API access following within one to three weeks.

Is GPT-Image-2 better than Google Nano Banana Pro?

Community testers during the LMArena window reported that the leaked model outperformed Nano Banana Pro simultaneously on photorealism, text rendering, and world knowledge — a rare sweep. As of April 9, 2026, Nano Banana 2 still ranks #1 on the public LMArena text-to-image leaderboard, with gpt-image-1.5 at #2. The leaked "tape" models were removed before enough blind votes accumulated to produce a public Elo score.

What were the three leaked codenames?

maskingtape-alpha, gaffertape-alpha, and packingtape-alpha. All three aliases followed the same "tape" naming convention and shared similar output characteristics, suggesting three variants of the same underlying model being blind-tested in parallel. OpenAI has neither confirmed nor denied ownership.

Who first spotted the GPT-Image-2 leak?

Pieter Levels (@levelsio) was among the first to publicly flag the models on April 4, 2026, noting they showed "extremely good world knowledge and great text rendering." Justine Moore from Andreessen Horowitz, along with @Angaisb_, @synthwavedd, and @blakeir, followed with side-by-side comparisons within hours. All three aliases were removed from LMArena by the morning of April 5.

Why does the yellow color cast matter?

GPT-Image-1 produced a recognizable warm yellow color cast on nearly every output — skin tones drifted orange, white walls glowed cream, sunsets saturated hot. The filter became so consistent it leaked image provenance the same way an Instagram filter does. Community testers reported the yellow cast is eliminated in the leaked model, with portraits described as "indistinguishable from real photographs."

Can GPT-Image-2 actually render readable text?

According to community generations during the brief LMArena window, yes. Testers produced handwritten medical notes with realistic penmanship, Minecraft scenes with correctly spelled crafting recipes, and comic book panels with readable speech bubbles integrated into the artwork rather than floating on top. One viral generation, a fake "Claude Opus 5 Internal Document" embedded in a Minecraft scene, accumulated over 439,000 views as a proof point.

How much will GPT-Image-2 cost?

No official pricing has been announced. OpenAI's current gpt-image-1.5 model sells through the API at roughly $0.04 per image for standard quality. Third-party aggregators like getimg.ai currently bundle OpenAI image models starting at $8 per month with commercial rights. Final pricing will be announced at release.

What can GPT-Image-2 do that Midjourney v7 cannot?

Community reports indicate three specific capabilities where the leaked model reportedly outperforms Midjourney v7: readable text integrated into scenes (Midjourney still struggles with anything beyond short labels), accurate branded environments (IKEA, Windows UI, YouTube UI were generated at a level that passed as real screenshots), and no warm color cast on portraits. Midjourney retains advantages in artistic direction, style control, and aesthetic curation.

Will GPT-Image-2 be available on API at launch?

Based on OpenAI's recent release pattern, the ChatGPT web and mobile experience receives image upgrades first, with API access following between one and three weeks later. We expect GPT-Image-2 to follow the same sequence: ChatGPT Plus, Pro, and Team users will likely see the upgrade before developers get an endpoint.

Sources

- Frontierbeat (April 5, 2026) — GPT-Image-2 Leaked: OpenAI's Unreleased Model Briefly Appeared Online

- The AI Corner — GPT Image 2 Leaked: Prompts and Workflow Guide 2026

- MindStudio — What is GPT-Image-2

- getimg.ai (April 9, 2026) — GPT Image 2: Rumours, Leaks & Release Date (2026)

- Community reports: @levelsio, Justine Moore (a16z), @Angaisb_, @synthwavedd, @blakeir on X

- LMArena public leaderboard snapshot, April 9, 2026
