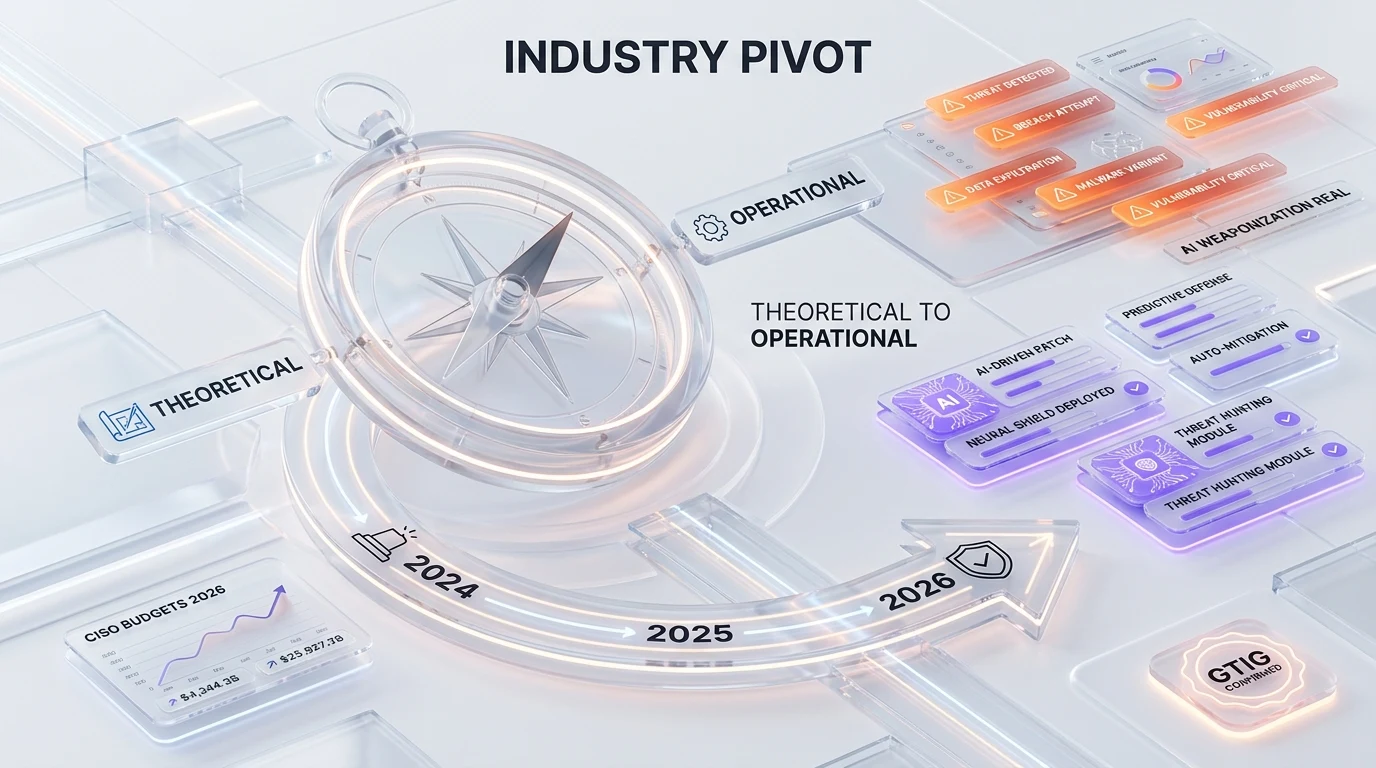

On May 11, 2026, Google's Threat Intelligence Group (GTIG) confirmed the first AI-built zero-day used in a real mass-exploit attempt. Threat actors used artificial intelligence to find AND weaponize a Python script flaw that bypassed two-factor authentication on an open-source system. GTIG analyst John Hultquist, of Google's Mandiant unit, told Bloomberg the disclosure is the "tip of the iceberg." North Korea's APT45 has been running AI through thousands of exploit validations; Chinese state actors are experimenting in parallel. Coupled with Anthropic's Mythos misuse disclosure roughly five weeks earlier, where the model was used to surface decades-old OpenBSD zero-days, the disclosure shifts the industry's AI-weaponization debate from theoretical to operational.

TL;DR — what GTIG confirmed on May 11, 2026

- The disclosure: Google's Threat Intelligence Group (GTIG) confirmed the first artificial-intelligence-built zero-day used in the wild, in a mass-exploit attempt on an open-source system.

- The flaw: A Python script vulnerability bypassing two-factor authentication. Threat actors used AI to both DISCOVER and WEAPONIZE the bug — not just write phishing emails around an existing CVE.

- The voice: John Hultquist, an analyst at Google's Mandiant unit and GTIG, called the disclosure "tip of the iceberg" in a Bloomberg interview.

- APT45 (North Korea): Running AI through thousands of exploit validations to stress-test which attack chains actually work end-to-end. Per Fortune's May 12 follow-up, GTIG cited "notable activity among actors associated with China and North Korea."

- China state actors: Experimenting with similar mass-validation pipelines in parallel — though GTIG did not name a specific Chinese threat group with the same confidence as APT45.

- Industry context: Anthropic delayed Mythos in April after the model was used to expose zero-days in OpenBSD that had sat unpatched for 27 years. The model has since shipped in a limited-access fashion.

- Why it matters: Every threat-intel briefing through 2025 framed AI-as-attacker as "expected within 12 to 18 months." GTIG just compressed that window to "shipped."

What happened

On May 11, 2026, Bloomberg published the first report citing Google Threat Intelligence Group on a confirmed AI-built zero-day exploited in the wild. The next day, Fortune ran an aligned follow-up summarizing the disclosure and naming Anthropic Mythos as the closest analog still under partial wraps. Both pieces converged on the same load-bearing claim: threat actors did not use AI as a force-multiplier around an existing CVE. They used AI to find the vulnerability inside a Python script handling 2FA, and to generate the exploit code that bypassed it. That is the threshold the industry has been watching since OpenAI's GPT-4 system card in 2023 first warned about "uplift" in offensive security.

GTIG's framing — through John Hultquist's "tip of the iceberg" quote and the broader pattern around APT45 mass validations — is that the May 11 disclosure is not a single incident. It is a sample point on a curve. The curve has been climbing for at least eighteen months in private threat-intel feeds. What changed in May 2026 is that a Tier-1 vendor put its name on the disclosure publicly.

For threat-intel teams reading this, the operational implication is immediate. The detection assumption that "an exploit chain takes humans weeks to construct" no longer holds for state-aligned actors. The defensive assumption that AI patch tooling — Anthropic's Claude Security, OpenAI's GPT-5.5-Cyber, GitHub Copilot Autofix, Snyk DeepCode AI — has time to catch up no longer holds either. Both sides are now running on the same clock.

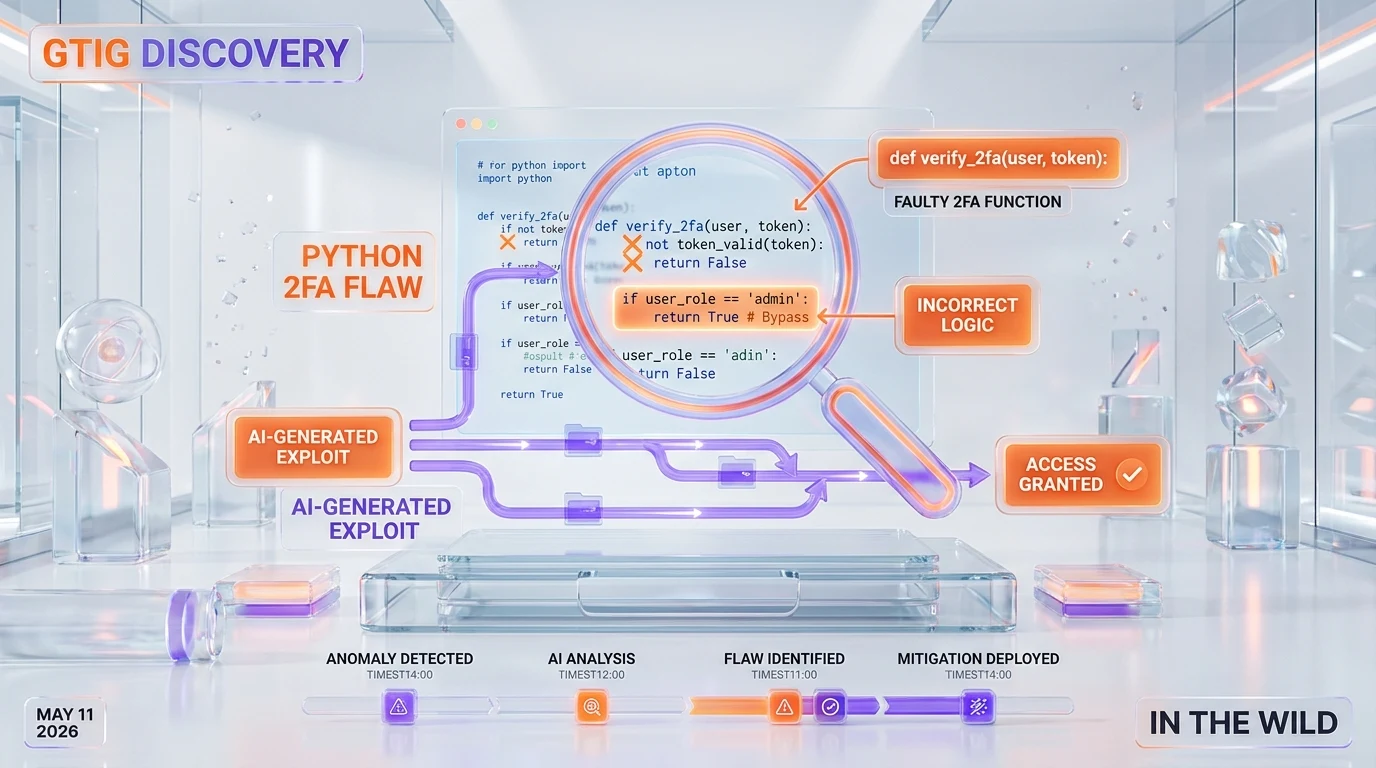

Anatomy of the AI-built zero-day GTIG named

GTIG's public characterization stayed at a deliberately abstracted level. No CVE number, no named victim, no exact open-source project. That is consistent with responsible disclosure practice when the underlying flaw still has remediation gaps to coordinate. What GTIG did confirm:

The flaw type — Python 2FA bypass

The vulnerability sat inside a Python script that implemented two-factor authentication on an open-source system. 2FA bypasses are a high-value vulnerability class because they collapse the entire identity-and-access stack — a single working bypass usually opens up downstream lateral movement across the same identity surface. AI weaponizing a 2FA bypass specifically is not a coincidence. It is an efficient frontier choice from the offensive perspective: highest blast radius per exploit construction hour.

The role of AI — discovery AND weaponization

This is the load-bearing distinction. Three prior "AI used in cyber attack" stories that hit the press in 2024 and 2025 — including phishing-content generation for the Cl0p ransomware crew, recon-summarization for North-Korean Lazarus operations, and a Russian GRU influence-content workflow — all involved AI as a productivity layer around existing human-found vulnerabilities. GTIG's May 11 disclosure breaks that pattern. The AI tool both surfaced the 2FA flaw inside a Python script and generated the working exploit code. That sequence is what the industry has been waiting to see confirmed by a Tier-1 vendor.

Why "in the wild" matters more than the lab demonstrations

Lab demonstrations of AI-built exploits have existed since 2023. Researchers at Carnegie Mellon's CyLab, DARPA AI Cyber Challenge entrants, and independent red-team consultancies have published proof-of-concept work showing LLMs constructing working exploit code under controlled conditions. None of those were "in the wild." GTIG putting the phrase "in a mass-exploit attempt" into a public statement is the line that separates research from operational threat intelligence. Anthropic's defensive answer, the Claude Security public beta, shipped only on April 30, 2026. The offense reached "in the wild" eleven days later.

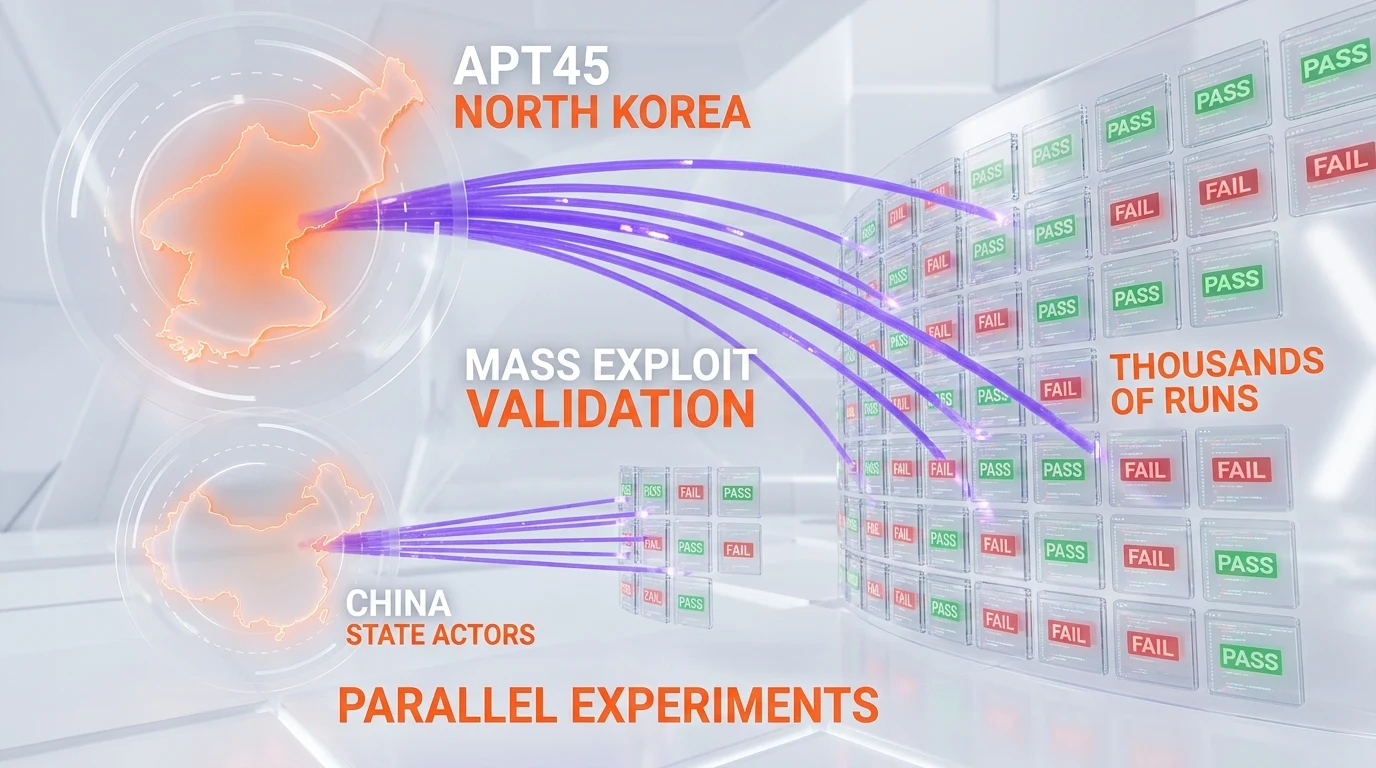

APT45 and the mass exploit validation pipeline

The specific North-Korean threat group GTIG highlighted is APT45 — a state-aligned cluster historically tracked for financial-sector cyber-espionage and cryptocurrency theft funding the Kim regime. Mandiant has tracked APT45 since 2019. What is new is the operational tempo. Per the GTIG disclosure cycle, APT45 has been "running AI through thousands of exploit validations" — a pipeline where AI generates candidate exploit chains, attempts them against test targets, classifies the failures and successes, and then iterates on the failed branches.

The economic logic of mass validation

Mass validation at AI speed compresses the cost of the offensive R&D cycle from human-weeks to machine-hours. A traditional state-aligned offensive team would dedicate a senior exploit developer to a single high-value target for two to six weeks. APT45 running thousands of variants through an AI pipeline replaces that linear allocation with parallelized exploration. The cost per validated exploit drops by an order of magnitude — possibly more, depending on how good the model is at scoring its own outputs without supervision.

Why this is structurally different from "fuzzing"

Fuzzing has existed as an automated vulnerability-discovery method for more than three decades. Google's own OSS-Fuzz has surfaced more than 36,000 bugs in open-source projects since 2016. What APT45's AI pipeline does that fuzzing does not is generate contextual exploit chains: code that reasons about the target's architecture, picks plausible parameter values, and pivots when an attempt fails. Fuzzers throw random or mutated inputs, at best steered by code coverage. AI-built exploit pipelines throw reasoned inputs, each one informed by why the prior attempt failed.
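To make the contrast concrete, here is a minimal sketch of what a plain mutation fuzzer does. The target `parse_record` is a hypothetical toy parser invented for illustration, not the system GTIG described, and the loop is deliberately naive: it flips one byte at a time and records what breaks, with no model of why an input failed.

```python
import random


def parse_record(data: bytes) -> int:
    """Toy target (hypothetical): first byte is a declared length, the rest is payload."""
    declared = data[0]
    payload = data[1:]
    if declared > len(payload):
        # Stand-in for a real bug: the parser trusted the length field.
        raise ValueError("declared length exceeds payload")
    return sum(payload[:declared])


def mutate(seed: bytes, rng: random.Random) -> bytes:
    """Overwrite one randomly chosen byte with a random value."""
    buf = bytearray(seed)
    buf[rng.randrange(len(buf))] = rng.randrange(256)
    return bytes(buf)


def fuzz(seed: bytes, iterations: int, rng: random.Random) -> list[bytes]:
    """Return every mutated input that made the target raise."""
    crashes = []
    for _ in range(iterations):
        candidate = mutate(seed, rng)
        try:
            parse_record(candidate)
        except ValueError:
            crashes.append(candidate)
    return crashes


crashes = fuzz(seed=b"\x03abc", iterations=1000, rng=random.Random(0))
print(f"{len(crashes)} crashing inputs out of 1000 mutations")
```

The fuzzer finds the length-field bug only because some mutations happen to land on byte 0. Nothing in the loop learns from a failed attempt, which is the gap the article attributes to AI-driven pipelines.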

China state actors running parallel experiments

The Fortune coverage on May 12 cited GTIG's framing of "notable activity among actors associated with China and North Korea." GTIG did not name a specific Chinese threat group with the same confidence as APT45. That asymmetry matters. It suggests GTIG had cleaner forensic attribution on APT45 — possibly through infrastructure overlap with prior North-Korean operations — but only pattern-level observation of similar workflows out of China.

The plausible Chinese groups

Without GTIG naming names, the list of candidate Chinese state-aligned clusters with the operational maturity to adopt AI weaponization workflows is short. APT41 (financial-and-espionage dual-mandate, tracked by Mandiant), APT10 (Stone Panda, MSS-aligned), and Volt Typhoon (critical-infrastructure pre-positioning, called out by CISA in 2023 and 2024) are the realistic candidates. Each has demonstrated the budget and personnel depth to run mass-validation infrastructure. None has been publicly tied to AI-built zero-days yet, but the GTIG framing leaves the door open.

Why parallel adoption is the worst-case shape

If APT45 alone were running AI weaponization, the defensive response could concentrate around one threat actor's known tradecraft. If APT45 plus several Chinese state-aligned clusters are running it in parallel, the defensive response has to assume a fan-out across multiple regional adversaries with non-overlapping target portfolios. That changes how defensive teams allocate analyst attention, threat-intelligence subscriptions, and incident-response retainer dollars.

Mythos comparison: defense five weeks behind offense

The closest defensive analog the industry has discussed publicly is Anthropic's Mythos model. Anthropic delayed Mythos's planned rollout in April 2026 specifically because internal testing showed the model could be used to surface decades-old unpatched zero-days. The clearest example Anthropic disclosed: the model surfaced bugs in OpenBSD that had sat in the codebase for 27 years. Anthropic later shipped Mythos in a limited-access fashion, while OpenAI moved in the opposite direction with the EU Cyber Action Plan and GPT-5.5-Cyber.

Mythos was the defensive frame; GTIG is the offensive proof

Mythos exists primarily as a defensive tool — surface latent vulnerabilities so they can be patched. Anthropic gated it specifically to keep it out of offensive hands. GTIG's May 11 disclosure demonstrates that even without access to a Mythos-class frontier model, threat actors built a working AI weaponization pipeline using whatever they had — likely a combination of open-weight models, custom fine-tuning, and orchestration code. The Mythos gating did not slow the offensive timeline. It only meant defense did not get the lead it expected.

Five weeks is the gap that matters

Anthropic delayed Mythos in early April. Anthropic shipped Claude Security in public beta on April 30. GTIG confirmed an AI-built zero-day in the wild on May 11. The gap between defense's flagship offering and offense's first confirmed live exploit is eleven days. The gap between the first defensive-tool delay (Mythos) and the first confirmed offensive-tool deployment in the wild is roughly five weeks. That is the structural problem: defense is iterating in research previews while offense is iterating in production targeting.
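The two gaps can be checked with simple date arithmetic. The sketch below uses only the dates stated in this article; the exact day of the Mythos delay is not given, so the second calculation just confirms that five weeks before May 11 falls in early April.

```python
from datetime import date, timedelta

claude_security_beta = date(2026, 4, 30)  # public beta date stated in the article
gtig_disclosure = date(2026, 5, 11)       # Bloomberg report date stated in the article

# Gap between the defensive beta and the confirmed in-the-wild exploit.
beta_to_disclosure = (gtig_disclosure - claude_security_beta).days

# Five weeks before the disclosure, to check the "early April" framing.
five_weeks_before = gtig_disclosure - timedelta(weeks=5)

print(beta_to_disclosure)             # 11
print(five_weeks_before.isoformat())  # 2026-04-06
```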

What this means for CISO budgets and the AI security stack

The GTIG disclosure resets several assumptions that have been priced into security budgets through Q1 2026.

Assumption 1 — AI-as-attacker was 12 to 18 months out

Every CISO briefing deck since the OpenAI o1 release in late 2024 has framed AI-assisted attacker capability as "expected within 12 to 18 months." Most enterprise threat-intel subscriptions priced their forward-looking sections around that timeline. GTIG's May 11 confirmation turns that forecast into a retrospective. Budget arguments built on "we have time to build" no longer hold.

Assumption 2 — AI patch tooling would arrive in time

The defensive narrative around Claude Security, GPT-5.5-Cyber, GitHub Copilot Autofix, Snyk DeepCode AI, and Veracode Fix has been "the defensive AI race is keeping pace with the offensive AI race." The May 11 disclosure does not invalidate that narrative — but it does invalidate the comfortable version of it where defenders get to roll out at enterprise procurement speed. Procurement teams now have to compress evaluation timelines for AI-native SAST and AppSec tools by six to twelve months.

Assumption 3 — EDR/XDR stacks could continue running detection-first

Endpoint detection and response from CrowdStrike, SentinelOne, and Microsoft Defender for Endpoint has historically prioritized post-execution behavioral detection. An AI-built zero-day that successfully bypasses 2FA does not need to run noisy post-exploitation tooling to monetize the access. It can pivot directly to data exfiltration through legitimate identity-and-access pathways. Detection-first stacks have to add identity-anomaly and session-graph analysis upstream of the endpoint — which most enterprise deployments have not budgeted for yet.
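One way to picture identity-anomaly analysis upstream of the endpoint is a check over authentication events that flags any session issued a token with no recorded second-factor success. This is a minimal sketch under assumptions: the `AuthEvent` schema and the event names (`password_ok`, `mfa_ok`, `token_issued`) are hypothetical and would need mapping to whatever your identity provider actually logs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AuthEvent:
    ts: int          # seconds since epoch
    session_id: str
    user: str
    kind: str        # hypothetical values: "password_ok", "mfa_ok", "token_issued"


def sessions_missing_mfa(events: list[AuthEvent]) -> list[str]:
    """Return session IDs that received a token with no earlier mfa_ok event."""
    mfa_done: set[str] = set()
    flagged: list[str] = []
    for ev in sorted(events, key=lambda e: e.ts):
        if ev.kind == "mfa_ok":
            mfa_done.add(ev.session_id)
        elif ev.kind == "token_issued" and ev.session_id not in mfa_done:
            if ev.session_id not in flagged:
                flagged.append(ev.session_id)
    return flagged


events = [
    AuthEvent(100, "s1", "alice", "password_ok"),
    AuthEvent(105, "s1", "alice", "mfa_ok"),
    AuthEvent(106, "s1", "alice", "token_issued"),
    AuthEvent(200, "s2", "bob", "password_ok"),
    AuthEvent(201, "s2", "bob", "token_issued"),  # no second factor recorded
]
print(sessions_missing_mfa(events))  # ['s2']
```

A rule like this runs on identity logs rather than endpoint telemetry, so it can fire even when the attacker never executes noisy post-exploitation tooling.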

What plays out next — the four scenarios

Reading the GTIG disclosure against Mythos, Claude Security, and the OpenAI EU Cyber Action Plan, four plausible 90-day scenarios sit on the table.

Scenario 1 — Defensive AI tools accelerate dramatically

Anthropic, OpenAI, Google, and Microsoft compress their defensive-tooling roadmaps to ship aggressively into the gap. Claude Security adds Team and Max tiers ahead of plan. GPT-5.5-Cyber expands beyond the EU pilot. GitHub Copilot Autofix moves from feature to product. This is the optimistic case. It assumes the defensive vendors are willing to absorb the marginal-revenue cost of acceleration.

Scenario 2 — Open-source weaponization spreads

If APT45's AI weaponization pipeline runs on open-weight models (likely some combination of Llama, Mistral, Qwen, and DeepSeek fine-tunes), the marginal cost of replicating the workflow drops to essentially zero for other state-aligned actors. Within 90 days, Iranian (APT34, APT35), Russian (APT28, Sandworm), and additional Chinese clusters could all be confirmed as running similar pipelines. This is the realistic-pessimistic case.

Scenario 3 — Regulatory response moves fast

The EU AI Act's high-risk classification arguments around offensive cyber capabilities get re-invoked. The U.S. Cyberspace Solarium Commission and CISA push for enforceable disclosure obligations on AI vendors. Anthropic's Responsible Scaling Policy framework either becomes a de facto industry standard or gets superseded by a regulatory-first version. This is the political-response case. The historical base rate for fast regulatory response to cyber-disclosure events is poor.

Scenario 4 — Nothing changes operationally

The disclosure absorbs into the news cycle. Security teams add "AI-built zero-day" to their risk registers. Budgets do not move materially. Some vendors run thought-leadership campaigns. The next confirmed in-the-wild AI-built zero-day arrives in eight to twelve weeks because the underlying capability is in deployment. This is the null hypothesis. It is also the most consistent with the industry's historical response curve.

Why "tip of the iceberg" is the operative phrase

John Hultquist's "tip of the iceberg" framing deserves a closer read. Hultquist is not a hype-prone analyst. His career, through iSIGHT Partners, FireEye, Mandiant, and now Google, has been built on conservative, evidence-anchored threat-intelligence framings. When Hultquist says "tip of the iceberg" in a Bloomberg interview, the operational read is that GTIG has multiple additional cases it cannot yet disclose publicly because attribution work or remediation coordination is still in flight.

The disclosure pacing tells you what is coming

Threat-intelligence disclosure pacing usually follows a predictable sequence. First, a single named incident with conservative attribution. Then, two to four weeks later, a quarterly threat report folding the incident into broader pattern analysis. Then a Black Hat or DEF CON briefing in August that names the technical details with the disclosure cycle complete. If May 11 is the first beat of that sequence, expect a GTIG quarterly threat report in mid-June and a Black Hat briefing in early August expanding the picture significantly.

The "iceberg" implies APT45 is not alone

Hultquist's specific phrasing does not say "this one incident is the tip of an iceberg of APT45 activity." It says the broader phenomenon of AI weaponization is the iceberg. That choice of words implies GTIG has visibility on multiple threat actors, not one. The next 90 days of GTIG disclosures should be read with that frame in mind.

The defensive playbook for the next 30 days

For security leaders reading this in the week after the GTIG disclosure, the immediate action set is shorter than the headlines suggest. Five concrete moves matter in the next 30 days.

1. Tighten 2FA implementation reviews

The specific vulnerability class GTIG named is 2FA bypass in a Python implementation. Inventory every internal and vendor-facing 2FA implementation. Pay special attention to Python-based authentication services. Prioritize implementations that handle TOTP code validation, recovery flow, and session token issuance — those are the three subsurfaces where 2FA bypasses cluster historically.
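As a reference point for the TOTP-validation subsurface, here is a minimal sketch of a verifier with two properties reviewers commonly look for: constant-time comparison and rejection of replayed codes. It follows the HOTP/TOTP construction from RFC 4226 and RFC 6238 using only the standard library. The `TotpVerifier` class, its in-memory `last_counter` state, and the one-step window are illustrative assumptions, not a description of the flawed implementation GTIG referenced.

```python
import hashlib
import hmac
import struct


def totp(secret: bytes, counter: int, digits: int = 6) -> str:
    """HOTP value (RFC 4226) for a time-step counter, using HMAC-SHA1."""
    mac = hmac.new(secret, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


class TotpVerifier:
    """Hypothetical per-user verifier; real systems persist last_counter."""

    def __init__(self, secret: bytes, step: int = 30, window: int = 1):
        self.secret = secret
        self.step = step
        self.window = window
        self.last_counter = -1  # highest time step already accepted

    def verify(self, submitted: str, now: int) -> bool:
        current = now // self.step
        first = max(0, current - self.window)
        for counter in range(first, current + self.window + 1):
            if counter <= self.last_counter:
                continue  # replay protection: never accept a used time step
            expected = totp(self.secret, counter)
            # Constant-time comparison avoids leaking matching digits via timing.
            if hmac.compare_digest(expected.encode(), submitted.encode()):
                self.last_counter = counter
                return True
        return False


verifier = TotpVerifier(b"12345678901234567890")  # RFC test secret
print(verifier.verify("287082", now=59))  # True: RFC 4226 value for counter 1
print(verifier.verify("287082", now=59))  # False: same code replayed
```

In a review, the questions to ask of a real implementation are the same ones this sketch answers: is the comparison constant-time, is the acceptance window bounded, and is a code rejected once it has been used.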

2. Re-evaluate AI patch tooling RFPs in flight

If your security team has an active RFP for AI-native SAST or AppSec tooling, the GTIG disclosure changes the evaluation criteria. Specifically, ask each vendor for their position on AI-built exploit detection — not just AI-built patch generation. Vendors that conflate the two are not credible. The defensive product has to do something fundamentally different from the offensive one.

3. Run a tabletop exercise against the Python 2FA bypass scenario

Use the GTIG disclosure as the kickoff scenario for a 90-minute tabletop. Have the red team simulate an AI-built bypass against your specific 2FA stack. Have the blue team work through detection, incident response, and customer disclosure. The output matters less than the conversation about how detection assumptions hold up against AI-built exploit chains.

4. Update threat-intel vendor evaluation

If your threat-intel subscription is up for renewal, ask the vendor how they are tracking AI-built exploit pipelines specifically. The good answers will reference GTIG, Mandiant, CrowdStrike Falcon Intelligence, Microsoft Threat Intelligence Center, and the major government CERTs. The bad answers will repeat "we monitor AI threats" without specifics.

5. Brief the board with the right framing

The May 11 disclosure deserves a board-level briefing. The framing that lands is short: "The capability we have been warning about for 18 months is now confirmed in production by Google Threat Intelligence. Our defensive posture was built for a 12-month adoption curve. The curve compressed to zero. Here is what we are doing in the next 30 days." That framing earns the budget conversation. Vague AI-threat framing does not.

The strategic takeaway

The industry's AI-weaponization debate has been performative for two years. GTIG's May 11 disclosure makes it operational. Threat actors used AI to find AND build a zero-day for a Python 2FA bypass, and used it in a mass-exploit attempt in the wild. APT45 is running AI through thousands of exploit validations, and Chinese state actors are experimenting in parallel. Anthropic's Mythos disclosure five weeks earlier already signaled what was coming on the defensive side. The disclosure cycles have now aligned.

The strategic question is no longer whether AI weaponization is real. The strategic question is which defensive vendors can move at the same speed as APT45's pipeline. Claude Security shipping on April 30 was a relevant move. GPT-5.5-Cyber and the EU Cyber Action Plan are relevant moves. The PyTorch Lightning supply-chain attack three weeks earlier showed how exposed the upstream AI dependency chain already is. The Mexico water utility hack one week earlier showed how AI-assisted attacks land against operational targets. May 2026 will be remembered as the month the AI weaponization conversation stopped being theoretical.

For the rest of 2026, every CISO budget, every threat-intel briefing, and every AppSec procurement decision sits against a new operational baseline. Plan accordingly.

Frequently asked questions

What did Google's Threat Intelligence Group confirm on May 11, 2026?

Google's Threat Intelligence Group (GTIG) confirmed the first artificial-intelligence-built zero-day used in the wild: a Python script vulnerability bypassing two-factor authentication on an open-source system. Threat actors used AI to both discover the flaw and generate the working exploit code, then deployed it in a mass-exploit attempt. The disclosure ran in Bloomberg on May 11, and Fortune published an aligned follow-up the next day. GTIG analyst John Hultquist, of Google's Mandiant unit, called the disclosure the "tip of the iceberg."

What is an AI-built zero-day and how is it different from AI-assisted phishing?

An AI-built zero-day is a previously-unknown vulnerability that AI both discovered and weaponized. The model finds the flaw inside a target codebase and generates the working exploit code. AI-assisted phishing is the opposite end of the spectrum — AI writes the email content or scaffolds the recon, but the underlying vulnerability and exploit are human-found and human-built. The GTIG May 11 disclosure is the first publicly-confirmed case of the former. Prior AI-in-cyber-attack stories through 2024 and 2025 were exclusively the latter.

Who is APT45 and why is North Korea running mass exploit validations?

APT45 is a North-Korea-aligned threat group tracked publicly by Mandiant since 2019. The group has historically conducted financial-sector cyber-espionage and cryptocurrency theft to fund the Kim regime. Per GTIG's May 2026 disclosure, APT45 has been running AI through thousands of exploit validations: a pipeline where AI generates candidate exploit chains, attempts them, classifies the outcomes, and iterates. Mass validation at AI speed compresses offensive R&D cycles from human-weeks to machine-hours.

Which Chinese state actors are experimenting with AI weaponization?

GTIG referenced "notable activity among actors associated with China" but did not name a specific cluster with the same confidence as APT45. The realistic candidates are APT41 (financial-and-espionage dual-mandate), APT10 (Stone Panda, MSS-aligned), and Volt Typhoon (critical-infrastructure pre-positioning called out by CISA in 2023 and 2024). All three have the budget and personnel depth to run mass-validation infrastructure. None has yet been publicly tied to a specific AI-built zero-day, but GTIG's framing leaves the door open.

How does this relate to Anthropic Mythos?

Anthropic delayed Mythos's rollout in April 2026 specifically because internal testing showed the model could be used to surface decades-old unpatched zero-days, including bugs in OpenBSD that had sat in the codebase for 27 years. Anthropic later shipped Mythos in a limited-access fashion. The gap between Anthropic's defensive delay and GTIG's confirmation of an offensive deployment in the wild is roughly five weeks. The Mythos gating did not slow the offensive timeline — it only meant defense did not get the lead it expected.

What does "tip of the iceberg" mean in this context?

John Hultquist's phrasing implies GTIG has multiple additional cases it cannot yet disclose publicly because attribution work or remediation coordination is still in flight. Threat-intelligence disclosure pacing usually follows a sequence: a single named incident first, then a quarterly threat report folding the incident into broader pattern analysis, then a Black Hat or DEF CON briefing in August naming technical details. If May 11 is the first beat, expect a GTIG quarterly report in mid-June and an August briefing expanding the picture.

How is this different from fuzzing-based vulnerability discovery?

Fuzzing has existed as an automated vulnerability-discovery method for more than three decades. Google's own OSS-Fuzz has surfaced more than 36,000 bugs in open-source projects since 2016. What APT45's AI pipeline does that fuzzing does not is generate contextual exploit chains: code that reasons about the target's architecture, picks plausible parameter values, and pivots when an attempt fails. Fuzzers throw random or mutated inputs at a target, at best steered by code coverage. AI-built exploit pipelines throw reasoned inputs, each one informed by why the prior attempt failed.

What should CISOs do in the next 30 days?

Five concrete moves matter. First, tighten 2FA implementation reviews — inventory every internal and vendor-facing 2FA implementation, prioritize Python-based authentication services. Second, re-evaluate AI patch tooling RFPs and ask vendors how they detect AI-built exploits specifically. Third, run a tabletop exercise against the Python 2FA bypass scenario. Fourth, update threat-intel vendor evaluation by asking how they track AI-built exploit pipelines. Fifth, brief the board with the framing that an 18-month risk window just compressed to zero.

Does this mean every Python 2FA library is now vulnerable?

No. GTIG did not name the specific Python project or library. The disclosure stayed at an abstracted level consistent with responsible-disclosure practice when remediation coordination is still active. The actionable read is that 2FA bypasses cluster historically in three subsurfaces — TOTP code validation, recovery flow, and session token issuance. Security teams should prioritize review of those three subsurfaces across all 2FA implementations, not panic about a specific named library.

Will defensive AI tools catch up before more AI-built zero-days hit?

Probably not at parity. Anthropic Claude Security shipped its public beta on April 30, 2026, eleven days before the GTIG disclosure. OpenAI's EU Cyber Action Plan and GPT-5.5-Cyber are moving in the same direction. But the defensive AI tools are still in beta or pilot tiers, while the offensive pipelines are already in production targeting. Expect the next confirmed in-the-wild AI-built zero-day in eight to twelve weeks based on disclosure cadence patterns.

How does this connect to other AI-cyber incidents in 2026?

The May 11 GTIG disclosure sits on a curve of escalating AI-cyber incidents in 2026. The PyTorch Lightning supply-chain attack demonstrated how exposed the upstream AI dependency chain is. The Mexico water utility hack a week earlier showed AI-assisted attacks landing against operational targets — Dragos reported 17,000 lines of malware. The GTIG disclosure is qualitatively different because it confirms AI weaponization, not just AI-assistance, in a state-aligned offensive operation. The three incidents together reset the 2026 threat baseline.

Is this the start of mandatory AI vendor disclosure regulation?

Possibly, but regulatory response to cyber-disclosure events has historically been slow. The EU AI Act's high-risk classification arguments around offensive cyber capabilities will likely be re-invoked, and the U.S. Cyberspace Solarium Commission plus CISA may push for enforceable disclosure obligations on AI vendors. Anthropic's Responsible Scaling Policy framework either becomes a de facto industry standard or gets superseded by a regulatory-first version. None of that happens in 30 days. The realistic timeline for actual regulatory action is 12 to 24 months, well after multiple additional AI-built zero-day incidents will have surfaced.

External sources used in this analysis: