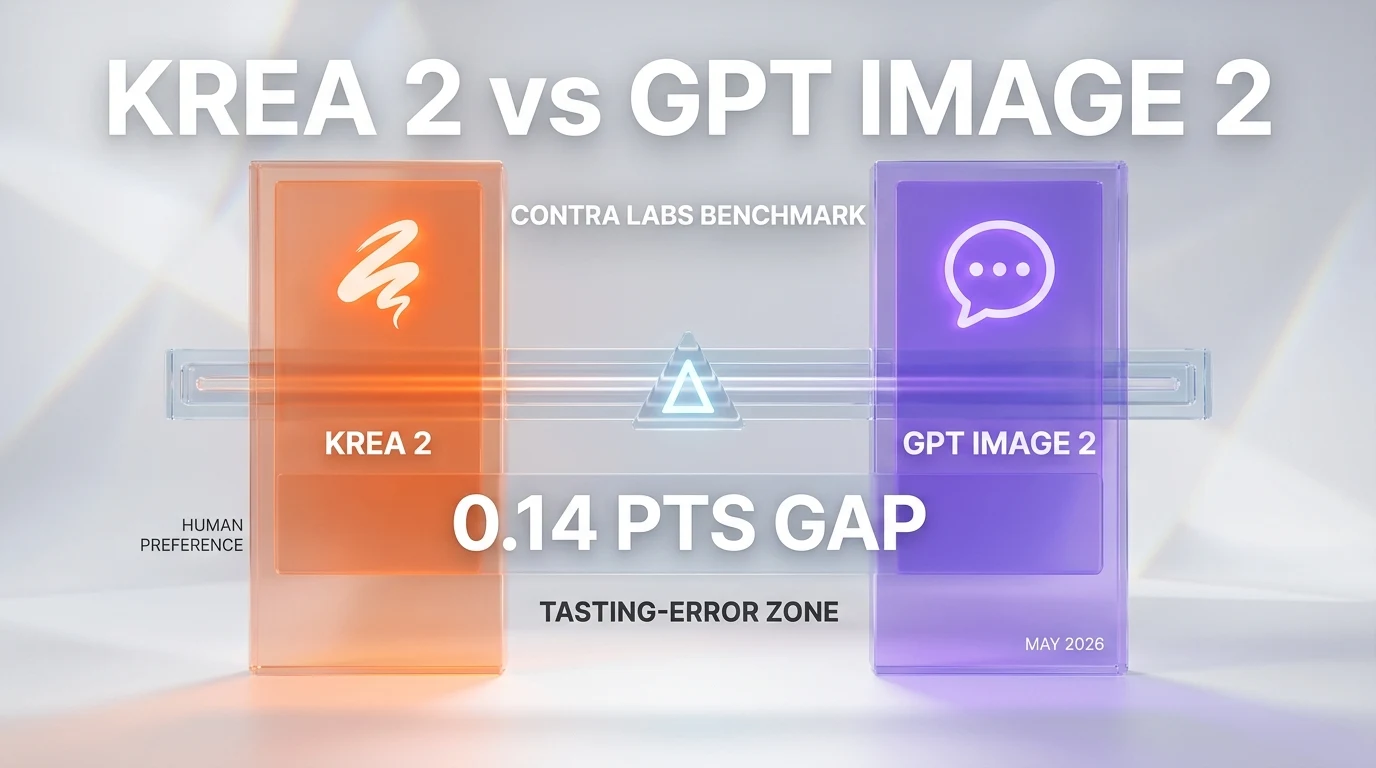

Krea released Krea 2 on May 12, 2026 — its first proprietary foundation image model, built from scratch with a style-transfer architecture at the core. Independent benchmarks from Contra Labs place Krea 2 within 0.14 points of GPT Image 2 on human-preference scoring, putting a creative-suite vendor inside frontier-lab distance for the first time. The pivot from aggregator to foundation model resets how the image-generation market gets priced.

For three years Krea sold a creative suite: a polished interface on top of someone else's models. Stable Diffusion, FLUX, Recraft, Ideogram, the usual deck. The market read Krea as a workflow product, not a model lab. May 12 changed that read. Krea 2 is "the first foundation model built completely from scratch" by the company, according to the launch post on krea.ai/blog/krea-2-image-model. The positioning is hard to miss: Krea no longer wants to be the IKEA of generative imagery. It wants to be the cabinetmaker.

The interesting move is not that Krea trained a model. Everyone with a Series B can train a model in 2026. The interesting move is that style transfer, the feature that historically lives inside ControlNet adapters, IP-Adapter weights, or expensive LoRA training runs, got pulled into the foundation model's own training instead of being bolted on afterward. Krea's own framing in the blog post: as much engineering effort went into the style transfer system as into the foundation model itself. Style stops being a prompt word and becomes a control surface.

What launched on May 12, 2026

Krea 2 is a text-to-image foundation model available to anyone with a free Krea account, accessible at krea.ai/image/k2. The model takes both text prompts and reference images as input. The reference images feed the style transfer pipeline, which exposes user-facing controls for style influence intensity, multi-style combination, and batch variation cohesion. Free signup, no paywall on the public endpoint at launch — a notable pricing decision in a market where Midjourney still asks $30 per month for V8 access and GPT Image 2 bills per image through the OpenAI API.

The architecture details Krea published are deliberately thin. No parameter count. No training-set disclosure. No reference to whether the model is a diffusion transformer, a rectified flow variant, or something post-diffusion. What Krea did emphasize is the design philosophy: AI as "raw, flexible, unopinionated, and unconstrained" rather than the polished safety-padded default that has come to dominate Adobe Firefly, Midjourney V8, and the consumer-facing tiers of Nano Banana Pro. The product framing reads as a direct critique of frontier-lab aesthetic centrism.

Style transfer as a primary control surface

Most foundation image models bolt style transfer on after the fact. Stable Diffusion 1.5 and SDXL leaned on external adapters such as ControlNet (for structural guidance) and IP-Adapter (for image prompts). FLUX.1 got Redux. The pattern: train the base model on text-image pairs, then plug in an external module to handle reference-image control. The module is always a second-class citizen. It works, but it works the way a USB hub works on a laptop: fine until you need bandwidth.

Krea 2's claim is that the style transfer system was trained alongside the foundation model. Same training run, same gradient updates, same loss landscape. That is a meaningful architectural choice if true, because it means style influence is not a post-hoc adapter — it is a native control input to the model. The user-facing implications: combining two style references should not collapse into the average of the two (the classic IP-Adapter failure mode). Strengthening a style should not also strengthen unrelated content from the reference. Reducing a style should not require iterative prompt surgery.

Pricing and availability at launch

Free access at launch is the most aggressive pricing decision in the image-generation market in 2026. The closest comparable is Black Forest Labs' FLUX.1 [schnell], which is free to download and run, but schnell is a step-distilled variant built for speed rather than the company's flagship. Krea 2 is positioned as the flagship. For Krea, the unit economics can work at scale if even one in twenty free users converts to a paid creative-suite tier, but only if the model serves images at a marginal cost well below the competition's.
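The conversion math can be sketched as a break-even serving cost per free image. Every input here is a hypothetical placeholder rather than a Krea figure (the subscription price, the number of images a free user generates per month, and the one-in-twenty conversion rate taken from the scenario above), and the model deliberately ignores churn, paid-user serving costs, and overhead.

```python
def break_even_cost_per_image(conversion_rate: float,
                              monthly_price: float,
                              free_images_per_user: float) -> float:
    """Highest serving cost per free image that expected subscription
    revenue can cover.

    Simplified on purpose: each free user is worth conversion_rate *
    monthly_price per month and consumes free_images_per_user images per
    month. Churn, paid-user serving costs, and overhead are ignored.
    """
    if free_images_per_user <= 0:
        raise ValueError("free_images_per_user must be positive")
    expected_revenue_per_free_user = conversion_rate * monthly_price
    return expected_revenue_per_free_user / free_images_per_user


# Hypothetical scenario: 1-in-20 conversion, a $10/month tier,
# 200 free images per user per month.
ceiling = break_even_cost_per_image(1 / 20, 10.0, 200)
print(f"break-even serving cost: ${ceiling:.4f} per image")
```

Doubling free usage halves the ceiling, which is why marginal serving cost matters so much for a free flagship.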

The full Krea creative suite, which integrates other models alongside Krea 2, runs on a tiered subscription. Free Krea 2 access works as a customer-acquisition funnel, the same playbook Leonardo.ai used through 2023 and 2024 to scale before pricing pressure forced enterprise upsell loops.

The aggregator-to-foundation pivot

Krea is the first creative-suite vendor to ship a foundation model. Every comparable platform (Leonardo, Recraft, Playground, Magnific) has stayed on the integrate-other-models side of the line. The reasoning is sensible. Training a competitive foundation model in 2026 costs somewhere between $5 million and $40 million in compute alone, before research salaries. The market read on creative-suite vendors has always been that their moat is workflow and UX, not raw model quality.

Krea's bet is that the moat from workflow alone shrank between 2024 and 2026. Midjourney launched on Discord, then shipped a web client, then native style controls. GPT Image 2 launched through ChatGPT, immediately swallowing the casual creative market. Adobe Firefly was in front of every Adobe Creative Cloud user the day it launched. The creative-suite vendors lost differentiation under pressure from above (the frontier labs got better at UX) and from below (open-weights models got better at quality). A foundation model as a defensive investment becomes rational.

The Contra Labs benchmark numbers

The 0.14-point gap to GPT Image 2 on the Contra Labs human-preference leaderboard is the most-cited number from the Krea 2 launch. What it means in practice: Contra Labs runs a forced-choice evaluation where human raters pick between two generated images for the same prompt, and the Elo-style score reflects win rate across thousands of comparisons. On that scale, a 0.14-point gap falls roughly within the margin of error for many prompt categories. It is not "Krea 2 ties GPT Image 2." It is "Krea 2 is close enough that the gap depends heavily on prompt selection."
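One way to see why a small gap can disappear into noise is to put a confidence interval on a single head-to-head win rate. The sketch below computes a 95% Wilson score interval for the share of blind pairwise votes one model wins. The vote counts are hypothetical, and this is a generic binomial check, not Contra Labs' actual methodology; it does not convert win rates into Contra Labs points.

```python
import math


def wilson_interval(wins: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial win rate (z=1.96 gives ~95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = wins / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half


# Hypothetical: one model wins 1,020 of 2,000 blind pairwise votes (51%).
low, high = wilson_interval(1020, 2000)
print(f"win rate 95% CI: {low:.3f} to {high:.3f}")
print("distinguishable from a coin flip:", not (low <= 0.5 <= high))
```

With these made-up counts, a 51% win rate still straddles 50%, which is the practical meaning of "close enough that the gap depends on prompt selection."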

For a first-generation foundation model from a creative-suite vendor, landing within the margin of error of the OpenAI flagship is genuinely impressive. The relevant comparison is Stability AI's first model versus DALL-E 2 in 2022: a gap measured in years, not points. Krea 2 starts a fraction of a point behind on its first release.

Benchmark vs GPT Image 2: what the gap actually means

The 0.14-point number gets repeated. The interpretation does not. Three categories matter for the Krea 2 vs GPT Image 2 comparison, and the gap shifts by category.

Photorealistic portrait generation

GPT Image 2 retains a clear edge on photorealistic portrait synthesis, particularly on skin tone fidelity, fabric texture, and complex lighting situations. This is partly a training-data scale advantage — OpenAI sits on the largest curated portrait dataset in the market through its various human-feedback pipelines — and partly a fine-tuning advantage from the ChatGPT image generation user base, where portrait requests dominate the top-1000 prompts. Krea 2 closes the gap meaningfully but does not close it entirely. The honest read: GPT Image 2 still wins on portrait realism, by a margin smaller than it was six months ago.

Stylized illustration and artistic direction

This is where Krea 2's style transfer system pays off. With reference images injected, Krea 2 produces stylized illustration output that meaningfully exceeds GPT Image 2's reference-free baseline. Without reference images, the two models trade blows depending on prompt specificity. The structural advantage favors Krea 2 because the style transfer pipeline lets users hit a target aesthetic through demonstration rather than prompt-engineering — a workflow most creative professionals prefer when working with brand guidelines or visual identity systems.

Text rendering and typography

GPT Image 2's text rendering is among the cleanest in the public-API tier. Krea 2 is roughly on par with FLUX 2 on text rendering: usable for short captions, label text, and one-word emphasis, brittle on long headlines or paragraph-level typography. The gap closes when style references include strong typographic samples, but at the foundation-model level, text rendering favors GPT Image 2.

The composite picture: GPT Image 2 still wins on average across the Contra Labs prompt mix, but only by 0.14 points. Krea 2 wins decisively in style-transfer scenarios and trades approximately even on most other categories. The market read is that Krea 2 is now a legitimate choice for design-first workflows where reference-image control matters more than absolute portrait-fidelity benchmarks.

How Krea 2 compares to the rest of the 2026 image-gen field

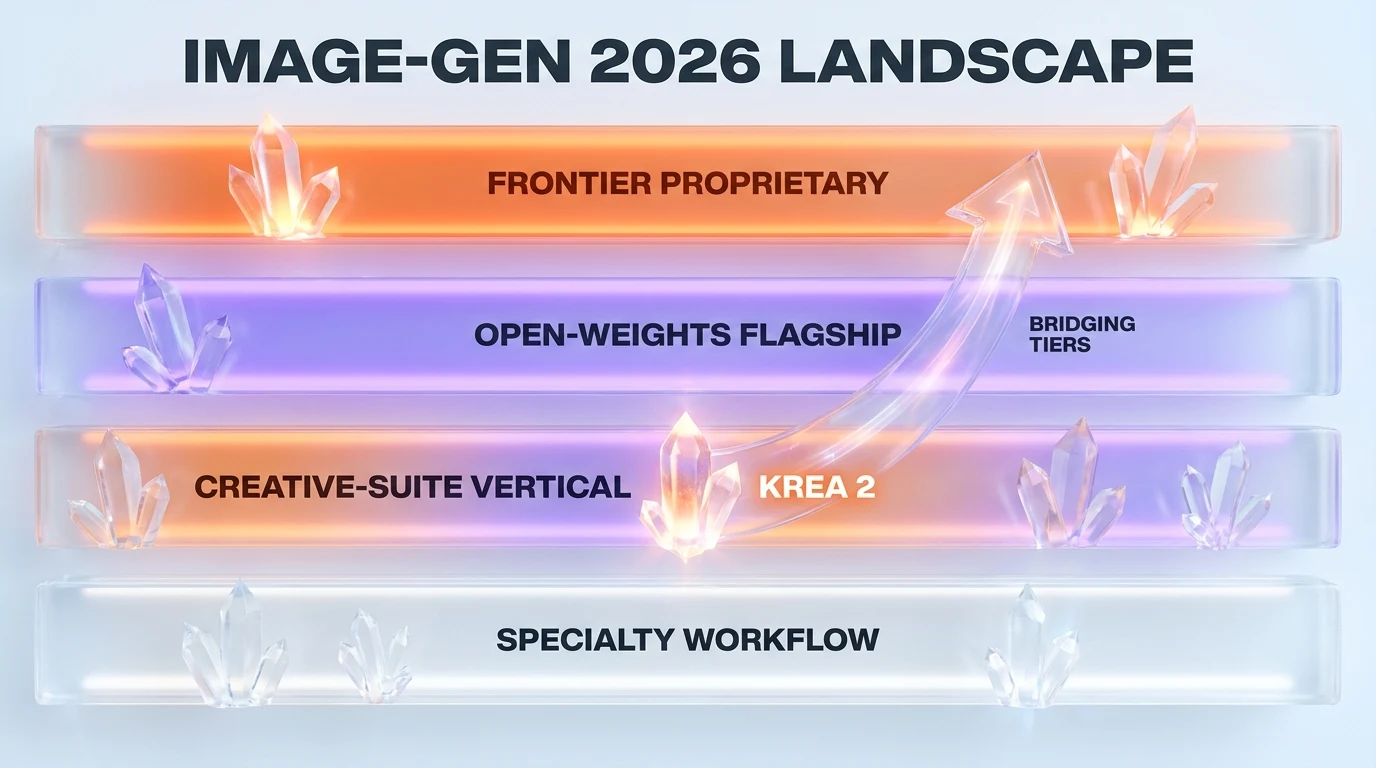

The image generation market in May 2026 has four credible tiers. Frontier proprietary (GPT Image 2, Nano Banana Pro). Open-weights flagship (FLUX 2, Stable Diffusion 4). Creative-suite vertical (Midjourney V8, Adobe Firefly 4, Krea 2). Specialty workflow (Leonardo.ai, Recraft, Magnific). Krea 2 lands in tier three but with a foundation-model claim that bridges into tier one.

Krea 2 vs Nano Banana Pro

Nano Banana Pro, Google's flagship image generator served via the Gemini API and AI Studio, has the second-strongest portrait realism in the market and some of the strongest text rendering. The detailed head-to-head between OpenAI's flagship and Google's flagship is covered in our GPT Image 2 vs Nano Banana Pro comparison. Krea 2 sits behind Nano Banana Pro on raw fidelity but ahead on style-transfer workflows. The pricing differential matters too: Nano Banana Pro charges per image through Google AI Studio billing, while Krea 2 is free for signed-in users at launch.

Krea 2 vs Midjourney V8

Midjourney V8 retains the aesthetic-default advantage. The Midjourney house style — that warm, painterly, slightly oversaturated look — is unmistakable and remains a target many users want by default. Krea 2 is more neutral by design. The "unopinionated" framing in the launch post is a deliberate contrast to Midjourney's aesthetic gravity. For users who want to hit a specific look through reference images rather than through Midjourney's house style, Krea 2 is the better fit. For users who want the Midjourney look without specifying it, Midjourney V8 still owns the category. Our Midjourney vs Adobe Firefly comparison walks through the aesthetic-default question in more detail.

Krea 2 vs FLUX 2

FLUX 2 is the open-weights flagship. It can run locally on consumer hardware with 24GB VRAM, it can be fine-tuned, and it has no per-image API cost beyond electricity. Krea 2 has neither the local-run option nor the fine-tuning surface. The trade-off is workflow integration: Krea 2 ships inside a polished creative suite with reference-image management, project organization, and team-collaboration features. FLUX 2 is a model weights file. The audiences diverge — FLUX 2 wins with developer-tier users and small studios with technical capacity, Krea 2 wins with designers and agencies who price their time at $200 per hour and need workflow over weights.

Krea 2 vs Leonardo.ai

Leonardo and Krea are the closest direct competitors in the creative-suite vertical. Both ship polished web interfaces, both target the design-first user. The difference from May 12 forward: Leonardo aggregates other models, Krea ships its own foundation model. Leonardo still has the larger asset library and the more mature team-collaboration features. Krea now has the architecture story. Our Higgsfield AI vs Leonardo.ai comparison covers the creative-suite category dynamics that Krea 2's launch now reshapes.

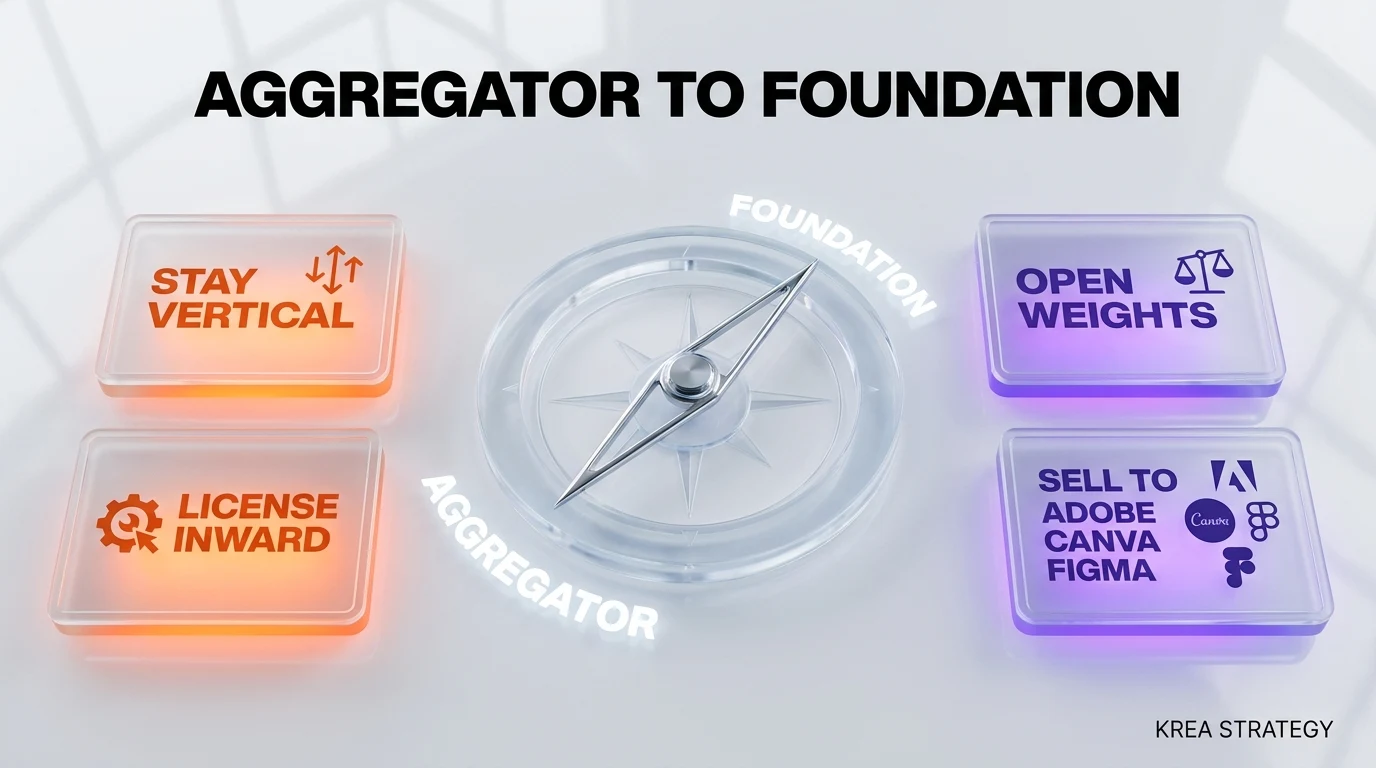

Strategic implications for Krea

The foundation-model pivot reshapes Krea's risk profile in three ways. First, model-quality risk shifts from "the partner labs ship a great upgrade we can integrate" to "we ship a great upgrade ourselves." That is harder, capital-intensive, and exposes the company to the same training-cost-curve pressures that hit Stability AI in 2023 and 2024. Second, defensibility increases — Krea now has a proprietary asset (the model weights and the style-transfer system architecture) that cannot be reproduced by a competitor switching API providers. Third, fundraising narrative shifts from "the best creative suite" to "the best creative suite with frontier-grade model assets," which unlocks a different valuation comparable set.

Capital requirements going forward

Training Krea 2 cost something. Krea did not disclose the number. Industry priors for a competitive image foundation model in 2026 run between $3 million and $15 million depending on parameter count and training mix. Krea raised a Series A in 2023 and what reads as a Series B-ish round in 2024 (terms not public). The math implies Krea allocated a meaningful fraction of its runway to the foundation-model bet. The follow-on consequence: subsequent rounds will need to bridge to either profitable creative-suite economics or a larger model-lab fundraise.

Strategic options from here

Krea has four credible paths from May 12. (1) Stay vertical: keep refining the creative suite, ship Krea 3 in 12 to 18 months, monetize through subscriptions. (2) Open the model: release Krea 2 weights to build a developer ecosystem and license commercial use through a tiered model, the Stability AI / Black Forest Labs playbook. (3) License outward: sell Krea 2 API access to other creative-suite vendors who want to skip the foundation-model investment, the OpenAI-as-platform playbook. (4) Sell: Adobe, Canva, or Figma all have plausible acquisition theses against a creative-suite-plus-foundation-model bundle. The Krea team's public framing favors (1), but (2) and (3) have meaningful financial logic.

What this means for the image-gen market in 2026

The Krea 2 launch matters less as a single model release and more as a category-changing precedent. If a creative-suite vendor with a roughly $50 million to $100 million Series B can ship a frontier-tier image foundation model, the rest of the creative-suite category has to consider the same move. Three implications cascade.

Pressure on Leonardo, Recraft, Playground

Each of those vendors now has to answer the question their boards will ask within 30 days: do we ship our own foundation model too? The capital math is similar. The product-positioning logic is similar. Leonardo has the largest user base in that cohort and the strongest case for the investment. Recraft has a specialized vector and text-rendering wedge that complicates the math. Playground has the most challenging path forward because its product positioning is the most aggregator-pure.

Pressure on Midjourney and Adobe Firefly

The aesthetic-default players face a different pressure. Krea 2's "unopinionated" positioning is a direct critique of Midjourney V8's house style and Adobe Firefly's safety-padded aesthetic. The market has historically tolerated aesthetic defaults because the alternatives were either lower-quality or required technical capacity. Krea 2 removes both excuses. Midjourney and Firefly will either need to add more aggressive style-transfer surfaces (Firefly is closer to this with Reference Image features) or accept that the "design-first professional" segment shifts to Krea.

Pricing pressure on OpenAI and Google

The most consequential pressure lands on the frontier labs. Krea 2 is free at signup and 0.14 points behind GPT Image 2. That sets the market's price floor at zero. OpenAI prices GPT Image 2 per image. Google prices Nano Banana Pro per image. Both pricing structures get harder to defend when a free alternative sits within the margin of error. Expect the per-image API pricing for the frontier image models to compress within 90 days, similar to how Gemini 3.1 Flash-Lite reset the LLM pricing floor earlier in 2026.

What the launch does not tell us yet

The Krea 2 announcement was intentionally light on technical specifics. The architecture details are absent. The training-data composition is undisclosed. The parameter count is not public. The inference-cost-per-image is not stated. The fine-tuning availability is unspecified. The commercial-use license terms are not in the launch post. The enterprise-tier pricing has not been published. These are the questions enterprise buyers and serious creative agencies will ask in the next 30 days, and Krea will need to answer at least the commercial-use and pricing questions to convert the launch momentum into enterprise contracts.

The model's provenance and training-data composition matter for intellectual-property risk specifically. Image generation models trained on commercial-license-ambiguous datasets carry real legal exposure for agencies and brand teams. Adobe Firefly's "commercially safe" positioning was built on this gap. If Krea does not articulate its training-data position clearly within 60 days, the enterprise segment will route around Krea 2 toward the conservative incumbents regardless of benchmark performance.

How to evaluate Krea 2 in the next 30 days

For design teams, product managers, and creative directors evaluating whether to add Krea 2 to the stack, five evaluation moves matter in the May-to-June window.

First, run a head-to-head on your actual production prompts. The Contra Labs benchmark is a population average. Your specific use cases will diverge, sometimes in Krea 2's favor and sometimes not. Pick 30 representative prompts from your last quarter of design work and generate each on Krea 2, GPT Image 2, and your current primary tool. Score blind. For your decision, that result outweighs the population average.
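The blind scoring step can be organized with a small script like the sketch below. It assumes the images were already generated by hand or through each tool's own interface; the tool labels and file paths are hypothetical, and no vendor API is called. It shuffles every output under an anonymous ID, keeps the ID-to-tool key away from the reviewer, and averages scores per tool only after scoring is done.

```python
import random
from collections import defaultdict
from statistics import mean


def blind_batch(outputs: dict[str, dict[str, str]], seed: int = 0):
    """Shuffle generated images and hide which tool produced each one.

    outputs maps prompt_id -> {tool_label: image_path}. Returns the rows
    the reviewer sees as (anon_id, prompt_id, image_path) and a private
    key mapping anon_id -> tool_label. Rename or copy the files so the
    paths themselves do not reveal the tool.
    """
    rng = random.Random(seed)
    rows = [(prompt_id, tool, path)
            for prompt_id, by_tool in outputs.items()
            for tool, path in by_tool.items()]
    rng.shuffle(rows)
    shown, key = [], {}
    for i, (prompt_id, tool, path) in enumerate(rows):
        anon_id = f"item-{i:03d}"
        shown.append((anon_id, prompt_id, path))
        key[anon_id] = tool
    return shown, key


def unblind(scores: dict[str, float], key: dict[str, str]) -> dict[str, float]:
    """Average the reviewer's scores per tool once scoring is finished."""
    per_tool = defaultdict(list)
    for anon_id, score in scores.items():
        per_tool[key[anon_id]].append(score)
    return {tool: mean(values) for tool, values in per_tool.items()}


# Hypothetical outputs for two of the 30 prompts; paths are placeholders.
outputs = {
    "p01": {"krea2": "out/a1.png", "gpt-image-2": "out/b1.png", "current": "out/c1.png"},
    "p02": {"krea2": "out/a2.png", "gpt-image-2": "out/b2.png", "current": "out/c2.png"},
}
shown, key = blind_batch(outputs, seed=42)
for anon_id, prompt_id, path in shown:
    print(anon_id, prompt_id, path)
```

After the reviewer fills in one score per `anon_id`, `unblind(scores, key)` returns the mean score per tool. The fixed seed makes the shuffle reproducible if the process needs auditing later.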

Second, stress-test the style transfer system specifically. Take a brand reference image — a brand guideline page, a competitor mood board, an existing campaign deck — and run multiple variations. The native style transfer claim is the differentiator and the place where Krea 2 has to deliver. Watch for two failure modes: style influence that bleeds unrelated content from the reference, and style-strength controls that move in unintuitive directions.
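A structured sweep makes both failure modes easier to spot. The sketch below assumes the style-strength control can be set to numeric levels; the launch post describes an intensity control but not its range or any API, so the field names, levels, and reference file names here are hypothetical. It builds a grid of (reference, strength, seed) runs to push through whatever interface you use, then flags strength steps where a reviewer's style-match rating dropped even though the setting went up.

```python
from itertools import product


def sweep_plan(references: list[str], strengths: list[float],
               seeds: list[int]) -> list[dict]:
    """Every (reference, strength, seed) combination to generate and review."""
    return [
        {"reference": ref, "style_strength": strength, "seed": seed}
        for ref, strength, seed in product(references, strengths, seeds)
    ]


def monotonicity_breaks(ratings: dict[float, float]) -> list[tuple[float, float]]:
    """Return adjacent strength levels where the rated style match fell
    even though the strength setting increased."""
    levels = sorted(ratings)
    return [(low, high) for low, high in zip(levels, levels[1:])
            if ratings[high] < ratings[low]]


plan = sweep_plan(["brand_guide.png", "moodboard.jpg"],
                  [0.25, 0.5, 0.75, 1.0], seeds=[1, 2, 3])
print(len(plan), "generations to run")

# Hypothetical mean style-match ratings (1 to 5) for one reference.
ratings = {0.25: 2.1, 0.5: 3.4, 0.75: 3.0, 1.0: 4.2}
print("non-monotonic steps:", monotonicity_breaks(ratings))
```

Track content bleed separately (for example, a yes/no flag per image for objects copied from the reference), since a strength curve can be monotonic and still leak unrelated content.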

Third, check the commercial-use license carefully. Until Krea publishes explicit commercial-use terms, treat enterprise deployment as carrying license-uncertainty risk. Indemnification language matters more than benchmark scores for brand teams.

Fourth, evaluate fit with existing pipeline integrations. If your team works in Adobe Firefly's Creative Cloud surface or has built workflows around Midjourney's Discord-or-web split, the integration cost of adding Krea 2 is real. The model quality has to clear that bar, not just the absolute bar.

Fifth, run the unit economics. Free at launch is a customer-acquisition decision, not a pricing decision. Expect Krea 2 to move behind a paid tier within 6 to 12 months once enterprise interest develops. Budget accordingly.
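One simple way to budget for that is to model the first year as free months followed by paid months. The sketch assumes per-image billing; Krea has not published Krea 2 pricing, so the $0.04 price and the 5,000-image monthly volume are hypothetical placeholders, and a flat subscription would need a different formula.

```python
def first_year_cost(images_per_month: int, price_per_image: float,
                    free_months: int, months: int = 12) -> float:
    """Spend over `months` if generation is free for the first `free_months`
    and billed per image afterwards."""
    paid_months = max(0, months - free_months)
    return images_per_month * price_per_image * paid_months


# Hypothetical volume and price; swap in real quotes once they exist.
for free_months in (6, 12):
    cost = first_year_cost(5_000, 0.04, free_months)
    print(f"paywall after {free_months} months: ${cost:,.2f} in year one")
```

Comparing the 6-month case against current tool spend shows how much of the free-launch saving is temporary.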

The broader foundation-model democratization question

Krea 2 is the second-most-interesting data point in 2026 on the question of whether foundation models stay frontier-lab-only. The first was Black Forest Labs' continued shipping of competitive open-weights image models on a presumably smaller budget than OpenAI. The Krea 2 launch suggests that competitive image foundation models can come from Series B creative-suite vendors. By 2027, the question becomes: which vertical SaaS vendors with adjacent data assets and enough capital to train mid-sized models will ship their own foundation models in their domain?

Candidates with the most plausible setup: Canva (presentation and design), Figma (interface design), Notion (productivity and document generation), Webflow (web design), Linear (technical project management), Loom (video). Each has accumulated user-generated content and workflow telemetry that would benefit from a domain-tuned foundation model. Each has either the cash or the financing access to fund a $5 million to $20 million training run. The Krea precedent makes the strategic question harder to defer.

What I am watching for in the next 90 days

Three specific signals will tell us whether Krea 2 represents the start of a category shift or a one-off vendor move. First, whether Leonardo, Recraft, or Playground announce their own foundation model within 90 days. The competitive response pattern in SaaS tends to repeat: major moves trigger responses from direct competitors within 60 to 120 days when the move is product-architectural rather than feature-additive. The absence of a response from this cohort would be a strong signal that Krea is alone in this bet.

Second, whether OpenAI or Google adjusts GPT Image 2 or Nano Banana Pro pricing. The image-gen API pricing has been remarkably stable through 2025 and early 2026. A 20% to 40% per-image price reduction in either model within 90 days would confirm that Krea 2 has reset the floor of the market.

Third, whether Krea publishes a technical report or paper detailing the style-transfer joint training architecture. The marketing-only framing in the launch post leaves the technical claim unverified. A subsequent technical write-up — even at a workshop or industry-conference level — would either substantiate the architectural novelty or expose it as marketing surface. Both outcomes matter.

Bottom line

Krea 2 is the first foundation image model from a creative-suite vendor, shipped at a 0.14-point benchmark gap to the OpenAI flagship and at a price of zero at launch. The model itself is interesting. The strategic move is more interesting. Krea bet that workflow alone is not enough moat in the 2026 image-gen market and that owning a foundation model unlocks both defensibility and a different valuation comparable set. The bet is rational. The execution looks credible. The category implications (pressure on aggregator competitors, pricing pressure on frontier labs, vertical-SaaS foundation-model precedents) will play out over the next 90 days.

For design teams, the practical read is straightforward: add Krea 2 to your evaluation list, stress-test the style transfer system specifically, watch the commercial-use license disclosure, and run unit economics assuming pricing migrates behind a paid tier within 12 months. For the market, the read is that a creative-suite vendor just did something only frontier labs were supposed to be able to do. That changes the available strategies for every adjacent player.

Frequently asked questions

What is Krea 2 and when did it launch?

Krea 2 is Krea's first proprietary foundation image model, launched on May 12, 2026, accessible at krea.ai/image/k2. According to the launch post on krea.ai/blog/krea-2-image-model, Krea 2 is "the first foundation model built completely from scratch" by the company. The model accepts text prompts and reference images, and exposes user-facing controls for style transfer intensity, multi-style combination, and batch variation cohesion. Free signup at launch, no paywall on the public endpoint.

How does Krea 2 score versus GPT Image 2?

Independent benchmarks from Contra Labs place Krea 2 within 0.14 points of GPT Image 2 on human-preference scoring. That gap sits inside the margin of error for many prompt categories, so the result depends heavily on prompt selection. GPT Image 2 retains an edge on photorealistic portrait synthesis and text rendering. Krea 2 wins decisively in style-transfer scenarios where users feed reference images for visual direction. The composite picture: GPT Image 2 still wins on average across the Contra Labs prompt mix, but only by 0.14 points, and Krea 2 is now a legitimate choice for design-first workflows.

What makes Krea 2's style transfer different from ControlNet or IP-Adapter?

Most foundation image models bolt style transfer on after the fact through external modules: ControlNet and IP-Adapter for Stable Diffusion 1.5 and SDXL, Redux for FLUX.1. The module is always a post-hoc adapter trained separately from the base model. Krea 2's claim is that its style transfer system was trained alongside the foundation model in the same training run with shared gradient updates. If accurate, that means style influence is a native control input rather than a second-class citizen. The user-facing implication is that combining two style references should not collapse into the average of the two, and strengthening a style should not also strengthen unrelated content from the reference.

Is Krea 2 free or paid?

Krea 2 is free at launch with a Krea account signup. Free access is a customer-acquisition decision rather than a permanent pricing position. Industry priors and Krea's likely unit economics suggest the model migrates behind a paid tier within 6 to 12 months as enterprise interest develops. The full Krea creative suite — which integrates other models alongside Krea 2 — already runs on a tiered subscription, so the funnel from free Krea 2 access to paid creative suite tiers is the implicit conversion logic.

How does Krea 2 compare to Midjourney V8 and Adobe Firefly?

Midjourney V8 owns the aesthetic-default position with its distinctive warm and painterly house style. Adobe Firefly owns the commercially safe licensing position with explicit training-data conservatism. Krea 2 positions itself as "raw, flexible, unopinionated," a direct contrast to both aesthetic-default players. For users who want a specific look through reference images rather than through Midjourney's house style, Krea 2 is the better fit. For users who want the Midjourney look without specifying it, Midjourney V8 still owns the category. For users with brand-safety requirements demanding explicit commercial-use indemnification, Adobe Firefly retains the edge until Krea publishes commercial-use terms.

What does the aggregator-to-foundation pivot mean for Krea's business model?

Krea sold a creative suite on top of partner models — Stable Diffusion, FLUX, Recraft, Ideogram — for three years before May 12, 2026. The foundation-model launch reshapes Krea's risk profile in three ways. Model-quality risk shifts from "partner labs ship a great upgrade we integrate" to "we ship a great upgrade ourselves." Defensibility increases through proprietary model weights and style-transfer system architecture that competitors cannot reproduce by switching API providers. Fundraising narrative shifts from "best creative suite" to "best creative suite with frontier-grade model assets," unlocking a different valuation comparable set that frontier-lab investors take seriously.

How much did training Krea 2 cost?

Krea did not disclose the training cost. Industry priors for a competitive image foundation model in 2026 run between $3 million and $15 million depending on parameter count and training-data mix. Krea raised a Series A in 2023 and what reads as a Series B round in 2024 with terms not public. The math implies Krea allocated a meaningful fraction of its runway to the foundation-model bet. The follow-on financing consequence is that subsequent rounds will need to bridge to either profitable creative-suite economics at scale or a larger model-lab-style fundraise.

Will Leonardo, Recraft, or Playground ship their own foundation models too?

This is the single most important market question that opens with the Krea 2 launch. Each creative-suite competitor faces a board-level question within 30 days about whether to match Krea's move. The capital math is comparable. The product-positioning logic is comparable. Leonardo has the largest user base and the strongest case for the investment. Recraft has a specialized vector-and-text-rendering wedge that complicates the math. Playground faces the most challenging path forward because its product positioning is the most aggregator-pure. The competitive response pattern in SaaS suggests responses arrive within 60 to 120 days when the trigger is product-architectural.

What pricing pressure does Krea 2 put on GPT Image 2 and Nano Banana Pro?

Krea 2 is free at signup and benchmarks 0.14 points behind GPT Image 2 on Contra Labs scoring. That sets the market's price floor at zero. OpenAI prices GPT Image 2 per image. Google prices Nano Banana Pro per image through Google AI Studio. Both pricing structures become harder to defend when a free alternative sits inside the margin of error. One plausible projection is that per-image API pricing for the frontier image models compresses 20% to 40% within 90 days, similar to how Gemini 3.1 Flash-Lite reset the LLM pricing floor earlier in 2026.

What is the commercial-use license for Krea 2?

The Krea 2 launch post did not include explicit commercial-use license terms. Until Krea publishes commercial-use language, treat enterprise deployment as carrying license-uncertainty risk. Indemnification language matters more than benchmark scores for brand and agency teams. The Adobe Firefly "commercially safe" positioning was built specifically on this gap, and if Krea does not articulate its training-data position clearly within 60 days, the enterprise segment will route around Krea 2 toward conservative incumbents regardless of benchmark performance.

Are Krea 2's weights available for local inference or fine-tuning?

No. Krea 2 is available exclusively through Krea's hosted endpoint at krea.ai/image/k2 at launch. Neither local inference nor fine-tuning surfaces are public. This is a meaningful difference versus the open-weights image-gen tier represented by FLUX 2 and Stable Diffusion 4. The trade-off favors workflow integration — Krea 2 ships inside a polished creative suite with reference-image management, project organization, and team-collaboration features that open-weights models do not match. The audiences diverge: open-weights models win with developer-tier users and small studios with technical capacity, Krea 2 wins with designers and agencies who price their time at $200 per hour and need workflow over weights.

What signals should we watch in the next 90 days to know if Krea 2 reshapes the category?

Three signals matter. First, whether Leonardo, Recraft, or Playground announce their own foundation model within 90 days — the absence of a competitive response would be a strong signal that Krea is alone in this bet. Second, whether OpenAI or Google adjusts GPT Image 2 or Nano Banana Pro pricing — a 20% to 40% per-image price reduction in either model within 90 days would confirm that Krea 2 has reset the market floor. Third, whether Krea publishes a technical report or paper detailing the style-transfer joint training architecture — the marketing-only framing in the launch post leaves the architectural claim unverified, and a subsequent technical write-up would either substantiate or expose it.