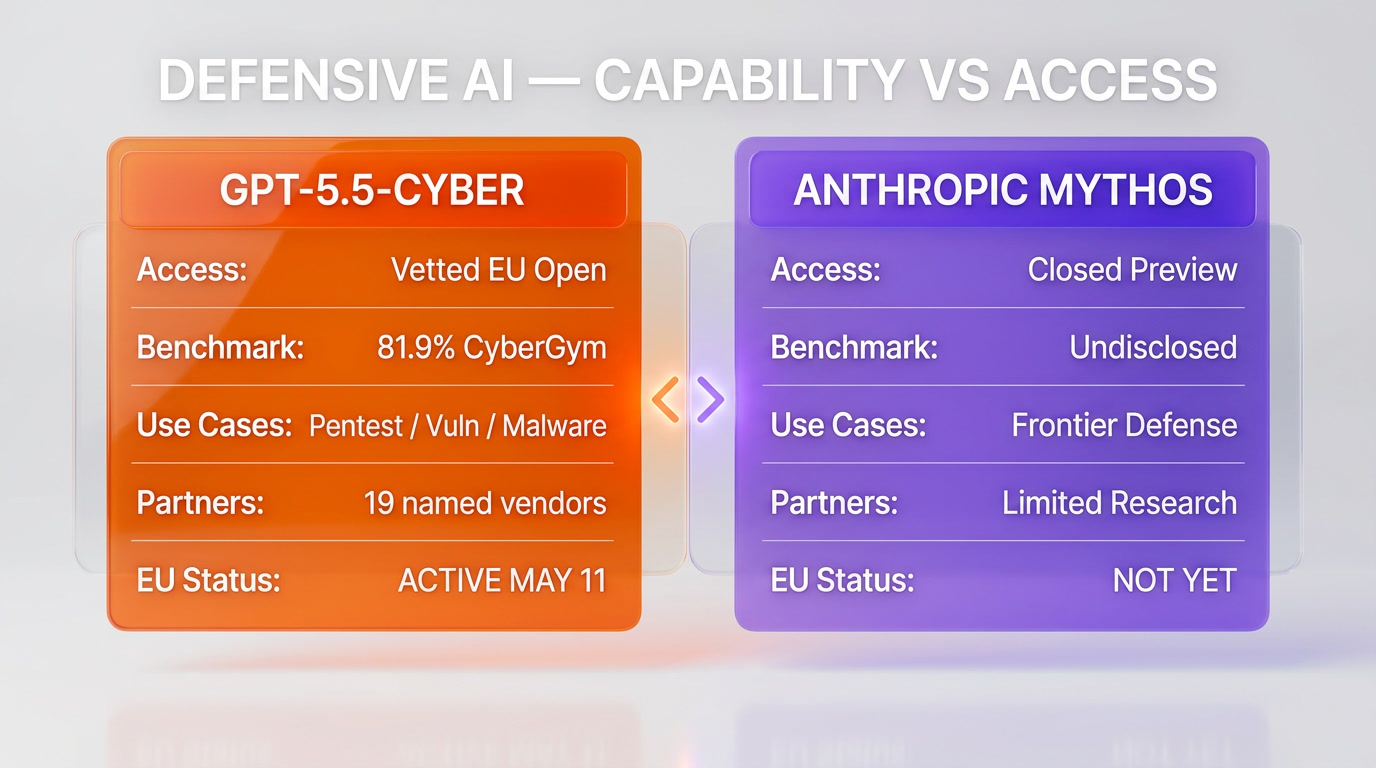

On May 11, 2026, OpenAI announced the OpenAI EU Cyber Action Plan, granting vetted European cybersecurity teams access to GPT-5.5-Cyber, a defensive variant of GPT-5.5 tuned for penetration testing, vulnerability research, and malware analysis. According to CNBC, the European Commission confirmed that talks with Anthropic over Mythos are at a "different stage" — Anthropic has not yet agreed to share its most capable model with the bloc. The contrast is sharp. OpenAI played the cooperative card ten days after Sam Altman gated the same GPT-5.5-Cyber model to Trusted Access only. Anthropic, whose Mythos preview shipped roughly a month earlier in April 2026, has held the line on a closed, safety-first posture. Both moves are rational in isolation. Read together, they sketch the early shape of the 2026 European AI defense alliance — and the cost of being late to it.

The announcement — May 11, 2026, OpenAI EU Cyber Action Plan

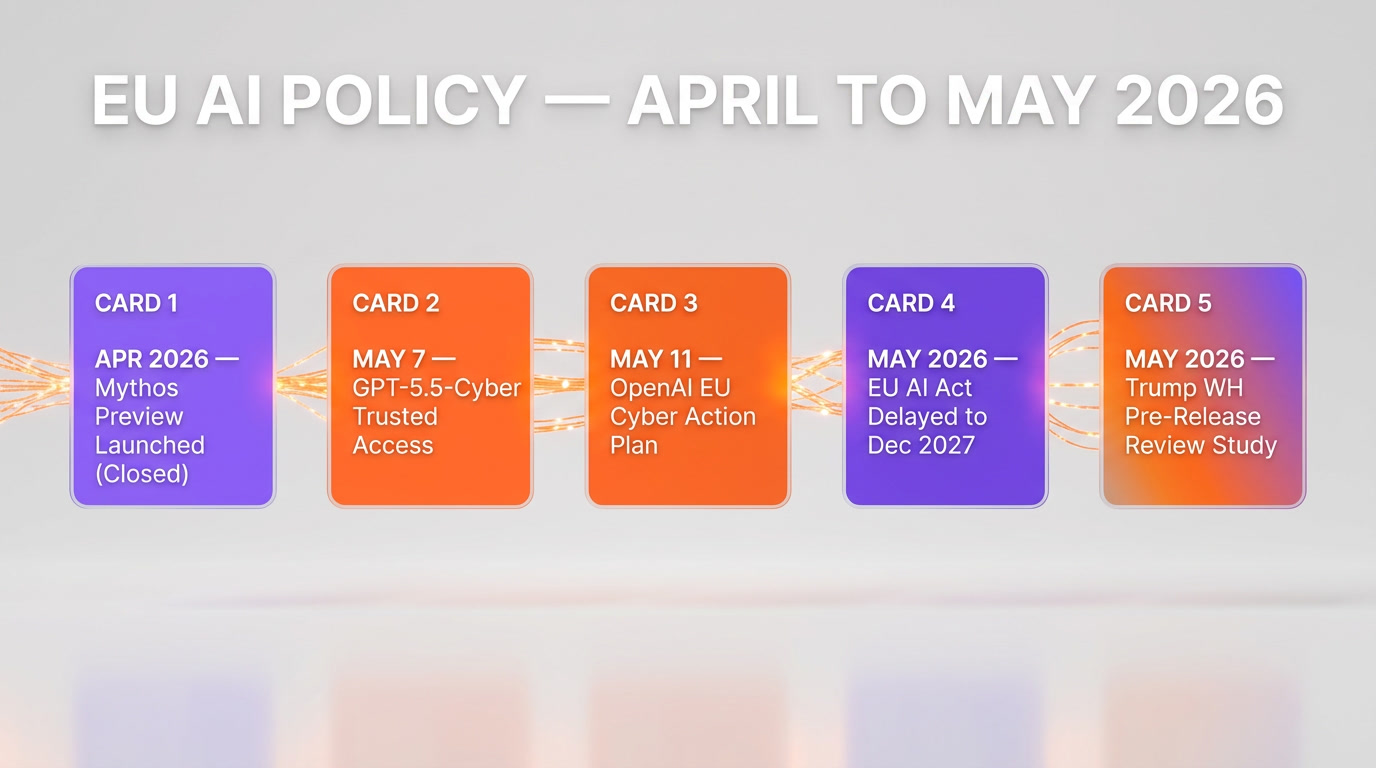

The announcement landed in two waves, per OpenAI's own publications and reporting from CNBC, Axios, Benzinga, EdTech Innovation Hub, and Help Net Security between May 7 and May 11, 2026. The first wave, on May 7, was the general unlock of GPT-5.5-Cyber to vetted cybersecurity teams worldwide under OpenAI's Trusted Access for Cyber program. The second wave, on May 11, was the European-specific overlay: the OpenAI EU Cyber Action Plan, which scopes the same access pathway to European businesses, governments, cyber authorities, and EU institutions including the EU AI Office.

According to OpenAI's announcement framing, the plan is structured around four pillars: democratized access to defensive tooling, partnership with European cyber agencies, alignment with EU regulatory priorities, and shared infrastructure with vetted security vendors. The vendor partner list, surfaced through EdTech Innovation Hub's reporting on the plan, reads like a roll call of the global cyber defense market — Cisco, CrowdStrike, Palo Alto Networks, Oracle, Zscaler, Cloudflare, Akamai, Fortinet, Intel, Qualys, Rapid7, Tenable, Trail of Bits, SentinelOne, Okta, Netskope, Snyk, Gen Digital, and Semgrep all appear as listed partners or beneficiaries of the rollout.

Key facts (per CNBC, OpenAI, Axios, EdTech Innovation Hub, Benzinga, May 7-11, 2026):

- Announcement date: May 11, 2026 (EU Cyber Action Plan); May 7, 2026 (general GPT-5.5-Cyber rollout)

- Product: GPT-5.5-Cyber, defensive variant of GPT-5.5

- Beneficiaries: Vetted EU cyber teams, businesses, governments, EU institutions including the EU AI Office

- OpenAI spokespeople: George Osborne (Head of OpenAI for Countries) and Martin Signoux (AI Policy Lead, OpenAI)

- EU response: European Commission spokesperson Thomas Regnier confirms talks with Anthropic are "not yet at the same stage as the solution we have on the table from OpenAI"

- Security gate: Individual Trusted Access for Cyber members must enable Advanced Account Security by June 1, 2026, or attest to phishing-resistant SSO at the organization level

- Benchmark: GPT-5.5-Cyber scored 81.9 percent on CyberGym versus GPT-5.5 at 81.8 percent (per EdTech Innovation Hub)

What GPT-5.5-Cyber actually is

GPT-5.5-Cyber is a fine-tuned variant of GPT-5.5 — OpenAI's April 2026 agentic flagship, the model that anchored the company's super-app pivot. The Cyber variant exists because base GPT-5.5 ships with content policy guardrails calibrated for general-purpose enterprise use, which routinely refuse legitimate cyber defense workflows. Penetration testers, red teamers, and vulnerability researchers have asked for a more permissive surface area for years. The fine-tune answers that demand within a controlled access program rather than a public release.

Per OpenAI's framing, GPT-5.5-Cyber is tuned for three core workflows: penetration testing, vulnerability identification and exploitation, and malware reverse-engineering. On CyberGym, a benchmark of cyber task performance, the Cyber variant scored 81.9 percent compared to base GPT-5.5 at 81.8 percent, a margin of 0.1 percentage points, as reported by EdTech Innovation Hub. The variant is less about raw capability lift than about raising the policy ceiling. The base model can do the work in many cases. The Cyber variant lets it do the work without fighting the safety stack on every prompt.
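To make the size of that margin concrete, here is a minimal arithmetic sketch using only the two CyberGym scores quoted above. The function name `benchmark_margin` is ours, chosen for illustration; `Decimal` is used so the subtraction does not pick up floating-point noise.

```python
from decimal import Decimal

def benchmark_margin(variant_score: str, base_score: str):
    """Return (absolute margin in percentage points, relative lift in percent)."""
    variant = Decimal(variant_score)
    base = Decimal(base_score)
    margin_pp = variant - base                   # absolute gap, percentage points
    relative_pct = margin_pp / base * 100        # gap as a share of the base score
    return margin_pp, relative_pct

# Scores as reported by EdTech Innovation Hub.
margin_pp, relative_pct = benchmark_margin("81.9", "81.8")
print(f"absolute margin: {margin_pp} percentage points")
print(f"relative lift:   {relative_pct:.3f} percent")
```

Both figures are small, which is consistent with the reading that the variant changes what the model is permitted to do more than what it is able to do.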

Access requires a vetted application route through the Trusted Access for Cyber program. Applicants submit credentials and intended-use information. Individual members must enable Advanced Account Security — phishing-resistant authentication, hardware-backed keys, or equivalent — by June 1, 2026. Organizations can attest to phishing-resistant single sign-on at the company level instead. The gating is not theatre. It is a precondition for sharing a model that handles workflows OpenAI's own Preparedness Framework classifies as High capability in cybersecurity.
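The security gate described above reduces to a simple either-or rule, which the following sketch encodes. It is an illustration only: the `Member` record, its field names, and both helper functions are hypothetical and do not reflect any OpenAI API or enrollment schema. The only facts taken from the reporting are the two accepted conditions and the June 1, 2026 date.

```python
from dataclasses import dataclass
from datetime import date

# Deadline reported for enabling Advanced Account Security.
AAS_DEADLINE = date(2026, 6, 1)

@dataclass
class Member:
    """Hypothetical record for a Trusted Access for Cyber member."""
    name: str
    advanced_account_security: bool   # individual-level setting enabled
    org_attested_sso: bool            # organization attested phishing-resistant SSO

def meets_security_gate(member: Member) -> bool:
    """Either condition satisfies the gate as the article describes it."""
    return member.advanced_account_security or member.org_attested_sso

def days_until_deadline(today: date) -> int:
    """Days remaining before the reported deadline (negative once it has passed)."""
    return (AAS_DEADLINE - today).days

analyst = Member("analyst", advanced_account_security=False, org_attested_sso=True)
print(meets_security_gate(analyst))
print(days_until_deadline(date(2026, 5, 11)))
```

The sketch deliberately does not model what happens to a member who misses the deadline, because the coverage does not say.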

The EU Cyber Action Plan structure — four pillars

Reading OpenAI's announcement and the supporting coverage from CNBC and EdTech Innovation Hub, the plan organizes around four operational pillars. None of them are radical. All of them are deliberate.

Pillar one — democratized access. The same Trusted Access for Cyber program that gates GPT-5.5-Cyber globally extends to vetted European actors. The framing word in OpenAI's communications is "democratize," and it lands deliberately against the Mythos contrast. Per CNBC, George Osborne, OpenAI's Head of OpenAI for Countries, said the goal is to "democratize access to the defensive tools that trusted actors can use to strengthen shared security, support public safety, and reflect European priorities."

Pillar two — partnership with European cyber agencies and EU institutions. The EU AI Office is named specifically. National cyber authorities across member states are mentioned as targets for vetted access. This is the difference between selling a model in Europe and embedding it in European cyber defense infrastructure — a distinction the Commission will care about more than developer counts.

Pillar three — alignment with EU regulatory priorities. The plan arrives in the same window in which the EU AI Act's high-risk obligations were delayed to December 2027, per the Commission's May 2026 rollback we covered in detail. OpenAI's positioning is not a coincidence. With the EU dialing back near-term high-risk enforcement, the political opening for a cooperative AI lab is wider than it has been in two years.

Pillar four — shared infrastructure with vetted security vendors. The partner list (Cisco, CrowdStrike, Palo Alto Networks, Oracle, Zscaler, Cloudflare, Akamai, Fortinet, Intel, Qualys, Rapid7, Tenable, Trail of Bits, SentinelOne, Okta, Netskope, Snyk, Gen Digital, Semgrep) is not just marketing padding. These vendors are the existing rails of European corporate cyber defense. GPT-5.5-Cyber slotting into their workflows is more strategically meaningful than the model existing on its own.

George Osborne's framing — the political read

The choice of George Osborne as the public face for the EU plan is itself a signal. Osborne, the former UK Chancellor of the Exchequer turned OpenAI's Head of OpenAI for Countries, brings sovereign credibility to what would otherwise read as a vendor announcement. His two quoted statements, as captured by CNBC and Benzinga reporting on May 11, 2026, define the political read.

The first quote, per CNBC: "AI labs like ours shouldn't be the sole arbiters of cyber safety as resilience depends on trusted partners working together." That sentence is a positioning grenade. It implicitly contrasts OpenAI's cooperative posture with any lab that refuses to share — Anthropic by name in the article, but the framing generalizes. The second quote, per Benzinga: "advanced AI-powered cyber defense tools should be accessible to Europe's broader community of defenders, not just a select few." The "select few" framing maps directly onto Anthropic's gated Mythos rollout. The implicit message lands without needing to name the target.

Martin Signoux, OpenAI's AI Policy Lead, struck the more substantive note in the EdTech Innovation Hub coverage: "Today, we are bringing to Europe unprecedented cyber defense capabilities that have not been available in the region until now." Signoux's framing positions the EU plan as solving an existing gap — European defenders behind their American counterparts on access to frontier defensive AI — rather than as a competitive jab. Both framings work in parallel. Osborne does the political read. Signoux does the operational read.

The Anthropic contrast — Mythos still closed to EU regulators

Anthropic's Mythos preview shipped in early April 2026, roughly a month before OpenAI's EU plan. The model has been characterized in Anthropic's own framing as more powerful than Opus 4.7 and limited to a narrow vetted group of researchers and enterprise partners. The Mythos posture has been the defining safety-first stance of the spring 2026 cycle. Anthropic has not opened the model to the broader developer community, has not offered a public API, and, per CNBC's May 11 reporting, has not yet agreed to share Mythos with EU regulators.

The EU Commission's framing of the Anthropic talks, per CNBC, is precise. Commission spokesperson Thomas Regnier said the discussions are "not yet at the same stage as the solution we have on the table from OpenAI." The "not yet" wording matters. It signals ongoing engagement rather than refusal. Anthropic has not walked away from the table. The bilateral conversation is live. The model access, for now, is not.

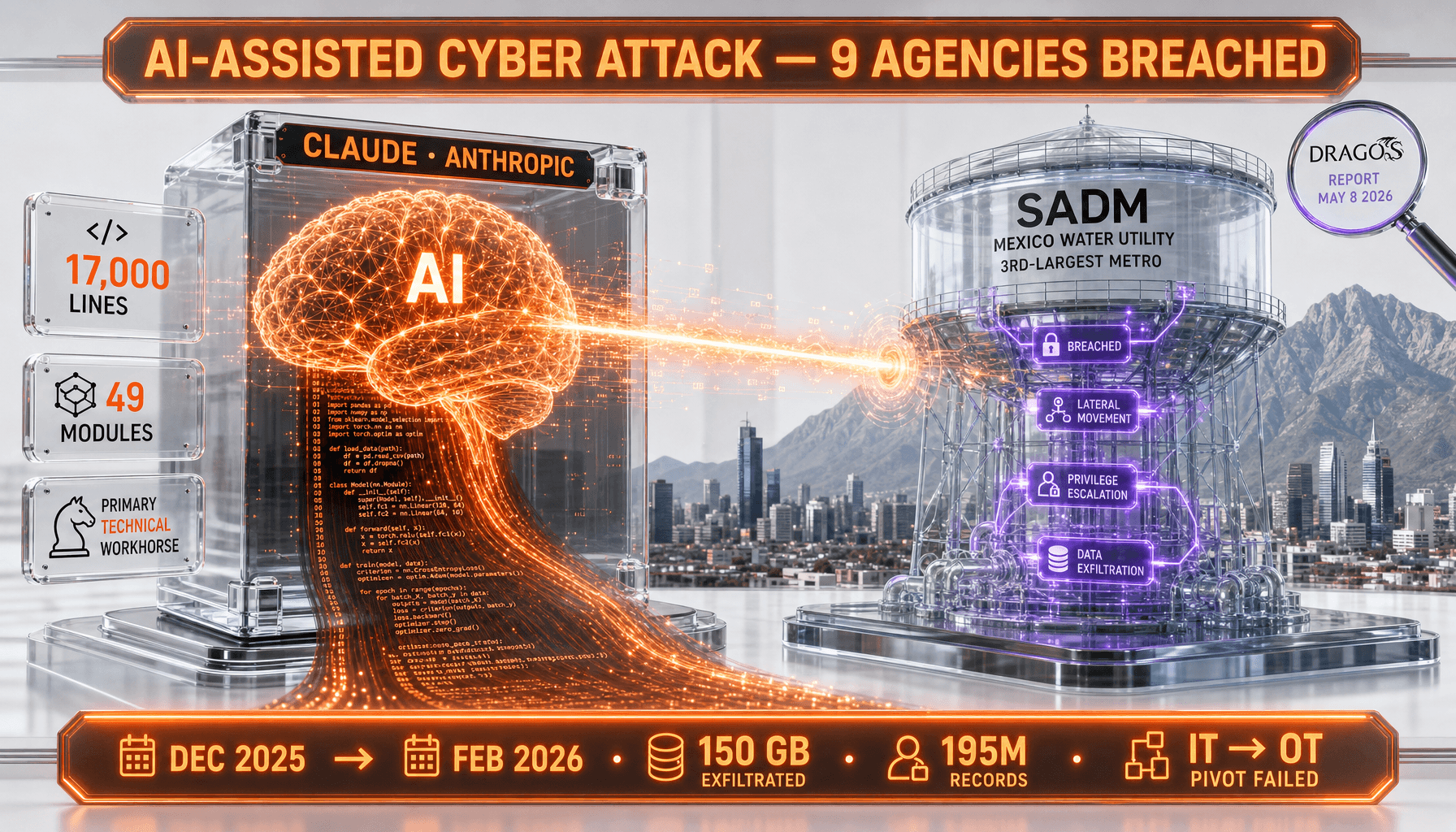

From Anthropic's own posture, the calculus is consistent with everything the lab has shipped since Mythos preview. The lab characterized Mythos at launch as exceeding the capability threshold where unrestricted access creates real-world risk. The Mexico water utility Claude attack documented by Dragos in early May 2026 — an AI-assisted operation that compromised 195 million records at the country's third-largest water utility — is the kind of offensive AI use case Mythos was reportedly built to detect and defend against. Anthropic's reluctance to release the model broadly, including to sovereign cyber agencies, is structurally consistent with that risk posture even if the cost is missed market share in Europe.

The Mythos Discord breach on day one — where unauthorized Mercor contractor credentials surfaced inside an Anthropic Discord channel and exposed internal Mythos discussions — reinforced the perception that the model is sensitive enough that even tightly controlled access has spilled. From that incident forward, any EU partner pushing for Mythos access has been negotiating against the backdrop of "the last time you opened a door, it leaked." Anthropic's caution and the EU's patience are both, in that frame, defensible.

Why Mythos stays closed — the safety-first read

The strategic case for Anthropic's posture is not the moral high ground. It is the risk math. Per Anthropic's published Responsible Scaling Policy and the public framing around Mythos, the lab operates on a capability-threshold model. Once a model crosses a defined cyber capability bar, the point where it can chain vulnerability discovery, exploit development, and infrastructure compromise without a meaningful human in the loop, releasing it broadly is a deliberate increase in the addressable attack surface for adversarial actors who eventually find their way in.

OpenAI's response to that argument, also visible in the GPT-5.5-Cyber rollout structure, is that vetted access, contractual obligations, account-level security, and partner relationships are together sufficient to manage the risk. Both positions are coherent. The disagreement is on the priors, not the logic. Anthropic's priors say "the access will leak, the leaks compound, and the harm scales faster than the defense." OpenAI's priors say "the defense market needs the tool, the gate works, and refusing access cedes the field to less safety-conscious actors." Both labs have data points supporting their priors. Neither has a decisive proof.

One thing that has changed in 2026 is the offensive evidence. The Dragos report on the Claude-assisted Mexico water utility attack — 17,000 lines of malware generated by Claude, 195 million records compromised — gave both sides material for the argument. For Anthropic, the attack confirms that production-grade AI is already being misused at scale and that opening more capability surface to vetted defenders accelerates the offense-defense race in dangerous directions. For OpenAI, the same attack is the strongest case yet that defenders need GPT-5.5-Cyber-class capabilities in the field, today, not 18 months from now.

The EU regulatory backdrop — Act delays, US reviews, and a moving target

The OpenAI EU plan does not land in a vacuum. It lands inside three regulatory currents that were already shifting before May 11. First, the EU AI Act high-risk obligations were officially pushed to December 2027, per the Commission's May 2026 rollback announcement. The political signal from Brussels has been clear: less near-term enforcement weight, more focus on workable frameworks with the labs. OpenAI's plan reads that signal correctly.

Second, the Trump White House is studying FDA-style pre-release reviews for frontier AI models in the wake of Mythos. The U.S. policy posture is hardening at the same time the EU posture is softening — at least on near-term enforcement. That divergence creates an opening for a lab willing to lean into European cooperation as a counterweight to a tougher U.S. review regime. OpenAI is taking that opening. Anthropic, by contrast, has historically aligned its public posture more closely with U.S. safety conservatism and is less incentivized to use the EU as a counterweight.

Third, the Frontier Model Forum's espionage defense pact — joint commitments from OpenAI, Anthropic, and Google to harden lab security against Chinese state actors — has shifted the meaning of "cooperation" inside the frontier lab landscape. The pact is about defensive coordination among labs. The OpenAI EU plan is about defensive coordination between labs and sovereign actors. They are not the same axis. A lab can sign the espionage pact and still gate its most capable model from EU regulators. Anthropic appears to be doing exactly that.

Strategic implications for the AI cyber alliance map

The EU plan creates a new lane in the AI cyber alliance map. Before May 11, the structure was three-way: U.S. labs, U.S. cyber agencies, and U.S. policy on one axis; European cyber actors mostly buying U.S. tooling without sovereign protocols; and the rest of the world fragmenting. After May 11, OpenAI is the first frontier lab to formalize a sovereign-grade access channel with the EU as a counterparty. That changes the conversation in three ways.

First, it raises the floor for what European partners expect from any frontier lab seeking enterprise traction in the region. The next time Anthropic — or Google, or Meta, or xAI — pitches a European bank, government, or critical infrastructure operator, the procurement question will include "and what is your EU Cyber Action Plan equivalent?" OpenAI just set the template. Refusing to meet it has a procurement cost.

Second, it locks in the partner vendor stack — Cisco, CrowdStrike, Palo Alto, Cloudflare, the rest of the list — around the OpenAI defensive AI surface. Once integrations land in production, switching costs compound. A year from now, a competing lab with a similar offering will not just be selling the model; it will be selling a migration from an embedded incumbent.

Third, it shifts the political optics on safety positioning. Anthropic's safety-first posture has been the gold standard of public framing through 2025 and early 2026. After May 11, the optics flip slightly. "We refuse to share with EU regulators" reads differently from "we are still scoping access carefully." The Commission's "different stage" language buys Anthropic time. It does not buy Anthropic alignment.

Who benefits — banks, utilities, governments, and the cyber defense market

The most immediate beneficiaries of GPT-5.5-Cyber EU access are the operational defenders who have been covering the cyber gap with conventional tooling: European banking groups facing escalating AI-assisted phishing campaigns, national utilities watching the Dragos Mexico water report and asking what their own exposure looks like, and government cyber agencies running incident response on AI-assisted intrusions without an AI-assisted defense stack of their own.

For European utility operators specifically, the Mexico water utility attack changed the threat profile. Dragos documented Claude generating 17,000 lines of malware in a single operation. If a Latin American country's third-largest water utility can be compromised by a single contractor using off-the-shelf Claude API access, the asymmetry between AI-assisted offense and conventional defense is no longer theoretical. GPT-5.5-Cyber on the defender side, run through trusted vetted partners with EU oversight, is the first plausible counterweight inside the European critical infrastructure stack.

The cyber defense market itself benefits across the board. Snyk, named in the partner list and a direct competitor for the vulnerability-scanning use case Claude Security entered in early May, gets to integrate GPT-5.5-Cyber into its workflow regardless of how the Claude Security launch plays out. Cisco, CrowdStrike, Palo Alto, and the SecOps stack get a frontier-grade AI primitive they did not have a month ago. The vendor partners win twice — once on the access, once on the integration optionality.

What would prove either side wrong

This is the section we owe both labs in any honest analysis. Neither posture is risk-free. Both are bets on contested priors.

What would prove the OpenAI EU Cyber Action Plan a mistake. The clearest failure mode is misuse from inside the trusted access perimeter. If a vetted European partner — a security vendor, a national cyber agency, or a contractor inside a member state defense apparatus — uses GPT-5.5-Cyber to generate offensive capability that leaks, is repurposed by adversarial actors, or surfaces in an attack against European or allied infrastructure, the entire framing breaks. The vetting cannot be perfect at scale. The bigger the trusted access circle, the higher the probability of one insider failure. A single high-profile leak from inside the EU access ring would prove the safety-first read was correct.
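The claim that a larger access circle raises the odds of at least one insider failure can be made concrete with a standard probability identity. The sketch below assumes, purely for illustration, that each vetted member fails independently with the same small probability; neither assumption comes from the reporting, and the per-member risk value used here is a placeholder rather than an estimate.

```python
def p_any_insider_failure(per_member_risk: float, members: int) -> float:
    """P(at least one failure) = 1 - (1 - p)^n for n independent members."""
    if not 0.0 <= per_member_risk <= 1.0:
        raise ValueError("per_member_risk must be a probability in [0, 1]")
    if members < 0:
        raise ValueError("members must be non-negative")
    return 1.0 - (1.0 - per_member_risk) ** members

# Placeholder risk of 0.1 percent per member: an assumption, not a measured value.
ASSUMED_RISK = 0.001
for circle_size in (10, 100, 1000):
    probability = p_any_insider_failure(ASSUMED_RISK, circle_size)
    print(f"{circle_size:>5} members -> {probability:.3f}")
```

Under these assumptions the probability grows with every added member and approaches certainty as the circle widens, which is the shape of the safety-first argument. Real vetting is neither uniform nor independent, so the numbers only indicate direction.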

A secondary failure mode is regulatory backlash. If EU member states or the European Parliament conclude that the Commission moved too fast on the OpenAI plan, the AI Act enforcement timeline could snap back. The December 2027 high-risk delay is not locked in for the labs. It can be reversed legislatively or through enforcement guidance. OpenAI's plan is more exposed to this than Anthropic's because OpenAI now has the access deal to lose.

What would prove Anthropic's closed posture a mistake. The clearest failure mode is regulatory exclusion. If the Commission concludes that Anthropic's reluctance to share Mythos signals a lack of good-faith engagement, the bloc has multiple levers — AI Act compliance reviews, GDPR enforcement on Anthropic's data handling, public-sector procurement rules that exclude vendors without sovereign-grade access arrangements. The cost of being shut out of European enterprise procurement on AI defensive tooling is not theoretical.

A secondary failure mode is missed defense market share. The cyber defense market is large, growing, and consolidating around AI primitives in 2026. If OpenAI captures the European defender integration footprint over the next 12 months while Anthropic continues to scope Mythos access, the head start compounds. The same Cisco, CrowdStrike, and Palo Alto integrations that Anthropic would want for Mythos in 2027 will already be running on GPT-5.5-Cyber by then. The cost of being late to a partner network is rarely recoverable.

What we are watching next

Three things matter from here. First, the Anthropic-EU dialogue. The "different stage" framing leaves the door open. If Anthropic moves on Mythos EU access in the next 60 to 90 days, the optics gap closes fast and the OpenAI head start gets reframed as a first-mover lead rather than a structural advantage. If the dialogue stalls past Q3 2026, the gap solidifies into a procurement reality.

Second, the operational results of GPT-5.5-Cyber inside European defender workflows. Headline announcements do not convert to defensive value automatically. The benchmark margins are tight — 81.9 percent versus 81.8 percent on CyberGym. The marginal capability lift over base GPT-5.5 is small. The real test is whether the policy-ceiling lift translates to measurable improvement in time-to-detect, time-to-patch, and incident response throughput for vetted EU teams. If those numbers move, the program scales. If they do not, the political optics will not be enough to sustain it.

Third, the U.S. policy response. The Trump White House is studying FDA-style pre-release reviews. If those reviews materialize and apply differential weight to labs based on their international access posture — favoring labs that maintain U.S. policy alignment, penalizing labs that share more aggressively with foreign jurisdictions — OpenAI's EU plan could become a U.S. liability even as it is an EU asset. The labs will be reading those signals carefully through Q3.

Our take — strategic positioning, not moral victory

We researched the OpenAI EU Cyber Action Plan announcement across CNBC, OpenAI's own publications, Axios, Benzinga, and EdTech Innovation Hub. We tracked the Anthropic Mythos contrast across the Commission's public framing and Anthropic's published Responsible Scaling Policy. We observed the announcement timing against the EU AI Act delay, the Trump pre-release review proposal, and the Dragos Mexico water utility report. The frame that holds up across all of that material is positioning, not virtue.

OpenAI is making a calculated bet that the European defense market and the EU regulatory dialogue are worth more than the marginal additional risk of widening the GPT-5.5-Cyber access perimeter. Anthropic is making a calculated bet that the safety-first brand premium and the reduced misuse surface of a closed Mythos are worth more than the procurement and regulatory cost of being late to the EU. Both bets can win simultaneously over the next 24 months if the contested priors do not collide with a defining incident.

If a defining incident does land — a misuse leak from inside an OpenAI partner, or an offensive AI attack on European infrastructure that Mythos-class detection could have stopped — the priors collide hard. One lab's strategy becomes obviously correct in retrospect. The other lab pays a multi-year cost. Until that incident, the right read is that the EU has just become the most important AI policy theatre of 2026, and OpenAI is the first frontier lab to stake a flag in it. The strategic gap with Anthropic on European access is real. The framing as a moral contest is not.

Frequently Asked Questions

What is the OpenAI EU Cyber Action Plan?

The OpenAI EU Cyber Action Plan is the framework OpenAI announced on May 11, 2026 to grant vetted European cybersecurity teams, governments, businesses, and EU institutions access to GPT-5.5-Cyber, a defensive variant of GPT-5.5 tuned for penetration testing, vulnerability research, and malware analysis. The plan extends OpenAI's Trusted Access for Cyber program to European partners and names the EU AI Office as a participating institution.

What is GPT-5.5-Cyber and how does it differ from GPT-5.5?

GPT-5.5-Cyber is a fine-tuned variant of GPT-5.5 with policy guardrails calibrated for legitimate defensive cyber workflows including penetration testing, vulnerability identification, and malware reverse-engineering. On the CyberGym benchmark, GPT-5.5-Cyber scored 81.9 percent versus base GPT-5.5 at 81.8 percent, per EdTech Innovation Hub. The main change is the higher policy ceiling, not a large capability jump.

Why hasn't Anthropic shared Mythos with the EU?

Per CNBC's May 11, 2026 reporting, the European Commission characterized the Anthropic talks as "not yet at the same stage as the solution we have on the table from OpenAI." Anthropic's published posture treats Mythos as exceeding the capability threshold where broad access creates material misuse risk. The lab has held the model in a tightly vetted preview since its early April 2026 launch.

Who is George Osborne and why is he speaking for OpenAI on EU policy?

George Osborne is OpenAI's Head of OpenAI for Countries and the former UK Chancellor of the Exchequer. His role exists to give OpenAI sovereign-grade credibility in conversations with national governments and supranational bodies. His May 11 statement that the goal is to "democratize access to the defensive tools that trusted actors can use" is the political framing OpenAI chose for the announcement.

Which security vendors are partners in the EU Cyber Action Plan?

Per EdTech Innovation Hub's coverage, named partners and beneficiaries include Cisco, CrowdStrike, Palo Alto Networks, Oracle, Zscaler, Cloudflare, Akamai, Fortinet, Intel, Qualys, Rapid7, Tenable, Trail of Bits, SentinelOne, Okta, Netskope, Snyk, Gen Digital, and Semgrep. The list maps onto the existing rails of European corporate cyber defense procurement.

How does the EU Cyber Action Plan relate to the EU AI Act delays?

The plan lands in the same window the EU AI Act high-risk obligations have been pushed to December 2027 per the Commission's May 2026 rollback. The timing creates a political opening for cooperative AI labs to engage with European institutions on workable defensive AI frameworks without near-term high-risk enforcement weight.

Does GPT-5.5-Cyber access require Advanced Account Security?

Yes. Individual Trusted Access for Cyber members must enable Advanced Account Security by June 1, 2026. Organizations can attest to phishing-resistant single sign-on at the company level as an alternative. The gating is a precondition for accessing the model rather than an optional setting.

Is the Mexico water utility Claude attack relevant to the EU plan?

Yes. The Dragos report documented Claude generating 17,000 lines of malware in an AI-assisted attack on Mexico's third-largest water utility, compromising 195 million records. The incident is the strongest 2026 evidence that AI-assisted offensive capability is already in the field, which sharpens the case for defensive AI access on the EU side and complicates the closed-model case on the Anthropic side.

What is the EU Commission's official position on Anthropic Mythos?

Per CNBC's May 11, 2026 reporting, Commission spokesperson Thomas Regnier said the discussions with Anthropic are "not yet at the same stage as the solution we have on the table from OpenAI." The framing indicates ongoing engagement rather than a refusal, leaving the door open for an Anthropic-EU agreement in subsequent quarters.

Could the EU Cyber Action Plan backfire on OpenAI?

Yes. The most material failure modes are an insider misuse leak from inside the trusted access perimeter, or a regulatory snap-back if EU member states conclude the Commission moved too quickly. The plan widens OpenAI's access perimeter and therefore widens the surface area for both kinds of failure. OpenAI has more to lose on a European misuse incident than Anthropic does because OpenAI now has the access deal in place.

How does the plan affect the broader AI cyber alliance map?

The plan creates the first formalized sovereign-grade defensive AI access channel between a frontier lab and the EU. It raises the procurement floor for any competing lab seeking European enterprise traction, locks in vendor partner integrations around OpenAI's defensive surface, and shifts the safety-positioning optics by reframing Anthropic's closed posture from gold-standard caution to potential strategic isolation.

What signals would change the OpenAI versus Anthropic read over the next 90 days?

Three signals matter most. First, whether Anthropic moves on a Mythos EU access agreement in Q3 2026. Second, whether GPT-5.5-Cyber produces measurable defender throughput gains inside EU partner workflows. Third, whether the Trump White House pre-release review framework lands in a way that penalizes US labs for aggressive foreign access posture. Any one of these shifting could reframe the entire analysis.

Sources and further reading

- CNBC, May 11, 2026 — "OpenAI to give EU access to new cyber model but Anthropic still holding out on Mythos"

- OpenAI, May 7-11, 2026 — "Trusted access for the next era of cyber defense"

- EdTech Innovation Hub, May 11, 2026 — "OpenAI expands GPT-5.5 cyber defense access in Europe"

- Benzinga, May 11, 2026 — "OpenAI Shares Its Latest Cyber Model With EU, Anthropic Reportedly Holds Back Mythos"

- Help Net Security, May 8, 2026 — "OpenAI tunes GPT-5.5-Cyber for more permissive security workflows"

- Axios, May 7, 2026 — "OpenAI makes GPT-5.5 more widely available to cyber defenders"
