On May 12, 2026, Meta rebuilt its consumer AI app natively on Muse Spark, its closed-source frontier model from the Alexandr Wang superintelligence lab. Three product layers shipped together: a multi-agent Contemplating mode (a visible parallel-reasoning UI and Meta's answer to Gemini Deep Think and GPT-5 Pro), a Live AI camera that streams vision in real time, and a Marketplace shopping agent that books inside WhatsApp, Instagram, and Facebook. Meta's apps reach 3.4 billion daily users, and this is the first time a proprietary Meta model will sit in front of all of them at once.

What Shipped on May 12, 2026

We covered Muse Spark's closed-source launch on April 8 when the model first appeared with $14.3B in Scale AI training compute behind it, and we tracked Meta's open-source exit when Muse Spark scored 52 versus Llama 4 Maverick's 18. Both stories were about the model and the lab strategy. The May 12 release is the third act: the app the world will actually use.

The Meta AI app — the standalone consumer surface that competes with the ChatGPT app, Gemini app, and Claude.ai — now runs natively on Muse Spark instead of routing to a Llama backend. The rebuild ships three consumer-visible features at once:

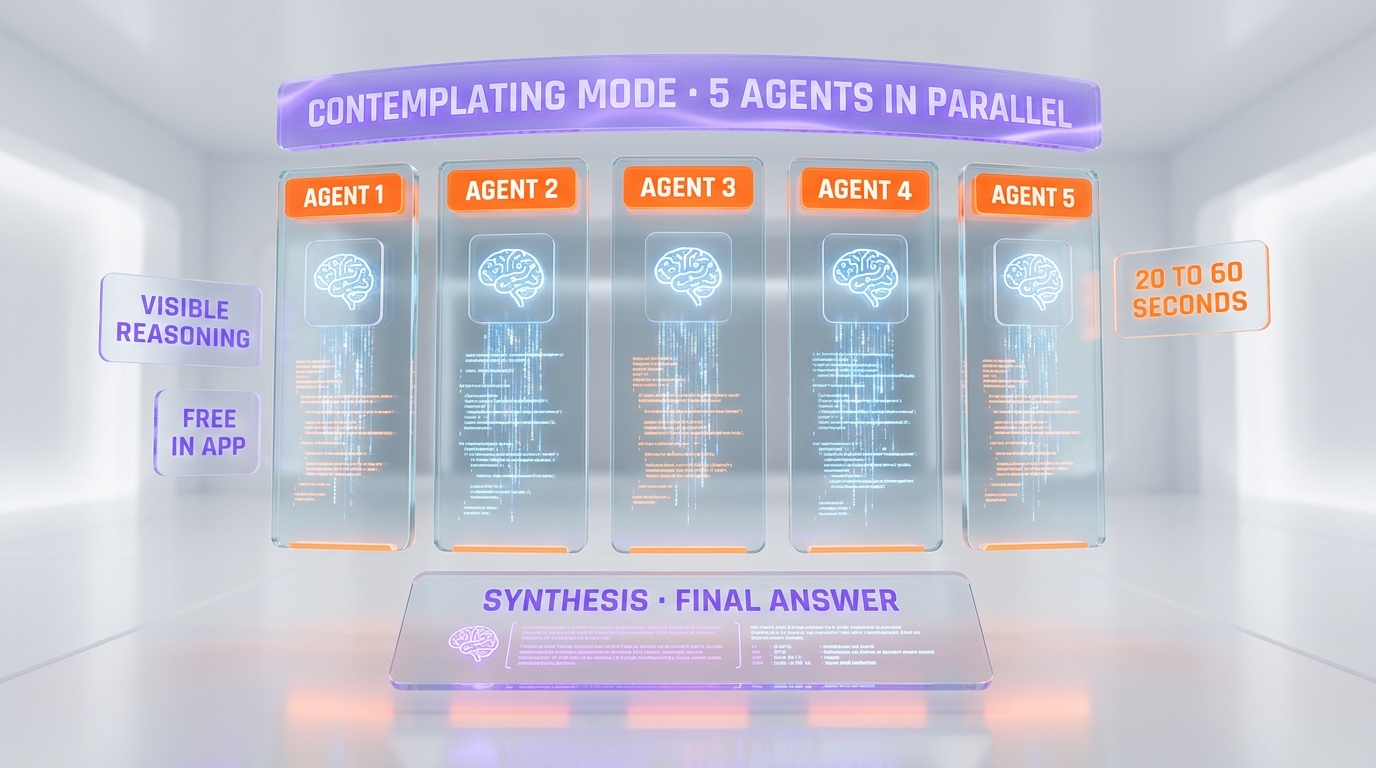

- Contemplating mode — a deliberation UI that streams parallel agent thoughts. Multiple Muse Spark agents run in parallel and the user watches their reasoning unfold before consensus.

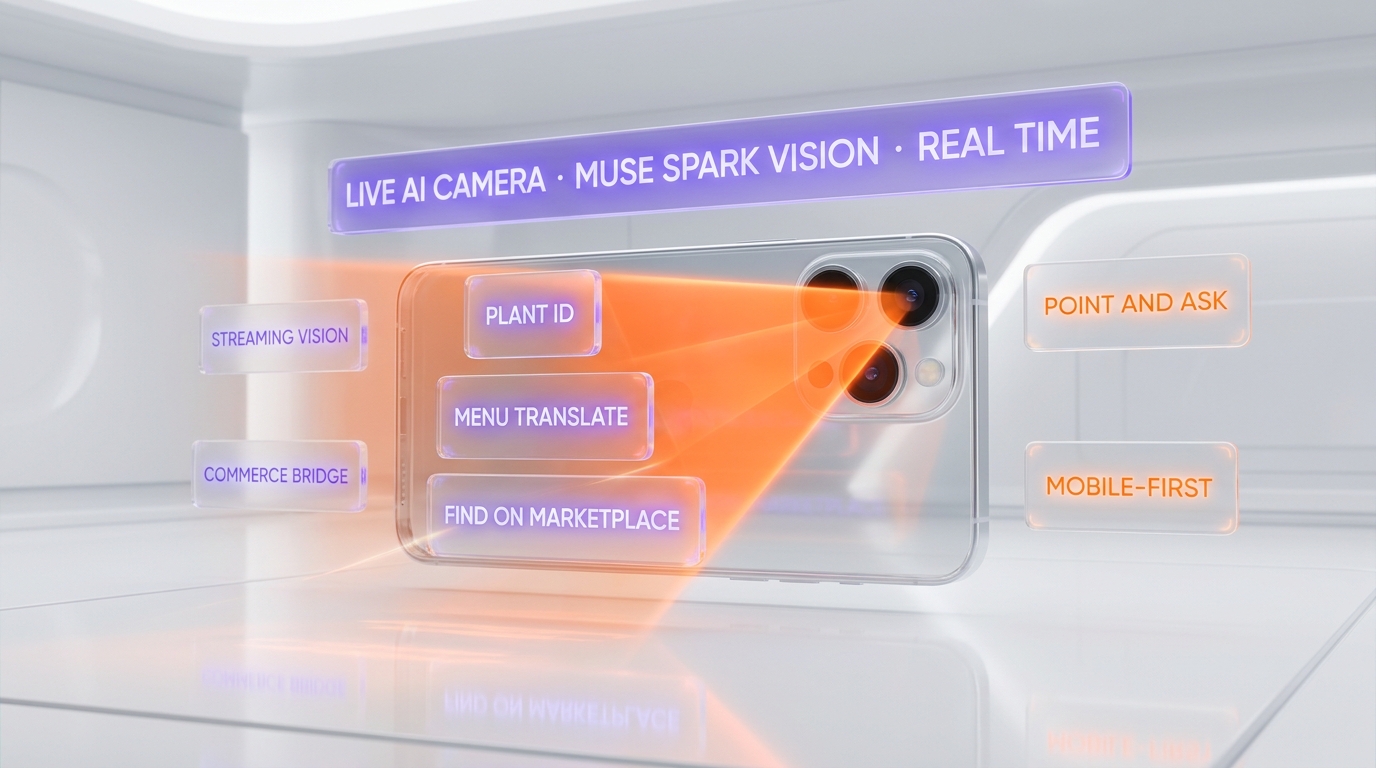

- Live AI camera — point your phone, the model sees through the lens and narrates, identifies, translates, or critiques in real time. Vision is no longer a separate "upload a photo" workflow.

- Marketplace shopping agent — an agent embedded across Facebook Marketplace, WhatsApp, and Instagram Shopping. It searches, negotiates, and books on the user's behalf inside the message thread.

According to 9to5Mac and Engadget, the rollout is global on iOS, Android, and web from May 12, with the Marketplace agent staged region by region as commerce partnerships are signed.

Contemplating Mode: Multi-Agent Reasoning Becomes Consumer UI

Contemplating mode is the headline feature for power users and the answer to a category Google created with Gemini Deep Think and OpenAI established with GPT-5 Pro. The pattern is identical at the model layer — spin up parallel reasoning paths, then merge — but the product layer is where Meta differentiates.

How Contemplating Mode Renders in the App

When a user toggles Contemplating mode, the response area splits into three to five live panels. Each panel shows a Muse Spark agent thinking through the prompt in real time, with timestamps, intermediate citations, and a confidence score. The agents do not see each other's drafts. After the parallel pass finishes, typically in 20 to 60 seconds, a separate synthesis agent merges the streams and writes the final answer below.
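The fan-out and merge pattern described above can be sketched in a few lines. This is a minimal illustration, not Meta's implementation: `run_agent`, `synthesize`, `Draft`, and the confidence formula are hypothetical stand-ins, and the "synthesis" here simply keeps the most confident draft.

```python
import asyncio
from dataclasses import dataclass


@dataclass
class Draft:
    agent_id: int
    text: str
    confidence: float  # hypothetical 0..1 score, like the one shown per panel


async def run_agent(agent_id: int, prompt: str) -> Draft:
    # Stand-in for a model call. Each agent receives only the prompt,
    # never another agent's draft.
    await asyncio.sleep(0)  # yield control, as a network call would
    return Draft(agent_id, f"agent {agent_id} on: {prompt}", 0.5 + 0.1 * agent_id)


def synthesize(drafts: list[Draft]) -> str:
    # Stand-in for the synthesis agent: keep the most confident draft.
    return max(drafts, key=lambda d: d.confidence).text


async def contemplate(prompt: str, n_agents: int = 3) -> str:
    if not 3 <= n_agents <= 5:
        raise ValueError("the article describes three to five panels")
    # Fan out: independent agents run concurrently.
    drafts = await asyncio.gather(*(run_agent(i, prompt) for i in range(n_agents)))
    # Fan in: one separate step merges the parallel streams.
    return synthesize(list(drafts))


answer = asyncio.run(contemplate("is this plant safe for cats?", n_agents=3))
```

A real synthesis step would presumably be another model call over all drafts; picking the top-confidence draft just keeps the sketch runnable.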

The deliberate UI choice is to show the reasoning, not hide it. Gemini Deep Think hides the chain of thought by default and exposes only the final answer plus an optional "thoughts" expander. GPT-5 Pro hides it entirely. Meta puts the parallel agents front and center as the product.

Why Meta Made It Visible Instead of Hidden

Two reasons stand out behind the decision. First, Meta's consumer audience is not paying $200 per month for hidden compute, so users need to see what they are getting for free to perceive the value. Second, the visible UI is a content engine: every Contemplating session is essentially a debate the user can screenshot and share, which is exactly the kind of content Meta optimizes for on Instagram Reels and the Facebook feed.

Live AI Camera: Vision as the Default Input

The Live AI camera is the second pillar, and the one that pushes Meta's mobile-first DNA into a frontier-model wrapper. Where ChatGPT's voice mode and Gemini Live still feel like attached features, Meta builds the camera as the primary input surface for the AI app on phones.

The Live AI camera is technically a Muse Spark multimodal endpoint with low-latency streaming, but the product framing matters more than the spec. Meta is the only consumer AI company that owns three vertical camera surfaces — Instagram, WhatsApp, and Ray-Ban Meta glasses — and the rebuilt app is the unified entry point for all three.

Point and Ask: The New Consumer Loop

The flow is point-and-ask. You open the Meta AI app, the camera is already on. You point at a restaurant menu in Italian, Muse Spark translates inline. You point at a plant, it identifies the species and tells you whether it is safe for cats. You point at your living room, it suggests three furniture layouts and offers to find each piece on Facebook Marketplace.

The last bridge — from "identify" to "find on Marketplace" — is where the Live AI camera stops being a clone of Gemini's vision capability and starts being a Meta-only product. Google can identify the chair. Meta can identify the chair, find a used one near you, and book the meetup in Messenger.

How the Camera Compares With Gemini Live and ChatGPT Vision

Gemini Live (Google) and ChatGPT Vision (OpenAI) both stream video and respond. Both have been live since 2024 and 2025 respectively. The Meta differentiator is not the streaming model — it is the integration with consumer transaction surfaces. Gemini Live cannot place an order. ChatGPT Vision cannot start a chat with a Marketplace seller. The Meta AI Live camera can do both, by design, from launch day.

This vertical integration also means Meta does not need to win on raw model quality to win the consumer use case. Muse Spark only needs to be good enough to identify the chair — Meta's distribution does the rest.

Marketplace Shopping Agent: Commerce Inside the Message Thread

The Marketplace shopping agent is the most strategically important feature in the May 12 release because it is the first time a frontier-model agent ships across WhatsApp, Instagram, and Facebook Marketplace simultaneously, with a transactional layer baked in.

What the Agent Can Do on Day One

The agent operates as a conversational layer over Meta's existing commerce graph. A user types "find me a used MacBook Air under $700 within 10 miles" into WhatsApp. The agent queries Facebook Marketplace listings, filters by location and price, and returns a ranked list inside the chat. The user replies "ask the seller of the second one if they can do $650." The agent opens a Messenger thread with the seller and negotiates.
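The filter-and-rank step in that example can be sketched as follows. This is an illustration under stated assumptions: the `Listing` fields, the keyword match, and the cheapest-first ordering are hypothetical, since Meta has not published how the agent ranks results.

```python
from dataclasses import dataclass


@dataclass
class Listing:
    title: str
    price_usd: float
    distance_miles: float


def rank_listings(listings, query, max_price, max_miles):
    """Keep listings that match every query word, cost less than max_price,
    and sit within max_miles; return them cheapest first."""
    words = query.lower().split()
    matches = [
        item for item in listings
        if all(word in item.title.lower() for word in words)
        and item.price_usd < max_price          # "under $700"
        and item.distance_miles <= max_miles    # "within 10 miles"
    ]
    # Hypothetical ranking: price first, then distance as a tiebreaker.
    return sorted(matches, key=lambda item: (item.price_usd, item.distance_miles))


listings = [
    Listing("MacBook Air M1", 680, 4.0),
    Listing("MacBook Air 2019", 550, 12.0),   # too far away
    Listing("MacBook Pro", 650, 2.0),         # wrong model
    Listing("Used MacBook Air", 620, 8.5),
]
results = rank_listings(listings, "macbook air", max_price=700, max_miles=10)
```

The user's follow-up ("the second one") then resolves to an index into `results`, which is why a stable, explicit ordering matters in a chat-based flow.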

This is not a hypothetical. It is the demo Meta showed press on May 12, and the rollout is staged across WhatsApp Business API customers, Instagram Shopping merchants, and Facebook Marketplace listings sequentially.

Why This Is the First Real Consumer Agent at Scale

Anthropic ships agents for enterprises. OpenAI ships ChatGPT Agent for paying users. Google ships agent features through Workspace. Meta is the first to put an agent in the hands of a multi-billion-user free consumer audience with native transactional plumbing. The Marketplace shopping agent does not need a new app, a new login, or a new payment surface — it lives inside conversations people are already having.

This is also the moment Meta's investment in WhatsApp Business API monetization comes into focus. Meta has spent five years convincing merchants to do customer service inside WhatsApp threads. The shopping agent is the consumer-side counterpart — and Meta now owns both sides of the commerce conversation.

3.4 Billion Users: The Distribution Moment for Muse Spark

Until May 12, Muse Spark was a closed-source frontier model with benchmarks but no real consumer footprint. The rebuilt Meta AI app changes that overnight. Meta's family-of-apps daily active users sit above 3.4 billion as of Q1 2026 earnings. If 10 percent of those users open the Meta AI app weekly, that is roughly 340 million weekly users, still well short of ChatGPT's roughly 800 million. Matching ChatGPT would take about a quarter of the base, a share no standalone competitor has a comparable path to.
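The reach arithmetic can be checked directly from the figures quoted in this article: 3.4 billion daily users for Meta's family of apps and roughly 800 million weekly users for ChatGPT. A small sketch, with illustrative function names:

```python
FAMILY_DAU = 3.4e9    # Meta family-of-apps daily users, as quoted in this article
CHATGPT_WAU = 800e6   # ChatGPT weekly users, as quoted in this article


def weekly_users(adoption_rate: float) -> float:
    """Weekly Meta AI users if this share of the family-of-apps base opens the app."""
    return FAMILY_DAU * adoption_rate


def breakeven_rate(target_users: float) -> float:
    """Share of the base needed to reach a given number of weekly users."""
    return target_users / FAMILY_DAU


ten_percent = round(weekly_users(0.10))
needed = breakeven_rate(CHATGPT_WAU)
```

At 10 percent adoption this gives about 340 million weekly users, and matching ChatGPT's quoted figure would take a little under 24 percent of the base.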

Why Distribution Matters More Than Benchmarks

The post-2025 LLM market has converged on quality. The top frontier models are within a few percentage points of each other on most public benchmarks. The differentiator is no longer "which model is smarter" but "which model is in front of users when they need an answer." Meta does not need Muse Spark to beat GPT-5 or Gemini 3.1 on MMLU. It needs Muse Spark to be the model the Instagram user reaches for when they want to identify a sunset filter, and the WhatsApp user reaches for when they want to find a used bike.

What 3.4 Billion Users Means for Training Data

The second-order effect is data. Every Contemplating session, every Live camera frame, every Marketplace negotiation generates feedback Meta can use to fine-tune Muse Spark. OpenAI has 800 million weekly users feeding ChatGPT. Anthropic has Claude.ai usage in the tens of millions. Meta is bringing a feedback loop with a potential reach of 3.4 billion users online in a single release. The data flywheel implication is significant, but it is also the reason the consumer-privacy debate around the rebuild will intensify in the weeks ahead.

Meta vs Gemini Deep Think vs GPT-5 Pro: The Reasoning UI War

Contemplating mode lands in a category that already has two strong entries. Gemini Deep Think on the Pro tier offers parallel reasoning with the agents hidden. GPT-5 Pro offers hidden reasoning with extended compute. Meta's contribution is not a new reasoning technique — it is a new product framing for the same underlying primitive.

Gemini Deep Think: Hidden Reasoning, Priced at Google One AI Premium

Deep Think is the reasoning tier of Gemini 3, available to Google One AI Premium subscribers. The pattern hides the parallel thinking behind a single visible answer. It is the right choice for Google's "ambient assistant" framing: show the answer, hide the work.

GPT-5 Pro: Pure Hidden Reasoning, $200 per Month

GPT-5 Pro is the $200 per month tier from OpenAI. Reasoning is hidden entirely. The framing is "trust the answer because we spent more compute." That works for prosumers and developers, but it does not work for the 3.4 billion casual users Meta is now serving — they need to see why an answer is the answer.

Meta Contemplating Mode: Visible Reasoning, Free

Meta puts the parallel agents on screen and gives the feature away for free in the consumer app. That is the central product distinction. The three approaches are all valid, but they target different audiences. Meta is the only one optimizing for the casual mobile user who wants to feel the AI working.

The App Rebuild: What Changed Under the Hood

The May 12 release is described by 9to5Mac and Engadget as a rebuild, not an update. That word choice is intentional. The app's previous backend ran on a Llama-based stack with bolt-on multimodal endpoints. The new app routes natively to Muse Spark inference clusters, with a unified context window across text, vision, and the Marketplace agent's tool surface.

Muse Spark Becomes the Default Router

Every prompt — text, voice, camera frame, or agent command — now hits a Muse Spark routing layer that decides which capability to invoke. There is no model picker. There is no toggle between "fast" and "smart." The app makes the choice based on the query, and Contemplating mode is the user-toggleable override when the user wants deliberate reasoning.
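A routing layer of this kind can be sketched as a single dispatch function. Everything here is hypothetical: the `Capability` names, the `route` signature, and the keyword heuristic are illustrative assumptions, and a production router would presumably be a learned classifier rather than string matching.

```python
from enum import Enum


class Capability(Enum):
    TEXT = "text"
    VISION = "vision"
    MARKETPLACE_AGENT = "marketplace_agent"
    CONTEMPLATING = "contemplating"


def route(prompt: str, has_camera_frame: bool = False,
          contemplating: bool = False) -> Capability:
    # The Contemplating toggle is the only manual override the article describes.
    if contemplating:
        return Capability.CONTEMPLATING
    if has_camera_frame:
        return Capability.VISION
    # Hypothetical keyword heuristic standing in for a learned intent classifier.
    shopping_phrases = ("buy", "find me", "under $", "seller")
    if any(phrase in prompt.lower() for phrase in shopping_phrases):
        return Capability.MARKETPLACE_AGENT
    return Capability.TEXT
```

For example, `route("find me a used bike")` selects the Marketplace agent, while the same prompt with `contemplating=True` goes to Contemplating mode because the toggle is checked first.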

The End of Llama in the Meta AI App

This is the operational signal that the open-source pivot we covered in April is now production reality on the consumer side. Llama is no longer the inference engine behind the consumer-facing Meta AI app. It still exists in the open-source community, in third-party products, and in Meta's developer-facing surfaces, but the consumer app no longer touches it. Muse Spark is now the engine for everything Meta ships to end users with the "Meta AI" label.

Privacy and Data: The Questions This Release Forces

A consumer agent that lives inside WhatsApp threads and reads Marketplace listings creates a unique data surface. Meta says the Marketplace agent respects the same end-to-end encryption rules as WhatsApp chats: it runs on-device for purely conversational queries and server-side only when commerce APIs are involved. The exact split has not been disclosed in detail.

How the Agent Handles End-to-End Encryption on WhatsApp

For purely conversational queries inside WhatsApp threads where end-to-end encryption is preserved, Meta routes inference through on-device Muse Spark distillates. When the agent needs to query Marketplace or open a Messenger negotiation thread with a seller, the request enters Meta's server infrastructure with explicit user consent shown in the chat surface. That is the model Meta describes — the exact technical boundary is something the company will need to document more thoroughly as regulatory scrutiny ramps up.
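The split Meta describes amounts to a small decision rule. The sketch below is one reading of that description, not a documented design: the `AgentRequest` fields and the three return values are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class AgentRequest:
    text: str
    needs_marketplace: bool = False    # must query Marketplace listings
    needs_seller_thread: bool = False  # must open a Messenger thread with a seller
    user_consented: bool = False       # user accepted the in-chat consent prompt


def choose_execution(req: AgentRequest) -> str:
    """Return where a request runs under the split model Meta describes."""
    leaves_device = req.needs_marketplace or req.needs_seller_thread
    if not leaves_device:
        return "on_device"    # purely conversational: stays end-to-end encrypted
    if not req.user_consented:
        return "ask_consent"  # show the consent prompt before anything is sent
    return "server"           # commerce request enters Meta's server infrastructure
```

The key property is that consent is checked before any server call, so a commerce request without consent never leaves the device.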

The Pattern Emerging Across Meta AI Products

The privacy framing here connects to a broader pattern across Meta's AI products, internal and consumer alike. The Model Capability Initiative we covered in April logs every employee keystroke and mouse click to train AI agents on internal workflows. The May 12 consumer release routes 3.4 billion users through Muse Spark with a fresh data flywheel. Meta is building two parallel data engines, one internal and one consumer, and both feed the same model family.

What This Means for Anthropic, OpenAI, Google, and Perplexity

The competitive implications are unevenly distributed. The four companies feel this release differently.

OpenAI: The Consumer Monopoly Just Got a Second Incumbent

ChatGPT has been the default consumer AI app since November 2022. Meta is the first competitor with comparable distribution. The numbers tell the story — ChatGPT has roughly 800 million weekly active users on its own surfaces, and Meta now has the potential to surface Muse Spark to 3.4 billion users across the family of apps. Even modest engagement turns Meta into the second consumer AI brand overnight. The OpenAI response will likely come through deeper integration into iOS via the rumored iOS 27 Extensions framework and a pricing-tier squeeze on the free ChatGPT plan.

Google: The Deep Think Edge Just Became a Product Feature Everyone Has

Deep Think was Google's premium reasoning tier, the differentiator that justified Google One AI Premium pricing. Meta just shipped the same primitive for free with a more compelling UI. Google still has the model quality argument and the Android distribution argument, but it can no longer frame parallel multi-agent reasoning as a premium-only differentiator.

Anthropic and Perplexity: Different Segments, Less Direct Pressure

Anthropic competes on enterprise, developer trust, and Claude.ai consumer reach. Perplexity competes on AI search. Neither sits in front of Meta's exact audience — casual mobile users who want a free AI inside the apps they already use. The pressure on Anthropic and Perplexity comes indirectly, through user mindshare and the second-order effect on enterprise procurement once the Meta AI brand becomes a household name.

What the Rebuild Tells Us About Meta's Product Strategy

The May 12 release crystallizes a product strategy Meta has been hinting at since its Scale AI deal. Three layers stack visibly.

Layer 1 — Frontier Model: Muse Spark, Closed-Source

Muse Spark is the engine. Closed weights, no open release planned, Alexandr Wang's superintelligence lab owns the roadmap. The April launch coverage documented the $14.3B Scale AI training commitment behind the model and the closed-weights strategy. The May 12 release is operational confirmation that this is the only consumer-facing model now.

Layer 2 — Consumer Surface: Meta AI App and Family of Apps

The standalone Meta AI app is one entry point. The integrated AI inside WhatsApp, Instagram, and Facebook is another. The Ray-Ban Meta glasses are a third. All three surfaces will run on Muse Spark, and Contemplating mode plus Live camera plus Marketplace agent will progressively roll out across all of them.

Layer 3 — Commerce and Distribution: The Data Flywheel

The third layer is the one no other frontier lab has — direct commerce integration. Meta is the only AI company that owns a peer-to-peer marketplace, a payments rail in WhatsApp Business, and a shopping graph in Instagram. The Marketplace shopping agent is the product expression of that integrated stack.

Open Questions and What to Watch Next

A few important things are not clear yet from the May 12 announcement and reporting.

Will Meta Charge for Contemplating Mode Eventually?

Contemplating mode is free on day one. The compute cost of running three to five parallel agents plus a synthesis pass per prompt is non-trivial. Meta has not committed to keeping it free permanently. The most likely trajectory is a freemium model where Contemplating sessions are rate-limited for free users and unlimited for a paid tier that Meta has not yet announced.

How Will the Marketplace Agent Handle Disputes and Fraud?

The agent negotiates and books on the user's behalf. When something goes wrong — a no-show seller, an item misrepresented in the listing — the liability question is genuinely new. Meta has not published the dispute process. Expect this to be the operational issue that surfaces fastest in the first quarter of usage.

When Will the Ray-Ban Meta Glasses Get Muse Spark?

The Ray-Ban Meta glasses currently run an earlier multimodal stack. The May 12 release does not mention glasses. The natural roadmap is the glasses get Muse Spark in the next quarterly update — and that is where the Live AI camera becomes truly differentiated, because a glasses-form-factor camera changes the consumer use case from "I pull out my phone" to "I just look at it." The competitive position there is unique to Meta.

The Bottom Line: Meta Just Became a Frontier Model Consumer Brand

For the first time in Meta's history, the company has a foundation model people will name. ChatGPT is OpenAI's brand. Gemini is Google's. Claude is Anthropic's. Until May 12, Meta's model identity was Llama — a developer brand, not a consumer brand. Muse Spark, inside the rebuilt Meta AI app, is the first Meta model the average WhatsApp user will use, recognize, and possibly refer to by name.

The model layer is closed. The lab strategy is closed. The product layer is now in front of 3.4 billion people. The next 90 days will tell us how many of them actually use it.

Frequently Asked Questions

What did Meta announce on May 12, 2026?

Meta rebuilt its consumer Meta AI app natively on Muse Spark, its closed-source frontier model. The rebuild shipped three new features simultaneously: Contemplating mode (multi-agent visible parallel reasoning UI), Live AI camera (real-time vision streaming), and a Marketplace shopping agent that operates inside WhatsApp, Instagram, and Facebook Marketplace.

What is Contemplating mode in the Meta AI app?

Contemplating mode is Meta's multi-agent reasoning UI. When the user toggles it on, three to five Muse Spark agents reason in parallel on the same prompt, each visible in its own live panel with timestamps and confidence scores. After 20 to 60 seconds, a synthesis agent merges them into a final answer. It is Meta's answer to Gemini Deep Think and GPT-5 Pro, with the difference that Meta shows the parallel reasoning instead of hiding it.

How is Contemplating mode different from Gemini Deep Think and GPT-5 Pro?

All three are parallel-reasoning features at the model layer. The product layer differs significantly. Gemini Deep Think hides the parallel thinking and shows only the final answer by default. GPT-5 Pro hides reasoning entirely. Meta Contemplating mode makes the parallel agents the central visible UI. Meta's version is also free in the consumer app, while Gemini Deep Think requires Google One AI Premium and GPT-5 Pro requires the $200 per month ChatGPT Pro tier.

What is the Live AI camera in the Meta AI app?

The Live AI camera is a real-time vision feature. Users open the camera inside the Meta AI app and Muse Spark streams a continuous interpretation of what the lens sees — translating menus, identifying plants and objects, suggesting furniture layouts, and bridging directly into Marketplace listings when something can be purchased. It is similar to Gemini Live and ChatGPT Vision in capability, but integrated with Meta's commerce surfaces.

How does the Marketplace shopping agent work?

The Marketplace shopping agent runs inside WhatsApp, Instagram Shopping, and Facebook Marketplace. The user describes what they want in natural language. The agent queries Marketplace listings, filters by criteria, and returns ranked results in the chat. The user can then ask the agent to negotiate with the seller — the agent opens a Messenger thread on the user's behalf and handles the back-and-forth before confirming the deal.

How many users will the rebuilt Meta AI app reach?

Meta's family of apps had 3.4 billion daily active users as of the Q1 2026 earnings report. The rebuilt Meta AI app is available globally on iOS, Android, and web from May 12, 2026. The integrated Muse Spark surfaces inside WhatsApp, Instagram, and Facebook reach the full family-of-apps user base. The Marketplace shopping agent is rolling out region by region as commerce partnerships are signed.

Is the Meta AI app still running on Llama?

No. The May 12, 2026 rebuild replaces the Llama-based backend with native Muse Spark inference. Llama still exists in the open-source ecosystem and in Meta's developer-facing surfaces, but the consumer Meta AI app — and the AI features inside WhatsApp, Instagram, and Facebook — now route to Muse Spark exclusively. We covered the strategic shift in our April analysis of Meta abandoning Llama open-source.

How does the Marketplace agent handle WhatsApp end-to-end encryption?

Meta describes a split model. Purely conversational queries inside WhatsApp run through on-device Muse Spark distillates, preserving end-to-end encryption. When the agent needs to query Facebook Marketplace listings or open a Messenger thread with a seller, the request leaves end-to-end encryption with explicit user consent shown in the chat surface. The exact technical boundary has not been documented in full detail.

Is Contemplating mode free or paid?

Contemplating mode is free in the Meta AI app on day one of the May 12, 2026 release. Meta has not committed to keeping it free permanently. Given the compute cost of running multiple Muse Spark agents in parallel per prompt, a freemium model with rate limits for free users and unlimited sessions for a paid tier is the most likely future direction, though Meta has not announced any pricing.

What does this release mean for ChatGPT and OpenAI?

ChatGPT held a near-monopoly position as the default consumer AI app from November 2022 through May 2026. Meta is the first competitor with comparable consumer distribution — 3.4 billion family-of-apps users versus roughly 800 million ChatGPT weekly active users. OpenAI's response is expected to come through deeper iOS integration, especially via the rumored iOS 27 Extensions framework, and through pricing adjustments on the free ChatGPT tier.

Will the Ray-Ban Meta glasses get Muse Spark and the Live AI camera?

The May 12, 2026 release covers the phone and web app surfaces but does not mention the Ray-Ban Meta glasses. The natural roadmap puts Muse Spark on the glasses in a future quarterly update. That is where the Live AI camera changes from "pull out your phone" to "just look at it" — a use case unique to Meta among frontier-model consumer brands, because no other AI lab ships glasses hardware at scale.

What should developers and businesses do now?

WhatsApp Business API customers and Instagram Shopping merchants should expect Marketplace agent integration to roll out across their accounts in the coming months and should review their listing data quality, since the agent ranks results algorithmically. App developers building consumer AI products on iOS and Android should treat the May 12 release as proof that the consumer AI default is now contested — distribution inside existing social and messaging apps is a category Meta has just claimed.