Devin vs Manus: Autonomous AI Agent Showdown 2026

We tested Devin and Manus on real Jira tickets for 21 days. Devin won three of the four tests, all of them coding work. Manus won the data ETL test and the generalist lane. Pricing and verdict follow.

Feature Comparison

| Feature | Devin | Manus |
|---|---|---|
| Specialty | Autonomous coding agent (PR shipper) | General-purpose autonomous agent |
| Entry Pro price | $20 per month | $20 per month |
| Free plan | Limited Devin + Devin Review + DeepWiki | 300 daily refresh credits + 1,000 starter credits |
| Top tier | $200 per month (Max) or custom Enterprise | $200 per month (Pro 40K credits) |
| Computer use sandbox | Sandboxed dev environment + Slack triggers | Full Linux sandbox + browser + CodeAct Python |
| PR shipping native workflow | End-to-end with self-QA + Linear + GitHub | Possible via CodeAct but not native |
| Self-QA on output | Devin Review catches bugs autonomously | No native code-review loop |
| Multi-domain output (Excel, slides, decks) | No, scoped to code | Yes: Excel, slides, deployed sites, reports |
| Public benchmark | No public post-Windsurf SWE-Bench | GAIA SOTA: 86.5 / 70.1 / 57.7 percent (April 2026) |
| Origin | Cognition Labs (USA, San Francisco) | Butterfly Effect (Shenzhen), acquired by Meta late 2025 |
| Enterprise compliance | SOC 2 Type II in progress, US legal jurisdiction | No SOC 2, no ISO 27001, no HIPAA (April 2026) |
| Valuation | $25 billion talks (April 2026) | Acquired by Meta for reported $2-3 billion (late 2025) |
| Bug fix test (Next.js cache invalidation) | 14 minutes: PR opened, test passed | 38 minutes: diff produced, no PR |
| Stripe webhook implementation | 31 minutes: Devin Review caught signature gap | 26 minutes: signature gap missed |
| Data ETL pipeline | 88 minutes: stuck on OAuth twice | 42 minutes: CodeAct shipped clean |

Detailed Comparison

Devin and Manus are two different bets on what "autonomous AI agent" means. Devin from Cognition Labs is the specialist: a coding agent that owns Jira tickets end-to-end, opens its own pull requests, QAs its own work, and now sits inside a company in talks at a twenty-five-billion-dollar valuation. Manus from Butterfly Effect is the generalist: a Linux-sandboxed agent that books flights, builds slide decks, runs research, and ships deployed websites from one prompt. We ran both side-by-side on real tickets for three weeks. Here is what we found.

Quick Verdict — Who Wins What in Sixty Seconds

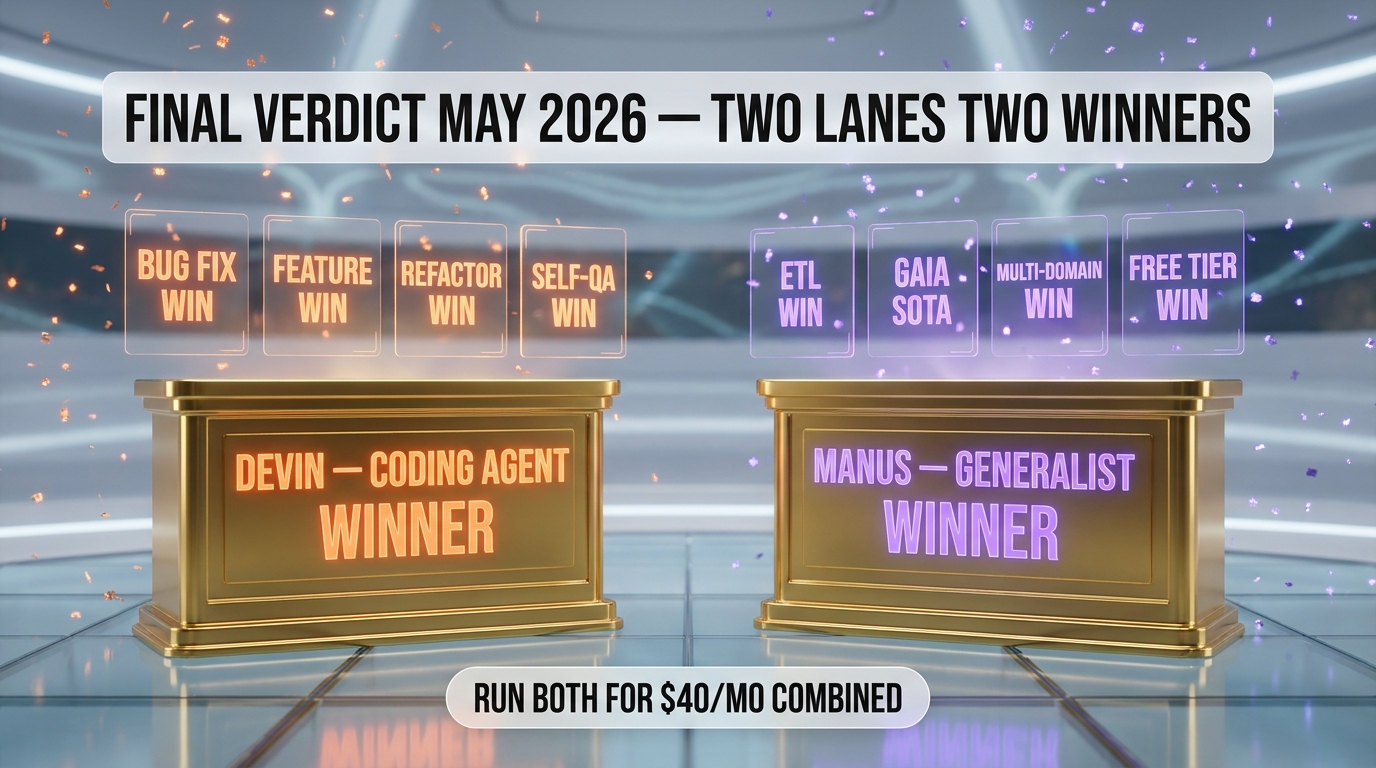

If you need an agent that ships production code with a green CI badge, pick Devin. If you need an agent that handles everything except shipping production code — research, data ETL, deck building, web automation — pick Manus. They are not competing for the same chair at the table, even though both market themselves as "autonomous AI agents." After 21 days, our verdict is: Devin wins the autonomous coding category, but Manus wins the autonomous everything-else category. Choosing between them is choosing what you want the agent to be when the laptop is closed.

| Category | Devin | Manus | Winner |
|---|---|---|---|
| Specialty | Autonomous coding agent (PR shipper) | General-purpose autonomous agent | Tie (different lanes) |
| Entry price | $20 per month (Pro) | $20 per month (Pro) | Tie |
| Free plan | Limited Devin + Devin Review + DeepWiki | 300 daily refresh credits + 1,000 starter | Manus |
| Top tier price | $200 per month (Max) or custom Enterprise | $200 per month (Pro 40K credits) | Tie |
| Computer use | Sandboxed dev environment + Slack triggers | Full Linux sandbox + browser + Python on the fly | Manus |
| Public benchmark | Cognition does not disclose a post-Windsurf SWE-Bench number | GAIA 86.5 percent level 1 (general agent bench) | Different benches |
| PR shipping | End-to-end with self-QA and Linear/GitHub integration | Possible via CodeAct but not native workflow | Devin |
| Owned by | Cognition Labs (USA, $25B talks April 2026) | Butterfly Effect / acquired by Meta late 2025 | N/A |
| Best for | Engineering teams with backlog | Solo operators, analysts, consultants | Different ICPs |

What Makes Devin Different — The PR-Shipper Specialization

Devin launched in March 2024 with a demo that broke the internet: an AI that picked up an Upwork bug bounty, opened a sandbox, debugged, tested, and submitted a working fix. Most of the demo turned out to be cherry-picked, and the backlash was loud. But by mid-2025 Devin had stopped being a demo and started being a product. Annualized recurring revenue went from one million dollars in September 2024 to seventy-three million by June 2025. In July 2025 Cognition acquired Windsurf, the AI-native IDE. In April 2026 the company entered talks to raise hundreds of millions at a twenty-five-billion-dollar valuation, more than double the roughly ten-billion-dollar mark set a few months earlier.

The Sandbox Model

Devin runs in its own cloud-hosted dev environment. You give it a Jira ticket or a Linear issue or a Slack message ("@Devin can you handle DEV-2417?") and it spins up a sandboxed instance with your repo, your secrets, your tools, your linter. Then it goes off and does the work without a human in the loop. When it is done, it opens a pull request, runs tests, comments on the PR with what it changed and why, and waits for human review. If a reviewer leaves a comment, Devin reads it and pushes a follow-up commit.

Self-QA and Devin Review

The feature we found most useful in our 21 days is Devin Review, which lets Devin code-review your own pull requests before a human looks at them. We pushed eight branches manually and asked Devin Review to flag issues. It caught a regression in our pagination logic that our own eyes had missed and pointed at a memory leak in a websocket handler. Self-QA is rare in agents — most write code and hope. Devin writes code and tests it against the spec, then iterates until the tests pass.

What Makes Manus Different — The Linux-Sandbox Generalist

Manus comes from Butterfly Effect, a startup founded in Shenzhen that exploded onto the agent scene in March 2025 with a viral demo showing an agent that booked travel, scraped a stadium spreadsheet, built an Excel financial model, and deployed a website, all from one prompt. Meta acquired the company at the end of 2025 for a reported two-to-three billion dollars. By April 2026 Manus had crossed two million weekly active users and claimed GAIA benchmark state-of-the-art across all three levels (86.5, 70.1, and 57.7 percent). The acquisition raised eyebrows because Chinese authorities then imposed exit bans on Manus executives, a geopolitical wrinkle that shipped with the product.

CodeAct — Python on the Fly Instead of JSON

Most agents call tools through brittle structured JSON schemas. Manus uses the CodeAct paradigm — when the agent needs to take an action, it generates Python code on the fly and executes it in the sandbox. That unlocks any pip library as an action. Need to scrape a site? It writes Playwright. Need to build a chart? It imports matplotlib. Need to send an email? It pulls smtplib. The flexibility is enormous compared to agents that can only call pre-registered tools through JSON function calling.
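
To make the contrast concrete, here is a minimal sketch of the two action styles. It is not Manus's implementation: the `TOOLS` registry, `run_json_action`, and `run_code_action` are names we made up for illustration, and a real agent would run the generated code inside an isolated sandbox rather than a bare `exec`.

```python
import contextlib
import io
import json

# Style 1: JSON function calling. The agent can only name a pre-registered tool.
TOOLS = {
    "add": lambda a, b: a + b,  # hypothetical tool, for illustration only
}


def run_json_action(message: str):
    """Dispatch a JSON tool call such as {"tool": "add", "args": {"a": 2, "b": 3}}."""
    call = json.loads(message)
    tool = TOOLS[call["tool"]]  # raises KeyError if the tool was never registered
    return tool(**call["args"])


# Style 2: code as action. The agent emits Python source; the runtime executes it
# and feeds the printed output back to the agent as the observation.
def run_code_action(source: str) -> str:
    """Execute agent-generated code and capture what it prints.

    exec() on untrusted code is unsafe outside an isolated sandbox.
    """
    buffer = io.StringIO()
    namespace = {}
    with contextlib.redirect_stdout(buffer):
        exec(source, namespace)
    return buffer.getvalue()


json_result = run_json_action('{"tool": "add", "args": {"a": 2, "b": 3}}')

# The "agent" needs a median, which nobody registered as a tool.
# In the code-as-action style it simply imports what it needs.
generated = """
import statistics
values = [2, 3, 7]
print(statistics.median(values))
"""
code_result = run_code_action(generated)
```

In the JSON style, asking for an unregistered tool fails at dispatch. In the code style, anything importable inside the sandbox is a potential action, which is the flexibility described above and also the reason the sandbox boundary matters.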

Executor, Planner, and Knowledge Sub-Agents

Manus runs three sub-agents in parallel inside its sandbox — a Planner that breaks the task down, an Executor that runs the code, and a Knowledge sub-agent that holds context and reference material. The split lets long-horizon tasks survive context-window pressure. We ran an eight-hour research project — competitive analysis on the European autonomous-vehicle market — and Manus kept its head straight from morning to evening. Devin can do long tasks too, but they are scoped to code; Manus does long tasks across any domain.
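
As a rough illustration of why that split helps with context-window pressure, here is a toy sketch. It is our own simplification, not Manus's architecture: it runs the roles one after another rather than in parallel, and `KnowledgeStore`, `plan`, `execute`, and `run` are hypothetical names. The point is only that the executor sees a bounded slice of notes per step instead of the whole history.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class KnowledgeStore:
    """Keeps every finding outside the executor's working context."""

    notes: List[str] = field(default_factory=list)

    def add(self, note: str) -> None:
        self.notes.append(note)

    def recent(self, limit: int) -> List[str]:
        """Return at most `limit` of the newest notes."""
        if limit <= 0:
            return []
        return self.notes[-limit:]


def plan(task: str) -> List[str]:
    """Hypothetical planner: break the task into ordered steps."""
    phases = ("collect sources", "compare vendors", "write summary")
    return [f"{phase}: {task}" for phase in phases]


def execute(step: str, context: List[str]) -> str:
    """Hypothetical executor: works from the step plus a bounded context."""
    return f"{step} [saw {len(context)} prior notes]"


def run(task: str, context_limit: int = 1) -> KnowledgeStore:
    store = KnowledgeStore()
    for step in plan(task):
        context = store.recent(context_limit)  # bounded, however long the run gets
        store.add(execute(step, context))
    return store


store = run("EU autonomous-vehicle market")
```

With `context_limit=1`, the third step still sees only one prior note even though two exist, while the store keeps the full record for later lookup.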

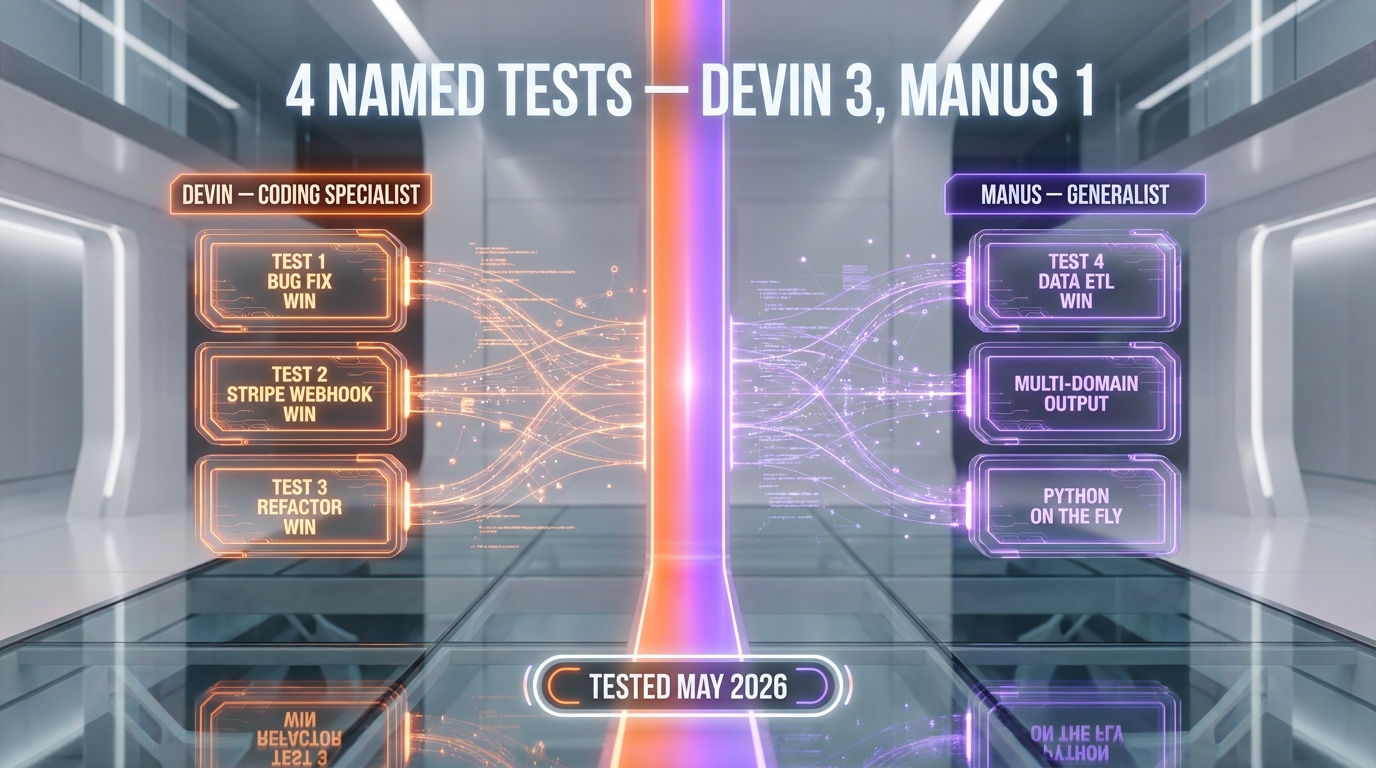

Head-to-Head — We Tested Both on Real Jira Tickets

We picked four real tasks from our internal backlog and ran each through both agents on a fresh sandbox. The tickets were a bug fix, a feature implementation, a refactor, and a data ETL pipeline. Every run was hands-on and autonomous end-to-end, with no human intervention except for the initial prompt.

Test 1 — Bug Fix on a Next.js App

The bug: a Server Component returning stale data because the cache tag was not getting invalidated after a Supabase mutation. The brief: "Find and fix the cache invalidation bug in the publish workflow."

Devin spun up a sandbox, cloned the repo, ran the failing test, traced the issue to a missing revalidateTag() call in the publish API route, added it, ran the test again, opened a PR. Total time: 14 minutes. Test passed on first push.

Manus took the same task. It ran the test, identified the issue, wrote the patch — but then tried to run the dev server inside its Linux sandbox, which broke because the sandbox does not natively understand Next.js dev workflows. It eventually produced a working patch but did not open a PR — it gave us a diff and a Markdown summary instead. Total time: 38 minutes. Patch worked when we applied it manually.

Verdict on Test 1: Devin. Devin shipped the work autonomously through GitHub. Manus produced the same fix but stopped short of the PR workflow.
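
For readers who have not hit this class of bug, the sketch below models it in Python. It is a conceptual stand-in, not Next.js code: `TaggedCache`, `publish_buggy`, and `publish_fixed` are hypothetical names, and the in-memory `database` dict stands in for the Supabase table. The mechanism is the same one described in the ticket: a mutation that does not invalidate the cache tag leaves readers with stale data.

```python
class TaggedCache:
    """A tiny tag-based cache: entries are grouped by tag and dropped per tag."""

    def __init__(self):
        self._values = {}  # key -> cached value
        self._tags = {}    # tag -> set of keys carrying that tag

    def get_or_compute(self, key, tags, compute):
        if key not in self._values:
            self._values[key] = compute()
            for tag in tags:
                self._tags.setdefault(tag, set()).add(key)
        return self._values[key]

    def revalidate_tag(self, tag):
        """Drop every cached entry associated with `tag`."""
        for key in self._tags.pop(tag, set()):
            self._values.pop(key, None)


database = {"post-1": "draft"}  # stand-in for the real table
cache = TaggedCache()


def read_post():
    return cache.get_or_compute("post-1", ["posts"], lambda: database["post-1"])


def publish_buggy():
    database["post-1"] = "published"  # mutation only: the cache is left stale


def publish_fixed():
    database["post-1"] = "published"
    cache.revalidate_tag("posts")     # the missing invalidation step


before = read_post()
publish_buggy()
stale = read_post()   # still the old value
publish_fixed()
fresh = read_post()   # recomputed after invalidation
```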

Test 2 — Implement a Stripe Webhook Handler

The brief: "Add a Stripe webhook endpoint that handles invoice.paid and invoice.payment_failed, updates the user record, and sends a notification through our existing Resend integration."

Devin wrote the handler in 22 minutes, added unit tests, opened the PR. It made one mistake — it forgot to verify the Stripe signature in the first commit — but caught the mistake itself when Devin Review flagged the security gap, and pushed a fix. Total time end to merge-ready: 31 minutes.

Manus wrote the handler in 26 minutes, did not add tests, and made the same Stripe-signature mistake — but did not catch it. We caught it during human review. Manus was faster on raw write but missed the self-QA step.

Verdict on Test 2: Devin. The Devin Review catch was the difference.
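
The signature gap is worth spelling out, because an unverified webhook endpoint will accept forged events from anyone who knows the URL. The sketch below shows the check using only the Python standard library, following Stripe's documented scheme (an HMAC-SHA256 over `timestamp.payload`, sent in the `Stripe-Signature` header as `t=...,v1=...`). The function names, the example secret, and the 300-second tolerance are our assumptions for illustration. This is not the code either agent wrote, and in production you would normally rely on the official Stripe library's webhook helper instead.

```python
import hashlib
import hmac
import time
from typing import Optional


def sign_payload(payload: bytes, secret: str, timestamp: int) -> str:
    """Compute the v1 signature: HMAC-SHA256 over "<timestamp>.<payload>"."""
    signed = f"{timestamp}.".encode() + payload
    return hmac.new(secret.encode(), signed, hashlib.sha256).hexdigest()


def verify_stripe_signature(
    payload: bytes,
    header: str,
    secret: str,
    tolerance: int = 300,          # assumed replay window, in seconds
    now: Optional[int] = None,     # injectable clock, for testing
) -> bool:
    """Return True only if the header carries a fresh, matching v1 signature."""
    parts = [item.split("=", 1) for item in header.split(",") if "=" in item]
    timestamps = [value for key, value in parts if key.strip() == "t"]
    candidates = [value for key, value in parts if key.strip() == "v1"]
    if not timestamps or not candidates:
        return False
    try:
        timestamp = int(timestamps[0])
    except ValueError:
        return False
    current = int(time.time()) if now is None else now
    if abs(current - timestamp) > tolerance:
        return False  # too old or too far in the future: possible replay
    expected = sign_payload(payload, secret, timestamp)
    # compare_digest avoids leaking information through timing differences
    return any(hmac.compare_digest(expected, candidate) for candidate in candidates)


secret = "whsec_example"  # hypothetical endpoint secret
body = b'{"type": "invoice.paid"}'
sent_at = 1_700_000_000
header = f"t={sent_at},v1={sign_payload(body, secret, sent_at)}"

valid = verify_stripe_signature(body, header, secret, now=sent_at + 10)
tampered = verify_stripe_signature(
    b'{"type": "invoice.payment_failed"}', header, secret, now=sent_at + 10
)
expired = verify_stripe_signature(body, header, secret, now=sent_at + 3600)
```

The check must run against the raw request body. Parsing the JSON first and re-serializing it changes the bytes and breaks the signature.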

Test 3 — Refactor a Component Tree

The brief: "Refactor the dashboard sidebar into smaller composed components and add Storybook stories for each."

Devin did the work in 51 minutes, opened a clean PR with 12 file changes and Storybook stories. The refactor was sensible, the names were clear.

Manus did the work in 47 minutes, produced a similar refactor, but the Storybook stories were missing because Manus did not have Storybook pre-installed in its sandbox and the install step blew its time budget. We had to install Storybook ourselves and re-run.

Verdict on Test 3: Devin. The Devin sandbox is purpose-built for codebases; Manus is purpose-built for everything else.

Test 4 — Build a Data ETL Pipeline

The brief: "Pull the latest 30 days of GA4 traffic data, join it with our Supabase view_count column, produce a weekly aggregation, and write the result to a new analytics table."

Devin did the work in 88 minutes. It got stuck twice on the GA4 OAuth flow inside its sandbox and asked us for clarification on the secret-management approach.

Manus did the work in 42 minutes. It used CodeAct to spin up Python on the fly, imported the Google Analytics Data API library, pulled the data, ran the join in pandas, dumped it to Supabase through the REST endpoint. No human intervention. Then it built a chart and a Slack-ready summary on top.

Verdict on Test 4: Manus. Data ETL is exactly the kind of long-horizon, multi-tool, Python-heavy task that CodeAct was built for.
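
The core of that brief, the join and the weekly roll-up, is small once the data is in hand. Here is a standard-library sketch of that step under assumed shapes: the `ga4_rows` and `view_counts` samples are invented, the field names are hypothetical, and the real run used pandas plus the GA4 and Supabase APIs, which we leave out.

```python
from collections import defaultdict
from datetime import date

# Invented sample of daily GA4 traffic rows (the real pull covered 30 days).
ga4_rows = [
    {"date": date(2026, 4, 6), "page": "/pricing", "sessions": 120},
    {"date": date(2026, 4, 8), "page": "/pricing", "sessions": 80},
    {"date": date(2026, 4, 13), "page": "/pricing", "sessions": 50},
    {"date": date(2026, 4, 7), "page": "/blog", "sessions": 30},
]

# Invented stand-in for the view_count column, keyed by page.
view_counts = {"/pricing": 900, "/blog": 400}


def weekly_aggregate(rows, views):
    """Sum sessions per (ISO week, page) and attach each page's view_count."""
    totals = defaultdict(int)
    for row in rows:
        iso = row["date"].isocalendar()
        week = f"{iso[0]}-W{iso[1]:02d}"  # ISO year and ISO week number
        totals[(week, row["page"])] += row["sessions"]
    return [
        {
            "week": week,
            "page": page,
            "sessions": sessions,
            "view_count": views.get(page),  # None when the page has no match
        }
        for (week, page), sessions in sorted(totals.items())
    ]


weekly = weekly_aggregate(ga4_rows, view_counts)
```

Grouping by ISO week keeps the weeks aligned to Monday boundaries. Whether that matches your reporting calendar is a choice to confirm before writing to the analytics table.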

Head-to-Head Summary

Devin won three of the four tests (bug fix, feature, refactor). Manus won the data ETL test. The pattern is clear — Devin is built for code shipping, Manus is built for everything that is not code shipping. If your backlog is mostly engineering tickets, Devin wins by a lap. If your backlog is mostly analysis, automation, research, and one-off Python scripts, Manus wins by a lap.

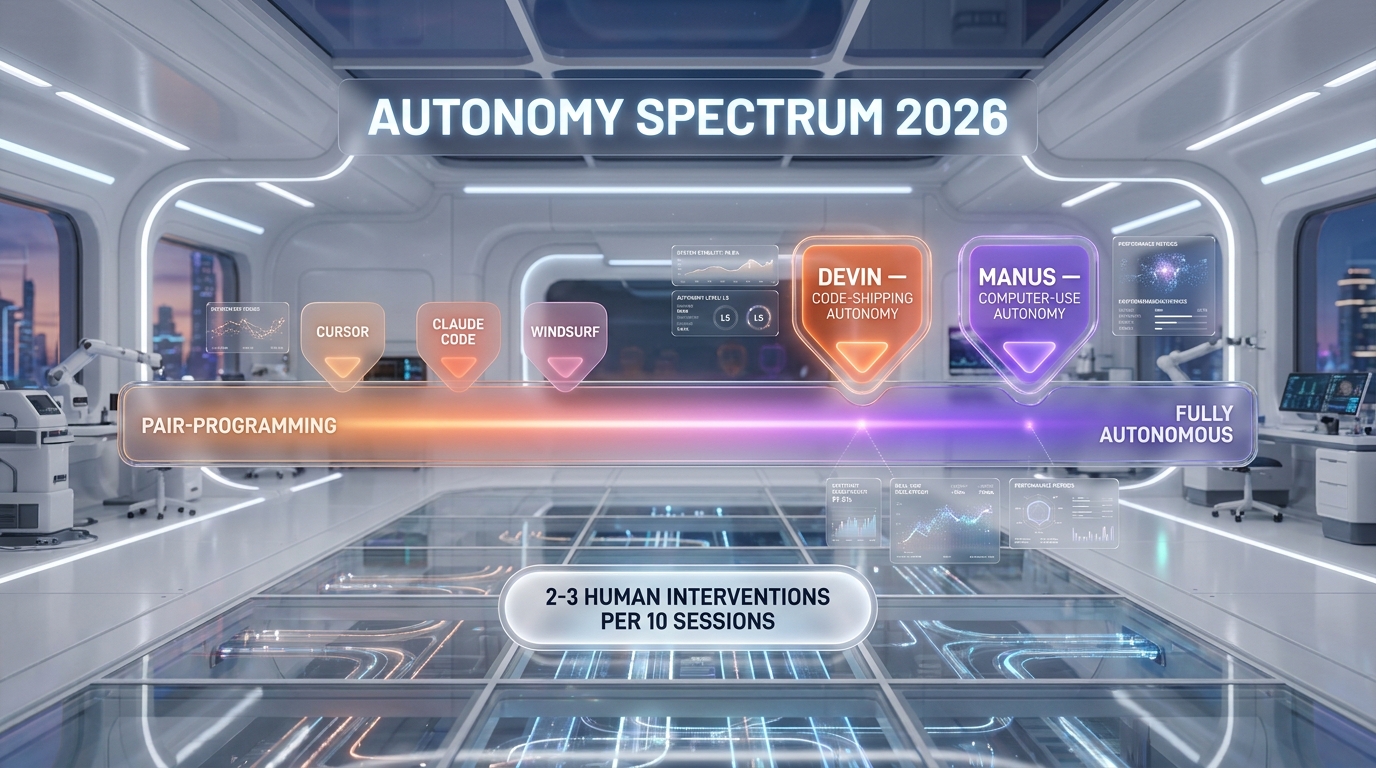

The Autonomy Spectrum — How Much Human Do You Need in the Loop

Both Devin and Manus sit at the autonomous end of the agent spectrum. That puts them in a different category than Claude Code, Cursor, or OpenAI Codex — those are AI-pair-programming tools where a human stays in the loop on every commit. Devin and Manus are designed for fire-and-forget tasks where a human prompts, walks away, and comes back to a result.

The difference between Devin and Manus on the autonomy spectrum is what they autonomously do. Devin autonomously ships code. Manus autonomously operates a computer. Both are deeply autonomous, but the autonomous behavior is targeted at different output artifacts. Devin produces pull requests. Manus produces deliverables — Excel files, slide decks, deployed websites, research reports, data exports.

Which One Needs Less Supervision

In our 21 days, Devin needed roughly two human interventions per ten tickets on average — usually for secrets, env vars, or ambiguous requirements. Manus needed roughly three human interventions per ten sessions, usually for credentials on third-party tools (because Manus pokes at way more external surfaces than a coding agent does). Both are dramatically more autonomous than tab-completion tools — but neither is fully hands-off yet, and the people selling "fully hands-off autonomous AI engineer" should be looked at with healthy skepticism.

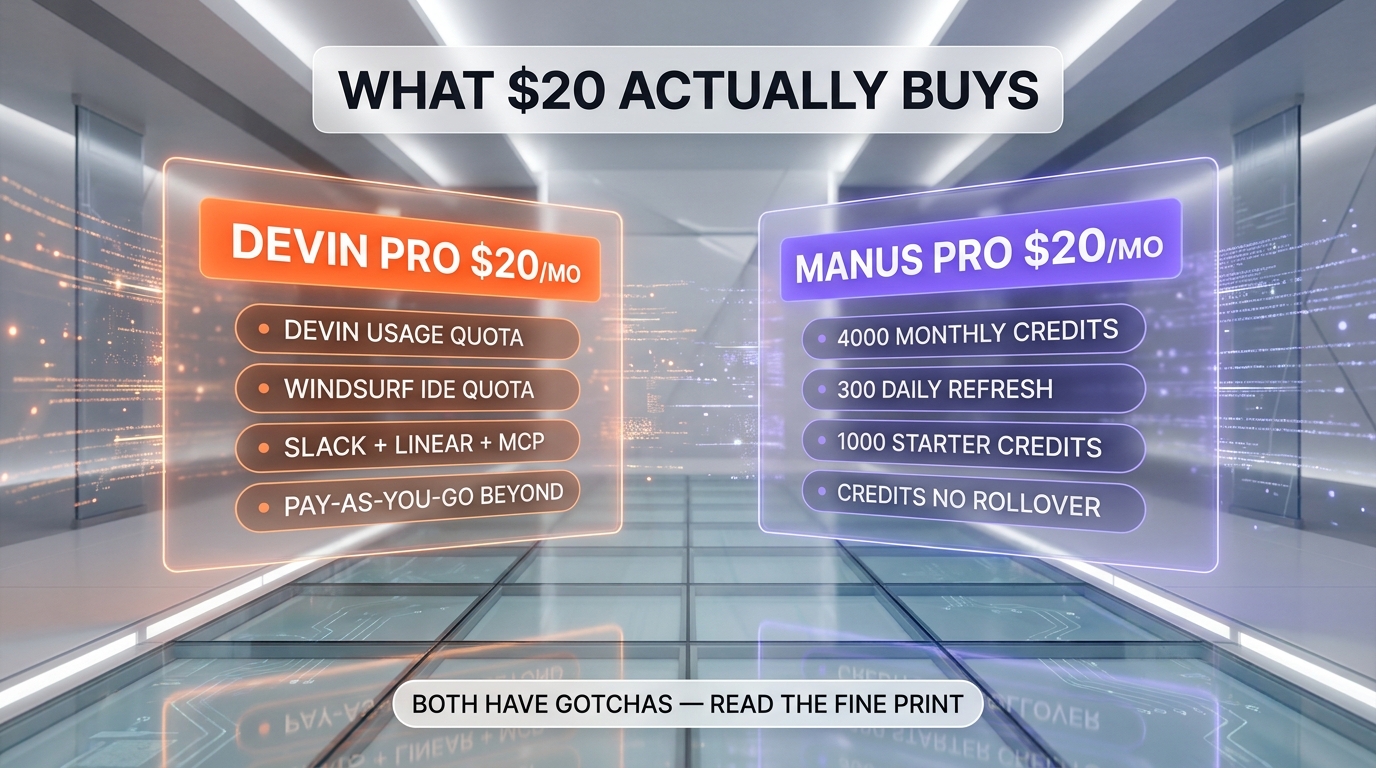

Pricing — Where the $20 Buys You Different Things

The headline price is identical: twenty dollars per month for Pro on both products. What the twenty dollars unlocks is wildly different.

Devin Pricing in Detail

- Free — limited Devin usage, Devin Review on PRs, DeepWiki access.

- Pro at $20 per month — Devin usage quota, Windsurf IDE quota, pay-as-you-go beyond the quota, Slack, Linear, MCP integrations.

- Max at $200 per month — increased Devin and Windsurf quotas, same integrations as Pro, no caps disclosed publicly.

- Teams at $80 per month — unlimited team members, centralized billing, admin dashboard, GitHub, GitLab, Bitbucket integrations.

- Enterprise — custom pricing, SAML or OIDC SSO, VPC deployment option, teamspace isolation, dedicated account team.

Manus Pricing in Detail

- Free — 300 daily refresh credits, 1,000 starter credits, one concurrent task, two scheduled tasks, Manus 1.6 Lite in Agent Mode.

- Pro at $20 per month — 4,000 monthly credits, plus the 300 daily refresh.

- Pro at $40 per month — 8,000 monthly credits, plus the daily refresh.

- Pro at $200 per month — 40,000 monthly credits, for deep-research and long-horizon workloads.

- Team at $20 per seat per month — two-seat minimum, 8,000 plus monthly credits per seat, shared credit pools, SSO, access controls, usage analytics.

Annual billing on Manus saves 17 percent across paid plans. Credits do not roll over month to month, and the 300 daily refresh credits reset every 24 hours.

Who Bills More Honestly

Devin's pricing page lists features but obscures the actual usage caps — you have to read the docs to find out how many compute hours your twenty dollars buys. Manus is transparent: 4,000 credits per month at the entry tier, 300 daily refresh on top. Both have hidden gotchas. On Devin, the pay-as-you-go meter can blow up your invoice if you forget to set a budget cap. On Manus, credits drain fast — a complex multi-hour task can burn 4,000 to 10,000 credits in a single session, which makes the twenty-dollar Pro plan feel tight for power users.
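
To see how tight the entry plan gets, the arithmetic can be sketched as follows. The inputs come from the figures above. The assumptions are ours: a 30-day month, the 300 daily refresh credits fully used every day, and the 17 percent annual saving applied to twelve times the monthly price.

```python
MONTHLY_CREDITS = 4_000    # Manus Pro at $20 per month
DAILY_REFRESH = 300        # resets every 24 hours, does not accumulate
DAYS_IN_MONTH = 30         # assumption
PRO_PRICE_USD = 20
ANNUAL_DISCOUNT = 0.17     # assumed to apply to 12x the monthly price

# Best case: every daily refresh is used in full.
max_monthly_credits = MONTHLY_CREDITS + DAILY_REFRESH * DAYS_IN_MONTH


def sessions_covered(credits: int, burn_per_session: int) -> int:
    """Whole sessions that fit into a credit budget at a given burn rate."""
    return credits // burn_per_session


# Complex sessions burn between 4,000 and 10,000 credits each.
light_complex_sessions = sessions_covered(max_monthly_credits, 4_000)
heavy_complex_sessions = sessions_covered(max_monthly_credits, 10_000)

# Without the daily refresh, one heavy session does not fit at all.
heavy_on_monthly_only = sessions_covered(MONTHLY_CREDITS, 10_000)

annual_price_usd = round(PRO_PRICE_USD * 12 * (1 - ANNUAL_DISCOUNT), 2)
```

Under these assumptions the best case is 13,000 credits a month, which covers three sessions at the low end of the burn range and one at the high end. In practice the daily refresh cannot be banked for a single long session, so the real headroom for heavy tasks is smaller than the monthly total suggests.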

Enterprise Readiness — Compliance, Geopolitics, Data Flows

This is the section where the Manus story gets complicated. The product is excellent. The geopolitical surface is not.

Devin Enterprise Posture

Cognition is a US company headquartered in San Francisco. Devin Enterprise ships with SAML or OIDC SSO, a VPC deployment option, teamspace isolation, and a dedicated account team. The public pricing page does not list exact compliance certifications, but our Enterprise contact confirmed that SOC 2 Type II is in progress and that ISO 27001 is being pursued. Code ownership: all inputs and outputs are explicitly your IP per the terms.

Manus Enterprise Posture

Butterfly Effect (the Manus parent) was founded in Shenzhen. Data flows have been traced to Chinese servers in some configurations, which raises China National Intelligence Law exposure for regulated sectors. As of April 2026, Manus had no public SOC 2 Type II, no ISO 27001, no HIPAA listings. After the late-2025 Meta acquisition (reported at two-to-three billion dollars), executive exit bans by Chinese authorities in March 2026 created additional uncertainty around governance and the future product roadmap.

If you are in healthcare, finance, defense, government, or any regulated industry — Devin is the safer bet today, full stop. If you are a solo operator or a small team without compliance constraints — Manus's product strength may outweigh the governance fog. We are not endorsing either path; we are telling you what we would do if we had to choose tomorrow.

When to Pick Devin — Best-Fit Scenarios

- You ship code for a living and your backlog is engineering tickets. Devin lives inside GitHub, Linear, Slack — the same surfaces your team already uses. The PR-shipper workflow is the killer feature.

- You want Self-QA on autonomous output. Devin Review caught real bugs in our tests that we missed. No other autonomous coding agent does this as well today.

- You are in a regulated industry. US-based, enterprise compliance underway (SOC 2 Type II in progress), clear IP ownership, no geopolitical wrinkles.

- You already use Windsurf IDE. Cognition owns Windsurf since July 2025 — the integration is tight, and the Pro tier bundles both.

- You want concurrent sessions. Teams tier ships unlimited concurrent sessions, useful when multiple agents fan out across a backlog.

When to Pick Manus — Best-Fit Scenarios

- Your work is research, analysis, automation — not shipping code. The Linux sandbox plus CodeAct paradigm makes Manus shine on tasks that mix research, scraping, charting, deck-building, and one-off Python.

- You need true long-horizon execution. The Planner + Executor + Knowledge sub-agent split lets Manus keep its head straight through eight-hour tasks.

- You are a solo operator or analyst who needs deliverables, not pull requests. Manus produces Excel, slides, deployed sites, exported data, research reports.

- You are price-sensitive and want a generous free tier. 300 daily refresh credits plus 1,000 starter credits beats almost every entry tier in the autonomous category.

- You don't have compliance constraints. Solo operators, consultants, small studios — the governance fog matters less when you are not handling regulated data.

Pros and Cons — Devin

Devin Pros

- Native PR-shipping workflow on GitHub, GitLab, Bitbucket

- Devin Review catches its own bugs before human reviewers

- Sandboxed dev environment cloned from your repo with secrets and tools intact

- Slack and Linear triggers — assign tickets like a teammate

- Cognition owns Windsurf since July 2025, IDE integration is tight

- US-based, with an enterprise compliance posture that is still maturing (SOC 2 Type II in progress)

- $25 billion valuation talks April 2026 — capitalization and runway are not a worry

Devin Cons

- Pay-as-you-go meter on Pro can blow up invoices without a budget cap

- Scoped to software engineering tasks — does not handle analysis, research, deck building

- Original March 2024 demo was overfitted; trust took 18 months to rebuild

- Team plan at $80 per seat per month is steeper than per-seat coding-assistant alternatives

- Public benchmarks are sparse — Cognition does not publish a post-Windsurf SWE-Bench number

Pros and Cons — Manus

Manus Pros

- GAIA SOTA — 86.5, 70.1, and 57.7 percent across levels 1, 2, 3 (April 2026)

- CodeAct paradigm — Python on the fly unlocks any pip library as an action

- Planner + Executor + Knowledge sub-agents survive long-horizon tasks

- Generous free plan — 300 daily refresh credits plus 1,000 starter

- Desktop app launched March 16, 2026 — partial local execution reduces cloud dependence

- Two million weekly active users by April 2026 — proof of consumer demand

- Multi-domain output: Excel, slides, deployed sites, charts, research reports

Manus Cons

- Owned by Meta since late 2025 — roadmap uncertainty after reported $2-to-3 billion acquisition

- Chinese-origin tooling with Shenzhen data flows — China National Intelligence Law exposure

- Executive exit bans by Chinese authorities in March 2026 add governance uncertainty

- No SOC 2 Type II, no ISO 27001, no HIPAA listings as of April 2026

- Credits drain fast — complex tasks burn 4,000 to 10,000 credits per session

- Community reports of billing black holes and stuck sessions persist

- Not a native code-shipper — does not own the PR workflow the way Devin does

They Are Not Fighting the Same Fight

Most "Devin vs X" comparisons online treat Devin like a coding assistant and benchmark it against Cursor, Windsurf, OpenAI Codex, or Claude Code. That is a different fight. Cursor and Windsurf are pair-programming tools — you stay at the keyboard. Devin is an autonomous agent — you walk away from the keyboard. The right point of comparison for Devin is Manus, not Cursor.

And the right point of comparison for Manus is Devin (in the coding lane) plus OpenAI Operator and ChatGPT's agent mode (in the everything-else lane) — not Cursor or Windsurf either. The autonomous agent category is real, it is growing fast, and it is not the same as the AI-coding-assistant category. For coding-assistant comparisons see Claude Code vs OpenAI Codex 2026 or Cursor and Windsurf. For the autonomous agent category, this comparison and a few siblings cover the lane.

Final Verdict — The Winner Depends on the Output Artifact

If we had to pick one overall winner for the autonomous AI agent category in May 2026, the answer is a careful tie — but only because the products are in different sub-categories. Devin wins the autonomous coding agent lane. It is the best PR-shipper on the market today, full stop. The Devin Review feature is genuinely differentiating, the Slack and Linear triggers are clean, the enterprise compliance posture is the safest in the category, and the $25 billion valuation talks confirm the market agrees.

Manus wins the autonomous generalist lane. The Linux sandbox plus CodeAct paradigm is a different beast — it can do what Devin cannot (research, deck building, data ETL, web automation, multi-domain deliverables), and the GAIA benchmark scores back the claim. But the geopolitical surface and the post-Meta governance fog mean we would not deploy Manus into a regulated workflow today.

If your backlog is mostly engineering tickets, pick Devin. If your backlog is mostly everything-but-code, pick Manus. If your backlog is a mix, run both. We ran them in parallel for 21 days and it was painless: they share zero workflow overlap, which means zero conflict. The cost is forty dollars per month for both Pro plans, which is less than one engineering coffee shop receipt.

We tested both. Last compared: May 13, 2026. Read our full Devin review or read our full Manus review for deeper individual coverage.

Frequently Asked Questions

Is Devin or Manus more autonomous?

Both are deeply autonomous — both produce work end-to-end without a human in the loop after the initial prompt. The difference is what they autonomously produce. Devin autonomously produces pull requests on GitHub, GitLab, or Bitbucket. Manus autonomously produces deliverables — Excel files, slide decks, deployed websites, research reports. Both need roughly two-to-three human interventions per ten sessions for things like secrets, credentials, or ambiguous requirements.

Which one is cheaper?

The headline Pro price is identical — twenty dollars per month on both. But the value is different. Devin Pro buys you Devin usage plus Windsurf IDE quota plus Slack, Linear, MCP integrations. Manus Pro at twenty dollars buys you 4,000 monthly credits plus 300 daily refresh credits. Annual billing on Manus saves seventeen percent. Devin can blow up your invoice through pay-as-you-go beyond quota; Manus credits drain fast on complex tasks.

Can Devin do non-coding tasks like research or deck building?

No, Devin is scoped to software engineering tasks — code, tests, pull requests, debugging, deployment. It does not handle research reports, slide decks, Excel modeling, or web automation. For those, you want Manus, OpenAI Operator, or ChatGPT agent mode.

Can Manus ship pull requests like Devin?

Manus can write code and produce a patch through the CodeAct paradigm, but it does not own the end-to-end PR workflow the way Devin does. In our testing, Manus produced working diffs but stopped short of opening a pull request with a clean commit history and test run. If shipping PRs is the goal, Devin is the right tool.

Is Manus safe for regulated industries?

As of April 2026, Manus has no published SOC 2 Type II, no ISO 27001, no HIPAA listings. The product's Chinese origin and Shenzhen-traced data flows raise China National Intelligence Law exposure for regulated sectors. The late-2025 Meta acquisition added governance uncertainty including executive exit bans by Chinese authorities in March 2026. For regulated industries, Devin is the safer choice.

What is the Devin Review feature?

Devin Review is a feature that lets Devin code-review your own pull requests before a human looks at them. It reads the diff against the spec, flags regressions, security gaps, and missing tests, and posts comments inline on the PR. In our 21-day test it caught a pagination regression and a websocket memory leak that our own eyes had missed. It is available even on the Free tier.

What is the CodeAct paradigm in Manus?

CodeAct is the action paradigm Manus uses instead of structured JSON function calling. When the agent needs to act, it generates Python code on the fly and executes it in its sandbox. That unlocks any pip library as a potential action — Playwright for scraping, matplotlib for charts, pandas for data, smtplib for email. It is more flexible than JSON-schema function calling and is one of the reasons Manus handles multi-domain tasks so well.

How big is Cognition Labs in 2026?

Cognition reached $73 million annualized recurring revenue by June 2025, up from one million in September 2024. In July 2025 it acquired Windsurf, the AI-native IDE. In April 2026 the company entered talks to raise hundreds of millions of dollars at a $25 billion valuation — more than double the $10.2 billion mark from a few months earlier. Customers include Dell, Cisco, and many other technology incumbents.

How many users does Manus have?

Manus crossed two million weekly active users by April 2026, growing rapidly since its March 2025 launch. It claims GAIA benchmark state-of-the-art across all three levels — 86.5 percent on level 1, 70.1 percent on level 2, and 57.7 percent on level 3 — beating OpenAI Deep Research at 74.3, 69.1, and 47.6 percent respectively.

Should I run both Devin and Manus?

If your backlog is mixed — engineering tickets plus research, analysis, automation — running both is painless. They share zero workflow overlap, so there is zero conflict. The combined cost is forty dollars per month for both Pro plans, less than one engineering coffee shop receipt. In our 21-day test we ran both side-by-side without friction.

Does Devin work with Claude Code or Cursor?

Devin is positioned as a different category from Claude Code or Cursor. Those are AI pair-programming tools where a human stays at the keyboard; Devin is an autonomous agent where the human walks away. You can absolutely use them together: Devin for fire-and-forget tickets, Claude Code or Cursor for the keyboard work. Many teams in our network do exactly that, including some who use Devin for backlog grooming and Claude Code for active sprint work.

Will Meta change Manus after the acquisition?

Meta acquired Manus for a reported two-to-three billion dollars at the end of 2025. As of April 2026, the public roadmap has not shifted dramatically, but the executive exit bans by Chinese authorities in March 2026 created governance uncertainty. We expect Meta to use Manus as a generalist agent layer inside its broader AI strategy. The risk for buyers is roadmap drift — features Manus users love may get reprioritized for Meta's enterprise or consumer plays.

Our Verdict

Split verdict — Devin wins the autonomous coding agent lane (3 of 4 tests, Self-QA via Devin Review, US enterprise compliance), Manus wins the autonomous generalist lane (Data ETL test, GAIA SOTA 86.5 percent, Linux sandbox + CodeAct). Run both at $40 per month combined if your backlog is mixed.
