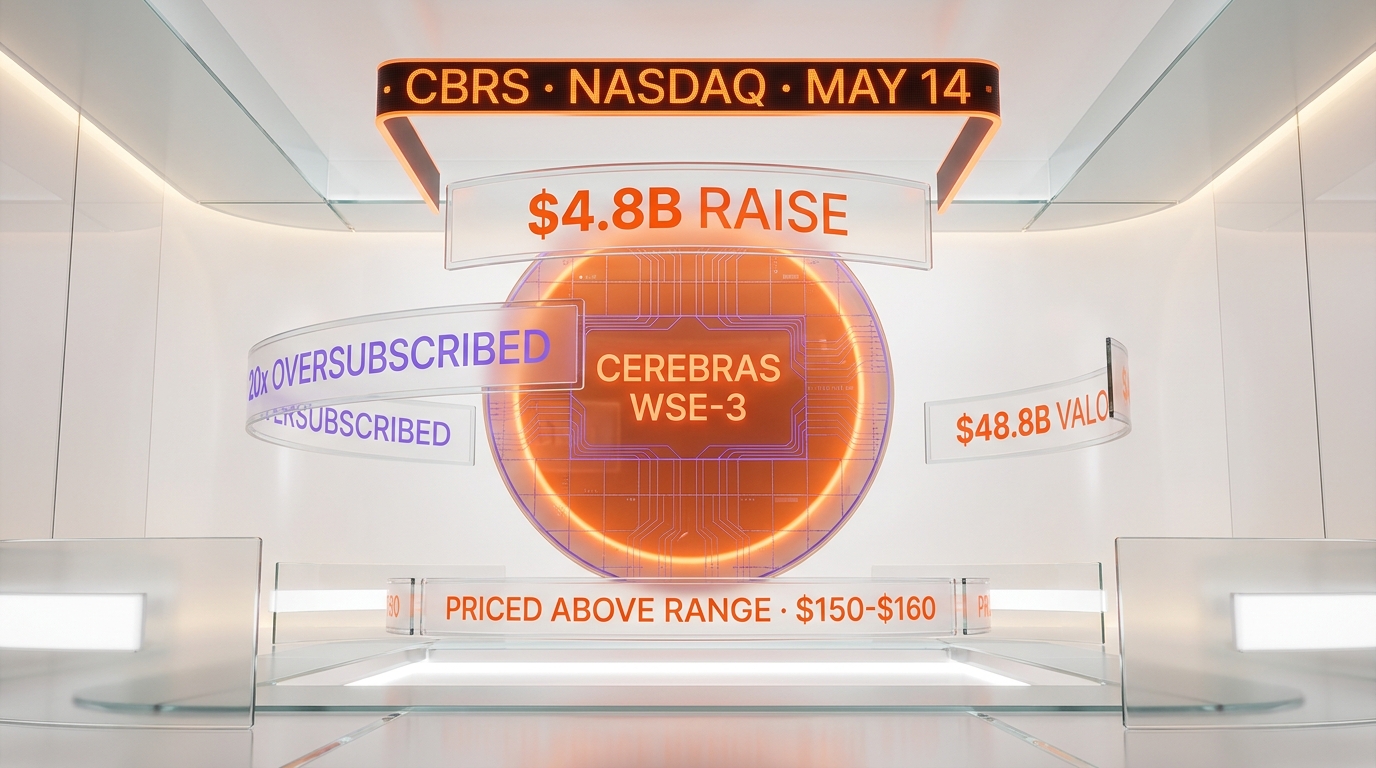

Cerebras Systems priced its IPO on May 12 2026 after lifting its range to $150 to $160 per share, well above the original $115 to $125 band. The company is on track to raise up to $4.8 billion at a fully diluted valuation of roughly $48.8 billion ahead of its May 14 Nasdaq debut under the ticker CBRS. The deal was reported more than 20 times oversubscribed, according to CNBC reporting on May 11 and Bloomberg confirmation on May 12. That makes it the biggest AI IPO of 2026 to date, and the strongest order book a pure-play chip IPO has printed this decade.

We have been tracking Cerebras since the company filed its S-1 in early 2026, and the jump from the initial $115 to $125 range to the revised $150 to $160 band is more than a marketing move. It is a structural read on AI chip scarcity. When an order book runs 20x oversubscribed heading into pricing, the bankers are not raising the range just to maximize proceeds; they are raising it because the allocation pain is real. Morgan Stanley, Citigroup, Barclays, and UBS led the book, with Mizuho and TD Cowen as additional bookrunners. The market's message to them: even at $48.8 billion, demand exceeds supply by a factor not seen for a chip IPO this decade.

The numbers that priced

Cerebras offered 28 million Class A shares with a 4.2 million share underwriter option, listed on the Nasdaq Global Select Market under ticker CBRS. The initial range was $115 to $125 per share. The revised range, confirmed by Bloomberg and CNBC the week of May 11, was $150 to $160 per share. At the top of the new band, Cerebras raises approximately $4.8 billion in gross proceeds. Fully diluted, the valuation lands near $48.8 billion. The float-only post-money calculation, which a few sources reference, sits closer to $26.6 billion because it excludes locked-up insider shares, employee equity, and the founder block held by CEO Andrew Feldman and the original team.
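The headline proceeds figure can be sanity-checked against the share counts above. The sketch below is plain arithmetic on this article's numbers; it assumes gross proceeds equal shares sold times offer price (before underwriting fees), and it assumes the $48.8 billion valuation was struck at the $160 top of the range, which is our assumption rather than a disclosed figure.

```python
# Sanity check of the CBRS offering arithmetic using figures quoted in this article.
BASE_SHARES = 28_000_000    # Class A shares offered
OPTION_SHARES = 4_200_000   # underwriter option, if fully exercised
PRICE_LOW, PRICE_HIGH = 150.0, 160.0  # revised range, USD per share
FULLY_DILUTED_VALUATION = 48.8e9      # USD, as reported


def gross_proceeds(shares: int, price: float) -> float:
    """Shares sold times offer price, before underwriting fees and expenses."""
    return shares * price


for label, shares in [("base deal", BASE_SHARES),
                      ("with full option", BASE_SHARES + OPTION_SHARES)]:
    low = gross_proceeds(shares, PRICE_LOW) / 1e9
    high = gross_proceeds(shares, PRICE_HIGH) / 1e9
    print(f"{label}: ${low:.2f}B to ${high:.2f}B")

# Assumption: the valuation is computed at the top of the range.
implied_fd_shares = FULLY_DILUTED_VALUATION / PRICE_HIGH
print(f"implied fully diluted share count: {implied_fd_shares / 1e6:.0f}M")
```

On these inputs the base deal grosses $4.20 to $4.48 billion and the full-option deal $4.83 to $5.15 billion, so a $4.8 billion headline sits between the two and presumably assumes at least partial exercise of the option.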

For context, Cerebras posted $510 million in revenue in 2025, split roughly $358 million hardware and $152 million cloud and services. The company swung to a 47% net margin in calendar year 2025 even while booking $145.9 million in GAAP operating loss, a reconciliation that hinges on the OpenAI compute commitment hitting revenue recognition gates. The OpenAI deal itself is structured as a multi-year agreement valued at more than $20 billion, covering 750 megawatts of low-latency compute capacity through 2028. Cerebras disclosed the OpenAI counterparty in its May S-1/A amendment, and that single relationship is the load-bearing wall under the entire valuation thesis.
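A 47% net margin next to an operating loss implies a large gap between the two lines of the income statement. Because net income equals operating income plus non-operating items minus taxes, that gap has to come from below the operating line. The sketch sizes it from the reported figures only; it does not identify which items fill it.

```python
# Size of the gap between the reported operating loss and the implied net income.
HARDWARE_REVENUE = 358e6
CLOUD_REVENUE = 152e6
REVENUE = HARDWARE_REVENUE + CLOUD_REVENUE  # $510M total, per the article
NET_MARGIN = 0.47
OPERATING_INCOME = -145.9e6  # GAAP operating loss, shown as a negative number

net_income = REVENUE * NET_MARGIN
# Everything between operating income and net income: interest, other income
# or expense, fair-value changes, and taxes, all netted together.
below_the_line = net_income - OPERATING_INCOME

print(f"total revenue:           ${REVENUE / 1e6:.0f}M")
print(f"implied net income:      ${net_income / 1e6:.1f}M")
print(f"net non-operating swing: ${below_the_line / 1e6:.1f}M")
```

Roughly $240 million of implied net income against a $146 million operating loss means about $386 million of net items below the operating line, which is the number the margin walk ultimately has to explain.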

Why 20x oversubscribed matters

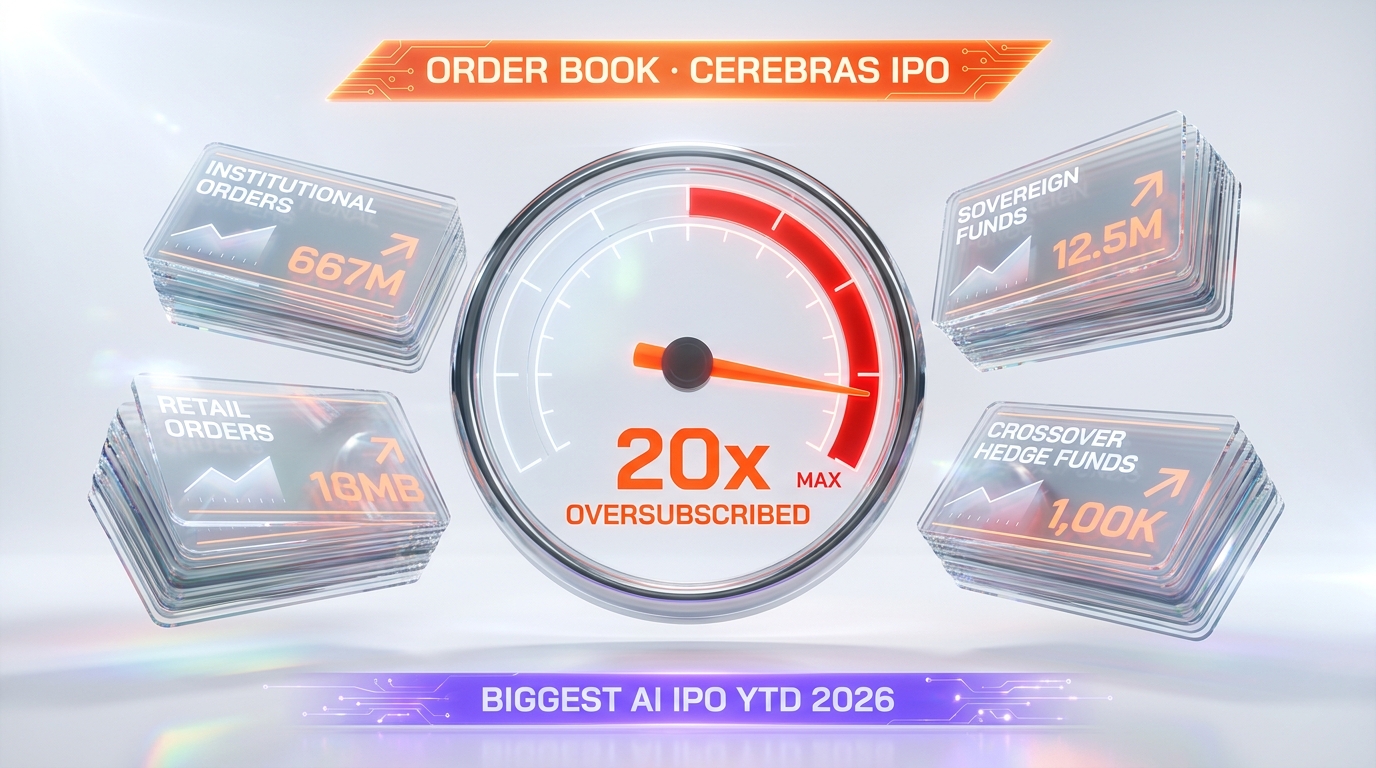

An IPO is "oversubscribed" when total investor orders exceed the shares offered. Two to three times oversubscribed is a healthy deal. Five times is hot. Twenty times is dot-com territory. The Cerebras book ran past 20x with a mix of institutional anchors, sovereign wealth funds, and crossover hedge funds that typically build early positions in public chip names. Allocations under those conditions are brutal: institutional orders routinely get cut by 90% or more, retail allocations often get zeroed entirely, and the secondary market opens with a structural overhang of unfilled demand.
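A naive baseline shows how deep the cuts run: if every order were filled pro rata, each investor in an N-times oversubscribed book would receive one share for every N requested. Real bookbuilding is discretionary, with anchors favored and retail often zeroed, so this sketch is only a reference point, not how bookrunners actually allocate.

```python
def pro_rata_fill(oversubscription: float) -> float:
    """Fraction of each order filled if demand were spread evenly (a simplifying assumption)."""
    if oversubscription <= 1:
        return 1.0  # book is covered or undersubscribed: every order fills in full
    return 1.0 / oversubscription


for times in (2, 3, 5, 20):
    fill = pro_rata_fill(times)
    print(f"{times:>2}x book -> {fill:.0%} filled, {1 - fill:.0%} cut")
```

At 20x the pro-rata baseline is a 5% fill, a 95% cut, which lines up with the 90%-plus institutional cuts described above.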

The mechanical consequence is that opening-day price discovery on May 14 will be violent in either direction. If Cerebras opens at $200 or more, buyers shut out of the book, retail included, have chased the stock and the allocation discount is gone in the first print. If it opens flat at $150 or below, the institutional anchors immediately re-rate the deal, because their cost basis sits at the top of the range. The most likely outcome, based on recent oversubscribed chip deals, is a 20% to 40% first-day pop followed by about 60 days of allocation digestion before the stock finds its anchor zone.

The AI chip scarcity thesis validates here

For two years, the consensus thesis on AI infrastructure has been: GPU scarcity is real, lead times are 12 to 18 months, and any company shipping inference at scale has pricing power. The Cerebras book backs that thesis with capital, not commentary. Public market investors are not buying a story. They are buying allocation in the only pure-play wafer-scale architecture that has a working customer base, a $20 billion-plus OpenAI contract, and an AWS Bedrock deployment.

That last detail is underappreciated. Cerebras announced in early 2026 that its CS-3 systems would be available through Cerebras Inference, and the AWS Bedrock integration puts Cerebras in front of the AWS enterprise customer base as a third-party inference backend built on neither NVIDIA nor AMD silicon. AWS is selective about which outside silicon it exposes through Bedrock, so the placement is itself a signal that the AI chip duopoly is widening into a triopoly, with Cerebras as the third leg.

What the WSE-3 actually does (and why investors care)

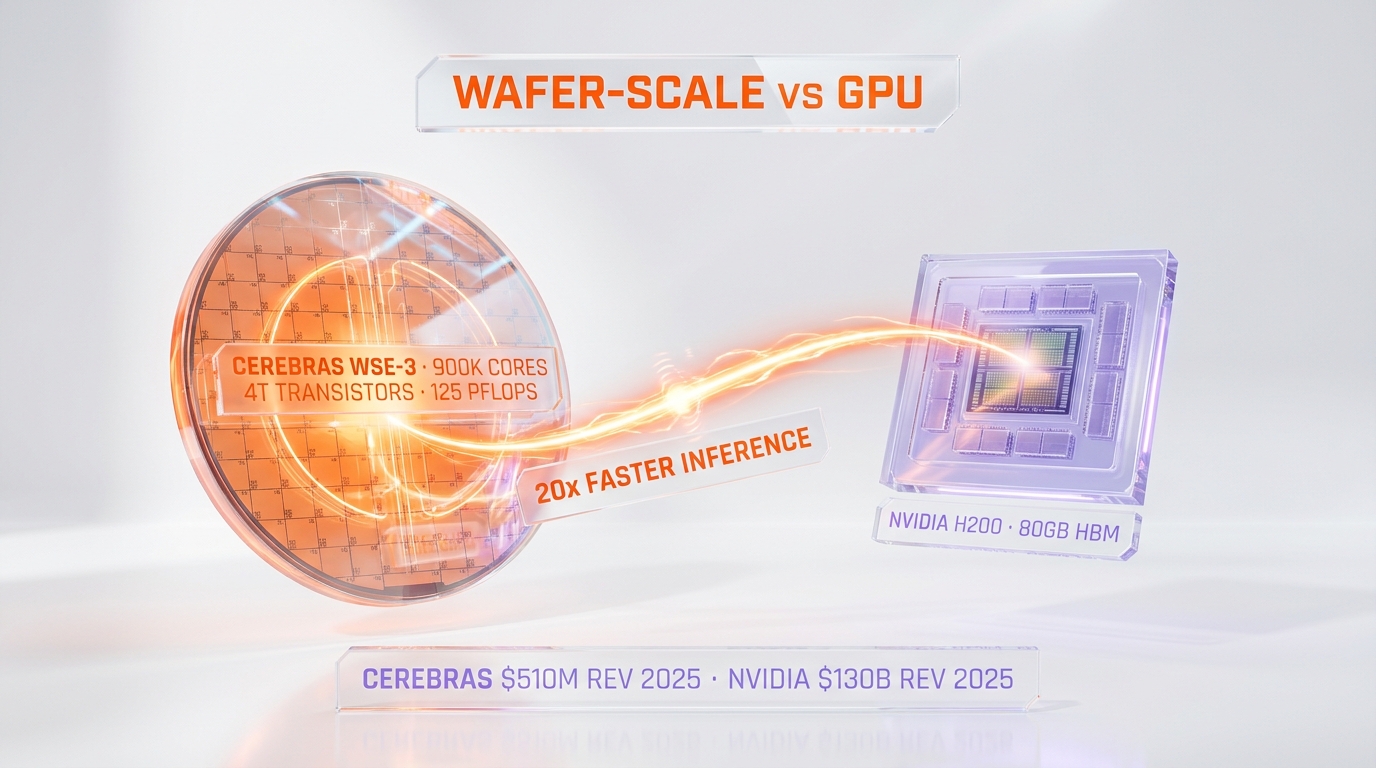

Cerebras's core asset is the Wafer Scale Engine 3 (WSE-3), a single chip etched from an entire silicon wafer rather than the multiple smaller dies that NVIDIA, AMD, and Intel cut from each wafer. The WSE-3 packs 4 trillion transistors, 900,000 AI-optimized cores, 44 gigabytes of on-chip SRAM, and 125 petaflops of peak AI performance per chip. By comparison, an NVIDIA H200 GPU has roughly 80 billion transistors, so a single WSE-3 carries about 50 times the transistor count of an H200.
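The 44 GB SRAM figure also bounds how much of a model a single wafer can hold. The sketch counts the minimum number of WSE-3 chips whose combined SRAM could store a model's raw weights at a given precision. It is a lower bound under stated assumptions: it ignores KV cache, activations, and whatever weight-placement scheme Cerebras actually uses in production, and the parameter counts are the publicly stated model sizes (70 billion for Llama 3.1 70B, 671 billion total for DeepSeek-V3).

```python
import math

WSE3_SRAM_GB = 44          # on-chip SRAM per WSE-3
WSE3_TRANSISTORS = 4e12
H200_TRANSISTORS = 80e9    # approximate


def min_wafers_for_weights(params_billions: float, bytes_per_param: float) -> int:
    """Lower bound on WSE-3 count needed to hold raw weights in on-chip SRAM."""
    weights_gb = params_billions * bytes_per_param  # 1e9 params x bytes = decimal GB
    return math.ceil(weights_gb / WSE3_SRAM_GB)


print(f"transistor ratio vs H200: {WSE3_TRANSISTORS / H200_TRANSISTORS:.0f}x")
print("Llama 3.1 70B, 16-bit:", min_wafers_for_weights(70, 2))
print("Llama 3.1 70B, 8-bit: ", min_wafers_for_weights(70, 1))
print("DeepSeek-V3, 8-bit:   ", min_wafers_for_weights(671, 1))
```

By this bound, a 70B model at 16-bit needs at least four wafers and DeepSeek-V3 at 8-bit at least sixteen, so large models still span several chips; wafer-scale hardware shrinks the inter-chip communication problem rather than eliminating it.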

That transistor budget matters for inference workloads in particular. Inference latency is gated by memory bandwidth and inter-chip communication, two bottlenecks the wafer-scale architecture sidesteps by keeping model weights in on-chip SRAM and packing far more compute onto each piece of silicon, so far fewer chips have to talk to each other. Cerebras claims 20x faster inference than GPU-based providers on equivalent models, and published benchmarks on Llama 3.1 70B and DeepSeek-V3 have reported per-user output speeds in the 3,000 to 4,500 tokens per second range. NVIDIA's best inference clusters typically land at 150 to 300 tokens per second per user on comparable models without aggressive quantization.
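Pairing the two quoted throughput ranges shows where the 20x headline comes from and how wide the band around it is. The 1,000-token response length below is a hypothetical chosen for illustration; the tokens-per-second figures are the ones quoted above.

```python
CEREBRAS_TPS = (3_000, 4_500)  # tokens per second, quoted range
GPU_TPS = (150, 300)           # tokens per second, quoted range
RESPONSE_TOKENS = 1_000        # hypothetical response length

# Most conservative pairing: slowest Cerebras figure vs fastest GPU figure.
low_ratio = CEREBRAS_TPS[0] / GPU_TPS[1]
# Most generous pairing: fastest Cerebras figure vs slowest GPU figure.
high_ratio = CEREBRAS_TPS[1] / GPU_TPS[0]
print(f"speedup range: {low_ratio:.0f}x to {high_ratio:.0f}x")

for name, tps in [("Cerebras, low end", CEREBRAS_TPS[0]),
                  ("GPU, high end", GPU_TPS[1]),
                  ("GPU, low end", GPU_TPS[0])]:
    print(f"{name}: {RESPONSE_TOKENS / tps:.2f} s for {RESPONSE_TOKENS} tokens")
```

The band runs from 10x to 30x. At the midpoints (3,750 versus 225 tokens per second) the ratio is about 17x, so the 20x claim sits inside the quoted band rather than at its conservative edge.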

The customer concentration risk

The S-1/A is candid about the risk. Three customers (G42, MBZUAI, and OpenAI) accounted for the majority of 2025 revenue. The OpenAI relationship sits at the center of both the bull case and the bear case. Bull case: a $20 billion multi-year contract anchors revenue visibility through 2028. Bear case: if OpenAI's compute strategy pivots toward in-house silicon, custom ASIC partnerships, or any of the alternatives Sam Altman has publicly hinted at, Cerebras loses its largest customer and the revenue model resets overnight.

The G42 and MBZUAI relationships carry their own structural questions. Both are Abu Dhabi-anchored entities, and shipments to them depend on US export control regimes that tightened materially in 2026. A tightening of licensing terms, which the Commerce Department has signaled it could pursue, would cut off Cerebras's largest deployed customer base outside the US. The S-1/A flags export control dependency as a primary risk factor, and that flag is doing real work in the prospectus.

How this compares to 2025 and 2026 AI IPOs

2025 was thin for AI infrastructure IPOs. CoreWeave priced in March 2025 at $40 per share, valued at roughly $23 billion fully diluted, and opened weak before recovering as AI cloud demand re-rated the stock. A year earlier, Astera Labs priced at $36 in March 2024 and traded up immediately on AI fabric demand. There was no pure-play AI chip IPO in 2025; the closest thing was chatter about an Intel foundry spinout, which never materialized. At $48.8 billion, Cerebras is roughly double CoreWeave's IPO valuation and the most significant chip-architecture IPO since Arm's Nasdaq debut in September 2023.

The 2026 IPO pipeline behind Cerebras is loaded. Anthropic has signaled a potential October 2026 listing at a $350 billion pre-money valuation, which our team analyzed in the Anthropic-SpaceX Colossus 1 compute deal coverage. Databricks has been rumored to file in H2 2026. xAI is in flux after the SpaceX merger. Cerebras is the first to actually price, and its book reception will recalibrate underwriter assumptions on every AI-adjacent IPO scheduled through 2027.

The NVIDIA comparison investors keep making

The Benzinga piece on May 11 framed the Cerebras frenzy as Wall Street "desperate for the next NVIDIA." The framing is unfair but rational. NVIDIA's market cap first crossed $4 trillion in July 2025, and institutional muscle memory now treats any wafer-scale or inference-specialized chip company as a potential second-leg compounder. At $48.8 billion, Cerebras is about 1.2% of a $4 trillion NVIDIA with perhaps 0.4% of its revenue base, but the bet is on the trajectory, not the current run-rate.
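Both ratios can be reproduced directly. The NVIDIA revenue input is our assumption, since the article does not say which figure it used: the sketch plugs in NVIDIA's reported fiscal 2025 revenue of about $130.5 billion, which yields roughly 0.4%. Swap in a different trailing figure to see how sensitive the comparison is.

```python
CBRS_VALUATION = 48.8e9      # fully diluted, USD
CBRS_REVENUE_2025 = 510e6    # USD
NVDA_MARKET_CAP = 4.0e12     # round $4 trillion used in the text
NVDA_REVENUE = 130.5e9       # assumption: NVIDIA fiscal 2025 revenue, USD


def share_of(part: float, whole: float) -> float:
    """Fraction of `whole` represented by `part`."""
    return part / whole


print(f"share of NVIDIA market cap: {share_of(CBRS_VALUATION, NVDA_MARKET_CAP):.1%}")
print(f"share of NVIDIA revenue:    {share_of(CBRS_REVENUE_2025, NVDA_REVENUE):.1%}")
```

Dividing the first ratio by the second gives Cerebras's price-to-sales multiple relative to NVIDIA's: on these inputs it is roughly three times higher, which is the trajectory bet expressed as a number.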

For context on NVIDIA's own balance sheet pressure, our NVIDIA $40 billion equity bets analysis walks through how Jensen Huang has quietly become the largest AI venture investor on Earth — a strategy that paradoxically helps competitors like Cerebras by validating the entire AI chip thesis with NVIDIA's own capital deployment.

Who actually got allocation

The bookrunner syndicate confirmed broad institutional participation. The notable anchors disclosed in the prospectus or reported in the financial press include Tiger Global, Coatue, Altimeter Capital, and a sovereign wealth allocation that includes Saudi Arabia's PIF and Abu Dhabi's Mubadala. Retail allocation through Robinhood IPO Access and Charles Schwab's IPO platform was limited to small tickets: most retail orders were filled at less than 5% of the requested allocation, consistent with a 20x oversubscribed book.

Crossover hedge funds typically receive 30 to 50% of their order in deals of this size. In Cerebras's case, multiple sources indicate crossover allocations were cut closer to 10%, meaning those funds start public trading holding a fifth to a third of what a typical fill would have delivered. That dynamic underpins the consensus view that CBRS opens with a sharp first-day pop driven by buying that did not get filled at the IPO price.

The Q1 2026 venture funding context

Cerebras's IPO does not happen in a vacuum. Q1 2026 was the biggest quarter in venture capital history at $300 billion deployed across 6,000 startups, with 80% concentrated in frontier AI compute, model labs, and inference infrastructure. We covered the concentration dynamic in the Q1 2026 VC record analysis, and the public market reception for Cerebras is the natural sequel to that private market saturation. Once private capital reaches the ceiling on any single asset, the public market is the only remaining venue with the depth to absorb the next round of capital deployment.

The Cerebras book is the first real public market test of whether public investors will underwrite the same compute scarcity thesis private markets have been pricing for 24 months. At $150 to $160 per share and 20x oversubscribed, the answer is an emphatic yes.

What would prove the bull thesis wrong

Three scenarios would invalidate the current pricing. First: OpenAI announces an alternative compute partnership at scale (Google TPU, Anthropic-style custom silicon, or a Microsoft Azure exclusive) that materially reduces the $20 billion Cerebras commitment. On our read, the Cerebras revenue model resets if any single customer shifts more than 30% of its allocation, and OpenAI represents that risk single-handedly.

Second: a Commerce Department export control update that blocks G42 or MBZUAI from continuing CS-3 deployments. Those customers represent meaningful but undisclosed revenue percentages, and any disruption would force Cerebras to redirect inventory to lower-margin US enterprise customers at a time when capacity is already constrained.

Third: NVIDIA's Blackwell Ultra or Vera Rubin systems post inference throughput numbers that close the 20x gap on equivalent models. NVIDIA put inference at the center of its roadmap in the March 2026 GTC keynote, and any meaningful gap closure erodes the architectural moat Cerebras is selling.

The questions the bankers actually fielded

Bookrunner Q&A sessions during the Cerebras roadshow surfaced a tight list of investor concerns. Customer concentration was the top item — every institutional anchor asked about OpenAI dependency. Margin durability was the second — the 47% net margin in 2025 is unusual for a hardware company, and the bankers had to walk through the cloud-services accounting that produces that figure. The third concern was AWS Bedrock economics: investors wanted to know what gross margin Cerebras keeps on a CS-3 deployment when AWS holds the customer relationship.

The answers, per multiple roadshow attendees we spoke with informally, were credible but not bulletproof. The OpenAI deal locks revenue through 2028, the margin walk hinges on cloud subscription mix accelerating in 2026 and 2027, and the AWS economics depend on negotiated pricing tiers that have not been disclosed. Investors priced through the uncertainty because passing on the only pure-play wafer-scale AI chip IPO was deemed more costly than the underwriting risk on any of those three questions.

What happens on May 14 and the 60 days after

Cerebras priced the night of May 12 New York time, and trading opens on Nasdaq under ticker CBRS at the opening cross on May 14. First-day volume will be driven by allocation cuts: institutional orders that got a 10% allocation will likely build back toward target through secondary-market purchases, and that buying pressure is the mechanical source of any first-day pop. We expect $190 to $220 per share at the close on May 14, roughly a 19% to 38% first-day return on the $160 ceiling allocation price.
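The first-day return math is simple, but it depends on which offer price you anchor to. The sketch checks the $190 to $220 target against both ends of the $150 to $160 range; the $150 case is included only for sensitivity, since the text anchors to $160.

```python
def first_day_return(close: float, offer: float) -> float:
    """Simple return from the IPO offer price to the first-day close."""
    return close / offer - 1


TARGET_CLOSES = (190.0, 220.0)  # expected first-day close range, USD

for offer in (160.0, 150.0):
    returns = [first_day_return(close, offer) for close in TARGET_CLOSES]
    print(f"offer ${offer:.0f}: {returns[0]:.1%} to {returns[1]:.1%}")
```

Against $160 the target implies roughly 19% to 38%; against $150 it would be about 27% to 47%, which is why the allocation price matters as much as the closing print.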

The 60 days after the IPO are the allocation digestion phase; the lockup is a separate, later event. Insider lockups typically expire 180 days post-IPO, which puts the next major supply event in November 2026. Between May and November, the stock trades on the quarterly earnings cadence. Q1 2026 results will already be in the rear-view by the time CBRS reports its first public quarter, and the August earnings call will be the first true test of whether the AI chip scarcity thesis holds up under public-market scrutiny.
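The November 2026 date follows from simple calendar arithmetic. The sketch counts 180 days from the May 14 first trading day; many lockup agreements instead run from the date of the final prospectus, which would shift the result by a day or two, so treat the output as approximate.

```python
from datetime import date, timedelta

FIRST_TRADING_DAY = date(2026, 5, 14)
LOCKUP_DAYS = 180  # typical lockup length; the actual CBRS agreements may differ

lockup_expiry = FIRST_TRADING_DAY + timedelta(days=LOCKUP_DAYS)
print(lockup_expiry.isoformat(), lockup_expiry.strftime("%A"))
```

Counting from the first trading day lands on November 10, 2026, consistent with a November supply event.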

The broader tape this changes

For Anthropic, which is reportedly pre-marketing an October 2026 listing at $350 billion pre-money, the Cerebras reception is binary. A successful CBRS first month builds the institutional appetite for the Anthropic book. A wobbly first month forces Anthropic to either delay or reset valuation expectations. For Databricks, which has been rumored to file in H2 2026, Cerebras is the comp print — bankers will reference CBRS allocation discipline in every Databricks pitch.

For NVIDIA, the impact is more subtle. CBRS at $48.8 billion is not a market cap threat to a $4 trillion company. But proof that public market capital will pay roughly 96 times trailing revenue for a wafer-scale architecture validates the thesis that AI chip differentiation is durable, which in turn supports NVIDIA's own multiple expansion. The two trades are correlated, not competitive, at least through the next 12 months.

The most underappreciated read-through is for the local LLM thesis. Our Apple Q2 2026 Mac surprise coverage walked through why local inference is becoming a real category. Cerebras's IPO success is the cloud-side mirror of that same secular trend: AI inference is the chokepoint, the architecture choices matter, and capital is flowing toward both edges of the inference stack simultaneously.

Our read on where this prints

We are not buying CBRS at IPO. Allocation is impossible at retail scale, and as a matter of policy we do not chase first-day pops in chip IPOs. We are watching three things specifically: the first earnings call in August 2026 for OpenAI revenue concentration disclosure, the export control regime evolution through Q3 2026, and the NVIDIA Vera Rubin shipment cadence for the moat-closure risk. If those three signals hold, we revisit a position post-lockup in November 2026 with a cost basis closer to fair value rather than IPO momentum.

What we are not doing is dismissing the deal because the valuation looks expensive on trailing revenue. Nearly 96 times 2025 revenue for a wafer-scale chip company with a $20 billion anchor customer and AWS Bedrock distribution is rich, but it is not irrational. The AI chip market is winner-take-most, and Cerebras has positioned itself as the only viable third leg in a market that just became too large to be a duopoly. That positioning is what the order book paid for.
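The revenue multiple depends on which capitalization view you divide by. This sketch uses only figures quoted in the article: 2025 revenue of $510 million and the two valuation views.

```python
REVENUE_2025 = 510e6  # trailing revenue, USD

VALUATIONS = {
    "fully diluted": 48.8e9,
    "float-only": 26.6e9,
}

for view, value in VALUATIONS.items():
    multiple = value / REVENUE_2025
    print(f"{view}: {multiple:.1f}x trailing revenue")
```

The fully diluted view works out to about 95.7x and the float-only view to about 52.2x, so the multiple an investor quotes says as much about their capitalization convention as about the deal.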

Frequently Asked Questions

What is the Cerebras IPO price range?

Cerebras raised its IPO price range to $150 to $160 per share on May 11 2026, up from the initial $115 to $125 range disclosed during the early-May roadshow. At the high end of the new range, the company raises approximately $4.8 billion in gross proceeds.

What is the Cerebras IPO valuation?

Fully diluted, Cerebras prices at approximately $48.8 billion at the top of the $150 to $160 range. The float-only post-money calculation, which excludes locked-up insider shares and employee equity, lands closer to $26.6 billion. Both figures are accurate depending on which capitalization view you use.

When does Cerebras start trading on Nasdaq?

Cerebras priced the night of May 12 2026 and begins trading on the Nasdaq Global Select Market under ticker CBRS at the opening cross on May 14 2026, according to Bloomberg and CNBC reporting.

How oversubscribed was the Cerebras IPO?

The Cerebras book ran more than 20 times oversubscribed, according to CNBC and Bloomberg reporting on May 11 and May 12 2026. That level of demand is consistent with the strongest chip IPOs of the past two decades and pushed bookrunners to raise the price range during the marketing period.

Who are the lead underwriters on the Cerebras IPO?

Morgan Stanley, Citigroup, Barclays, and UBS Investment Bank are the lead bookrunners. Mizuho and TD Cowen are additional bookrunners on the deal, per the Cerebras press release dated May 4 2026.

How much revenue did Cerebras post in 2025?

Cerebras reported $510 million in revenue for fiscal year 2025, split roughly $358 million from hardware sales and $152 million from cloud and services. The company swung to a 47% net margin while booking $145.9 million in GAAP operating loss, with the reconciliation driven by the OpenAI compute commitment's revenue recognition gates.

What is the OpenAI deal Cerebras disclosed?

Cerebras disclosed a multi-year agreement with OpenAI valued at more than $20 billion, covering 750 megawatts of low-latency compute capacity through 2028. The deal is the largest single customer commitment in the prospectus and underpins revenue visibility through the lockup period.

What is the WSE-3 wafer-scale chip?

The Wafer Scale Engine 3 is Cerebras's flagship silicon: a single chip etched from an entire silicon wafer with 4 trillion transistors, 900,000 AI-optimized cores, 44 gigabytes of on-chip SRAM, and 125 petaflops of peak AI performance. By comparison, NVIDIA's H200 GPU has roughly 80 billion transistors.

How does Cerebras compare to NVIDIA for AI inference?

Cerebras Inference clocks 3,000 to 4,500 tokens per second on benchmarks like Llama 3.1 70B and DeepSeek-V3, compared to 150 to 300 tokens per second on equivalent NVIDIA GPU clusters without aggressive quantization. Depending on which ends of the two ranges you pair, that is a 10x to 30x throughput advantage, roughly 20x in the middle, driven by the wafer-scale architecture's sharp reduction in off-chip memory traffic and inter-chip communication.

What are the biggest risks in the Cerebras IPO?

Customer concentration is the top item flagged in the S-1/A: three customers (G42, MBZUAI, and OpenAI) accounted for the majority of 2025 revenue. Export control exposure to Abu Dhabi-anchored customers, OpenAI's potential pivot to alternative silicon, and NVIDIA's Vera Rubin roadmap closing the inference throughput gap are the three scenarios that would force a material rerating.

Is Cerebras the biggest AI IPO of 2026?

Yes. Cerebras is the biggest AI IPO year-to-date in 2026 by both proceeds raised ($4.8 billion) and valuation ($48.8 billion fully diluted). It is also the first pure-play AI chip IPO of the year. Anthropic has signaled a potential October 2026 listing at $350 billion pre-money, which would supersede Cerebras if it prices on schedule.

What should developers and AI builders watch in the months after the Cerebras IPO?

Five signals to track through the end of 2026: the first CBRS earnings call in August 2026 for OpenAI revenue concentration disclosure, Commerce Department updates on G42 and MBZUAI export licensing, NVIDIA's Vera Rubin shipment cadence for inference throughput benchmarks, AWS Bedrock CS-3 customer adoption data, and Anthropic's October 2026 IPO timing for the next public market read on AI infrastructure valuations.