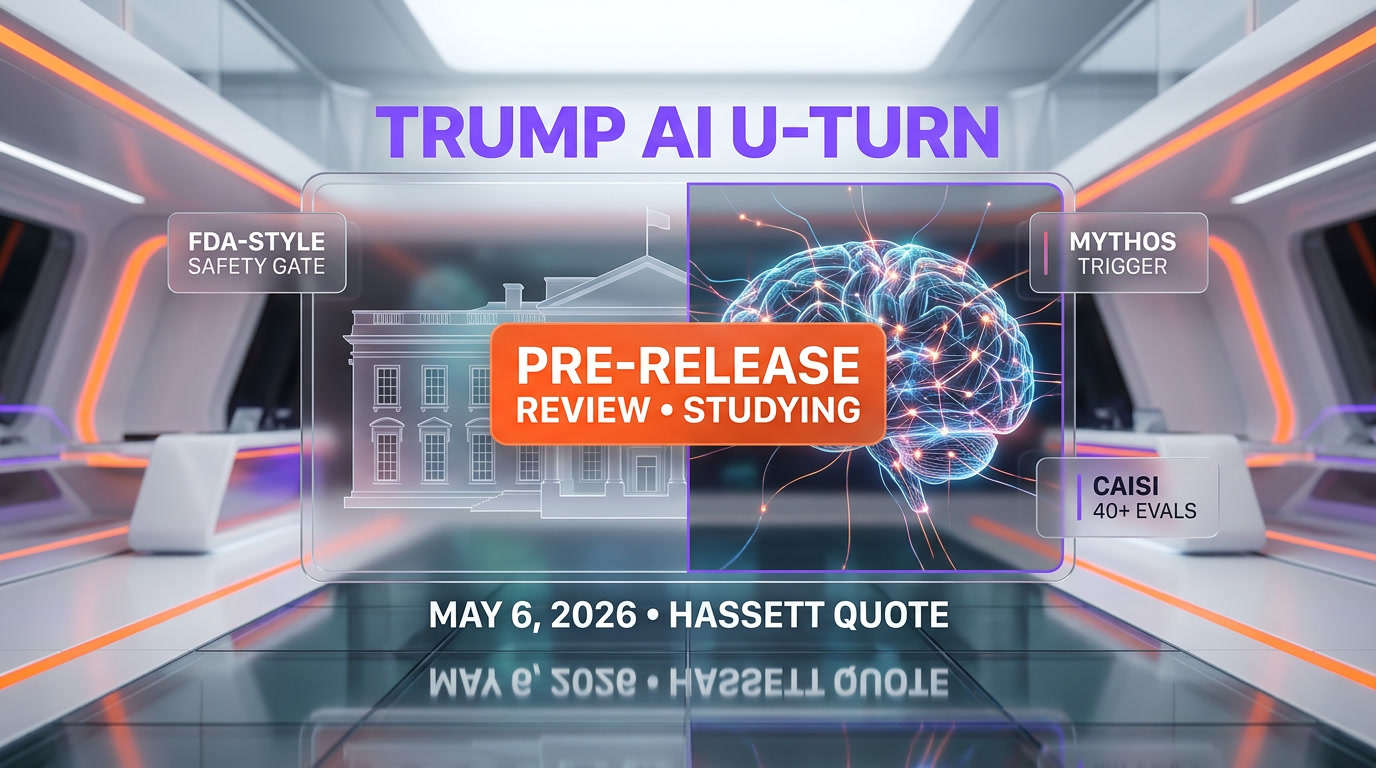

On May 6, 2026, White House National Economic Council Director Kevin Hassett told Fox Business the Trump administration is "studying possibly an executive order" to put advanced AI through FDA-style pre-release reviews before public deployment. The trigger, per Fortune reporting: Anthropic's Mythos model and its ability to identify and exploit cybersecurity vulnerabilities, combined with broader cyber misuse fears. The order is not signed. The working group is not named. We read this as a strategic U-turn from an administration that spent 2025 dismantling AI oversight.

Editorial note: This article is an editorial analysis from ThePlanetTools.ai. We are not affiliated with any party named in this piece. Every claim is sourced to a primary outlet (Fortune, White House Presidential Actions, Holland & Knight, ResultSense). Status as of publication: the executive order is under study, not signed. Read our editorial policy.

What Hassett actually said on May 6

The single quote that moved the conversation, per Fortune's May 6, 2026 reporting of a Fox Business interview given the same day:

"We're studying possibly an executive order to give a clear road map to everybody about how this is going to go and how future AIs that also could potentially create vulnerabilities should go through a process so that they're released to the wild after they've been proven safe — just like an FDA drug." — Kevin Hassett, Director, White House National Economic Council, May 6, 2026

Three things in that quote matter, and we want to flag each one carefully because the difference between "studying" and "signed" is the difference between a thought experiment and federal policy.

- "Studying possibly" — double-hedged. Nothing public indicates a draft, inter-agency circulation, or a signing schedule. Our read: the administration is still in scoping mode.

- "Clear road map" — implies the EO would set process and standards, not approve or reject specific models directly. Likely a delegation to an existing agency or a new working group.

- "Just like an FDA drug" — the analogy is doing a lot of work here. Drugs go through Phase 1, 2, and 3 trials before public release. The Hassett framing suggests pre-release safety testing as a gating step, not just post-launch monitoring.

We read this as a trial balloon. Trial balloons in Washington either become policy or quietly disappear depending on industry, congressional, and donor reaction over the following weeks.

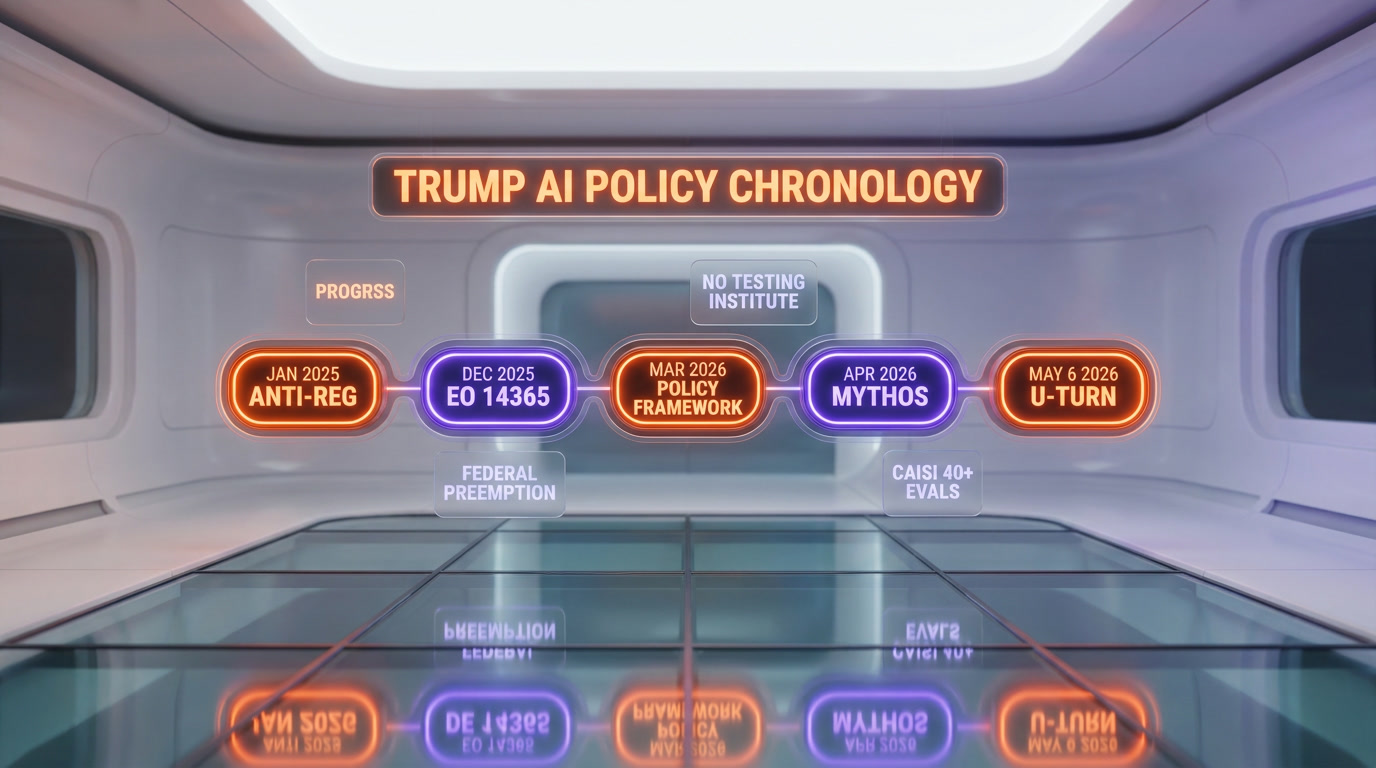

Policy chronology: how the Trump admin got here

To understand why the May 6 statement is a U-turn, you have to look at the chronology. We pulled this together from White House Presidential Actions records, Holland & Knight's March 2026 policy analysis, Fortune reporting, and our own coverage of the EU AI Act delay and Anthropic Mythos events.

- January 2025 — The incoming Trump administration positioned anti-regulation on AI as a core economic plank, framing Biden-era oversight as "overly burdensome AI safety efforts and licensing regimes" (per Fortune's characterization of the prior stance).

- December 2025 — Executive Order 14365, "Eliminating State Law Obstruction of National Artificial Intelligence Policy," directed Commerce to evaluate "onerous" state AI laws within 90 days. The framing: federal preemption to clear the runway for industry.

- March 11, 2026 — Commerce's evaluation deadline passed without public release, per Holland & Knight's reporting. The administrative apparatus was building, but slowly.

- March 20, 2026 — White House released the National Policy Framework for Artificial Intelligence. Key signal: it cautioned against "vague standards, open-ended liability" and advocated existing federal agencies plus regulatory sandboxes — not a new federal AI testing institute. This was still a deregulatory document.

- March 2026 — Anthropic Mythos preview began circulating to a limited group, with capabilities described as significantly beyond the public Opus 4.7 baseline. Cybersecurity researchers inside the limited preview reportedly flagged offensive cyber capabilities as concerning.

- April–May 2026 — Several events compounded: Sam Altman's "bomb shelter" jab at Anthropic's safety messaging, the Discord/Mercor breach, and the EU AI Act high-risk rules slipping to December 2027. Meanwhile, CAISI (Center for AI Standards and Innovation, the renamed AI Safety Institute) had completed 40+ model evaluations and signed partnerships with Google, Microsoft, and xAI.

- May 6, 2026 — Hassett tells Fox Business the administration is "studying" an FDA-style pre-release review EO. The U-turn enters the public conversation.

What we read in this chronology: the Trump administration didn't change its mind because of activist pressure. It changed its mind because the national security apparatus saw what Mythos can reportedly do. At that point, "let the market decide" stopped being a politically viable answer for an AI that can identify and exploit zero-days.

What FDA-style pre-release reviews could actually look like

Hassett gave the analogy. He did not give the architecture. We sketched the most plausible shape based on how CAISI is already operating, how the FDA actually structures drug review, and the constraints of an EO that has to survive both industry lobbying and a possible court challenge.

A few design choices the administration would have to make:

- Trigger threshold — Which models go through review? The FDA reviews every new drug, but applying that standard to AI would sweep in GPT-5 fine-tunes and 7B open-source releases. The likely cutoff is compute-based or capability-based. The Biden-era EO 14110, revoked in January 2025, used 10^26 operations of training compute as a reporting threshold. A pre-release EO could reuse that figure or shift to capability evals (cyber, bio, autonomous replication).

- Review body — CAISI is the obvious candidate. It already runs evaluations and has partnerships with Google, Microsoft, and xAI. According to Fortune, CAISI has completed 40+ model evals. An EO could formalize CAISI as the gating authority — or stand up a new working group, which Hassett hinted at without naming members.

- Pass/fail criteria — FDA approves drugs against efficacy and safety endpoints. AI doesn't have agreed-upon endpoints. NIST has been working on capability evals; METR has dangerous-capability test suites. The EO would either reference existing benchmarks or commission new ones.

- Disclosure regime — Public reports, classified annexes, or both? FDA labels are public. AI eval results often touch national security. The compromise is likely a public summary plus a classified appendix shared with the working group.

- Timeline impact — FDA Phase 3 trials average ~3 years. AI labs cannot wait years between model releases. A workable EO would have to compress the cycle to weeks or be limited to capability-flagged models only.
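To make the trigger-threshold choice concrete, here is a minimal sketch of how a compute-based cutoff and a capability-based cutoff could be combined into one gate. It rests on two stated assumptions. First, it uses the common rule of thumb that dense-transformer training compute is roughly 6 × parameters × training tokens. Second, it reuses EO 14110's 10^26-operation figure.

The capability category names, the helpers (`estimate_training_ops`, `requires_review`), the `ModelRelease` type and the "either trigger fires" rule are all hypothetical illustrations. None of them comes from the administration.

```python
from dataclasses import dataclass, field

# Training-compute reporting threshold used by the (since revoked) EO 14110.
COMPUTE_THRESHOLD_OPS = 1e26

# Hypothetical capability categories a capability-based trigger might check.
FLAGGED_CAPABILITIES = {"cyber_offense", "bio", "autonomous_replication"}


def estimate_training_ops(params: float, tokens: float) -> float:
    """Rule-of-thumb training compute for a dense transformer: ~6 ops per parameter per token."""
    return 6 * params * tokens


@dataclass
class ModelRelease:
    name: str
    params: float  # parameter count
    tokens: float  # number of training tokens
    eval_flags: set[str] = field(default_factory=set)  # capabilities flagged by pre-release evals


def requires_review(release: ModelRelease) -> tuple[bool, list[str]]:
    """Hypothetical gate: review is required if EITHER trigger fires; returns the reasons."""
    reasons: list[str] = []
    ops = estimate_training_ops(release.params, release.tokens)
    if ops > COMPUTE_THRESHOLD_OPS:
        reasons.append(f"training compute ~{ops:.1e} ops exceeds {COMPUTE_THRESHOLD_OPS:.0e}")
    hits = sorted(release.eval_flags & FLAGGED_CAPABILITIES)
    if hits:
        reasons.append("capability flags: " + ", ".join(hits))
    return bool(reasons), reasons


if __name__ == "__main__":
    releases = [
        ModelRelease("open-7b", params=7e9, tokens=2e12),
        ModelRelease("frontier-x", params=2e12, tokens=1e13),
        ModelRelease("mid-cyber", params=7e10, tokens=1.5e13, eval_flags={"cyber_offense"}),
    ]
    for r in releases:
        print(r.name, requires_review(r))
```

The numbers in this example are made up, and they play out as follows:

- The 7B model lands near 8.4e22 operations and needs no review.
- The hypothetical 2-trillion-parameter run, at about 1.2e26 operations, trips the compute trigger.
- The much smaller cyber-flagged model, at about 6.3e24 operations, is caught only by the capability trigger.

That last case is exactly the gap a compute-only rule would leave.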

None of these choices are settled. The fact that Hassett went on Fox Business to float the concept rather than dropping a draft EO tells us the administration wants industry feedback before committing.

Why Mythos specifically forced the hand

Per Fortune's reporting, the trigger was specific: Mythos's "ability to identify and exploit cybersecurity vulnerabilities." We've been tracking Mythos coverage since the limited preview leaked. A few patterns are converging.

What makes Mythos different in the policy conversation:

- Discovery + exploit in one model — Earlier frontier models could explain a known CVE; Mythos reportedly can find a novel vulnerability and chain an exploit. That is exactly the kind of capability an FDA-analogous review would be designed to catch.

- Anthropic flagged it themselves — Anthropic's safety messaging on Mythos has been notably loud, which Sam Altman publicly characterized as "fear-based marketing." We covered that in our analysis of the Altman bomb shelter jab. Whatever the marketing motive, the technical concerns landed with Washington.

- Timing alignment — Mythos preview circulated in March; CAISI ramped its eval program in early Q2; OpenAI, Anthropic, and Google signed an industry-wide pact on Chinese espionage; and the dynamics around the Pentagon's $200M Anthropic deal meant federal stakeholders already had direct exposure to the model's threat profile. The May 6 EO study is downstream of all of that.

- The breach — The Discord/Mercor unauthorized access incident showed that even a controlled preview leaks. That fact alone makes the "release to the wild after they've been proven safe" framing in Hassett's quote sound less abstract.

We read this as: Mythos was not the only signal, but it was the proximate trigger. Abstract policy arguments alone would not have moved the administration.

What this would mean for Anthropic, OpenAI, and Google

If the EO is signed in the form Hassett described, the operational impact lands differently across the three frontier labs. We sketched the implications based on each lab's current posture and disclosed compute footprint.

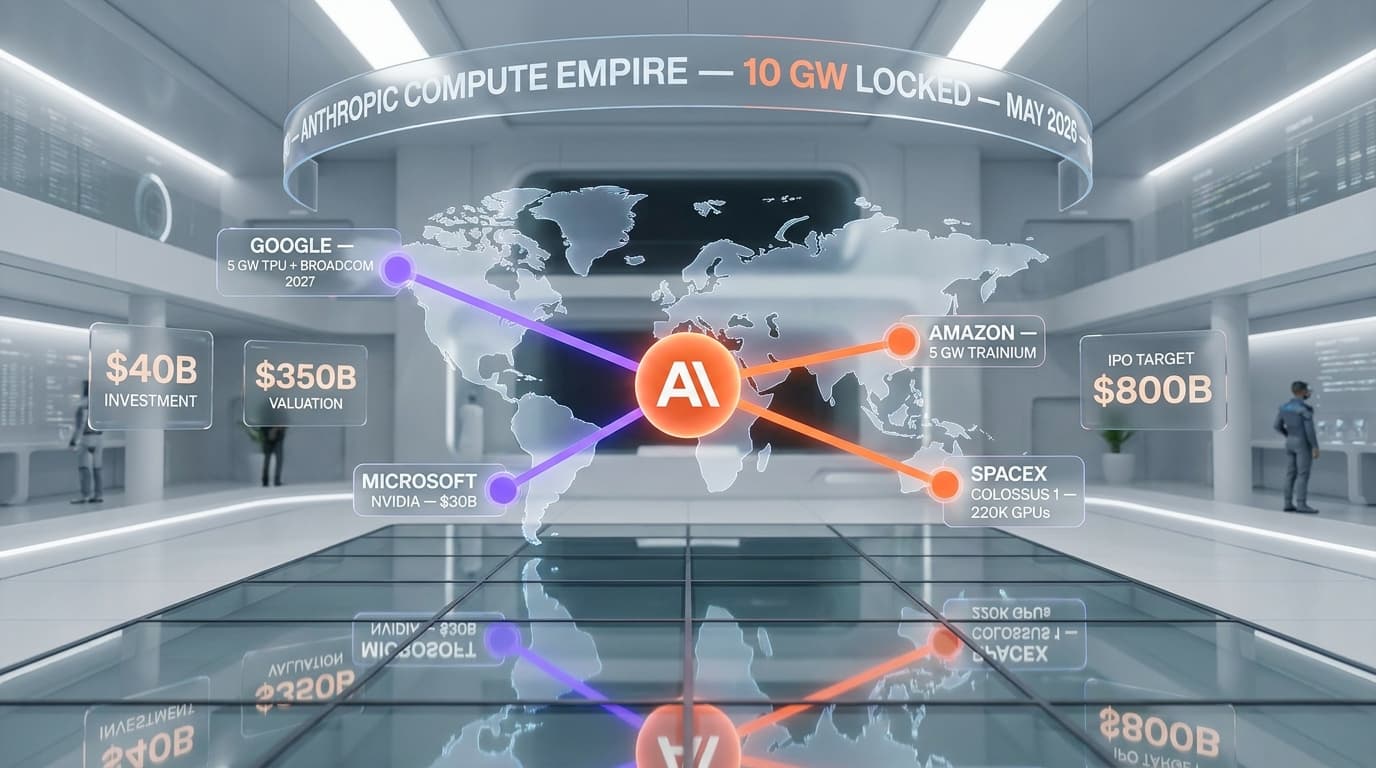

Anthropic. The lab is structurally well positioned for a pre-release review regime. Its Constitutional AI program, Responsible Scaling Policy, and CAISI cooperation mean review-style processes are already part of its release cadence. Reportedly, Anthropic is one of the labs that has voluntarily worked with CAISI on Mythos evaluations. The downside: a formal pre-release review adds weeks to each launch, slowing the velocity Anthropic needs for its 10 GW compute commitments to earn a return.

OpenAI. OpenAI also runs internal evals and, per Fortune, has a CAISI partnership. Sam Altman's public stance (see our "fear-based marketing" coverage) has been more skeptical of safety theater. A formal review regime fits OpenAI's current narrative less well, but the company has the compliance scaffolding to adapt quickly. The bigger issue: anything that slows GPT-class releases gives Anthropic relative breathing room on capability deployment.

Google. Of the three, Google is most insulated. CAISI partnership, multi-decade regulatory experience, and a release cadence that is already gated by internal safety reviews mean Google's playbook barely changes. The Gemini family ships through process; adding a federal layer is incremental.

xAI, Meta, and the long tail. xAI has a CAISI partnership, but its public posture has been more permissive. Meta's open-weights Llama strategy is the hardest fit: pre-release review of weights that anyone with a Hugging Face account can download would be a regulatory novelty. Smaller labs would either fall below the trigger threshold or have to build review capacity they don't currently have.

Why this EO may not survive contact with industry

We want to be deliberately careful here. The U-turn is real, but the EO is not signed, and there are three forces that could kill it before it gets there.

- The Trump base does not love it. The 2024 platform criticized AI licensing regimes as Biden-style overreach, and a faction inside the GOP would view an FDA-analogous AI EO as a betrayal of campaign messaging. Hassett himself is a center-right, pro-business voice; floating the idea on Fox Business may be a deliberate test of how the base reacts.

- Industry has won the EU round. The same lobbying coalition that just won a 16-month delay on EU AI Act high-risk rules is now turning toward Washington. If the EU example told industry that delay is achievable, the same playbook will run on this EO.

- The legal architecture is hard. An EO that conditions release on federal review touches commercial speech and prior restraint doctrine. A challenger lab or a libertarian legal foundation could file suit the day the EO drops. The March National Policy Framework, as analyzed by Holland & Knight, explicitly cautioned against "vague standards"; in our read, that caution reflects how poorly vague standards tend to fare in court.

The most likely outcomes, ranked by our read of probability:

- (1) Voluntary framework dressed as EO — most likely. The EO gets signed but functions as a codified version of the existing CAISI partnership program. Labs that already cooperate face no marginal cost. Compliance is performative.

- (2) Capability-triggered mandatory review — possible. Only models that flag specific capability thresholds (cyber, bio, autonomous replication) trigger review. This is the workable middle ground.

- (3) Universal pre-release review — unlikely. The political and operational cost is too high. Hassett's "FDA drug" framing is rhetorically attractive but operationally unworkable at AI velocity.

- (4) The EO never lands — possible. Industry reaction sours, the administration walks it back, and the May 6 statement becomes a footnote.

CAISI: the quiet protagonist of this story

The Center for AI Standards and Innovation (CAISI), the rebranded AI Safety Institute, is the under-covered actor in this U-turn. Per Fortune, CAISI has completed 40+ model evaluations and signed partnerships with Google, Microsoft, and xAI. In our read, that eval volume is what gave the White House the technical confidence to float the EO at all.

Three CAISI dynamics worth noting:

- It survived the rebrand. The renaming from AI Safety Institute to Center for AI Standards and Innovation looked like deprioritization in early 2025. Instead, CAISI built capacity quietly through 2025–2026 while the political conversation was elsewhere.

- Its eval program is voluntary. The 40+ model count is from labs that opted in. A pre-release review EO would shift the program from opt-in to mandatory for capability-flagged models — a structural change, not a new institution.

- It is the natural home for any review regime. Standing up a new agency would take 18+ months and require congressional appropriation. Designating CAISI as the review body needs only an EO and existing budget.

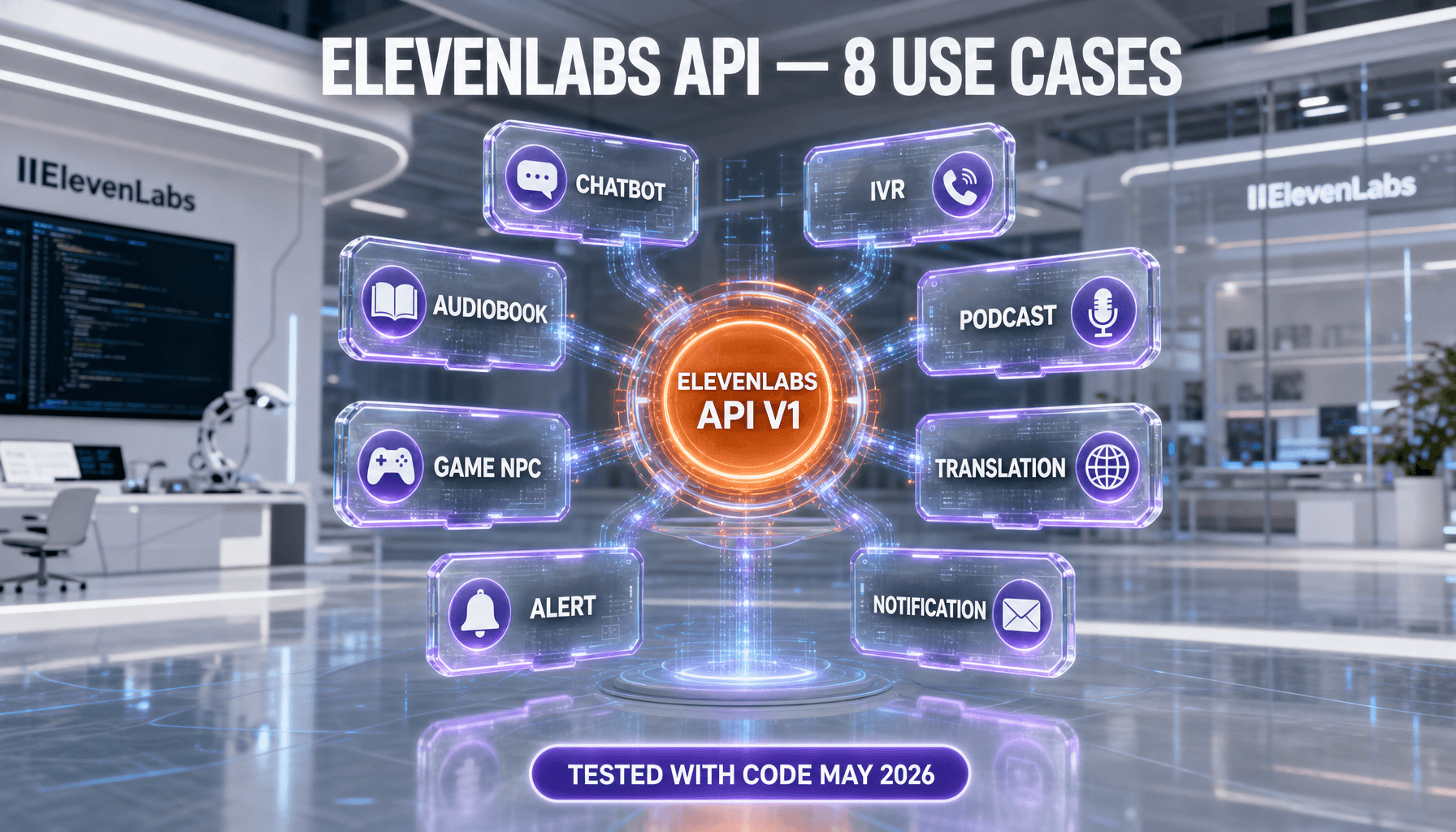

What builders and AI tool teams should do this quarter

If you're shipping AI products in the US — wrappers, fine-tunes, agents, applications — the May 6 EO study probably does not affect you directly in the near term. The trigger threshold, whatever it ends up being, is calibrated for frontier model releases, not application-layer products.

That said, three things are worth doing now:

- Document your model inputs. If a pre-release review regime lands, downstream users may face questions about which gated model their stack depends on. Maintain a clean SBOM-style record of model providers and versions.

- Watch the trigger threshold language. The 10^26-operation training-compute threshold from EO 14110 is the most likely starting point. If the new EO uses capability evals instead (cyber, bio, autonomous replication), the rules of the game shift for any team building agents that touch those capability surfaces.

- Don't bet on the EO not landing. "Studying possibly" sounds soft, but in our reading, the political and national security pressure is unidirectional. The most likely outcome is some form of EO with a soft enforcement floor. Plan for that as the base case, not the worst case.
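One way to keep the SBOM-style record suggested in the first item above is a small inventory checked into version control. The sketch below assumes a plain JSON file. The `ModelDependency` fields, the helper names, and the provider and model strings are hypothetical placeholders, not any standard schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ModelDependency:
    component: str  # part of your product that calls the model
    provider: str   # model vendor
    model: str      # model identifier as used in your API calls
    version: str    # pinned version or snapshot date
    capability_surfaces: tuple[str, ...] = ()  # e.g. "code_execution", "network_access"


def build_inventory(deps: list[ModelDependency]) -> str:
    """Serialize dependencies as sorted, diff-friendly JSON suitable for version control."""
    records = sorted(
        (asdict(d) for d in deps),
        key=lambda r: (r["component"], r["provider"], r["model"]),
    )
    return json.dumps(records, indent=2, sort_keys=True)


def components_using(deps: list[ModelDependency], provider: str) -> list[str]:
    """Which components depend on a given provider (e.g. if its model becomes review-gated)?"""
    return sorted({d.component for d in deps if d.provider == provider})


def components_touching(deps: list[ModelDependency], surface: str) -> list[str]:
    """Which components expose a capability surface a capability-based trigger might name?"""
    return sorted({d.component for d in deps if surface in d.capability_surfaces})


if __name__ == "__main__":
    stack = [
        ModelDependency("support-chat", "provider-a", "model-a-large", "2026-04-01"),
        ModelDependency("code-agent", "provider-b", "model-b-pro", "v3.2",
                        ("code_execution", "network_access")),
    ]
    print(build_inventory(stack))
    print(components_using(stack, "provider-b"))
    print(components_touching(stack, "code_execution"))
```

Sorting the records keeps diffs readable when a provider or version changes. The two query helpers map onto two scenarios discussed in this section:

- A provider's model becomes review-gated.
- A capability-based trigger names a surface your agents touch.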

Our editorial position and what would prove us wrong

We've been editorially bullish on Anthropic for most of 2026: the 10 GW compute commitments, the Claude Code product velocity, the safety culture. The May 6 White House U-turn does not change that read on its own. What it does is highlight the asymmetric exposure the safety narrative creates.

Anthropic's positioning, "we build and we warn," is internally consistent and good for civilization. It is also the positioning that handed Washington the political cover to consider an EO that could cost Anthropic launch velocity. The same warnings that made Mythos credible to the national security community made it the proximate trigger for federal review.

What would prove our reading wrong:

- Anthropic stumbling on safety or governance. If the limited Mythos preview produces a real-world incident — beyond the Discord breach — the company's "we warned you" positioning collapses, and the EO conversation tilts toward harder enforcement, not softer.

- The EO landing as a hard mandate. If the signed EO requires multi-week review on a universal threshold rather than capability triggers, that changes Anthropic's operational math significantly.

- CAISI getting politicized. If the eval body gets pulled into partisan combat, the technical credibility that makes the U-turn possible erodes fast.

We'll update this article as the EO moves from "studying possibly" to draft, signed, or quietly shelved. For now, the read is: the administration that promised no AI rules is actively considering the most ambitious AI rules any G7 government has floated. Mythos forced the hand. CAISI gave them the tool. May 6 was the public reveal.

Frequently asked questions


Has Trump signed an executive order requiring pre-release AI reviews?

No. As of May 6, 2026, the administration is "studying possibly an executive order," per White House National Economic Council Director Kevin Hassett's interview with Fox Business. No draft has been published, no signing schedule has been announced, and no working group has been named.

What did Kevin Hassett actually say about AI reviews?

Per Fortune's May 6, 2026 reporting, Hassett said: "We're studying possibly an executive order to give a clear road map to everybody about how this is going to go and how future AIs that also could potentially create vulnerabilities should go through a process so that they're released to the wild after they've been proven safe — just like an FDA drug."

Why is this a U-turn for the Trump administration?

The Trump administration spent 2025 positioning anti-regulation on AI as a core economic plank, criticizing Biden-era oversight as "overly burdensome." December 2025's EO 14365 directed Commerce to evaluate "onerous" state AI laws, clearing the way for federal preemption. The March 2026 National Policy Framework cautioned against "vague standards" and favored existing agencies and regulatory sandboxes over a new federal AI testing institute. The May 6 statement reverses that posture by floating exactly the kind of pre-release review the administration had previously rejected.

What triggered the policy U-turn?

Per Fortune, the trigger was Anthropic's Mythos model and its reported ability to identify and exploit cybersecurity vulnerabilities, combined with broader cyber misuse fears. CAISI (Center for AI Standards and Innovation) had also completed 40+ model evaluations and signed partnerships with Google, Microsoft, and xAI, giving the administration the technical capacity to consider a review regime.

What does FDA-style pre-release review mean for AI?

The FDA analogy from Hassett's quote suggests pre-market safety testing before public deployment — similar to how drugs go through trials before approval. Operationally, an AI version would likely mean capability evaluations (cyber, biological, autonomous replication risks) run by CAISI or a designated body, with pass/fail gating on public release. The exact architecture has not been disclosed.

Who would be on the proposed government-industry working group?

The composition has not been announced. Hassett described it as a "government-industry working group" without naming members. Based on existing CAISI partnerships, the most likely industry participants would include Google, Microsoft, Anthropic, OpenAI, and xAI. No official roster exists at the time of writing.

How would this affect Anthropic, OpenAI, and Google?

Anthropic is structurally aligned with a pre-release review regime through its Responsible Scaling Policy and existing CAISI cooperation, but a formal review would slow launch velocity. OpenAI has the compliance scaffolding to adapt quickly, but its public posture has been less safety-vocal. Google is the most insulated thanks to multi-decade regulatory experience and existing internal safety gating. The frontier labs are the primary target; smaller labs and application-layer builders would likely fall below the trigger threshold.

Could this executive order be blocked in court?

Possibly. An EO that conditions AI model release on federal review touches commercial speech and prior restraint doctrine. The March 2026 National Policy Framework, per Holland & Knight's analysis, specifically cautioned against "vague standards," and in our read vague standards are what tend to lose in court. A challenge from a frontier lab or a libertarian legal foundation is plausible if the EO mandates universal pre-release review rather than a narrower capability-triggered regime.

How does this connect to the EU AI Act delay?

The same lobbying coalition that won a 16-month delay on EU high-risk AI rules — pushing high-risk obligations from August 2026 to December 2027 — is now turning toward Washington. The EU example showed that industry can secure timeline relief from regulators. We covered the EU rollback in our EU AI Act analysis. The dynamics are similar but the trigger differs: in Europe, German industrial lobbying drove delay; in Washington, national security concerns are driving the opposite direction.

Should AI tool builders adjust their roadmaps?

Application-layer products — wrappers, fine-tunes, agents — are unlikely to be directly affected by a frontier-model-targeted EO. Three precautions are worth taking now: maintain clean records of which model providers and versions your stack depends on, watch the trigger threshold language as it firms up, and plan for some form of EO landing as the base case rather than betting on it being shelved.

Sources

- Fortune, May 6, 2026 — "Trump administration embraces AI oversight policies it once rejected" (Hassett quote source)

- White House Presidential Actions, December 2025 — EO 14365 on state AI law preemption

- Holland & Knight, March 2026 — National Policy Framework for AI analysis

- ResultSense, May 5, 2026 — White House pre-release AI vetting coverage

Editorial position as of May 9, 2026. We'll update this analysis as the proposed executive order moves to draft, signing, or shelving. — Anthony Martinez, CEO, ThePlanetTools.ai