Anthropic's 2026 Developer Conference, held in San Francisco on May 7, 2026, announced four agent platform updates, two of which went live that day. Multi-Agent Orchestration went live in production (Anthropic reports ~33% lower cost and 20-30 seconds saved on multi-draft requests). Memory (Global + Personal markdown stores) went live, with the Personal layer reserved for subscribers. Dreaming launched as a research preview in which agents review up to 100 past sessions in the background and rewrite their own memory between runs. Outcomes, a grader-AI feedback loop, is coming soon and has not yet shipped.

TL;DR — what actually shipped, what is preview, what is coming

We read every public source on the May 7 keynote (the every.to chain-of-thought report, the Anthropic newsroom, the Angela Jiang sessions) and pulled out the four announcements. The headline is not the one most outlets ran with. The bombshell wrapper was "Dreaming" — but the unit-economics shift is Multi-Agent Orchestration going GA. Here is the honest status table:

| Feature | Status (May 7, 2026) | What it actually does |
|---|---|---|
| Multi-Agent Orchestration | Live in production | A coordinator deploys subagents in parallel; cuts costs by ~33% and saves 20-30s on multi-draft requests. |
| Memory (Global + Personal) | Live | Markdown-based memory stores. Subscribers get a personal memory layer on top of the global one. |
| Dreaming | Research preview | Background process that reviews up to 100 past sessions and rewrites the agent's own memory between runs. |
| Outcomes | Coming soon | A grader AI evaluates another AI's work against goals and rubrics. Closed feedback loop. |

If you only remember one quote from the conference, make it Angela Jiang, Anthropic's head of product for the Claude platform: "Different harnesses paired with the same model produce drastically different results." That sentence is the strategic frame for the entire May 7 release — it is no longer a model race, it is a harness race.

Dreaming, explained without the sci-fi gloss

Anthropic borrowed the metaphor from neuroscience and Asimov on purpose. In humans, sleep is when the day's experiences get replayed, pruned, and consolidated into long-term memory. In Anthropic's research preview, "Dreaming" is the same loop on an agent: between runs, a background process pulls up to 100 past sessions out of memory, replays them through the model, and edits the agent's memory file in place — strengthening what worked, deleting what failed, refactoring repeated patterns into reusable instructions.
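
To make the loop concrete, here is a minimal Python sketch of the consolidation step described above. It is our own illustration, not Anthropic's implementation: the `Session` record, the `dream` function, and the `min_repeats` threshold are all hypothetical, and a plain counter stands in for replaying sessions through the model.

```python
from collections import Counter
from dataclasses import dataclass

MAX_SESSIONS = 100  # the "up to 100" ceiling reported for the preview


@dataclass
class Session:
    """Hypothetical record of one past run."""
    lesson: str      # a one-line takeaway extracted from the run
    succeeded: bool  # whether the run met its goal


def dream(memory_md: str, sessions: list[Session], min_repeats: int = 2) -> str:
    """Return a rewritten memory file based on the most recent sessions."""
    recent = sessions[-MAX_SESSIONS:]
    wins = Counter(s.lesson for s in recent if s.succeeded)
    failures = {s.lesson for s in recent if not s.succeeded}

    # Delete what failed: drop memory bullets that match a failed lesson.
    kept = [line for line in memory_md.splitlines()
            if line.removeprefix("- ").strip() not in failures]

    # Strengthen what worked: promote lessons that recur in successful runs.
    for lesson, count in wins.items():
        entry = f"- {lesson}"
        if count >= min_repeats and lesson not in failures and entry not in kept:
            kept.append(entry)
    return "\n".join(kept) + "\n"
```

The point of the sketch is the shape of the operation: the input and the output are both a markdown file, and nothing about the model itself changes.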

The mechanic is mundane and the implication is not. Until May 7, every Claude agent was a goldfish: it could read a memory store and write to it during a run, but it could not reflect on its own behavior after the run was done. Dreaming is the first publicly announced, consumer-facing implementation of asynchronous self-improvement on a major Western model. It is also, to be precise, a research preview: Anthropic is explicit that it is neither GA nor beta. It is shipped as an opt-in capability on Managed Agents and is under active study.

Why 100 sessions, and why background

The 100-session ceiling is the part that matters technically. Reviewing every prior session every night would be both expensive and useless — most sessions are noise. Anthropic's choice of "up to 100" is a research-preview hyperparameter that bounds the cost while giving the dreaming pass enough signal to spot recurring patterns. Doing it in background — when the agent is idle, off the user's clock — is what makes it economically viable. You pay for the dream once; you stop paying every time the agent has to re-derive the same lesson during a live run.

Dreaming vs fine-tuning vs RLHF

This is not fine-tuning. Fine-tuning rewrites model weights — heavy, slow, requires ML ops, and Anthropic does not let you do it on Claude. This is not RLHF either; RLHF requires human raters and a reward model trained offline. Dreaming sits in the third lane: memory editing, not weight editing. The base model is unchanged. What changes is the markdown memory file the agent reads at the top of every run. That is the only thing required for an Asimov-grade loop on top of an off-the-shelf foundation model — and it is the design choice that makes Dreaming deployable as a consumer feature instead of a research-lab artifact.

Multi-Agent Orchestration is the under-reported headline

Dreaming dominated Twitter. Multi-Agent Orchestration dominated the spreadsheets. As of May 7, every paid Claude account that uses Managed Agents can deploy a coordinator agent that spawns subagents in parallel. The Anthropic-reported numbers from the keynote: 20-30 seconds shaved from multi-draft generation tasks, and ~33% cost reduction on the same workloads versus the sequential single-agent baseline.

The cost number is the one to internalise. A 33% cut is not a feature, it is a pricing event. It re-prices every team that was running long-form content generation, multi-file refactors, or research synthesis through the Claude API. The implicit message: if you were paying for sequential agent loops in March 2026, you are now overpaying by a third.
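
A quick worked example of what that looks like on a bill. The $9,000 monthly spend is an invented figure for illustration; only the ~33% reduction comes from the keynote numbers, and `reprice` is our own helper name.

```python
def reprice(sequential_cost: float, reduction: float = 0.33) -> dict[str, float]:
    """Compare a sequential bill with the same workload after the reported cut."""
    new_cost = sequential_cost * (1 - reduction)
    return {
        "new_cost": new_cost,
        "saved": sequential_cost - new_cost,
        # How much higher the old bill is, relative to the new one.
        "premium_vs_new": sequential_cost / new_cost - 1,
    }


bill = reprice(9000.0)  # hypothetical monthly spend in dollars
print({name: round(value, 2) for name, value in bill.items()})
```

On those assumptions the bill drops to about $6,030, a saving of about $2,970. Seen from the other side, the old sequential bill was roughly 49% higher than the new one, which is the sharper way to read "overpaying by a third".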

How the orchestrator actually decomposes a task

The coordinator does not run the work itself. It reads the request, decides whether the task is parallelisable, splits it into independent subtasks, fans out subagents (each with its own context window), waits for them to finish, and merges the results. For multi-draft requests (generate three variants of a landing page, write four code review comments on a PR), the subagents' run times overlap, so you wait for roughly one wall-clock window instead of three or four. That is where the 20-30 second savings come from.
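
The fan-out-and-merge pattern can be sketched in a few lines of Python with `asyncio`. This is a generic illustration of the technique, not the Managed Agents API: `run_subagent` is a hypothetical stand-in that sleeps instead of calling a model, and `coordinate`, `run_sequentially`, and `timed` are our own names.

```python
import asyncio
import time


async def run_subagent(subtask: str, seconds: float = 0.1) -> str:
    """Stand-in for one subagent call; sleeps instead of calling a model."""
    await asyncio.sleep(seconds)
    return f"draft for: {subtask}"


async def coordinate(subtasks: list[str]) -> list[str]:
    """Fan out independent subtasks concurrently, then merge in input order."""
    return list(await asyncio.gather(*(run_subagent(t) for t in subtasks)))


async def run_sequentially(subtasks: list[str]) -> list[str]:
    """Baseline: one agent handles the subtasks one after another."""
    return [await run_subagent(t) for t in subtasks]


def timed(coro) -> tuple[list[str], float]:
    """Run a coroutine to completion and report elapsed wall-clock seconds."""
    start = time.perf_counter()
    result = asyncio.run(coro)
    return result, time.perf_counter() - start


variants = ["landing page A", "landing page B", "landing page C"]
parallel, t_parallel = timed(coordinate(variants))
sequential, t_sequential = timed(run_sequentially(variants))
print(f"parallel {t_parallel:.2f}s vs sequential {t_sequential:.2f}s")
```

Both paths return the same drafts; only the wall-clock time differs, because the three simulated calls overlap in the parallel version.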

When orchestration is the wrong tool

Sequential reasoning chains do not parallelise. If task B depends on task A's output, the coordinator falls back to a single agent and you save nothing — you may even lose a few hundred milliseconds to overhead. Anthropic was honest about this in the keynote: orchestration is for fan-out workloads, not chain-of-thought. For coding agents like Claude Code, the gain shows up most on multi-file edits and parallel test generation, less on tightly coupled refactors.
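
One way to see why dependent tasks gain nothing is to group a task graph into "waves" of work that can run at the same time. The sketch below uses Python's standard-library `graphlib`; the `plan_waves` helper and the task names are hypothetical, and we are not claiming this is how Anthropic's coordinator decides.

```python
from graphlib import TopologicalSorter


def plan_waves(depends_on: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into waves; tasks within one wave can run in parallel."""
    sorter = TopologicalSorter(depends_on)  # maps task -> tasks it depends on
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # everything whose inputs are done
        waves.append(ready)
        sorter.done(*ready)
    return waves


fan_out = {"draft_a": set(), "draft_b": set(), "draft_c": set()}
chain = {"task_b": {"task_a"}, "task_c": {"task_b"}}
print(plan_waves(fan_out))
print(plan_waves(chain))
```

The fan-out graph collapses into a single wave of three tasks, while the chain yields three waves of one task each, which is exactly the case where a coordinator has nothing to parallelise.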

Memory: Global plus Personal, markdown-first

The Memory product shipped with two layers. Global memory is the markdown store the agent reads on every run: a persistent context document the user can edit. Personal memory, available to subscribers, is a per-user layer that personalises the agent's behavior across sessions: your project conventions, your code style, your favorite test frameworks, your tone preferences.
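
As a rough mental model of the two layers, here is a small Python sketch that composes them into the text an agent might read at the start of a run. How Anthropic actually merges the layers is not something the public sources spell out, so the `build_context` function, the section headings, and the ordering (personal after global) are all assumptions.

```python
from typing import Optional


def build_context(global_md: str, personal_md: Optional[str] = None) -> str:
    """Compose the memory text an agent would read at the start of a run."""
    sections = ["## Global memory", global_md.strip()]
    if personal_md:  # only subscribers have a personal layer
        sections += ["## Personal memory", personal_md.strip()]
    return "\n\n".join(sections) + "\n"


print(build_context("- Use pytest for all tests\n", "- Prefer terse replies\n"))
```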

The choice of markdown is not aesthetic. Markdown is human-readable, diffable in git, and easy for the model to edit in place. It is the same design Anthropic uses internally — the Claude Code project memory file is markdown — and it is what makes Dreaming possible: a background process can rewrite a markdown file safely and reversibly. A binary memory format would have made the entire stack opaque to users and risky to mutate.
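
The "diffable" property is easy to demonstrate with Python's standard-library `difflib`. The memory contents and the `memory_diff` helper below are invented for illustration; the same comparison works with `git diff` if the file lives in a repository.

```python
import difflib


def memory_diff(before: str, after: str) -> str:
    """Return a unified diff between two versions of a markdown memory file."""
    lines = difflib.unified_diff(
        before.splitlines(keepends=True),
        after.splitlines(keepends=True),
        fromfile="memory.md (before)",
        tofile="memory.md (after)",
    )
    return "".join(lines)


before = "- Indent with tabs\n- Run tests before commit\n"
after = "- Indent with spaces\n- Run tests before commit\n"
print(memory_diff(before, after))
```

A reviewer can read that diff line by line and revert a single bullet, which is the kind of audit a binary memory format would not allow.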

Outcomes: the grader-AI feedback loop that is coming next

Outcomes did not ship on May 7. Anthropic announced it as a "coming soon" capability in the same keynote, and the design is straightforward: a grader AI evaluates another AI's work against an explicit goal and rubric, returns a score plus structured feedback, and the working agent uses that feedback to iterate. It closes the loop that Dreaming opens — Dreaming reviews past sessions; Outcomes reviews the current session in real time.
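
Since Outcomes has not shipped, there is no API to show. The loop itself, though, is a familiar pattern, and a minimal Python sketch makes the moving parts visible. Everything here is hypothetical: the `Grade` record, the `grade` and `iterate` functions, and the rubric predicates are our own stand-ins, with simple string checks in place of a grader model.

```python
from dataclasses import dataclass
from typing import Callable

Rubric = dict[str, Callable[[str], bool]]


@dataclass
class Grade:
    score: float         # fraction of rubric items satisfied, 0.0 to 1.0
    feedback: list[str]  # names of the rubric items that were not met


def grade(draft: str, rubric: Rubric) -> Grade:
    """Stand-in grader: checks each rubric item with a plain predicate."""
    missing = [name for name, check in rubric.items() if not check(draft)]
    return Grade(score=1 - len(missing) / len(rubric), feedback=missing)


def iterate(draft: str, rubric: Rubric,
            revise: Callable[[str, list[str]], str],
            target: float = 1.0, max_rounds: int = 3) -> tuple[str, Grade]:
    """Grade, revise on the feedback, and repeat until target or round limit."""
    result = grade(draft, rubric)
    for _ in range(max_rounds):
        if result.score >= target:
            break
        draft = revise(draft, result.feedback)
        result = grade(draft, rubric)
    return draft, result
```

In a real system `grade` and `revise` would each be a model call; the round limit is there so a draft that never satisfies the rubric cannot loop forever.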

If the four-feature stack is the product, the order is the strategy: orchestration first (parallelise), memory second (persist), Dreaming third (self-edit), Outcomes fourth (self-correct). Antho's read on this from the cockpit: Anthropic just laid out the full agent-platform roadmap on a single slide, and they sequenced the cheap-to-ship features (orchestration, memory) before the research-grade ones (Dreaming, Outcomes). That order is how a serious platform team ships.

Why this re-prices the entire agent race

The Claude vs Cursor vs Devin vs Replit competition until May 6 was a feature race: who has the best inline edits, the cleanest agent UX, the most integrations. After May 7 it is a different race: who has the harness that gets better while you sleep.

That is the Angela Jiang quote weaponised. If two teams use the same Claude Sonnet 4.5 base model — one with Anthropic's harness (orchestration + memory + dreaming) and one with a vanilla wrapper — the first team gets compounding returns over weeks. The second team is running the same agent on day 30 as on day 1. That gap is what the Anthropic platform team is selling.

What it means for Cursor, Devin, and Replit

Cursor's $50B valuation and own-model bet was always partly a hedge against exactly this scenario. If Anthropic is going to ship platform-level capabilities directly to end users, the IDE wrappers either need their own equivalent stack or they become commodity front-ends. Devin's autonomous-engineer pitch is closer to Outcomes than to Dreaming — they have been doing closed-loop evaluation for a year. Replit sits in a different lane (cloud-IDE plus deployment) and is the least exposed.

Managed Agents as the distribution wedge

The April Managed Agents launch, and the OpenClaw controversy that followed, now reads as setup. Anthropic needed a distribution surface to ship orchestration, memory, and Dreaming into. Managed Agents is that surface. The criticism that Anthropic was "embracing-extending-extinguishing" the open-source agent ecosystem looks more pointed in retrospect: every capability shipped on May 7 makes the Managed Agents platform stickier and leaves the roll-your-own alternative further behind.

Hands-on research-preview caveats — what we learned reading the docs

We did not run Dreaming end-to-end (research preview, gated). What we did do: read the public docs, the every.to dev conference report, and pattern-match against Anthropic's release cadence on prior previews like prompt caching and tool use. Three caveats worth flagging:

- Cost is not zero. Dreaming runs the model over up to 100 past sessions in background. Anthropic has not yet published a per-dream price. Expect it to be billed; expect it to be smaller than the savings it produces.

- Memory hygiene matters more now. If your agent's memory is full of bad patterns from the first week, Dreaming will reinforce them before you notice. Audit your markdown memory weekly during the preview.

- Privacy surface expands. Personal memory plus Dreaming means more user-specific data flowing through more passes. Anthropic's privacy posture is strong (no training on customer data by default for API), but enterprise teams should re-read the data-handling docs before opting in.

What we read this as — strategic interpretation

Three reads:

- Platform play, not feature drop. Orchestration plus Memory plus Dreaming plus Outcomes is one product, not four. Anthropic is shipping the agent platform layer that competitors will spend 18 months trying to clone.

- Cost compression is the wedge. The 33% orchestration discount is the customer-acquisition mechanism. New teams will switch for the price. The platform features lock them in.

- Dreaming is brand strategy as much as research. The name is sticky, the metaphor is universal, the science-fiction frame is free PR. We are writing this article. Bloomberg, Forbes, every dev outlet is writing this article. That is not an accident — it is exactly the universalise-the-niche move that gets a research preview onto every CEO's desk.

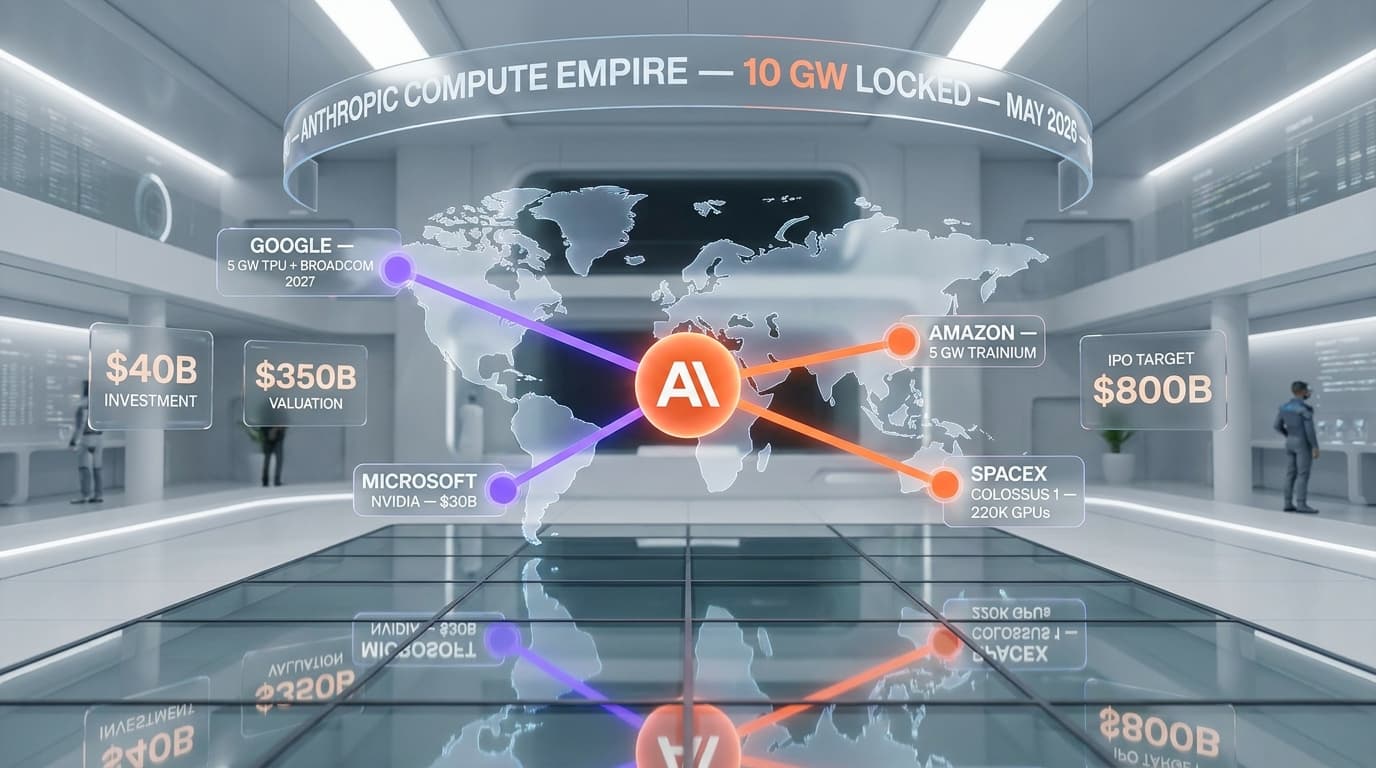

Antho's call from the cockpit: this is the most strategically important Anthropic announcement since the SpaceX Colossus compute deal. The compute deal solved the supply problem. May 7 solved the differentiation problem. Pair them with the $800B valuation backdrop and the 10 GW compute empire, and you have a company that has stacked four wins inside a single quarter.

How Dreaming pairs with Claude Opus 4.7

The model under all of this is Claude Opus 4.7, launched April 16. Opus 4.7 is the best harness-amplified base model Anthropic has shipped — 70% on CursorBench, 98.5% on XBOW vision, the new xhigh effort tier and task budgets. As we noted at launch, Opus 4.7 was clearly designed with managed agents in mind. May 7 is the other half of that bet: give the best model the best harness, and the gap to everyone else widens.

What this means if you are building on Claude this week

Three concrete moves we would make if we were shipping a Claude-based product on May 8, 2026:

- Turn on Multi-Agent Orchestration immediately. The 33% cost cut is real and it requires no code changes for fan-out workloads. Audit your agent calls; flag the parallelisable ones; switch them.

- Migrate to Memory if you are not already. Personal memory plus markdown plus diffable history is strictly better than the homegrown context-stuffing pattern most teams are running. Markdown means you can git-diff your agent's memory.

- Wait on Dreaming for production-critical agents. Research preview means it will change. Test it on internal tooling, not on customer-facing flows. Re-evaluate when Anthropic moves it from preview to GA — usually 8-12 weeks for them.

Bottom line

On May 7, 2026, Anthropic stopped competing on Claude-the-model and started competing on Claude-the-platform. Multi-Agent Orchestration is the cost lever, Memory is the persistence lever, Dreaming is the self-improvement lever, Outcomes is the self-correction lever. Two are live; one is in preview; one is coming soon. The Angela Jiang quote, "different harnesses paired with the same model produce drastically different results", is the entire 2026 thesis on a single slide.

For builders: the price of staying on a vanilla LLM wrapper just went up. For Cursor, Devin, and Replit: the harness race just got harder. For Anthropic: the agent platform moat is now visible from space. We are watching this one closely, and we will update this analysis when Outcomes ships and when Dreaming graduates from preview.

Frequently Asked Questions

What is Anthropic's Dreaming feature?

Dreaming is a research preview, announced at Anthropic's 2026 Developer Conference on May 7, 2026, where Claude Managed Agents review up to 100 past sessions in background and rewrite their own markdown memory file between runs. It is memory editing, not weight editing — the base model is unchanged.

Is Dreaming generally available?

No. As of May 7, 2026, Dreaming is a research preview, not GA, not beta. It is shipped as an opt-in capability on Managed Agents while Anthropic studies it. Multi-Agent Orchestration and Memory are the two features that went fully live on the same day.

What is Multi-Agent Orchestration on Claude?

Multi-Agent Orchestration is the live-in-production feature where a coordinator agent deploys subagents in parallel for fan-out workloads. Anthropic reported it cuts ~33% of cost and shaves 20-30 seconds off multi-draft generation tasks compared to a sequential single-agent baseline.

How is Dreaming different from fine-tuning?

Fine-tuning rewrites model weights and requires offline ML ops; Anthropic does not offer it on Claude. Dreaming rewrites the markdown memory file the agent reads at the top of every run. The base model is unchanged. It is also distinct from RLHF, which requires human raters and a separate reward model.

What is Anthropic Outcomes?

Outcomes is announced as "coming soon" — not yet shipped as of May 7, 2026. It is a feedback loop where a grader AI evaluates another AI's work against explicit goals and rubrics, returns a score plus structured feedback, and the working agent iterates on that signal in real time.

What did Angela Jiang say at the 2026 Developer Conference?

Angela Jiang, Anthropic's head of product for the Claude platform, said: "Different harnesses paired with the same model produce drastically different results." It is the strategic frame Anthropic used to position the May 7 platform release as a harness race, not a model race.

How does Dreaming affect Cursor, Devin, and Replit?

Dreaming, Memory, and Multi-Agent Orchestration are platform-level capabilities Anthropic ships directly to end users. IDE wrappers like Cursor — which raised at a $50B valuation in part as a hedge against this — either need to build their own equivalent stack or risk becoming commodity front-ends. Devin's closed-loop evaluation is closest to Outcomes; Replit is least exposed.

Where can I read the source reports on the conference?

The most detailed third-party report is on every.to: "Inside Anthropic's 2026 Developer Conference" (chain-of-thought series). Anthropic's own announcements are published on the Anthropic newsroom at anthropic.com/news. We cross-checked both before writing this analysis.

How is Memory structured on Claude Managed Agents?

Memory shipped on May 7 as two markdown layers. Global Memory is a shared, persistent context document the user can edit. Personal Memory, available to subscribers, is a per-user layer that personalises agent behavior across sessions: project conventions, code style, tone preferences. Both are diffable in git.

Will Dreaming cost extra to use?

Anthropic has not yet published a per-dream price as of May 7, 2026. Dreaming runs the model over up to 100 past sessions in background, so it is not free. Expect it to be billed when it graduates from research preview, and expect the cost to be smaller than the savings the resulting memory edits produce.

Should I turn on Multi-Agent Orchestration today?

Yes for fan-out workloads — multi-draft generation, parallel code review, research synthesis. The 33% cost cut and 20-30 second wall-clock saving are reported by Anthropic and require no code change for parallelisable tasks. For sequential reasoning chains where task B depends on task A, the coordinator falls back to a single agent and you save nothing.

How does this compare to OpenAI's agent stack?

As of May 7, 2026, OpenAI has shipped Assistants and a separate Agents SDK, but no publicly-announced equivalent to Dreaming (asynchronous self-improvement via memory editing). Anthropic's bet is that platform-level capabilities (orchestration plus memory plus dreaming plus outcomes) are a harder moat to clone than any single feature.