On April 21, 2026, Bloomberg reported that a private Discord group accessed Claude Mythos Preview — the model Anthropic called "too capable to release as-is" — through a compromised contractor account at third-party AI vendor Mercor. The breach landed the same day Anthropic finalized the Project Glasswing rollout to 11 enterprise partners including Google, Microsoft, AWS, Nvidia, and JPMorgan Chase. It is Anthropic's third disclosed security incident in 26 days, and the White House has confirmed the rollout will proceed on schedule despite the unauthorized access. The group is not using Mythos for cybersecurity work, and Anthropic says no internal systems were touched.

What happened — a breach timed to day one

The scoop dropped at 09:10 UTC on April 21, 2026. Bloomberg's reporting, later corroborated by TechCrunch, CBS News, Engadget, Cybernews, Euronews, and The Decoder across the next 18 hours, described a specific chain of events: a private Discord server focused on surfacing information about unreleased AI models obtained credentials belonging to an Anthropic contractor. Those credentials were combined with publicly available data from a prior leak at Mercor, an AI startup that provides vendor services into Anthropic's ecosystem. The combination gave the group live access to Claude Mythos Preview — the locked frontier model Anthropic had announced to its Glasswing partners six days earlier on April 15.

Anthropic's statement, issued to Bloomberg within hours: "We're investigating a report claiming unauthorised access to Claude Mythos Preview through one of our third-party vendor environments. There is currently no evidence that Anthropic's systems are impacted, nor that the reported activity extended beyond the third-party vendor environment." Translation: the blast radius is scoped to the vendor environment, not Anthropic's core. Note what the statement leaves out: it does not deny that the Discord group had access. It denies only that the activity reached Anthropic itself.

The timing is the part that matters. April 21 was the day Anthropic's Project Glasswing Cyber Verification Program was scheduled to flip on for its 11 inaugural partners. In our separate analysis of the Mythos Preview reveal we wrote that the locked-frontier model was Anthropic's clearest signal yet that frontier access was splintering along safety lines. Six days later, an unauthorized Discord group had the model before 10 of the 11 authorized enterprises did.

How the breach happened — the Mercor credentials chain

The mechanism is textbook vendor-chain compromise, and it is boring in exactly the way real security incidents are boring. Mercor, the AI startup, had a prior data leak that exposed personally identifiable information and, per Bloomberg's reporting, operational metadata about its workforce of contractors. That information became publicly available. The Discord group cross-referenced it with a separately compromised credential belonging to an Anthropic contractor working through Mercor's vendor environment. Put those two things together and you have a working session into a Mythos Preview environment.

We have seen this movie before. It is the same class of failure as the Claude Code source map leak on March 31, 2026 — a 60 MB map file shipped inside a public npm package that exposed 512,000 lines of internal logic. Different surface, same underlying truth: the weakest security perimeter on a frontier AI company is rarely the frontier AI company itself. It is the third, fourth, and fifth concentric rings — contractors, vendor environments, shipped build artifacts, internal tooling that leaks through npm packages nobody thought anyone would unpack.

The specific elements in this breach:

- Compromised contractor credential — identity of the contractor has not been disclosed by Anthropic or reporting outlets. The credential was active at the time of unauthorized access.

- Mercor data leak — date of the original Mercor leak has not been published, but the leaked data was publicly available when the Discord group assembled the attack chain.

- Third-party vendor environment — the Mythos Preview access was scoped to a Mercor-operated environment, not the core Anthropic production stack. Anthropic's internal systems show no evidence of compromise.

- Scope of use — the Discord group is not using Mythos Preview for cybersecurity research, red-team testing, or offensive tooling. They are using it, per the reporting, to poke at unreleased AI models.

That last bullet is the one that cuts both ways. The best-case reading: a handful of enthusiasts got early-access bragging rights and did nothing dangerous. The worst-case reading: they are the most benign actors who could have pulled this chain, and the chain itself still exists.

Who is the Discord group?

The reporting is thin and deliberately so. Bloomberg and The Decoder describe the group as a private Discord server with members who "seek information about unreleased AI models." Engadget framed it as a community focused on frontier-model reconnaissance. None of the outlets have named members, the server, or the identities of the individuals who assembled the Mercor-plus-contractor chain. Anthropic has not publicly named the group either.

What we can say, based on what has been published: the group does not behave like a state actor (no offensive tooling applications, no espionage signatures), it does not look like a known cybercrime syndicate (no ransom demand, no data resale), and it is not running a whistleblower-style leak (no materials dumped to journalists or Archive.org). These are inferences from reported behavior, not confirmed attributions. The group behaves like an enthusiast community with better OPSEC than it should have and worse ethics than it claims.

The precedent here is important. In the 2023-2024 timeframe, a cluster of Discord servers built reputations around scraping OpenAI's unreleased model checkpoints and leaking system prompts from ChatGPT. The cat-and-mouse dynamic is not new. What is new is that this group is allegedly sitting on live session access to a model Anthropic explicitly categorized as too powerful to ship publicly.

Project Glasswing timeline — from announcement to breach in 10 days

Here is the compressed timeline, with dates verified against Anthropic's announcements and the reporting record.

- April 11, 2026 — Anthropic announces Project Glasswing, an adversarial-safety framework for frontier deployment. The program includes a Cyber Verification step for any partner receiving access to safety-critical capabilities. Our coverage: Claude Mythos Goes Dark: Locked Behind Project Glasswing.

- April 15, 2026 — Anthropic confirms Mythos Preview's existence, posts benchmarks showing it outperforms Opus 4.7 on Cybench (+17.7 points), SWE-bench Verified, and AgentHarm. Announces 60 verified partners will get guarded access, roughly 11 of which are Fortune-scale enterprises — Google, Microsoft, AWS, Nvidia, JPMorgan Chase, plus six unnamed organizations.

- April 16, 2026 — Claude Opus 4.7 ships publicly with Mythos-derived safeguards back-ported in. The split between locked frontier (Mythos) and safeguarded general model (Opus 4.7) goes live.

- April 21, 2026 — Bloomberg publishes the breach report. Same day, the Glasswing rollout begins for the 11 inaugural partners. The White House confirms the rollout proceeds on schedule.

Ten calendar days from Glasswing announcement to day-one breach. Six days from the Mythos reveal to unauthorized access. Before the last of the 11 authorized partners finished provisioning access, a Discord server already had it.

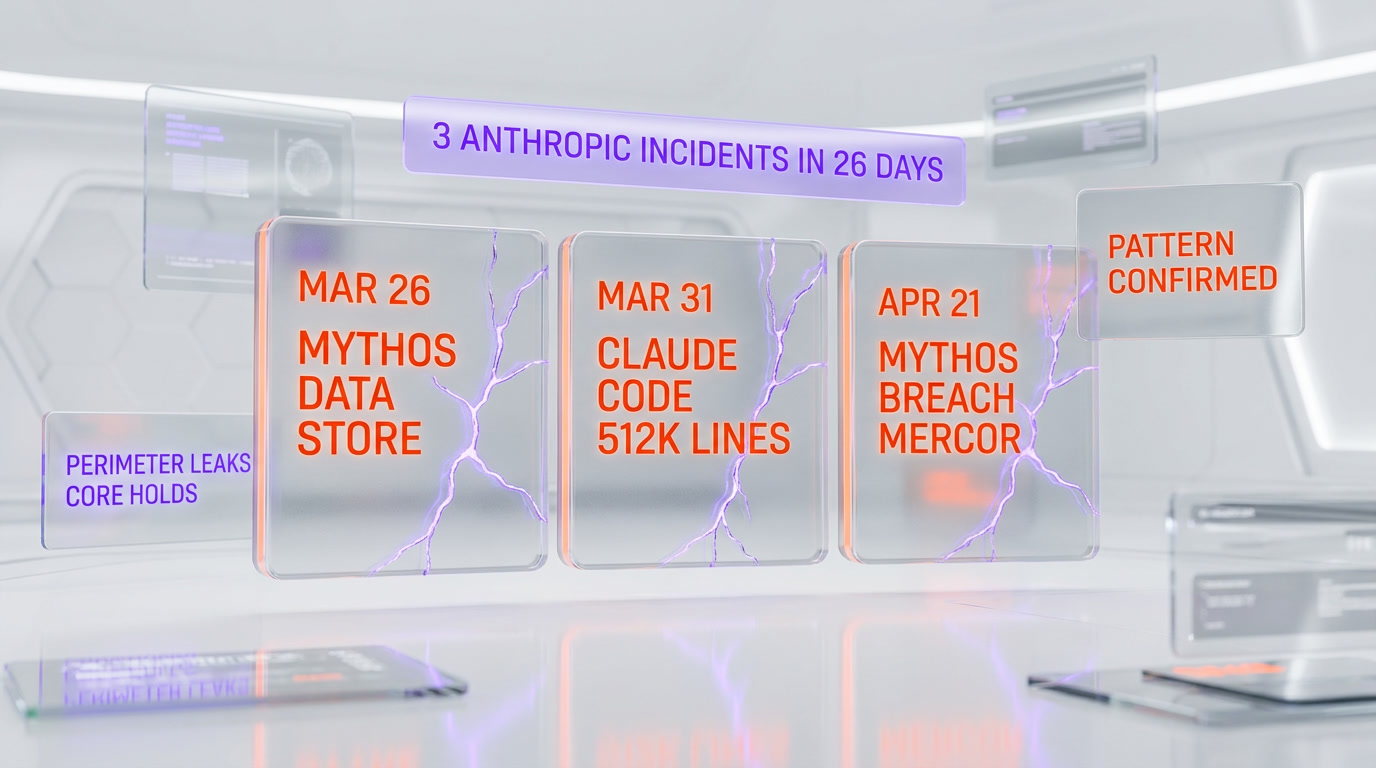

Three Anthropic incidents in 26 days — a pattern emerges

We are going to say something uncomfortable, because it is true. From March 26 through April 21, 2026 — 26 calendar days — Anthropic has disclosed or been subject to three distinct security incidents, each materially different in mechanism, each pointing to the same underlying reality: the company's security culture is not scaling at the pace of its capability releases.

- March 26, 2026 — Mythos data store leak. A misconfigured data store revealed the existence and internal naming of Mythos before Anthropic was ready to announce it. No model weights, no production access — but the branding and strategic positioning of the flagship locked frontier model was compromised. Our coverage: Claude Mythos Leaked via Misconfigured Data Store.

- March 31, 2026 — Claude Code source map leak. A 60 MB source map shipped inside a public npm package, exposing roughly 512,000 lines of internal Claude Code logic. Five days after the Mythos data store incident. Our coverage: Second Anthropic Leak in 5 Days: The AI Safety Lab That Can't Secure Its Own Code and the deep dive 512,000 Lines of Code: Everything Hidden Inside Claude Code's Leaked Source.

- April 21, 2026 — Mythos Preview breach via Mercor. Third-party vendor environment plus compromised contractor credential equals Discord access to the locked frontier. The present article.

The mechanisms differ: cloud misconfiguration, shipped build artifact, vendor-chain compromise. The pattern is consistent: every incident originated outside Anthropic's core security perimeter — in the operational edges where vendor contracts, build pipelines, and cloud configurations live. The core held each time. The perimeter leaked three times.
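The day counts quoted throughout this piece are easy to check. A minimal sketch in Python, using only the dates stated in this article; the constant and function names are ours, chosen for illustration:

```python
from datetime import date

# Dates as stated in this article.
DATA_STORE_LEAK = date(2026, 3, 26)
SOURCE_MAP_LEAK = date(2026, 3, 31)
GLASSWING_ANNOUNCED = date(2026, 4, 11)
MYTHOS_REVEALED = date(2026, 4, 15)
MERCOR_BREACH_REPORTED = date(2026, 4, 21)


def days_between(start: date, end: date) -> int:
    """Elapsed calendar days from start to end (end minus start)."""
    return (end - start).days


incident_window = days_between(DATA_STORE_LEAK, MERCOR_BREACH_REPORTED)
leak_gap = days_between(DATA_STORE_LEAK, SOURCE_MAP_LEAK)
announce_to_breach = days_between(GLASSWING_ANNOUNCED, MERCOR_BREACH_REPORTED)
reveal_to_breach = days_between(MYTHOS_REVEALED, MERCOR_BREACH_REPORTED)

print(incident_window, leak_gap, announce_to_breach, reveal_to_breach)
# prints: 26 5 10 6
```

The counts are elapsed days (end date minus start date), which is the convention the article uses when it says "26 calendar days" and "five days after."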

There is a fair counter-argument. Frontier AI companies operate at a velocity that outpaces traditional enterprise security practice, and every mature cloud-native organization discloses incidents of this general class. Microsoft, Google, and AWS each disclose vendor-chain and misconfiguration incidents quarterly. Anthropic is not abnormally insecure — it is normally insecure while marketing itself as abnormally safe. That gap is the story.

The Altman irony — fear-based marketing meets day-one breach

We owe this section a caveat up front. We are publishing a separate analysis in the same news cycle examining Sam Altman's April 2026 commentary on Anthropic's Mythos rollout — specifically his critique of what he called "fear-based marketing" and his sarcastic "$100M bomb shelter" framing. That piece is being written in parallel. For the purposes of this article, we need only note the sequence.

Altman's critique landed in the days between the April 15 Mythos reveal and the April 21 breach. His argument, stripped to its core: Anthropic is using the language of safety to justify scarcity and premium positioning. A model "too dangerous to release" becomes a model too exclusive to buy. The marketing does the work that the product positioning would struggle to do on its own.

Then, day one of the Glasswing rollout, a Discord server has the model. The sequence is uncomfortable for both sides of the argument. For Anthropic, it weakens the fear-based framing — if the model is too dangerous to release, it should not already be leaking through vendor chains. For Altman, it strengthens the critique on the marketing axis but validates the underlying concern — if frontier models leak this easily, the safety framing matters more, not less, because unauthorized holders of the model are now a live category that did not exist before Glasswing began.

Neither reading is convenient. Both are true.

Why the White House is proceeding anyway

The detail that got under-reported in the Bloomberg piece: the White House, which has been coordinating with Anthropic on Project Glasswing since at least the April 11 announcement, has confirmed the rollout will proceed on schedule. No pause. No suspension. No reassessment of the 11 inaugural partners.

Read that as a signal. It is saying: the federal government's tolerance for frontier-AI incident risk is now calibrated at a level that accommodates day-one breaches via third-party vendor chains. That is either mature acceptance of reality or a dangerously normalized baseline, depending on which analyst you ask. It is also consistent with how the White House treated the 2024-2025 cluster of cloud-provider incidents at AWS, Azure, and GCP — no pause in federal contracts, continued procurement, and a slow tightening of supply-chain requirements through the year following.

For enterprise AI procurement teams watching this, the lesson is clear. Trust-in-Anthropic is being decoupled from trust-in-Anthropic's-vendor-chain. You can rely on the core product while writing contracts that audit the perimeter independently. That is the posture the 11 Glasswing partners will now be forced to adopt, and it is the posture every Claude Opus 4.7 customer should adopt as well.

Implications for enterprise AI security procurement

If you are a CTO, CISO, or head of AI governance at a company that buys frontier-model access, the April 21 breach is not an Anthropic-specific event. It is a genre event. These are the shifts we expect to see reflected in procurement contracts over the next six months, based on how enterprise security tightened after comparable vendor-chain incidents at SolarWinds (2020), Okta (2022), and MOVEit (2023).

- Contractor-tier access audit rights. Enterprise AI contracts will increasingly require the right to audit not just the core vendor but the sub-vendor environments where operational work happens. Mercor-class third parties become named-party obligations.

- Credential rotation minimums. Shorter credential lifetimes for any personnel — contractor or full-time — who touch frontier model environments. 24-hour rotation is not unreasonable for top-tier access.

- Provenance tracking for model weights. No weights are reported to have left Anthropic in this incident, but if access to Mythos can leak through a vendor chain, so can access to any frontier model. Enterprises will start asking for provenance proofs the same way they ask for SOC 2 attestations today.

- Incident-disclosure SLAs. Bloomberg broke the story. Anthropic issued a reactive statement. Enterprise contracts will increasingly require proactive disclosure within hours of internal detection, not hours of media exposure.

- Third-party vendor whitelisting. The most conservative buyers will require frontier-AI vendors to pre-disclose their sub-vendor list and obtain approval before adding new ones — the same model already used for payment processors and identity providers.
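Several of the contract terms above can be expressed as a checkable policy. What follows is a rough sketch, not a standard: the `VendorTerms` fields, the `contract_gaps` helper, and the vendor names are hypothetical, and the 24-hour disclosure threshold is a placeholder we chose (the text above only says "within hours"). Provenance proofs are left out because they do not reduce to a simple threshold.

```python
from dataclasses import dataclass, field
from datetime import timedelta


@dataclass
class VendorTerms:
    """Hypothetical summary of one AI vendor contract (illustrative fields)."""
    credential_lifetime: timedelta        # longest-lived credential allowed
    disclosure_sla: timedelta             # internal detection to customer notice
    subvendors: set[str] = field(default_factory=set)
    subvendor_audit_rights: bool = False  # may the buyer audit sub-vendors?


def contract_gaps(
    terms: VendorTerms,
    approved_subvendors: set[str],
    max_credential_lifetime: timedelta = timedelta(hours=24),
    max_disclosure_sla: timedelta = timedelta(hours=24),  # assumed placeholder
) -> list[str]:
    """Return a readable list of terms that miss the buyer's thresholds."""
    gaps = []
    if not terms.subvendor_audit_rights:
        gaps.append("no audit rights over sub-vendor environments")
    if terms.credential_lifetime > max_credential_lifetime:
        gaps.append("credential lifetime exceeds maximum")
    if terms.disclosure_sla > max_disclosure_sla:
        gaps.append("disclosure SLA exceeds maximum")
    unapproved = sorted(terms.subvendors - approved_subvendors)
    if unapproved:
        gaps.append("unapproved sub-vendors: " + ", ".join(unapproved))
    return gaps


# Made-up example: 30-day credentials, 72-hour disclosure window.
example = VendorTerms(
    credential_lifetime=timedelta(days=30),
    disclosure_sla=timedelta(hours=72),
    subvendors={"vendor-a", "vendor-b"},
)
for gap in contract_gaps(example, approved_subvendors={"vendor-a"}):
    print(gap)
```

The point of writing the terms down this way is that a procurement team can rerun the same check every time a vendor amends its sub-vendor list or credential policy, rather than rereading the contract.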

For teams building on Claude Code and Claude today, the immediate action is more modest: audit your own Anthropic credentials, rotate any keys older than 60 days, and ensure your organization's AI-access logs ingest into the same SIEM stack you use for cloud audit trails. That last one is the thing most teams still have not done.
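The key-age audit is simple enough to script. The sketch below assumes you already keep an inventory of API keys with creation timestamps; the record layout, key IDs, owners, and event name are all made up for illustration, and nothing here calls a vendor API. Each finding is emitted as a JSON line, a format SIEM pipelines commonly accept.

```python
import json
from datetime import datetime, timedelta, timezone

MAX_KEY_AGE = timedelta(days=60)  # threshold suggested in the text above


def stale_keys(inventory, now, max_age=MAX_KEY_AGE):
    """Return the inventory entries whose key is strictly older than max_age."""
    return [entry for entry in inventory if now - entry["created_at"] > max_age]


def to_log_line(entry, now):
    """Serialize one finding as a single JSON line for log ingestion."""
    return json.dumps(
        {
            "event": "api_key_rotation_due",  # hypothetical event name
            "key_id": entry["key_id"],
            "owner": entry["owner"],
            "age_days": (now - entry["created_at"]).days,
        },
        sort_keys=True,
    )


# Made-up inventory; in practice this comes from your own key records.
now = datetime(2026, 4, 21, tzinfo=timezone.utc)
inventory = [
    {"key_id": "key-001", "owner": "ci-pipeline",
     "created_at": datetime(2026, 1, 15, tzinfo=timezone.utc)},
    {"key_id": "key-002", "owner": "analytics",
     "created_at": datetime(2026, 4, 1, tzinfo=timezone.utc)},
]
for entry in stale_keys(inventory, now):
    print(to_log_line(entry, now))
```

Run on a schedule, a check like this turns the 60-day rule from a policy statement into an alert that lands in the same place as your cloud audit trails.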

Our analysis — trust, security, and positioning

Here is the cold read. Anthropic's product positioning in April 2026 rests on three pillars: safety framing (Mythos is too dangerous to release), capability dominance (Mythos outperforms Opus 4.7 on cyber benchmarks), and enterprise trust (Glasswing is the gold-standard frontier-deployment framework). The April 21 breach does not destroy any of the three pillars. It pressure-tests each one.

Safety framing survives but is weakened. If the rollout proceeds despite the breach, the implicit statement is that the safety framing was always more about responsible capability staging than actual containment. That is a defensible position, but it is not the position Anthropic has been marketing. The marketing has been closer to "this model is so dangerous we will not let it out." The reality is closer to "this model is staged through a vendor chain that we do not fully control." Those are different sentences.

Capability dominance is unaffected by the breach. Mythos Preview is still the highest-scoring model on the benchmarks Anthropic published. A breach does not change the benchmark. In our coverage of the OpenAI revenue memo leak on April 21 — same day as the Mythos breach, which is its own coincidence worth noting — we wrote that Anthropic's capability story and its financial story were operating on decoupled timelines. The Mythos breach reinforces that read: capability is a separate axis from security operations.

Enterprise trust is the pillar under most pressure. Three incidents in 26 days is a pattern the 11 Glasswing partners will have to explain to their own boards. JPMorgan Chase does not tolerate third-party vendor incidents without compensating controls. Google does not tolerate credential compromises in its sub-vendor chain without post-incident review. The Glasswing rollout proceeding on schedule means these controls are being negotiated privately, not publicly — which is the right move for all parties, but it also means the public narrative about Anthropic's enterprise security posture is going to remain asymmetrically negative for several months.

Net: Anthropic remains the frontier-AI company with the strongest safety marketing and a real but not catastrophic gap between that marketing and its operational reality. The gap is closable. It is not closed today.

Frequently asked questions

What is the Anthropic Mythos breach?

On April 21, 2026, Bloomberg reported that a private Discord group gained unauthorized access to Claude Mythos Preview, Anthropic's locked frontier model, through a compromised Anthropic contractor credential combined with publicly leaked data from third-party vendor Mercor. Anthropic said it is investigating the report and that there is currently no evidence that its own systems were affected or that the activity extended beyond the third-party vendor environment.

How did the Discord group access Mythos Preview?

The group combined two inputs: a previously leaked Mercor dataset that had become publicly available, and a separately compromised Anthropic contractor credential. Together, these provided live session access to a Mythos Preview environment operated through the Mercor vendor chain. It is a classic third-party vendor compromise, not a direct breach of Anthropic's core infrastructure.

Who is the Discord group behind the Mythos breach?

Reporting from Bloomberg, TechCrunch, CBS News, Engadget, and The Decoder describes the group as a private Discord server focused on surfacing information about unreleased AI models. No members, server names, or individual identities have been publicly disclosed. The group is not using Mythos for cybersecurity research or offensive tooling — they appear to be an enthusiast community, not a state actor or cybercrime syndicate.

What is Mercor and why did it matter?

Mercor is an AI startup that provides vendor services into Anthropic's ecosystem. A prior data leak at Mercor exposed information about its contractor workforce. That publicly available data, combined with a separately compromised Anthropic contractor credential, was the attack chain the Discord group assembled to reach Mythos Preview. Mercor became the weakest link in Anthropic's vendor chain for this incident.

Is this the third Anthropic security incident in 26 days?

Yes. March 26, 2026: Mythos data store leak via misconfigured cloud storage. March 31, 2026: Claude Code source map leak exposing roughly 512,000 lines of internal logic. April 21, 2026: Mythos Preview breach via Mercor and a compromised contractor credential. Three incidents, three different mechanisms, 26 calendar days. Each originated outside Anthropic's core security perimeter, in its cloud-configuration, build-pipeline, and vendor edges.

Is the White House pausing the Mythos rollout?

No. The White House confirmed that the Project Glasswing rollout to 11 inaugural enterprise partners — including Google, Microsoft, AWS, Nvidia, and JPMorgan Chase — will proceed on schedule despite the breach. This is consistent with federal posture on comparable vendor-chain incidents at AWS, Azure, and GCP in 2024-2025. The rollout is proceeding with privately negotiated compensating controls rather than a public pause.

Did the Discord group use Mythos for cybersecurity attacks?

No, based on the reporting record. The group is not using Mythos Preview for offensive cybersecurity work, red-team tooling, or espionage. Reporting from Bloomberg and The Decoder describes their use as investigating unreleased AI models — enthusiast reconnaissance rather than weaponization. This is the best-case reading of the breach. The worst-case reading is that the attack chain exists regardless of who is at the end of it.

What is Project Glasswing?

Project Glasswing is Anthropic's adversarial-safety framework for frontier-AI deployment, announced April 11, 2026. It includes a Cyber Verification Program that gates access to safety-critical model capabilities like Mythos Preview. The initial rollout on April 21, 2026 covered 11 enterprise partners — Google, Microsoft, AWS, Nvidia, JPMorgan Chase, plus six unnamed organizations. Glasswing is the operational mechanism behind Anthropic's "locked frontier, safeguarded general model" strategy.

Does this breach affect Claude Opus 4.7 users?

No direct impact. Claude Opus 4.7 shipped April 16, 2026 with Mythos-derived safeguards back-ported in, but the April 21 breach was scoped to the Mythos Preview environment accessed through Mercor. Opus 4.7 customers on the standard API, Claude Pro, or Claude Code are not affected. That said, the incident is a reminder to audit your own Anthropic credentials, rotate keys older than 60 days, and ensure AI access logs flow into your SIEM alongside cloud audit trails.

How does this compare to the Claude Code source leak?

Different mechanism, similar underlying failure. The Claude Code leak on March 31, 2026 was a 60 MB source map accidentally shipped inside a public npm package — a build-pipeline artifact failure. The April 21 Mythos breach was a vendor-chain compromise via Mercor and a contractor credential. Both incidents originated in the operational edges of Anthropic's stack rather than its core systems, which is a pattern.

What is Sam Altman's 'fear-based marketing' critique?

In the days between the April 15 Mythos Preview reveal and the April 21 breach, OpenAI CEO Sam Altman criticized what he framed as Anthropic's "fear-based marketing" around Mythos — including a sarcastic "$100M bomb shelter" reference. His argument: Anthropic uses safety framing to justify scarcity and premium enterprise positioning. See our analysis of Altman's "fear-based marketing" takedown of Mythos published in the same news cycle. The breach complicates both sides of the argument.

What should enterprise AI buyers do about the Mythos breach?

Five things. First, audit your Anthropic credentials and rotate any keys older than 60 days. Second, route AI access logs into your SIEM alongside cloud audit trails. Third, require your vendors to disclose their sub-vendor lists in procurement contracts. Fourth, push for incident-disclosure SLAs measured in hours of internal detection, not hours of media exposure. Fifth, treat this as a genre event — these vendor-chain compromises happen to every frontier-AI company and will keep happening until procurement standards tighten.

The bottom line

Anthropic built the most locked frontier-AI deployment framework in the industry, named it Project Glasswing, staged Mythos Preview behind it, and ran a 10-day communications arc from announcement to rollout. Day one of the rollout, a Discord group had the model. Nobody at Anthropic did anything egregious. The contractor credential compromise, the Mercor data leak, the third-party vendor chain — these are the same failure modes every cloud-native enterprise deals with quarterly. What is new is that the marketing promised a different reality than the operational one. The gap between "too dangerous to release" and "already accessed through a vendor chain" is where the story lives. Until Anthropic closes that gap — through tighter sub-vendor whitelisting, shorter credential lifetimes, and proactive incident disclosure — the frontier-safety pillar of its enterprise positioning will keep running into friction it did not need to run into. The capability is real. The safety marketing is real. The operational gap is real. All three can be true, and all three are.