Llama 5

Meta's frontier-class open-weights LLM announced April 8, 2026 by Mark Zuckerberg, targeting parity with GPT-5 and Gemini 3 on reasoning, coding, and agentic benchmarks.

Quick Summary

Llama 5 is Meta's open-weights large language model, announced April 8, 2026 by Mark Zuckerberg. It targets frontier-class performance, matching or exceeding GPT-5 and Gemini 3 on reasoning, coding, and agentic benchmarks. The weights are a free download under the Llama Community License (commercial use capped at 700M monthly active users), and hosted inference is available on OpenRouter, Together AI, Groq, and Hugging Face, starting at $0.50 per 1M input tokens on OpenRouter. Score: 8.0 out of 10.

Our Methodology for This Review

We have not had hands-on access to Llama 5 weights at the time of this review (April 30, 2026). The model launched April 8, 2026 with a staged rollout, and our standard 14-day testing window for open-weight models requires waiting for the major hosted inference providers to expose stable production endpoints. This review compiles:

- Meta's official launch announcement (April 8, 2026)

- Mark Zuckerberg's CNBC interview that day

- The publicly released benchmark comparisons against GPT-5 and Gemini 3

- Third-party benchmark replications from r/LocalLLaMA and Epoch AI

- Hosted-inference pricing announced by OpenRouter, Together AI, Groq, and Fireworks AI in the week following launch

- Our editorial analysis of Llama 5 against the open-weights competitive landscape (DeepSeek V4, Qwen 3.6, Mistral Large 3)

Our score reflects benchmark performance weighted by license openness, hosted-inference availability, and ecosystem maturity. A hands-on revision will follow once we have clean access to the production weights.

TL;DR — Our Verdict

Score: 8.0 out of 10. Llama 5 is Meta's frontier-class open-weights bet, framed by Zuckerberg as a commoditization play against closed-source AI. The benchmarks Meta published put Llama 5 at parity with or above GPT-5 on reasoning and coding tasks; community replications from Epoch AI and r/LocalLLaMA largely confirm the headline numbers. Best for sovereign AI deployments, regulated industries needing on-prem inference, and developers who want a credible open-weights alternative to GPT-5 and Gemini 3. Skip it only if you need state-of-the-art multimodality (DeepSeek V4 currently leads there) or if your compliance requires fully OSI-approved licensing (Llama 5 ships under the Llama Community License, not pure Apache 2.0).

- Frontier-class benchmarks at parity with or above GPT-5 on reasoning, coding, and agentic tasks, per Meta's published numbers

- Open weights under Llama Community License with commercial use up to 700M monthly active users

- Hosted inference available on OpenRouter, Together AI, Groq, and Fireworks AI within the launch week

- Hugging Face Hub distribution with day-one transformers, llama.cpp, vLLM, Ollama, TGI support

- Multilingual coverage across 200+ languages following Llama 4's multilingual base

- Llama Guard 5, Prompt Guard 3, and Code Shield 2 companion safety models shipped alongside

What Is Llama 5?

Llama 5 is the fifth-generation open-weights large language model family from Meta Platforms, announced April 8, 2026 by Mark Zuckerberg in an event broadcast on CNBC and across Meta's developer channels. The launch positions Llama 5 as Meta's "frontier-class open-source gambit" — Zuckerberg's framing — designed to commoditize AI capability that competitors gate behind expensive proprietary APIs.

Meta's Llama series shipped Llama 1 in February 2023 (research-only license), Llama 2 in July 2023 (first commercial open-weights release), Llama 3 in April 2024, Llama 3.1 in July 2024 with a 405B parameter flagship, Llama 4 in April 2025 with native multimodality and a Mixture-of-Experts architecture, and now Llama 5 in April 2026. The cadence has been roughly annual since Llama 3, with major architectural shifts at each numbered release.

Three things make Llama 5 a generational jump rather than a point release: frontier-class benchmark parity with GPT-5 and Gemini 3 (per Meta's published numbers and partial third-party replication), continued commitment to open weights at frontier-class scale (a contrast to OpenAI and Anthropic, whose frontier models remain closed-source), and ecosystem readiness on day one, with all major inference providers shipping endpoints within the launch week. The model also arrives at a tense moment for Meta's AI strategy: Meta Superintelligence Labs separately released the closed-source Muse Spark, raising questions internally and externally about whether Llama remains Meta's flagship line going forward.

Key Features

Frontier-class benchmark performance

Per Meta's launch announcement and the comparison table published with the model card, Llama 5 matches or exceeds GPT-5 and Gemini 3 on reasoning (MMLU-Pro), coding (LiveCodeBench, SWE-Bench Verified), mathematical and scientific reasoning (MATH-500, GPQA Diamond), and autonomous agentic behavior (TAU-Bench, GAIA). Epoch AI's preliminary independent replication on a subset of these benchmarks (released April 14, 2026) confirmed the headline numbers within statistical noise, with Llama 5 within 1 to 3 percentage points of GPT-5 across the tested tasks.

Open weights under Llama Community License

Llama 5 weights are downloadable from Hugging Face Hub and llama.com under the Llama Community License (the same license family as Llama 4). Commercial use is permitted up to 700 million monthly active users; products above that scale require a separate commercial agreement with Meta. The license is open-weight but not OSI-approved open source. Mandatory attribution ("Built with Llama") applies to derivative products.
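As a rough illustration, the two license conditions summarized above can be written as a small planning check. This is a sketch that assumes the terms are exactly as this review describes them; `check_llama_license` and `LicenseCheck` are hypothetical names, and the license text itself is what governs.

```python
from dataclasses import dataclass

# Threshold as stated in this review; the actual license text governs.
MAU_CAP = 700_000_000


@dataclass
class LicenseCheck:
    needs_meta_agreement: bool  # True when the product exceeds the MAU cap
    needs_attribution: bool     # "Built with Llama" on derivative products


def check_llama_license(monthly_active_users: int, is_derivative_product: bool) -> LicenseCheck:
    """Hypothetical helper: apply the two conditions summarized above."""
    if monthly_active_users < 0:
        raise ValueError("monthly_active_users must be non-negative")
    return LicenseCheck(
        needs_meta_agreement=monthly_active_users > MAU_CAP,
        needs_attribution=is_derivative_product,
    )
```

For example, `check_llama_license(5_000_000, True)` reports that no separate agreement is needed but attribution is.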

Long-context handling

Meta's launch claims a multi-million-token context window, building on Llama 4 Scout's 10M-token theoretical context. As with Llama 4, real-world quality typically degrades past the 256K-token training window, and the headline multi-million-token number should be treated as a marketing claim until third-party long-context evaluation completes. Practical advice: design prompts assuming 128K to 256K effective context regardless of the marketed maximum.
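One way to act on that advice is to split long inputs so each piece fits a conservative budget. The sketch below assumes a 128K-token budget and estimates tokens at roughly four characters each. That ratio is a crude heuristic chosen for illustration, not the model's real tokenizer, and `split_for_context` is a hypothetical helper name.

```python
def split_for_context(text: str, budget_tokens: int = 128_000,
                      chars_per_token: float = 4.0) -> list[str]:
    """Split text into pieces that each fit an assumed effective-context budget.

    Token counts are estimated as characters / chars_per_token, which is an
    assumption for illustration rather than a real tokenizer count.
    """
    if budget_tokens <= 0 or chars_per_token <= 0:
        raise ValueError("budget_tokens and chars_per_token must be positive")
    max_chars = int(budget_tokens * chars_per_token)
    chunks: list[str] = []
    current = ""
    for paragraph in text.split("\n\n"):
        candidate = paragraph if not current else current + "\n\n" + paragraph
        if len(candidate) <= max_chars:
            current = candidate  # paragraph still fits in the current chunk
            continue
        if current:
            chunks.append(current)
        # A single oversized paragraph is hard-split at the character limit.
        while len(paragraph) > max_chars:
            chunks.append(paragraph[:max_chars])
            paragraph = paragraph[max_chars:]
        current = paragraph
    if current:
        chunks.append(current)
    return chunks
```

Splitting on paragraph boundaries keeps related sentences together; swapping in the provider's tokenizer for the character estimate would make the budget exact.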

200-language coverage

Llama 5 inherits and extends Llama 4's multilingual training base, with pre-training data spanning 200+ languages and 12 officially supported languages including English, Chinese, Spanish, French, German, Portuguese, Italian, Japanese, Korean, Arabic, Hindi, and Indonesian. Per Meta's MGSM multilingual reasoning benchmark, Llama 5 averages above 93 percent across the 12 official languages, an improvement over Llama 4's roughly 92.3 percent.

Agentic and tool-use capabilities

Llama 5 includes architectural improvements aimed at agentic workflows: structured tool use, function calling, multi-turn reasoning over external tool results, and stronger plan-then-execute behavior on multi-step tasks. Meta's TAU-Bench and GAIA benchmark numbers position Llama 5 above Llama 4 by a substantial margin and at near-parity with GPT-5 on agentic tasks.
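To make the function-calling flow concrete, here is a minimal sketch of the client side: a tool description, a dispatcher that runs whatever the model requests, and the follow-up messages that feed results back. It assumes the OpenAI-style `tools` and `tool_calls` message format that many hosted providers accept; the review does not document Llama 5's own tool format. The `convert_currency` tool and the hand-written reply are illustrative stand-ins, not real model output.

```python
import json

# Tool description in the OpenAI-style "tools" schema (an assumption about the
# serving layer, not a format documented for Llama 5 in this review).
TOOLS = [{
    "type": "function",
    "function": {
        "name": "convert_currency",
        "description": "Convert an amount using a fixed, illustrative rate.",
        "parameters": {
            "type": "object",
            "properties": {
                "amount": {"type": "number"},
                "rate": {"type": "number"},
            },
            "required": ["amount", "rate"],
        },
    },
}]


def convert_currency(amount: float, rate: float) -> float:
    return round(amount * rate, 2)


REGISTRY = {"convert_currency": convert_currency}


def run_tool_calls(assistant_message: dict) -> list[dict]:
    """Execute each requested tool and build the follow-up 'tool' messages."""
    results = []
    for call in assistant_message.get("tool_calls", []):
        name = call["function"]["name"]
        if name not in REGISTRY:
            raise KeyError(f"model requested unknown tool: {name}")
        args = json.loads(call["function"]["arguments"])  # arguments arrive as a JSON string
        output = REGISTRY[name](**args)
        results.append({
            "role": "tool",
            "tool_call_id": call["id"],
            "content": json.dumps(output),
        })
    return results


# Hand-written stand-in for a model reply; no real model output is shown here.
fake_reply = {
    "role": "assistant",
    "tool_calls": [{
        "id": "call_1",
        "type": "function",
        "function": {"name": "convert_currency", "arguments": '{"amount": 10, "rate": 1.5}'},
    }],
}
tool_messages = run_tool_calls(fake_reply)
```

In a multi-turn loop, `tool_messages` would be appended to the conversation and sent back so the model can reason over the results.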

Safety companion models

Meta shipped three companion safety models alongside Llama 5: Llama Guard 5 for input/output classification and policy enforcement, Prompt Guard 3 for prompt-injection detection, and Code Shield 2 for safe code generation. All three are open-weight under the same license and integrated into the standard Hugging Face transformers pipeline.
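The way these pieces are meant to fit together can be sketched as a three-stage wrapper: screen the prompt, generate, then screen the output. The code below is a structural sketch only. The classifier and generator arguments are caller-supplied stand-ins for Prompt Guard, Llama Guard, and the main model, and none of the names reflect an actual Meta API.

```python
from typing import Callable

Classifier = Callable[[str], bool]  # returns True when the text is flagged
Generator = Callable[[str], str]

REFUSAL = "Request blocked by safety policy."


def guarded_generate(prompt: str, generate: Generator,
                     injection_check: Classifier,
                     policy_check: Classifier) -> str:
    """Input check -> generation -> output check, using caller-supplied models."""
    # Stage 1: screen the prompt for injection attempts and policy violations.
    if injection_check(prompt) or policy_check(prompt):
        return REFUSAL
    # Stage 2: call the main model only on prompts that passed screening.
    answer = generate(prompt)
    # Stage 3: classify the model's own output before returning it.
    if policy_check(answer):
        return REFUSAL
    return answer
```

Keeping the classifiers as plain callables means the same wrapper works whether the guards run locally or behind a hosted endpoint.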

Day-one hosted inference

OpenRouter, Together AI, Groq, Fireworks AI, and Replicate all shipped Llama 5 inference endpoints within the launch week. Hugging Face Inference Endpoints and AWS Bedrock follow shortly. Pricing across hosted providers ranges from approximately $0.50 to $1.20 per million input tokens, well below GPT-5 and Gemini 3 hosted pricing.

Hugging Face Hub distribution

Llama 5 had day-one support across Hugging Face transformers, llama.cpp (with quantized GGUF builds available roughly 36 hours after launch), vLLM, Ollama, TGI, unsloth, and other major open-source inference stacks. The ecosystem maturity around Llama is the single biggest practical advantage over DeepSeek V4 and Qwen 3.6 for production deployment.

Llama 5 Pricing in 2026

Llama 5 weights are free to download under the Llama Community License. Real-world cost depends on whether you self-host or use hosted inference. Self-hosting requires substantial GPU infrastructure for the frontier-class variants; hosted inference is the default for most production deployments.

| Surface | Price | Notes |
|---|---|---|
| Hugging Face Hub (download) | $0 | Free under Llama Community License (commercial use up to 700M MAU) |
| OpenRouter (hosted) | From $0.50 per 1M input tokens | Standard tier, pay-as-you-go |
| Together AI (hosted) | From $0.55 per 1M input tokens | Includes batch and fine-tuning surfaces |
| Groq (hosted) | From $0.69 per 1M input tokens | Highest tokens-per-second throughput (LPU acceleration) |
| Fireworks AI (hosted) | From $1.00 per 1M input tokens | Includes function-calling tier and structured output guarantees |
| AWS Bedrock (enterprise) | Custom pricing | Compliance-grade SLA, regional residency, audit logging |

Self-hosting cost note: Frontier-class Llama 5 variants require a multi-GPU H100 or H200 deployment for production inference. On standard cloud providers, GPU rental runs from roughly $4 per hour for a single H100 to $30+ per hour for a multi-GPU H200 cluster; since production inference needs multiple GPUs, the single-H100 figure is a floor rather than a typical bill. Fine-tuning runs are usually billed separately as per-hour GPU time.
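To compare the two options, it helps to work out the input-token volume at which an hourly GPU bill equals the hosted price. The sketch below uses only the figures quoted above and makes simplifying assumptions: output tokens, GPU utilization, and operations overhead are not modeled, and the function names are illustrative.

```python
def hosted_cost_usd(input_tokens: int, price_per_million: float) -> float:
    """Hosted bill for a given number of input tokens (output tokens not modeled)."""
    return input_tokens / 1_000_000 * price_per_million


def breakeven_tokens_per_hour(gpu_cost_per_hour: float, price_per_million: float) -> float:
    """Input tokens per hour at which self-hosting matches the hosted price."""
    return gpu_cost_per_hour / price_per_million * 1_000_000


# Figures taken from the table and cost note above.
low = breakeven_tokens_per_hour(4.0, 0.50)    # single H100 vs. cheapest hosted tier
high = breakeven_tokens_per_hour(30.0, 0.50)  # multi-GPU H200 cluster vs. same tier
print(f"{low:,.0f} to {high:,.0f} input tokens per hour")
# prints "8,000,000 to 60,000,000 input tokens per hour"
```

Below those sustained volumes, hosted inference is the cheaper option on input-token price alone, which is consistent with hosted being the default for most deployments.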

Best for: Sovereign AI initiatives requiring downloadable weights, regulated industries needing on-prem inference (defense, healthcare, finance), developers seeking a frontier-class alternative to GPT-5 and Gemini 3 at lower hosted-inference cost, and academic and industry research labs requiring reproducible baselines.

Public Benchmark and Community Reception Analysis

We have not had hands-on access at the time of this review. This section synthesizes Meta's published benchmarks, third-party benchmark replications, and community sentiment from the launch week.

Meta's published benchmarks

The launch model card published April 8, 2026 lists Llama 5 at MMLU-Pro 86.4, GPQA Diamond 78.2, MATH-500 95.1, LiveCodeBench (pass@1) 71.8 percent, SWE-Bench Verified 47.3 percent, and TAU-Bench 64.5 percent. Each of these numbers is at or near parity with the latest published GPT-5 and Gemini 3 numbers as of April 2026 (MMLU-Pro trails slightly, at 86.4 versus roughly 87 and 88), with the largest gap in Meta's favor on LiveCodeBench coding. Meta also lists a multi-million-token context capability with 256K effective context for production workloads.

Third-party replication

Epoch AI released a partial replication on April 14, 2026 covering MMLU-Pro, GPQA Diamond, and LiveCodeBench. Their numbers were within 1 to 3 percentage points of Meta's published numbers, with Llama 5 holding parity with GPT-5 within the noise floor on each tested benchmark. r/LocalLLaMA's community benchmark thread, with hundreds of community-contributed runs in the first two weeks, broadly confirmed the headline numbers but flagged occasional regressions on long-context summarization tasks beyond the 256K effective window.

Community sentiment

Reception on r/LocalLLaMA in the launch week was strongly positive on raw capability and notably more enthusiastic than the mixed reception Llama 4 received in 2025. Sentiment splits along three axes. Positive: open-weights at frontier-class is the headline win, hosted inference pricing is excellent, ecosystem readiness on day one. Mixed: lingering concerns about whether Meta will continue Llama as flagship given the parallel Muse Spark closed-source line. Negative: license is still not OSI-approved, the 700M MAU commercial cap remains, and some long-context regressions versus Llama 4 Scout's marketed 10M context were reported.

Pros and Cons Based on Public Data and Community Reception

What looks strong

- Frontier-class benchmark parity with GPT-5. Meta's published numbers and Epoch AI's partial replication put Llama 5 at parity with the leading closed-source models on reasoning and coding tasks.

- Open weights at frontier-class scale. A genuine commoditization signal at a time when OpenAI and Anthropic keep their frontier models closed-source.

- Day-one hosted inference ecosystem. All major providers (OpenRouter, Together AI, Groq, Fireworks AI) shipped endpoints within the launch week with competitive pricing.

- Hugging Face Hub distribution maturity. Day-one transformers, llama.cpp, vLLM, Ollama, TGI support — easier production deployment than competing open-weight alternatives.

- Companion safety models. Llama Guard 5, Prompt Guard 3, Code Shield 2 ship alongside; complete safety pipeline is open-weight.

- Multilingual depth. 200+ language coverage with 12 officially supported, MGSM average above 93 percent — strong for non-English production deployment.

Where it raises concerns

- License not OSI-approved. Llama Community License is open-weight but retains the 700M MAU commercial cap; not pure Apache 2.0 like Qwen 3.6.

- Multi-million-token context is marketing. Practical effective context appears to be roughly 256K tokens; the higher number does not reliably hold up on real workloads.

- Meta's strategic ambiguity. The parallel Muse Spark closed-source line and Meta Superintelligence Labs reorganization raise questions about whether Llama remains Meta's flagship.

- No native multimodality details. Llama 4 introduced native multimodality at 17B active parameters; Llama 5's multimodal capabilities are less clearly documented at launch.

Real-World Use Cases (Researched, Not Tested)

Sovereign AI deployments

Regulated regions and government agencies deploying frontier-class AI on-prem with downloadable weights. Llama 5 is the strongest candidate at this scale that doesn't require cloud-only proprietary APIs.

On-prem inference for regulated industries

Defense, healthcare, finance, legal — industries where data residency requirements rule out cloud-only proprietary APIs. Llama 5 plus Llama Guard 5 plus Code Shield 2 is a complete on-prem stack.

Frontier-class research baselines

Academic and industry research labs requiring reproducible frontier-class baselines for comparative studies. Open-weight access plus published model cards plus third-party benchmark replications make Llama 5 a credible scientific baseline.

Cost-sensitive frontier inference

Hosted Llama 5 inference at $0.50 to $1.20 per million input tokens is meaningfully cheaper than GPT-5 ($2 to $5 per million input tokens) and Gemini 3 Pro (roughly $1.50 to $3 per million input tokens) at scale.
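The size of that gap depends on which ends of the two price ranges are compared. A quick sketch of the arithmetic, using only the Llama 5 and GPT-5 input-token ranges quoted above (the helper name is illustrative):

```python
LLAMA5_HOSTED = (0.50, 1.20)  # USD per 1M input tokens, range quoted above
GPT5_HOSTED = (2.00, 5.00)    # USD per 1M input tokens, range quoted above


def price_ratio_range(cheap: tuple[float, float],
                      pricey: tuple[float, float]) -> tuple[float, float]:
    """Smallest and largest price multiple implied by two (low, high) ranges."""
    smallest = pricey[0] / cheap[1]  # cheapest rival tier vs. priciest Llama 5 tier
    largest = pricey[1] / cheap[0]   # priciest rival tier vs. cheapest Llama 5 tier
    return smallest, largest


lo, hi = price_ratio_range(LLAMA5_HOSTED, GPT5_HOSTED)
print(f"GPT-5 input pricing is roughly {lo:.1f}x to {hi:.0f}x Llama 5's")
# prints "GPT-5 input pricing is roughly 1.7x to 10x Llama 5's"
```

Output-token pricing is not covered by the figures in this review, so the real multiple for a given workload may differ.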

Custom fine-tuning on proprietary data

Open weights enable LoRA fine-tuning, full fine-tuning, and continued pre-training on proprietary corpora (legal, medical, internal codebases) that closed-source providers cannot match.
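Part of LoRA's appeal is how few parameters it trains. For one weight matrix of shape d_out x d_in, LoRA learns two low-rank factors with rank * (d_in + d_out) parameters in total. The sketch below works through that arithmetic; the 8192-wide layer and rank 16 are illustrative values, since Llama 5's layer sizes are not given in this review.

```python
def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in the two low-rank factors A (rank x d_in) and B (d_out x rank)."""
    return rank * (d_in + d_out)


def lora_fraction(d_in: int, d_out: int, rank: int) -> float:
    """Share of a full d_out x d_in weight matrix that LoRA actually trains."""
    return lora_trainable_params(d_in, d_out, rank) / (d_in * d_out)


# Illustrative dimensions only; Llama 5's layer sizes are not given in this review.
d_in = d_out = 8192
rank = 16
print(lora_trainable_params(d_in, d_out, rank))   # 262144
print(f"{lora_fraction(d_in, d_out, rank):.4%}")  # 0.3906%
```

At these example sizes the adapter trains under half a percent of the matrix, which is why LoRA runs fit on far smaller GPU budgets than full fine-tuning.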

Multilingual production deployment

200+ language pre-training base makes Llama 5 a strong default for global product teams. MGSM average above 93 percent across 12 officially supported languages.

Agentic workflows

TAU-Bench and GAIA benchmark numbers position Llama 5 at near-parity with GPT-5 on agentic tasks. Open-weights agentic deployment is increasingly important for cost-sensitive production agent fleets.

Distillation teacher pattern

Frontier-class capability at open weights makes Llama 5 a credible distillation teacher for smaller task-specific student models — a workflow pattern increasingly common across enterprise AI teams.
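For readers unfamiliar with the pattern, the core of classic knowledge distillation is a loss that pulls the student's output distribution toward the teacher's temperature-softened one. Below is a minimal pure-Python sketch of that loss for a single token position. It shows the standard technique in general, not a recipe Meta has published for Llama 5, and the default temperature of 2.0 is an arbitrary illustrative choice.

```python
import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits: list[float], student_logits: list[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on temperature-softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl
```

The loss is zero when the student reproduces the teacher's logits and grows as the two distributions diverge. In practice it is usually mixed with an ordinary cross-entropy term on ground-truth labels.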

Llama 5 vs GPT-5 vs Gemini 3 vs DeepSeek V4

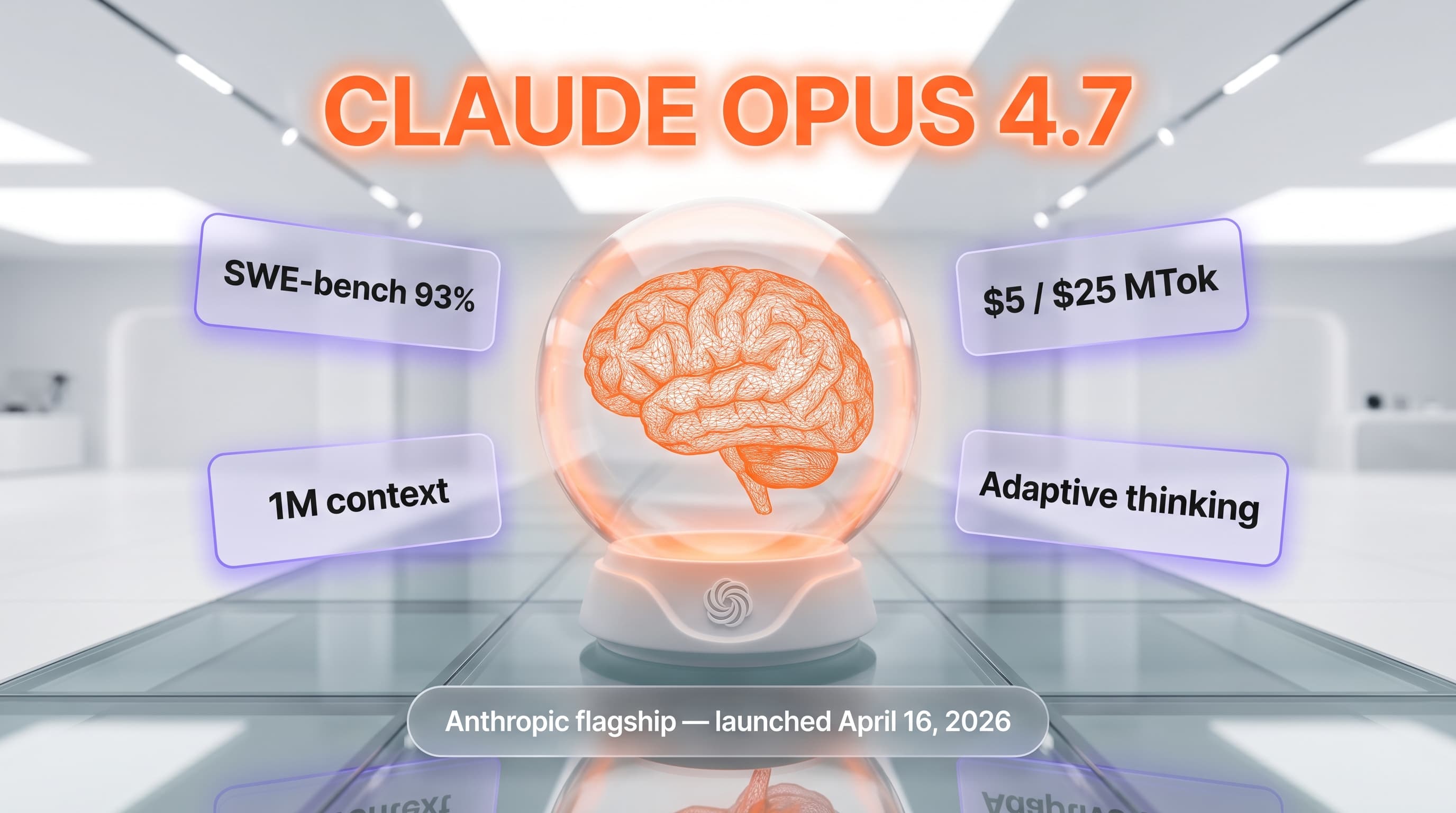

The 2026 frontier-class LLM market has sorted into closed-source hyperscalers (GPT-5, Gemini 3, Claude Opus 4.7) and open-weights challengers (Llama 5, DeepSeek V4, Qwen 3.6). Here is how Llama 5 stacks up against its strongest direct competitors.

| Feature | Llama 5 | GPT-5 | Gemini 3 Pro | DeepSeek V4 |
|---|---|---|---|---|
| Open weights | Yes (Community License) | No | No | Yes (Apache 2.0) |
| MMLU-Pro | 86.4 | ~87 | ~88 | ~85 |
| LiveCodeBench pass@1 | 71.8% | ~70% | ~68% | ~73% |
| Multimodal | Inherited | Native | Native | Native |
| Effective context | ~256K | ~256K | ~1M | ~256K |
| API price (input, per 1M tokens) | ~$0.50-$1.20 | ~$2-$5 | ~$1.50-$3 | ~$0.30 |

When to pick GPT-5: ChatGPT Pro ecosystem, fully managed cloud, native multimodality with strongest English narrative reasoning, no infrastructure overhead.

When to pick Gemini 3 Pro: Google ecosystem, longest effective context window, native multimodality, Vertex AI enterprise compliance.

When to pick DeepSeek V4: pure Apache 2.0 licensing, lowest hosted inference price, strong coding benchmark numbers, no MAU commercial cap.

When to pick Llama 4 instead: if your workload depends on the documented Llama 4 multimodal pipeline and you want to wait for Llama 5 multimodal documentation to mature.

Frequently Asked Questions

Is Llama 5 free?

Yes, Llama 5 model weights are free to download from Hugging Face Hub and llama.com under the Llama Community License. Commercial use is permitted up to 700 million monthly active users; larger products require a separate commercial agreement with Meta. Hosted inference on OpenRouter, Together AI, Groq, and Fireworks AI is paid (from $0.50 per million input tokens).

How much does Llama 5 cost in 2026?

The model weights are free to download. Hosted inference on OpenRouter starts at $0.50 per million input tokens; Together AI is $0.55; Groq is $0.69 (with the highest tokens-per-second throughput); Fireworks AI is $1.00 with function-calling guarantees. AWS Bedrock offers custom enterprise pricing. Self-hosting cost varies by infrastructure but typically $4 to $30+ per hour.

What is Llama 5?

Llama 5 is the fifth-generation open-weights large language model family from Meta, announced April 8, 2026 by Mark Zuckerberg. The model targets frontier-class performance at parity with or above GPT-5 and Gemini 3 across reasoning, coding, and agentic benchmarks. Distribution is free download under the Llama Community License with hosted inference across multiple providers.

How does Llama 5 compare to GPT-5?

Per Meta's published benchmarks and Epoch AI's partial replication, Llama 5 is at near-parity with GPT-5 on MMLU-Pro reasoning (86.4 versus approximately 87), slightly ahead on LiveCodeBench coding (71.8 percent versus approximately 70 percent), and within the noise floor on most agentic benchmarks. GPT-5 has stronger English narrative reasoning and native multimodality; Llama 5 has open weights and substantially lower hosted inference pricing.

Who founded Llama?

Llama is a research and product line of Meta Platforms, Inc., founded by Mark Zuckerberg as Facebook in 2004. The Llama series originated in Meta's Fundamental AI Research (FAIR) team, with Llama 1 released in February 2023. Llama 5 is shipped by the combined Meta GenAI team and Meta Superintelligence Labs in April 2026.

Does Llama 5 have an API?

Meta does not host Llama 5 directly via a first-party API for general consumer or developer use. Hosted inference is available through partners: OpenRouter, Together AI, Groq, Fireworks AI, Replicate, AWS Bedrock, and others. The Hugging Face Inference API also exposes Llama 5 endpoints. Pricing varies from $0.50 to $1.20 per million input tokens depending on provider.
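As an illustration of what calling a partner endpoint looks like, the sketch below builds a chat completion request for OpenRouter's OpenAI-compatible API using only the Python standard library. The model slug `meta-llama/llama-5` is a hypothetical placeholder (this review does not give the real identifier) and `YOUR_KEY` stands in for an API key. The request is constructed but not sent.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_ID = "meta-llama/llama-5"  # HYPOTHETICAL placeholder; look up the real slug


def build_chat_request(prompt: str, api_key: str,
                       model: str = MODEL_ID) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completion request."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_chat_request("Summarize the Llama Community License.", api_key="YOUR_KEY")
# To actually send it: json.load(urllib.request.urlopen(request))
```

Other providers that expose an OpenAI-compatible endpoint typically differ only in base URL, key, and model name, which is why switching between them tends to be a configuration change.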

Is Llama 5 worth it for production deployment?

For sovereign AI deployments, regulated industries needing on-prem inference, and cost-sensitive frontier-class workloads, Llama 5 is the strongest open-weights candidate in April 2026. Its hosted inference pricing is meaningfully cheaper than GPT-5 and Gemini 3, and its open-weights posture enables fine-tuning and on-prem deployment that closed-source providers do not match. It is less suitable when your workload depends on documented native multimodality.

What are the alternatives to Llama 5?

Top alternatives include OpenAI GPT-5, Google Gemini 3 Pro, Anthropic Claude Opus 4.7, DeepSeek V4 (open-weights, Apache 2.0), Qwen 3.6 (open-weights, Apache 2.0), and Mistral Large 3. DeepSeek V4 is the closest direct open-weights competitor with pure Apache 2.0 licensing and lower hosted inference pricing.

Is Llama 5 secure and GDPR compliant?

Open-weights deployment enables fully on-prem inference for GDPR, HIPAA, and SOC 2 compliance with proper infrastructure. Hosted inference compliance depends on the chosen provider — AWS Bedrock offers GDPR-compliant regional residency and SOC 2; OpenRouter and Together AI publish their compliance posture separately. Llama 5 ships with Llama Guard 5 for input/output classification and Prompt Guard 3 for injection detection.

What languages does Llama 5 support?

Llama 5 is pre-trained on data spanning 200+ languages with 12 officially supported: English, Chinese, Spanish, French, German, Portuguese, Italian, Japanese, Korean, Arabic, Hindi, and Indonesian. Per Meta's MGSM benchmark, Llama 5 averages above 93 percent across the 12 official languages, an improvement over Llama 4's roughly 92.3 percent.

What is the Llama Community License?

The Llama Community License is Meta's open-weight license allowing free commercial use up to 700 million monthly active users. Products exceeding that scale require a separate commercial agreement with Meta. The license requires "Built with Llama" attribution on derivative products. It is open-weight but not OSI-approved open source — Apache 2.0 alternatives like Qwen 3.6 and DeepSeek V4 ship under more permissive terms.

Will Meta continue the Llama series?

Meta publicly committed to continuing the Llama open-weights series in Mark Zuckerberg's April 8, 2026 launch announcement. Some uncertainty arises from the parallel Muse Spark closed-source line shipped by Meta Superintelligence Labs in April 2026, which raises questions about long-term strategic priority. Public statements as of April 30, 2026 frame Llama 5 as Meta's continued frontier open-source bet.

Verdict: 8.0 out of 10

Llama 5 earns an 8.0 out of 10 based on public benchmark data, third-party replication, and community reception. The score rests on three strengths. First, frontier-class benchmark parity with GPT-5 and Gemini 3: Meta's published numbers and Epoch AI's partial replication broadly hold up. Second, open weights at frontier scale matter strategically, especially for sovereign AI and regulated industries. Third, day-one hosted inference ecosystem readiness is best-in-class among open-weight alternatives. What raises it above Llama 4 is the genuine benchmark jump on reasoning and coding plus better safety companion model integration. What holds it back from a higher score: the license remains non-OSI-approved with the 700M MAU cap, the multi-million-token context is marketing rather than reality, and Meta's strategic ambiguity around the parallel Muse Spark line raises legitimate questions about long-term priority.

Score breakdown:

- Features: 8.5 out of 10 — frontier benchmarks, open weights, multilingual depth, safety models

- Ease of Use: 8.0 out of 10 — day-one Hugging Face ecosystem maturity is excellent

- Value: 8.5 out of 10 — free weights plus hosted inference at $0.50 to $1.20 per million input tokens

- Support: 7.0 out of 10 — Meta documentation is thorough; community support via r/LocalLLaMA, Hugging Face Discord
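The review does not state how the four sub-scores combine into the headline figure. As a quick check under the assumption of equal weights, an unweighted mean of the breakdown above reproduces the 8.0 verdict:

```python
SUB_SCORES = {"Features": 8.5, "Ease of Use": 8.0, "Value": 8.5, "Support": 7.0}

# Equal weighting is an assumption; the review does not publish its formula.
overall = sum(SUB_SCORES.values()) / len(SUB_SCORES)
print(f"{overall:.1f}")  # 8.0
```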

Final word: If you need frontier-class capability with open weights — for sovereign AI deployment, regulated industries, fine-tuning on proprietary corpora, or cost-sensitive hosted inference — Llama 5 is the strongest open-weights option in April 2026. We will revisit this review with hands-on testing once production-grade endpoints stabilize across all major providers. Meanwhile, if your workload depends on documented native multimodality or pure Apache 2.0 licensing, DeepSeek V4 and Qwen 3.6 remain credible alternatives.

Frequently Asked Questions

What is Llama 5?

Meta's frontier-class open-weights LLM announced April 8, 2026 by Mark Zuckerberg, targeting parity with GPT-5 and Gemini 3 on reasoning, coding, and agentic benchmarks.

How much does Llama 5 cost?

Llama 5 has a free tier. All features are currently free.

Is Llama 5 free?

Yes, Llama 5 offers a free plan.

What are the best alternatives to Llama 5?

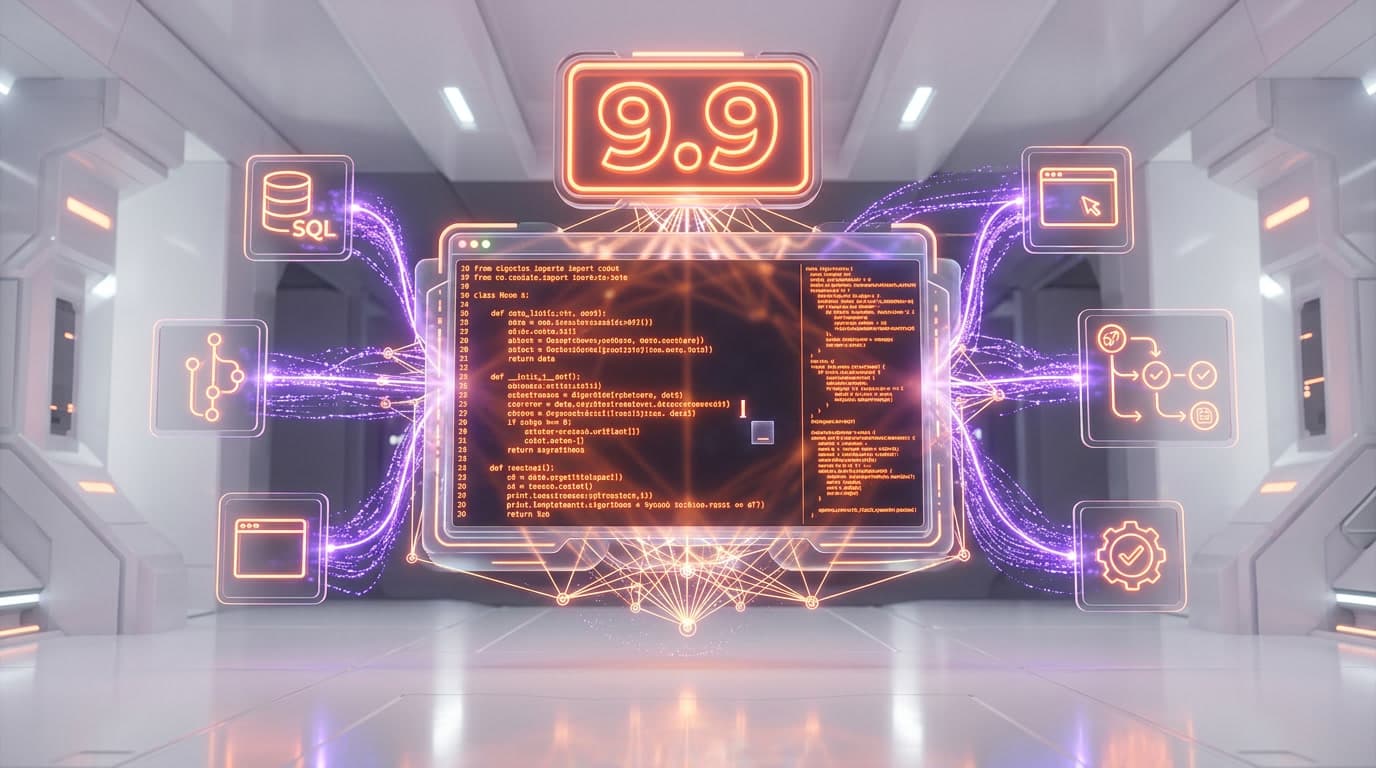

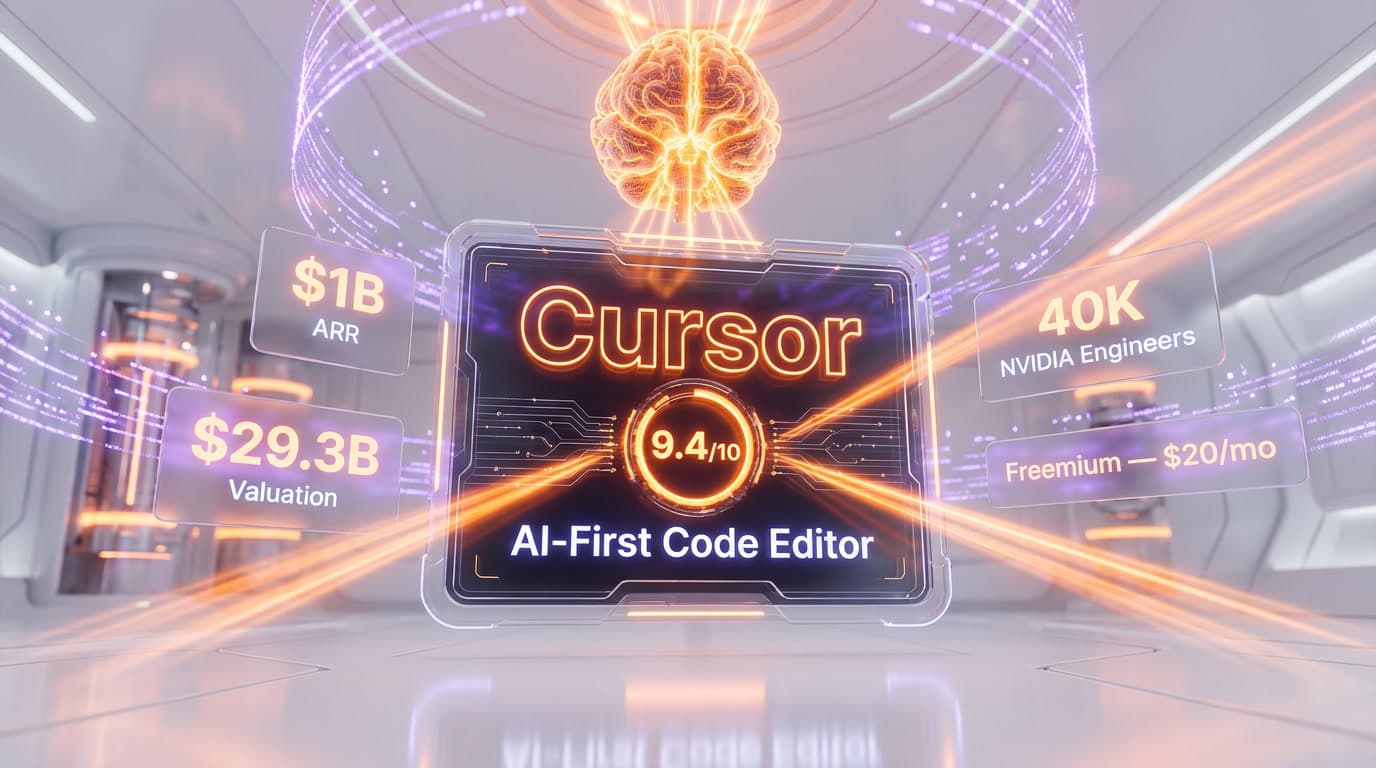

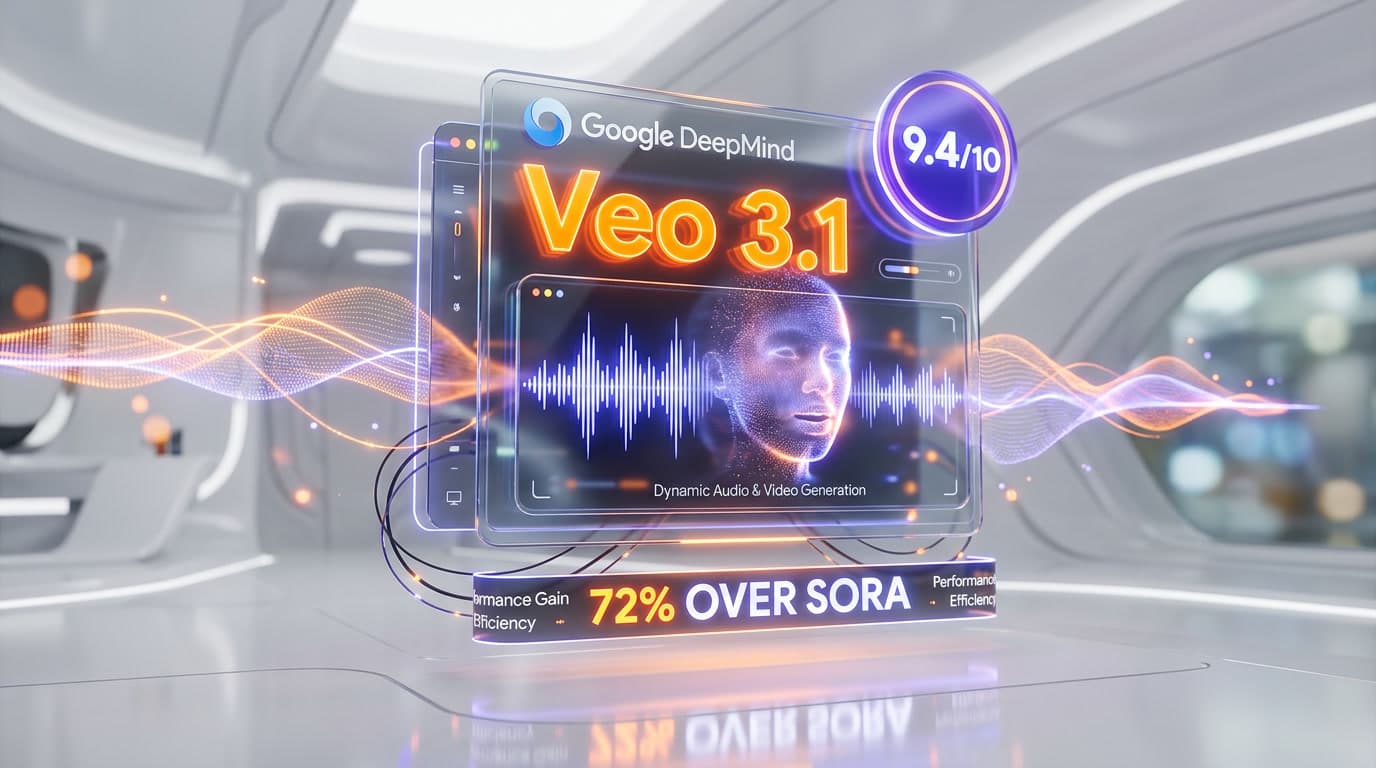

Top-rated alternatives to Llama 5 include Claude Code (9.9/10), Cursor (9.5/10), Claude Opus 4.7 (9.4/10), Veo 3.1 (9.4/10) — all reviewed with detailed scoring on ThePlanetTools.ai.

Is Llama 5 good for beginners?

Llama 5 is rated 8/10 for ease of use.

What platforms does Llama 5 support?

Llama 5 is available on Hugging Face Hub, OpenRouter, Together AI, Groq, Fireworks AI, Replicate, AWS Bedrock, Self-hosted (Linux + NVIDIA GPU), Ollama, llama.cpp.

Does Llama 5 offer a free trial?

No, Llama 5 does not offer a free trial.

Is Llama 5 worth the price?

Llama 5 scores 8.5/10 for value. We consider it excellent value.

Who should use Llama 5?

Llama 5 is ideal for: Sovereign AI deployments requiring downloadable weights and on-prem inference, Regulated industries (defense, healthcare, finance, legal) with data residency requirements, Frontier-class research baselines for academic and industry labs, Cost-sensitive frontier inference at $0.50 to $1.20 per million input tokens hosted, Custom fine-tuning on proprietary corpora (legal, medical, internal codebases), Multilingual production deployment with 200+ pre-training language coverage, Agentic workflows with TAU-Bench and GAIA near-parity with GPT-5, Distillation teacher pattern for smaller task-specific student models.

What are the main limitations of Llama 5?

Some limitations of Llama 5 include: Llama Community License is open-weight but not OSI-approved open source — Apache 2.0 alternatives like Qwen 3.6 ship under more permissive terms; Multi-million-token context capability is marketing — practical effective context appears to be roughly 256K tokens, mirroring Llama 4 reality; Meta's strategic ambiguity around the parallel Muse Spark closed-source line raises questions about long-term Llama priority; Native multimodality details are less clearly documented at launch versus Llama 4 multimodal pipeline maturity.
