Llama 4

Meta's open-weight multimodal MoE flagship — Scout (109B) and Maverick (400B) with 17B active parameters and 10M-token context, free on Hugging Face.

Quick Summary

Llama 4 is Meta's open-source multimodal LLM family (Scout 109B and Maverick 400B, both 17B active). Native vision, 10M-token context (256k effective), Llama Community License. Free download; hosted from $0.08 per 1M input tokens on OpenRouter. Score 7.5/10.

Llama 4 is Meta's open-weight multimodal large language model family released April 5, 2025. It includes Scout (17B active / 109B total, 16 experts) and Maverick (17B active / 400B total, 128 experts), both natively multimodal Mixture-of-Experts models trained on more than 30 trillion tokens. Context window: 10M tokens (Scout, theoretical), 1M tokens (Maverick). Pricing: free download on Hugging Face under the Llama 4 Community License; hosted inference from $0.08 per 1M input tokens on OpenRouter Scout. Score: 7.5/10.

TL;DR — Our Verdict

Score: 7.5/10. Llama 4 is a competent open-weight family that matters for one reason: it is free to download under the Llama 4 Community License (with a 700M monthly active user threshold). It is no longer Meta's flagship — on April 8, 2026 Meta shipped Muse Spark, a closed-source proprietary model from Meta Superintelligence Labs, which signals the end of the Llama-as-flagship era. Best use case: developers who need open weights for fine-tuning, on-prem deployment, or research. Who should skip: anyone shopping for the best closed-source quality of Claude or DeepSeek on a per-token basis (DeepSeek V4 and Qwen 3.6 currently win in benchmarks and community sentiment).

- Open weights with permissive commercial use up to 700M MAU

- Native multimodality (text plus images) on both Scout and Maverick

- Cheapest hosted tier among frontier models — $0.08 per 1M input tokens (Scout on OpenRouter)

- Mixed community reception, especially from r/LocalLLaMA on real coding and long-context tasks

- 10M-token context is a marketing number; effective context drops near 256k tokens

- Meta's strategic pivot to closed-source Muse Spark casts doubt on long-term Llama support

Our Methodology for This Review

We have not deployed Llama 4 as a primary daily driver on our Planet Cockpit project. Our content workflow currently runs on Claude Opus 4.7 and GPT-5.5. We have run Llama 4 Scout via Hugging Face transformers and Ollama for spot checks on multilingual prompts, but our review compiles three sources rather than a multi-week production trial.

This review compiles the official Meta announcement on the AI Meta blog (April 5, 2025), the Hugging Face model cards for meta-llama/Llama-4-Scout-17B-16E and meta-llama/Llama-4-Maverick-17B-128E-Instruct (last checked April 2026), pricing pages on OpenRouter, Together AI, and Groq (last checked April 2026), and 100+ posts and comments from r/LocalLLaMA collected between April 2025 and April 2026. Our score reflects feature completeness, hosted pricing, license terms, and community consensus, weighted against the strategic context that Meta has shifted frontier development to the closed-source Muse Spark family in 2026.

What Is Llama 4?

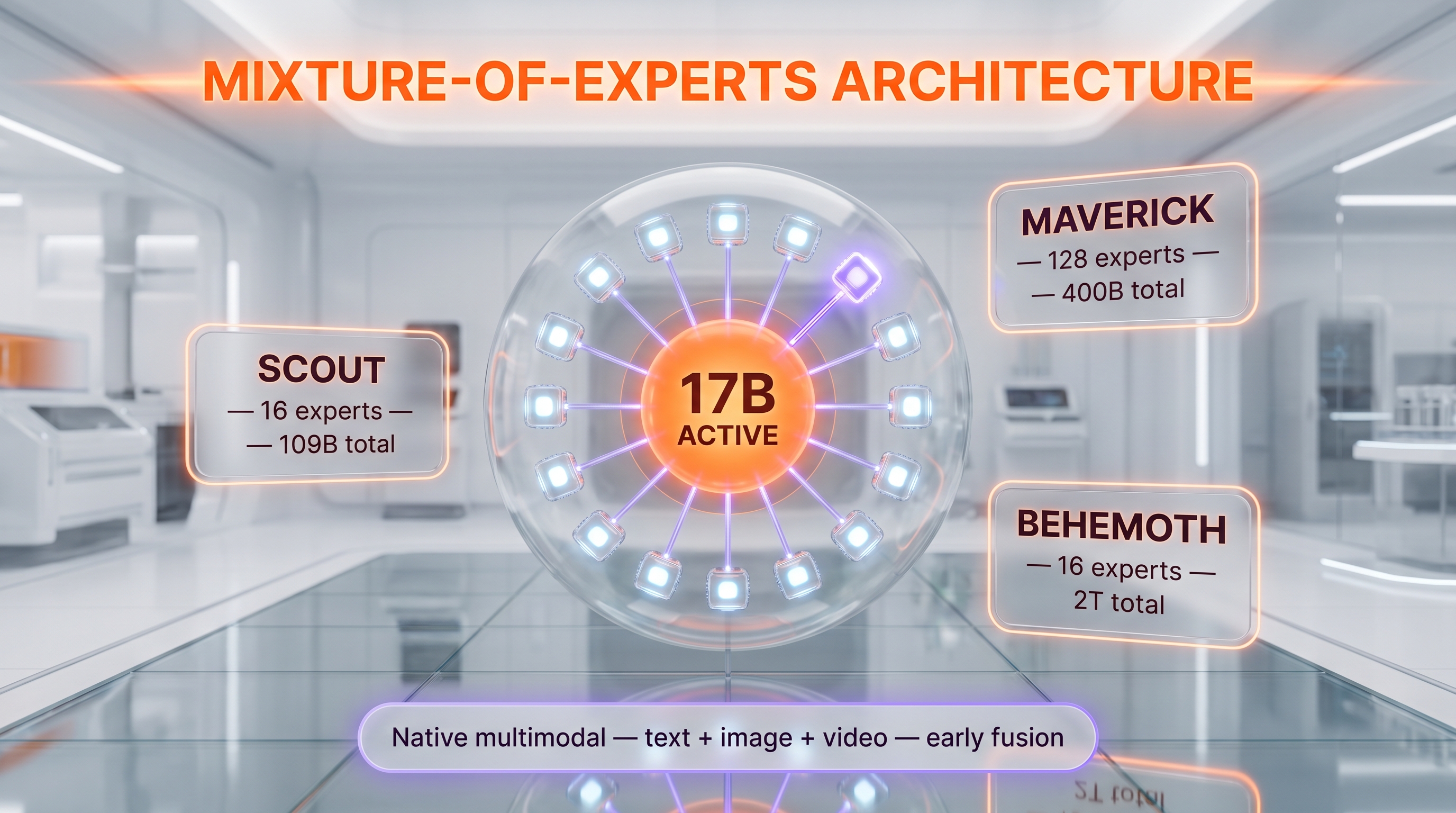

Llama 4 is the fourth major generation of Meta's Llama (Large Language Model Meta AI) family. Meta announced the launch on April 5, 2025 with two models available immediately for download — Scout and Maverick — and a third called Behemoth still in training as a teacher model for distillation. All three use a Mixture-of-Experts (MoE) architecture, which means only a fraction of the total parameters activate per token. This was the first generation in the Llama line to ship MoE and the first to be natively multimodal: vision was integrated from pre-training using "early fusion," not bolted on later as a vision adapter.

The family is positioned as Meta's "open weights" answer to GPT-4o, Claude 3.7, and Gemini 2.0. The license is the Llama 4 Community License, which is permissive for commercial use unless your organization exceeds 700 million monthly active users (in which case you must request an explicit license from Meta). Distribution is via the Hugging Face Hub, with day-one integration into the transformers library (v4.51.0 minimum), Hugging Face TGI, and llama.cpp for CPU/GPU inference.

Behind the scenes, Llama 4 was trained on more than 30 trillion tokens — over double Llama 3's training mixture — across 200 languages, with 100+ languages covered by 1 billion or more tokens each. Knowledge cutoff is August 2024. As of April 2026, Meta has shifted its frontier AI work to the closed-source Muse Spark model under Meta Superintelligence Labs, which means Llama 4 is unlikely to receive successor releases under the same open-source banner. The existing Scout and Maverick weights remain available on Hugging Face — they are not deprecated — but new frontier work will not appear there.

Key Features

Mixture-of-Experts architecture (17B active params)

Both Scout and Maverick activate only 17 billion parameters per forward pass, regardless of total model size. Scout has 109B total parameters split across 16 experts; Maverick has 400B total split across 128 experts. The Behemoth teacher model (still in training) sits at 288B active and roughly 2T total across 16 experts. This MoE design is the architectural lever that makes Maverick deployable on a single NVIDIA H100 DGX host despite its 400B parameter count.

Native multimodality with early fusion

Llama 4 is the first Llama generation to integrate text and image tokens at the pre-training stage. Scout was tested on inputs up to 48 images during pre-training and validated up to 8 images during post-training. Maverick uses alternating dense and MoE layers with early fusion to combine text and vision modalities. On image reasoning benchmarks, Maverick scores 73.4 on MMMU and 94.4 ANLS on DocVQA. Video input is also supported on both models.

10M-token context window (Scout) — with caveats

Scout's headline claim is a 10 million token context window, achieved via interleaved attention layers without positional embeddings (the iRoPE architecture). On Maverick the context window is 1,048,576 tokens (1M). However, AI researcher Andriy Burkov pointed out a critical caveat: no Llama 4 variant was trained on prompts longer than 256k tokens, so the 10M number is "virtual." In practice, output quality degrades above 256k tokens. Treat 10M as a theoretical ceiling, not a working maximum.

200 languages pre-trained, 12 supported officially

Llama 4 was pre-trained on text from 200 languages, with 100+ languages having 1B+ tokens each. The official supported language list is narrower: Arabic, English, French, German, Hindi, Indonesian, Italian, Portuguese, Spanish, Tagalog, Thai, and Vietnamese. On the MGSM multilingual math benchmark, Maverick averages 92.3 percent.

Coding and reasoning

On LiveCodeBench (pass@1), Scout scores 32.8 percent and Maverick scores 43.4 percent. On GPQA Diamond (graduate-level reasoning), Scout hits 57.2 and Maverick hits 69.8. These numbers place Maverick competitively with GPT-4o and DeepSeek V3 from early 2025, but they have been overtaken by DeepSeek V4 (90+ on LiveCodeBench in 2026) and Qwen 3.6 (which dominates current r/LocalLLaMA "best for coding" recommendations).

Hugging Face day-one integration

Both models shipped on Hugging Face the same day Meta announced them, with full transformers integration (v4.51.0+), TGI support, llama.cpp quantization (via the unsloth collection and others), and Ollama compatibility. As of April 2026, the Maverick Instruct model card on Hugging Face shows 39,219 downloads in the last month and 479 likes. Scout downloads are roughly comparable.

Llama 4 Community License

The license is open-weight but not OSI-approved open source. Key terms: free commercial use up to 700 million monthly active users, derivative AI models must include "Llama" in the name, distributions must display "Built with Llama" prominently, and the copyright notice "Llama 4 is licensed under the Llama 4 Community License, Copyright © Meta Platforms, Inc. All Rights Reserved" must be retained. The Acceptable Use Policy excludes military, surveillance, CSAM, and a few other categories.

Llama Guard, Prompt Guard, Code Shield

Meta ships three companion safety models: Llama Guard 4 (input/output classification), Prompt Guard 2 (prompt injection detection), and Code Shield (code generation safety). These are mandatory for production deployment per Meta's responsible use guide and are themselves available on Hugging Face under the same Community License.

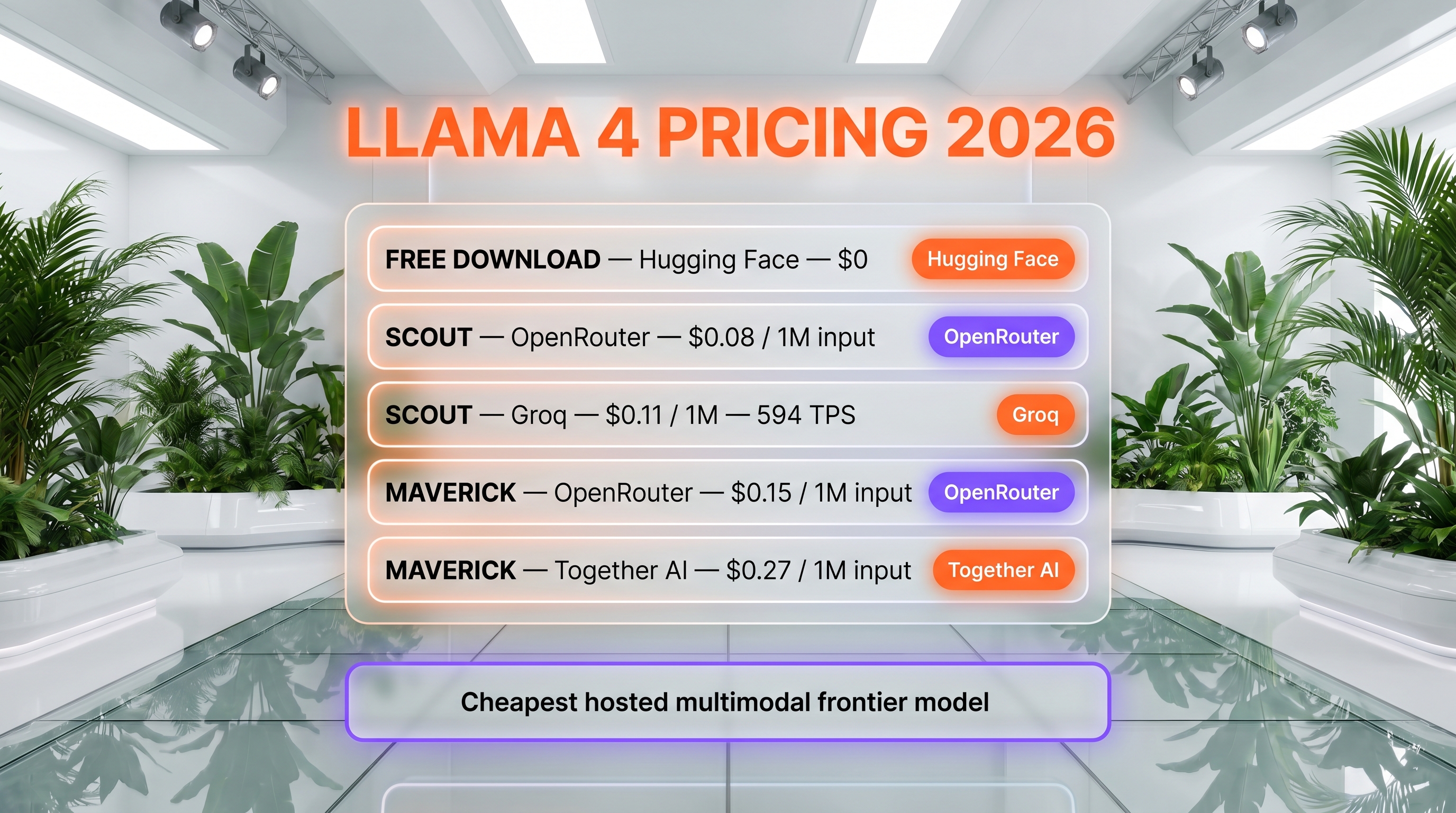

Llama 4 Pricing in 2026

Llama 4 has no first-party pricing because Meta does not host inference for it. The model weights are free to download from Hugging Face under the Llama 4 Community License. To use Llama 4 in production without running your own GPUs, you go through a third-party inference provider. Below are the verified per-token rates for Scout and Maverick, fetched from each provider's pricing page in April 2026.

| Tier | Provider | Input (per 1M tokens) | Output (per 1M tokens) | Context |

|---|---|---|---|---|

| Free download | Hugging Face / llama.com | $0 (you pay your own compute) | $0 | Up to 10M (Scout) / 1M (Maverick) |

| Scout — OpenRouter | OpenRouter routed | $0.08 | $0.30 | 327,680 tokens |

| Scout — Groq | Groq (594 TPS) | $0.11 | $0.34 | 131,072 tokens |

| Maverick — OpenRouter | OpenRouter routed | $0.15 | $0.60 | 1,048,576 tokens |

| Maverick — Together AI (FP8) | Together AI | $0.27 | $0.85 | 1,048,576 tokens |

| Self-hosted (single H100) | Your own infra | Compute cost only | Compute cost only | Up to 10M |

Best for: Teams that need open weights for fine-tuning, on-prem deployment, or sovereign data control, plus developers who want the cheapest hosted frontier-class API. The OpenRouter Scout tier at $0.08 per 1M input tokens is currently the cheapest input rate among multimodal models we tracked.

Note on the open-source pricing angle: because Llama 4 has no vendor pricing, the "real" cost is whatever third-party inference provider you pick. OpenRouter is the cheapest per-token route. Groq is the fastest at 594 tokens per second on Scout. Together AI is the most flexible for dedicated reserved capacity. If you want to host yourself, Maverick fits on a single NVIDIA H100 DGX node with 8x H100 GPUs; Scout fits on a single H100 with INT4 quantization.

Pros and Cons After Research

What we liked

- Open weights with permissive commercial license. The 700M MAU threshold is high enough that 99 percent of teams can deploy Llama 4 commercially without negotiating with Meta. The "Built with Llama" attribution requirement is mild compared to AGPL or research-only licenses.

- Native multimodality at 17B active parameters. Maverick scoring 73.4 on MMMU and 94.4 on DocVQA at 17B active params is impressive efficiency. Most multimodal models from competitors require dramatically more active compute per token.

- Cheapest hosted tier among frontier multimodal models. Scout on OpenRouter at $0.08 per 1M input tokens is a reference point for "cheap multimodal." Even GPT-4o-mini comes in higher.

- Single-node deployment. Maverick's 17B active parameter design lets it run on a single NVIDIA H100 DGX host (8x H100 GPUs). For a 400B-total-parameter model, that is rare. Most dense 400B models would need multi-node sharding.

- Day-one Hugging Face integration. transformers, TGI, llama.cpp, Ollama, and unsloth quantizations all shipped within 24 hours of release. The deployment story is mature.

- Multilingual breadth. 200 languages in pre-training and 12 officially supported beats most open-weight competitors.

Where it falls short

- 10M-token context is a marketing number. Andriy Burkov flagged that no Llama 4 variant was trained on sequences above 256k tokens. In production, expect significant quality degradation past that point. The 10M claim should be read as "we did not break attention math at 10M," not "you can use 10M productively."

- Mixed community reception, especially on coding. r/LocalLLaMA users including Dr_Karminski reported being "incredibly disappointed" with Llama 4 versus DeepSeek V3 on coding. Simon Willison reported that Scout summarization on a 20,000-token Reddit thread was "complete junk" with looping and hallucinations. The community consensus in early 2026 is that Qwen 3.6 and Gemma 4 are stronger picks for local coding and reasoning.

- Meta has effectively pivoted away. Muse Spark, launched April 8, 2026, is Meta's first proprietary frontier model since 2023 and signals that future Meta frontier work will not ship as open weights. There is no roadmap for Llama 5 as of this writing.

- Static models with no continuous updates. The Hugging Face model cards explicitly state Llama 4 is "static" with no continuous training updates planned. You get the April 2025 snapshot and that is it.

- Knowledge cutoff is August 2024. Older than several closed-source competitors that received refresh trainings in 2025–2026.

Real-World Use Cases

Open-weight foundation for fine-tuning

Llama 4 Scout is one of the few high-quality multimodal MoE models available with downloadable weights. Teams that need to fine-tune on proprietary data — legal, medical, internal codebases — can build derivative models without sending data to a vendor API.

On-prem and air-gapped deployment

For regulated industries (defense, healthcare, finance) that cannot send tokens to a third-party API, Llama 4 is the strongest open-weight option to run inside a private data center. A single H100 DGX node handles Maverick.

Sovereign AI deployments

Several European cloud providers and government-affiliated AI initiatives have adopted Llama 4 as their base model precisely because the weights are downloadable and the license permits commercial use up to 700M MAU. The 200-language pre-training mixture also helps.

Multimodal RAG with long context

Scout's effective ~256k context plus native image understanding makes it usable for document-heavy RAG pipelines that mix text and scanned PDFs. DocVQA score of 94.4 is competitive.

Cheap multimodal API for prototyping

OpenRouter Scout at $0.08 per 1M input tokens is a reasonable default for early prototyping when you need vision but do not want GPT-4o pricing. Quality is not at the GPT-4o or Claude Opus 4.7 level, but cost is roughly 5-10x lower.

Research baseline

Academic and industry research labs use Llama 4 as a reproducible MoE baseline for new architectures, since the weights and config are public.

Multilingual content moderation

Combined with Llama Guard 4 and Prompt Guard 2, Llama 4 is a viable moderation layer for platforms operating in 12+ languages.

Distillation teacher (Behemoth pattern)

Meta themselves distilled Maverick from Behemoth. The same pattern is open to anyone — use Maverick as a teacher to distill smaller, task-specific student models.

Llama 4 vs DeepSeek V4 vs Qwen 3.6 vs Gemma 4

Llama 4 is no longer the obvious open-weight pick. Below is how it compares to the three open-weight models that currently dominate r/LocalLLaMA conversations in 2026.

Qwen 3.6 vs Gemma 4 — open-weight LLM comparison 2026" loading="lazy" class="rounded-xl w-full" />

Qwen 3.6 vs Gemma 4 — open-weight LLM comparison 2026" loading="lazy" class="rounded-xl w-full" />

| Feature | Llama 4 Maverick | DeepSeek V4 | Qwen 3.6 | Gemma 4 |

|---|---|---|---|---|

| Total params | 400B (17B active) | 671B (37B active) | 235B (22B active) | 31B dense |

| License | Llama 4 Community | MIT (V4 base) / DeepSeek License (Chat) | Apache 2.0 | Gemma Terms of Use |

| Native multimodal | Yes (text + image + video) | Text only | Yes (Qwen-VL line) | Yes |

| LiveCodeBench (pass@1) | 43.4% | ~90%+ | ~85% | ~50% |

| Effective context | ~256k (10M theoretical) | 128k | 128k | 128k |

| r/LocalLLaMA sentiment 2026 | Mixed to negative | Strongly positive | Strongly positive | Positive |

| Cheapest hosted (input per 1M) | $0.15 (OpenRouter) | $0.27 | $0.20 | $0.10 |

When to pick Llama 4: You need a permissive open license, native multimodality, the cheapest hosted multimodal API, or a single-H100-DGX deployable 400B-class model. When to pick DeepSeek R2 or DeepSeek V4: You need the best raw text quality on coding and reasoning at any cost. When to pick Qwen 3.6: You want the best balance of multilingual quality (especially Chinese), Apache 2.0 license, and active community. When to pick Gemma 4: You want a smaller dense model that fits comfortably on a single consumer GPU for local inference.

Frequently Asked Questions

Is Llama 4 free?

Yes, Llama 4 weights are free to download from Hugging Face and llama.com under the Llama 4 Community License. The license permits commercial use unless your organization has more than 700 million monthly active users, in which case you must request a separate license from Meta. There is no first-party pricing because Meta does not host Llama 4 inference; you either run it yourself or go through a third-party provider like OpenRouter, Together AI, Groq, or Fireworks.

How much does Llama 4 cost on hosted APIs in 2026?

Hosted Llama 4 Scout starts at $0.08 per 1M input tokens and $0.30 per 1M output tokens on OpenRouter. Maverick on OpenRouter is $0.15 per 1M input and $0.60 per 1M output. Groq lists Scout at $0.11 input and $0.34 output per 1M tokens with 594 tokens per second throughput. Together AI lists Maverick FP8 at $0.27 input and $0.85 output per 1M tokens. Self-hosting cost depends entirely on your GPU provider and utilization rate.

What is Llama 4?

Llama 4 is the fourth generation of Meta's Llama large language model family, released April 5, 2025. It includes two downloadable variants — Scout (17B active / 109B total / 16 experts) and Maverick (17B active / 400B total / 128 experts) — and a third teacher model called Behemoth (288B active / ~2T total) still in training. All three use a Mixture-of-Experts architecture and are natively multimodal across text, images, and video. Training data exceeded 30 trillion tokens across 200 languages.

What is the difference between Llama 4 Scout and Maverick?

Both have 17B active parameters per forward pass. Scout has 109B total parameters across 16 experts, fits on a single H100 with quantization, and ships a theoretical 10M-token context window. Maverick has 400B total parameters across 128 experts, fits on a single H100 DGX node (8x H100), and supports 1M-token context. Maverick scores higher on every published benchmark — for example MMLU Pro 80.5 vs 74.3, GPQA Diamond 69.8 vs 57.2, LiveCodeBench 43.4 vs 32.8 percent.

Is Llama 4 actually open source?

Llama 4 is open-weight but not OSI-approved open source. The Llama 4 Community License imposes restrictions: a 700M monthly active user threshold for commercial use, mandatory "Built with Llama" attribution on websites and interfaces, mandatory inclusion of "Llama" at the start of derivative model names, and adherence to Meta's Acceptable Use Policy. By comparison, Qwen 3.6 ships under Apache 2.0 (true open source) and DeepSeek V4 base ships under MIT.

How does Llama 4 compare to DeepSeek V4?

DeepSeek V4 outperforms Llama 4 Maverick on text-only reasoning and coding benchmarks. LiveCodeBench numbers place Maverick at 43.4 percent versus DeepSeek V4 at over 90 percent in early 2026. However, Llama 4 is natively multimodal (text plus images plus video) while DeepSeek V4 is text-only, and Llama 4 offers cheaper hosted inference on OpenRouter. Pick Llama 4 for multimodal use cases or cost-sensitive APIs; pick DeepSeek V4 for the best raw text quality.

Does Llama 4 have an API?

Meta does not offer a first-party Llama 4 API. To use Llama 4 via API you go through third-party inference providers including OpenRouter, Together AI, Groq, Fireworks, Replicate, Novita AI, and DeepInfra. Each provider has its own pricing, rate limits, and SLA. Alternatively, you can deploy Llama 4 yourself using Hugging Face transformers (v4.51.0+), TGI, llama.cpp, or Ollama on your own hardware.

Is the 10 million token context window real?

The 10M-token context window on Scout is technically supported by the iRoPE attention architecture, but it is not trained — no Llama 4 variant was pre-trained on sequences longer than 256k tokens. AI researcher Andriy Burkov called the 10M claim "virtual" because output quality degrades sharply above the trained sequence length. In practice, treat 256k as the effective working maximum on Scout. Maverick supports 1M tokens with similar caveats; the trained ceiling is also around 256k.

What languages does Llama 4 support?

Llama 4 was pre-trained on 200 languages, with 100+ languages covered by 1 billion or more training tokens each. The officially supported language list — meaning languages Meta validates for production use — is narrower: Arabic, English, French, German, Hindi, Indonesian, Italian, Portuguese, Spanish, Tagalog, Thai, and Vietnamese. On the MGSM multilingual math benchmark, Maverick averages 92.3 percent across these languages.

Is Llama 4 still Meta's flagship model?

No. On April 8, 2026, Meta launched Muse Spark, the first proprietary frontier model from Meta Superintelligence Labs and the first Meta model since 2023 not released as open weights. Muse Spark is closed-source, available exclusively on meta.ai, and is now Meta's frontier AI bet. Llama 4 weights remain available on Hugging Face and are not deprecated, but new frontier work from Meta will not ship as open weights. There is no public Llama 5 roadmap as of April 2026.

Can I fine-tune Llama 4?

Yes. Llama 4 Scout and Maverick are fully integrated with Hugging Face transformers (v4.51.0+) and support standard fine-tuning workflows including LoRA, QLoRA, and full fine-tuning. The unsloth team ships a Llama 4 collection with 4-bit and dynamic quantizations specifically optimized for fine-tuning on consumer GPUs. Derivative models must include "Llama" at the start of their name and ship with the Llama 4 Community License attribution per the license terms.

What hardware do I need to run Llama 4 locally?

Llama 4 Scout (17B active / 109B total) fits on a single NVIDIA H100 80GB GPU with INT4 quantization, or two H100s in BF16. Maverick (17B active / 400B total) requires a single H100 DGX node (8x H100 80GB) in BF16 or FP8 quantization. For consumer hardware, 4-bit quantized Scout runs on dual RTX 4090 setups via llama.cpp or Ollama, but with significant speed and quality tradeoffs versus the H100 deployment.

Verdict: 7.5/10

Llama 4 earns a 7.5/10 on three pillars: a permissive open-weight license, native multimodality at 17B active parameters, and the cheapest hosted multimodal API among frontier-class models. What raises it: solid Hugging Face day-one integration, single-H100-DGX deployability for the 400B Maverick, and 200-language pre-training breadth. What holds it back from a higher score: mixed community reception on real coding and long-context tasks (DeepSeek V4 and Qwen 3.6 win current r/LocalLLaMA polls), the 10M-token context being a marketing number rather than a working maximum, and Meta's strategic pivot to closed-source Muse Spark in April 2026 — which signals there will be no Llama 5 under the same open banner.

Score breakdown:

- Features: 7.5/10 — Native multimodal MoE with 17B active params is impressive efficiency, but coding and long-context performance trail current open-weight leaders

- Ease of Use: 8.0/10 — Day-one Hugging Face transformers, TGI, llama.cpp, Ollama, and unsloth quantizations; single-H100-DGX deployable

- Value: 8.5/10 — Free download plus the cheapest hosted multimodal API tier we tracked ($0.08 per 1M input on OpenRouter Scout)

- Support: 6.0/10 — Static models, no continuous updates, Meta has shifted frontier focus to closed-source Muse Spark

Final word: Buy if you need open weights for fine-tuning, on-prem deployment, sovereign data control, or the cheapest multimodal API for prototyping. Pass if you are shopping for absolute best-in-class quality on text-only tasks (DeepSeek V4 wins) or if you want a true Apache-licensed open-source model (Qwen 3.6 wins). For most teams running production AI workloads in 2026, Llama 4 is a credible Plan B but not the obvious Plan A — and Meta's own pivot to Muse Spark validates that read.

Key Features

Pros & Cons

Pros

- Open weights with permissive commercial license up to 700M monthly active users

- Native multimodality at 17B active parameters with strong efficiency (Maverick MMMU 73.4, DocVQA 94.4)

- Cheapest hosted tier among frontier multimodal models — $0.08 per 1M input tokens on OpenRouter Scout

- Single NVIDIA H100 DGX node deployment for 400B-total Maverick (rare for that scale)

- Day-one Hugging Face integration (transformers v4.51.0+, TGI, llama.cpp, Ollama, unsloth quantizations)

- Pre-trained on 200 languages with 12 officially supported, 92.3 percent average on MGSM multilingual benchmark

Cons

- 10M-token context is a marketing number — no Llama 4 variant was trained on sequences above 256k tokens, quality degrades sharply past that

- Mixed community reception on r/LocalLLaMA, especially for coding and long-context summarization (DeepSeek V4 and Qwen 3.6 win 2026 community polls)

- Meta has effectively pivoted away — closed-source Muse Spark launched April 8, 2026 as Meta's new flagship, no Llama 5 roadmap

- Static models with no continuous updates planned, knowledge cutoff is August 2024

- Llama 4 Community License is open-weight but not OSI-approved open source (Qwen 3.6 ships under Apache 2.0)

Best Use Cases

Platforms & Integrations

Available On

Integrations

We're developers and SaaS builders who use these tools daily in production. Every review comes from hands-on experience building real products — DealPropFirm, ThePlanetIndicator, PropFirmsCodes, and many more. We don't just review tools — we build and ship with them every day.

Written and tested by developers who build with these tools daily.

Frequently Asked Questions

What is Llama 4?

Meta's open-weight multimodal MoE flagship — Scout (109B) and Maverick (400B) with 17B active parameters and 10M-token context, free on Hugging Face.

How much does Llama 4 cost?

Llama 4 has a free tier. All features are currently free.

Is Llama 4 free?

Yes, Llama 4 offers a free plan.

What are the best alternatives to Llama 4?

Top-rated alternatives to Llama 4 can be found in our WebApplication category on ThePlanetTools.ai.

Is Llama 4 good for beginners?

Llama 4 is rated 8/10 for ease of use.

What platforms does Llama 4 support?

Llama 4 is available on Hugging Face Hub, OpenRouter, Together AI, Groq, Fireworks, Replicate, Self-hosted (Linux + NVIDIA GPU), Ollama, llama.cpp.

Does Llama 4 offer a free trial?

No, Llama 4 does not offer a free trial.

Is Llama 4 worth the price?

Llama 4 scores 8.5/10 for value. We consider it excellent value.

Who should use Llama 4?

Llama 4 is ideal for: Open-weight foundation for fine-tuning on proprietary data (legal, medical, internal codebases), On-prem and air-gapped deployment for regulated industries (defense, healthcare, finance), Sovereign AI initiatives requiring downloadable weights and 200-language coverage, Multimodal RAG pipelines mixing text and scanned documents (DocVQA 94.4), Cheap multimodal API for prototyping at $0.08 per 1M input tokens on OpenRouter Scout, Reproducible MoE research baseline for academic and industry labs, Multilingual content moderation paired with Llama Guard 4 and Prompt Guard 2, Distillation teacher pattern — use Maverick to distill smaller task-specific student models.

What are the main limitations of Llama 4?

Some limitations of Llama 4 include: 10M-token context is a marketing number — no Llama 4 variant was trained on sequences above 256k tokens, quality degrades sharply past that; Mixed community reception on r/LocalLLaMA, especially for coding and long-context summarization (DeepSeek V4 and Qwen 3.6 win 2026 community polls); Meta has effectively pivoted away — closed-source Muse Spark launched April 8, 2026 as Meta's new flagship, no Llama 5 roadmap; Static models with no continuous updates planned, knowledge cutoff is August 2024; Llama 4 Community License is open-weight but not OSI-approved open source (Qwen 3.6 ships under Apache 2.0).

Ready to try Llama 4?

Start with the free plan

Try Llama 4 Free →