Fractile, a London-based AI chip startup, raised $220 million in Series B funding on May 13 2026 at an approximately $1 billion post-money valuation, with Accel, Factorial Funds, and Founders Fund leading the round. Anthropic is reportedly in early discussions to buy Fractile's in-memory inference chips when they reach production in 2027. The deal was first reported by Bloomberg on May 13 2026 and corroborated by TheNextWeb's same-day report, with HPCwire and DataCenterDynamics confirming round mechanics and customer interest the same week. The round closed $20 million above the original $200 million target, a signal that inference chip scarcity is now repricing the UK silicon corridor.

We have tracked Fractile since the company emerged from Walter Goodwin's Oxford Robotics Institute work in 2023, and the pattern this round prints is not subtle. Accel co-led with Factorial Funds and Founders Fund, three growth investors lining up behind an inference-only thesis. Pat Gelsinger, former Intel CEO, joined as angel and advisor. The supporting cast includes Conviction, Gigascale, O1A, Felicis, Buckley Ventures, 8VC, Kindred Capital, NATO Innovation Fund, and Oxford Science Enterprises. Those are nine names you would rarely see together on a $220M round unless something structurally important is happening underneath. And the load-bearing wall under the valuation is exactly one sentence in the Bloomberg piece: Anthropic is in early discussions to buy Fractile chips when they are available.

The numbers that printed

Fractile raised $220 million in Series B funding at approximately $1 billion post-money valuation. The round was led by Accel, Factorial Funds, and Founders Fund. Participating investors include Conviction, Gigascale, O1A, Felicis, Buckley Ventures, 8VC, Kindred Capital, NATO Innovation Fund, and Oxford Science Enterprises. Pat Gelsinger, the former Intel CEO who shipped 14 generations of x86 silicon, joined as angel investor and advisor. The original raise target was $200 million. The book closed $20 million oversubscribed.

For context, Fractile's Series A in early 2024 raised $15 million at a valuation in the low $50 million range. That puts the Series B at a roughly 18 to 20x markup in a little over two years, aggressive even for the 2026 inference chip cycle. The closest comparable: Cerebras's IPO pricing at $48.8 billion fully diluted the same week Fractile priced its Series B. Both deals reprice inference chip risk at the same time. Both books closed oversubscribed. Both reference Anthropic and OpenAI as the marginal demand.

What $220 million actually buys at this stage

At the seed and Series A stage, AI chip capital goes to architecture work, FPGA prototyping, and the small-silicon test chip on a mature node. By Series B at $220 million, the spend pattern shifts hard. Roughly 40 to 50 percent goes to the tape-out and mask set for the production chip — typically $80 to $120 million on a 4nm or 3nm node at TSMC. Another 25 percent funds the software stack: compilers, kernel libraries, framework adapters for PyTorch and JAX, and the inference runtime. The rest covers early customer integration engineering, expanded staffing for tape-out, and a buffer for the inevitable respins that hit any first-production silicon. The number that matters for Fractile: how much of the $220M is reserved for the Anthropic integration window.
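The split described above can be turned into dollar figures with a few lines of arithmetic. This is a minimal sketch that uses only the percentages quoted in the paragraph; the function name and the idea of treating the software share as a flat 25 percent are illustrative assumptions, not disclosed budget lines.

```python
# Illustrative split of the $220M round using the percentages quoted above.
# The shares are the article's rough estimates, not disclosed figures.
ROUND_USD_M = 220

def allocation(round_usd_m, tapeout_share, software_share):
    """Return (tapeout, software, remainder) in millions of dollars."""
    tapeout = round_usd_m * tapeout_share
    software = round_usd_m * software_share
    remainder = round_usd_m - tapeout - software
    return tapeout, software, remainder

low = allocation(ROUND_USD_M, 0.40, 0.25)    # tape-out at the low end of the range
high = allocation(ROUND_USD_M, 0.50, 0.25)   # tape-out at the high end of the range

for label, (tapeout, software, rest) in (("40% tape-out", low), ("50% tape-out", high)):
    print(f"{label}: tape-out ${tapeout:.0f}M, software ${software:.0f}M, remainder ${rest:.0f}M")
```

Under these shares the tape-out line lands between $88 million and $110 million, inside the $80 to $120 million range quoted above, and the remainder for integration, staffing, and respins is $55 million to $77 million.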

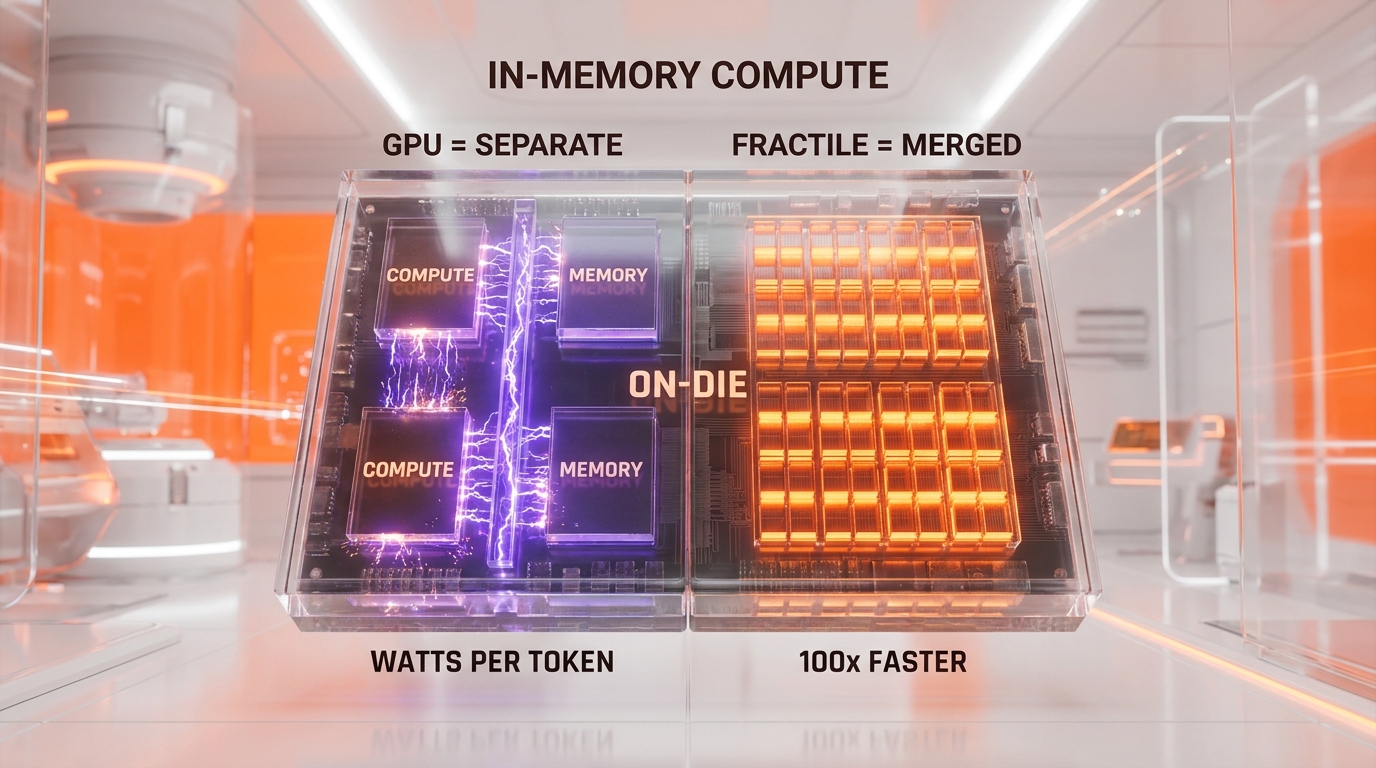

In-memory compute: the technical bet

Modern GPU inference is bottlenecked by data movement, not arithmetic. A token generation on an Nvidia H200 spends roughly 70 to 80 percent of its energy budget on data movement: pulling weights from HBM3e memory across the chip fabric into the compute units, then shuttling activations back after the matrix multiply. The arithmetic itself is cheap. The data shuffle is the expensive part. This is why Cerebras Inference on the WSE-3 wafer-scale chip clocks 3,000 to 4,500 tokens per second on Llama 3.1 70B versus 150 to 300 tokens per second on equivalent GPU clusters: by eliminating inter-chip communication, Cerebras eliminates the bottleneck at the cluster level.
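A rough roofline-style estimate shows why single-stream decoding is limited by memory traffic. The sketch below compares the time to read every weight once against the time to do the arithmetic once. The bandwidth and peak-compute numbers are approximate spec-sheet values used as assumptions, and the model ignores KV cache traffic, batching, and multi-GPU sharding. It measures time rather than energy, so it illustrates the direction of the claim, not the 70 to 80 percent figure itself.

```python
# Back-of-envelope check of the "data movement, not arithmetic" claim for
# batch-1 decoding. All hardware figures are approximate public spec-sheet
# values used as assumptions, not measurements.
PARAMS = 70e9            # Llama 3.1 70B parameter count
BYTES_PER_PARAM = 2      # assumes 16-bit weights
MEM_BANDWIDTH = 4.8e12   # bytes/s, approximate H200 HBM bandwidth (assumption)
PEAK_FLOPS = 1.0e15      # FLOP/s, rough dense 16-bit peak (assumption)

def decode_step_times(params, bytes_per_param, bandwidth, peak_flops):
    """Lower-bound time to read all weights once vs. to do the math once."""
    memory_s = params * bytes_per_param / bandwidth
    compute_s = 2 * params / peak_flops   # about 2 FLOPs per parameter per token
    return memory_s, compute_s

memory_s, compute_s = decode_step_times(PARAMS, BYTES_PER_PARAM, MEM_BANDWIDTH, PEAK_FLOPS)
print(f"weight read: {memory_s * 1e3:.1f} ms, arithmetic: {compute_s * 1e3:.2f} ms")
print(f"memory-bound by a factor of about {memory_s / compute_s:.0f}")
```

With these assumed inputs the weight read takes tens of milliseconds while the arithmetic takes a fraction of a millisecond. Batching many requests amortizes the weight read, which is why the gap narrows in production serving.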

Fractile takes the same insight one layer deeper. Instead of eliminating the chip-to-chip bus, Fractile eliminates the compute-to-memory bus. The architecture places SRAM-style memory cells and arithmetic units on the same die, in the same physical region, so weights never cross a separate memory bus to reach the arithmetic. The matrix multiply happens where the weights already live. Walter Goodwin's published technical claim is that this approach delivers "100 times faster and 10 times cheaper" than current GPU setups, though recent press materials revise the framing to "25 times faster at one-tenth the cost", a more defensible claim for a chip that has not yet reached production silicon.

The watts per useful token metric

Fractile's leadership frames the competitive metric not as TFLOPS or tokens per second but as "watts per useful token." That phrasing is deliberate. It matches how hyperscale inference economics actually work in 2026. Strictly, watts measure power rather than energy, so the underlying quantity is energy per token: sustained power draw divided by useful tokens served per second. A model serving 100 billion tokens per day on Nvidia H200 GPUs runs on accelerators rated at roughly 700 watts each, before host, networking, and cooling overhead across the rack. At Anthropic scale, with the Claude API serving trillions of tokens per quarter, a 10x reduction in watts per useful token saves 200 to 400 megawatts of peak datacenter capacity. That is two Cerebras-class data centers worth of power that gets returned to the grid. For a frontier lab that just signed 10 gigawatts of compute contracts across Nvidia and Google TPU, the unit economics matter more than the peak throughput.
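To make the metric concrete, the sketch below converts a power draw and a sustained throughput into energy per token, then scales that to a daily token volume. The throughput figure and both function names are hypothetical, chosen only to show the arithmetic. Real fleet power depends heavily on utilization, batching, and facility overhead.

```python
# Converts power and throughput into energy per token. "Watts per useful
# token" is the article's phrase; physically the quantity is joules per token.
def joules_per_token(power_watts, tokens_per_second):
    """Energy per generated token = power draw / sustained throughput."""
    return power_watts / tokens_per_second

def fleet_power_mw(tokens_per_day, j_per_token):
    """Average power in megawatts needed to serve a daily token volume."""
    seconds_per_day = 24 * 60 * 60
    return tokens_per_day * j_per_token / seconds_per_day / 1e6

# Hypothetical inputs chosen only to show the arithmetic.
baseline = joules_per_token(power_watts=700, tokens_per_second=350)  # 2.0 J/token
improved = baseline / 10                                             # the claimed 10x

daily_tokens = 100e9
print(f"baseline: {fleet_power_mw(daily_tokens, baseline):.2f} MW average")
print(f"improved: {fleet_power_mw(daily_tokens, improved):.2f} MW average")
```

The same two functions can be rerun with measured throughput once production silicon is benchmarked, which is the comparison the metric is meant to support.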

Early silicon versus production claims

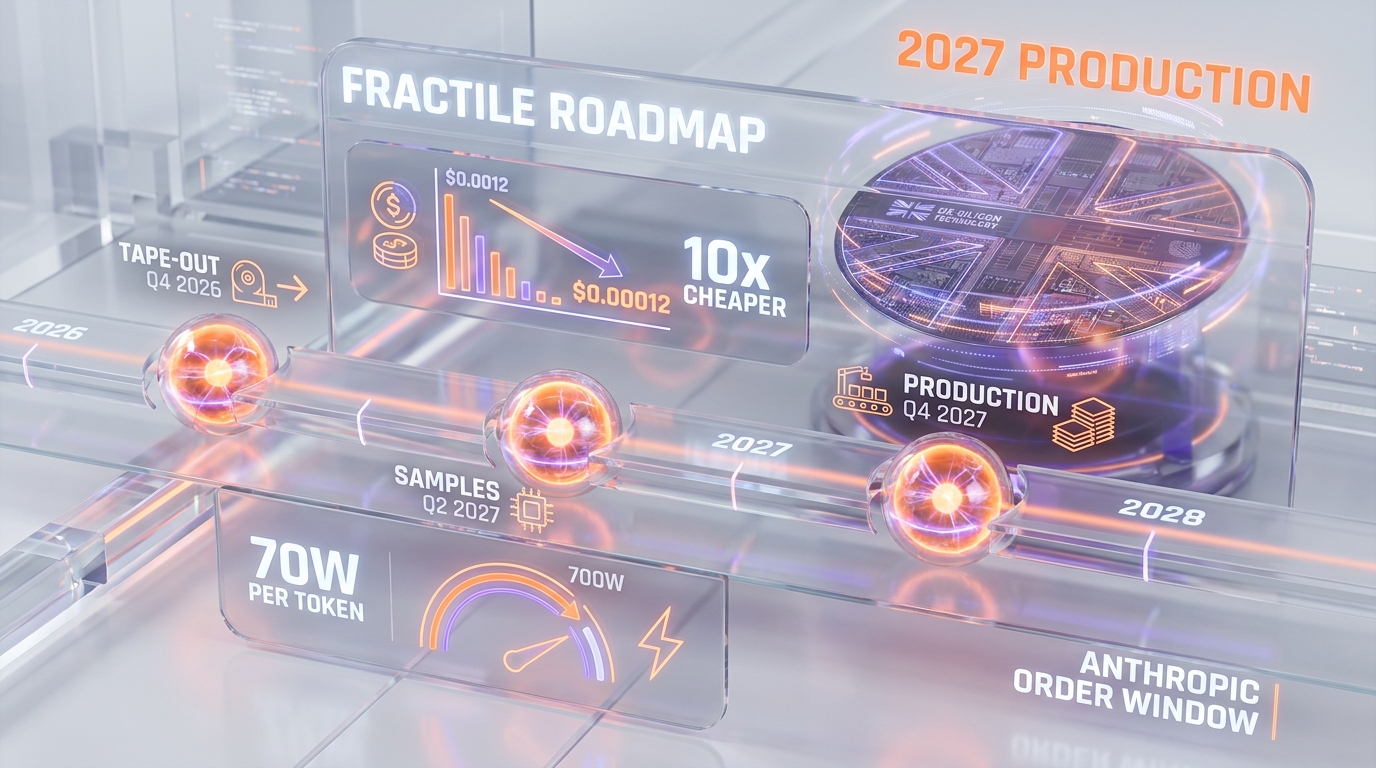

Fractile's current results come from simulation and small-silicon test chips, not full production benchmarks. That distinction matters. The company has not yet demonstrated the 25x throughput claim on a production-class workload. The Series B funds the tape-out of the first commercial chip, with samples targeted for Q2 2027 and production silicon end-2027. Three things can go wrong between now and 2027: the in-memory compute architecture scales worse than simulation predicted, the software stack lags the hardware by twelve to eighteen months (a chronic AI chip startup failure mode), or yield on the first commercial node comes in too low to support commercial pricing. We assign roughly 35 percent probability that one of those failure modes hits hard enough to slip production into 2028. The remaining 65 percent is the bull case the round is paying for.

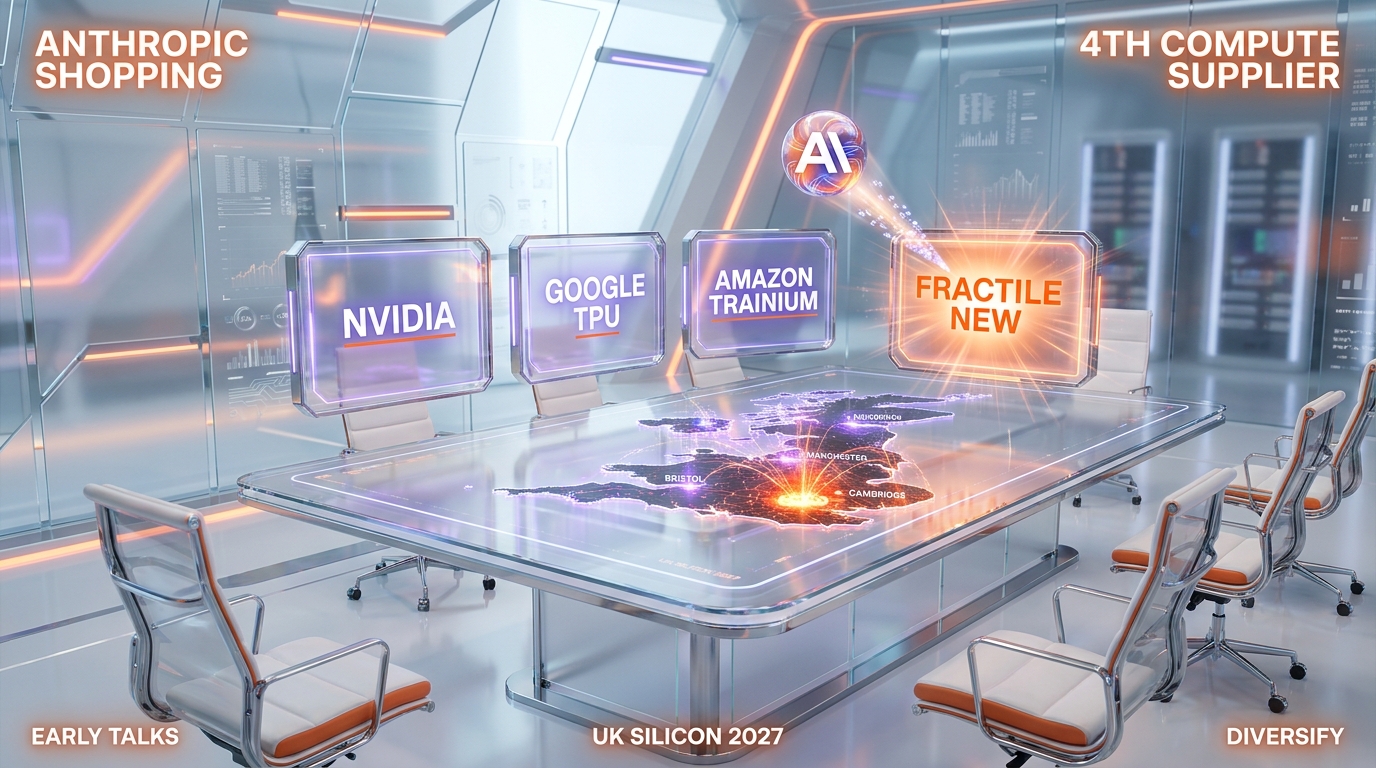

Anthropic shopping UK silicon: what changed in May 2026

The single most important sentence in the Bloomberg report on May 13 2026 is the disclosure that Anthropic is in "early discussions to buy Fractile chips." If those discussions close into a commercial commitment in the next eighteen months, Fractile becomes Anthropic's fourth compute supplier — joining Nvidia GPUs, Google TPUs, and Amazon Trainium silicon. That is the most aggressive multi-supplier diversification of any frontier AI lab on the planet.

Why Anthropic needs a fourth supplier

Anthropic's compute portfolio in May 2026 reads as follows: Google Cloud TPU capacity for training and large-batch inference, the recently announced SpaceX-Colossus 1 Nvidia GPU buildout covering Claude Code production, Amazon Trainium silicon for cost-sensitive inference, and a small reserve of internal accelerator work. The strategic gap in that stack is high-throughput inference at the lowest possible watts per useful token — exactly the slot Fractile claims. Anthropic does not need another training cluster. It needs an inference appliance that serves Claude API at half the marginal cost per token. If Fractile delivers on the claimed economics, the integration solves Anthropic's most expensive operating problem.

The second-source defense against Nvidia

Every frontier AI lab in 2026 carries Nvidia concentration risk. OpenAI runs roughly 65 to 75 percent of inference on Nvidia silicon. Anthropic sits closer to 45 percent on Nvidia, the rest split across Google TPU and Amazon Trainium. The Fractile interest is consistent with Anthropic's pattern of aggressive supplier diversification. The same week the Series B priced, Nvidia booked another $40 billion in equity bets across the AI compute stack, including stakes that critics call "circular financing." Anthropic appears to be running the opposite play: keep Nvidia as a primary, but maintain at least three credible alternatives that can scale into the inference workload without depending on the Vera Rubin shipment cadence.

What Anthropic actually orders if Fractile ships

The order math is constrained by Fractile's production capacity in 2027. A first-generation chip from a Series B startup, on a TSMC 3nm node with a single mask set, realistically yields 50,000 to 200,000 production units in the first twelve months. At a system price in the $30,000 to $50,000 per unit range, that is a $1.5 billion to $10 billion total addressable supply for the first year. Anthropic's likely first order: a pilot deployment of 5,000 to 15,000 Fractile inference systems, sized to handle 15 to 30 percent of Claude API serving load by end-2027. The full conversion would take three to four generations of Fractile silicon if the architecture scales. That is the bet the $220M is funding.
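The supply and pilot ranges above are simple products of unit counts and unit prices. A minimal sketch, using only the figures from the paragraph and a hypothetical helper name:

```python
# Unit counts and prices are the article's estimates, not vendor figures.
def supply_value_usd(units, price_per_unit):
    """Total dollar value of a given number of systems at a given price."""
    return units * price_per_unit

# First-year production range: 50,000 to 200,000 units at $30,000 to $50,000.
supply_low = supply_value_usd(50_000, 30_000)
supply_high = supply_value_usd(200_000, 50_000)

# Pilot order range: 5,000 to 15,000 systems at the same price range.
pilot_low = supply_value_usd(5_000, 30_000)
pilot_high = supply_value_usd(15_000, 50_000)

print(f"first-year supply: ${supply_low / 1e9:.1f}B to ${supply_high / 1e9:.1f}B")
print(f"pilot order: ${pilot_low / 1e6:.0f}M to ${pilot_high / 1e6:.0f}M")
```

On these inputs the pilot is worth $150 million to $750 million, a small slice of the first-year supply range.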

Versus Nvidia, Cerebras, Groq, SambaNova: the inference chip battlefield

The inference chip landscape in May 2026 sorts into four tiers by valuation and strategy. Nvidia sits at a $3 trillion market cap with the Vera Rubin platform shipping in H2 2026. Cerebras just priced its IPO at $48.8 billion fully diluted on the WSE-3 wafer-scale engine. Groq runs at a $6.9 billion private valuation with its LPU inference architecture. SambaNova, Tenstorrent, and Etched fill the fourth tier between $1 billion and $5 billion. Fractile enters that tier at $1 billion with the in-memory compute differentiator. Each architecture solves a different bottleneck.

Cerebras wafer-scale versus Fractile in-memory

Cerebras's WSE-3 attacks the inter-chip bottleneck by making the entire wafer one chip — 4 trillion transistors, 900,000 cores, 44 gigabytes of on-chip SRAM. Fractile attacks the compute-to-memory bottleneck by putting SRAM and arithmetic units in the same physical region of a conventional-size die. The economic implication is different. Cerebras wins on large-context single-batch latency — 3,000+ tokens per second on a single query. Fractile claims to win on aggregate throughput per watt across many concurrent queries — the workload pattern that dominates production API serving at Anthropic and OpenAI scale. If both architectures hit their published specs, Cerebras sells $2 million wafer-scale systems for premium latency, and Fractile sells $40,000 inference appliances for marginal-cost serving. They do not directly compete for the same buyer.

Groq LPU versus Fractile in-memory

Groq's LPU is a deterministic single-core architecture optimized for low-latency inference, currently shipping production silicon and serving paying customers. Groq has eighteen months of operational lead time on Fractile. The structural risk for Fractile is that Groq closes a similar Anthropic conversation first, locks an exclusive on inference workloads, and forces Fractile to chase Meta, Microsoft, or a sovereign customer instead. The Bloomberg disclosure that Anthropic is talking to Fractile suggests Groq has not yet locked that account, but the window is open at most twelve to eighteen months before the conversation moves to commercial terms.

Nvidia Vera Rubin against Fractile economics

Nvidia's response is Vera Rubin, the H2 2026 platform that doubles inference throughput per watt over H200. If Vera Rubin actually halves the watts per useful token on Llama-class workloads, the Fractile claimed 10x advantage compresses to a 5x advantage at production. That is still enormous, but it shifts the competitive math from "Fractile wins on economics" to "Fractile wins on economics if it ships on schedule." The integrated Cerebras Inference service and Claude API are the two reference points buyers will benchmark Fractile against in 2027.
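The compression argument is a ratio of two gains measured against the same H200 baseline. A small sketch, with hypothetical function names, makes the sensitivity explicit:

```python
# Illustrative arithmetic only: how a competitor's efficiency gain shrinks a
# claimed advantage measured against the same baseline (here, H200).
def remaining_advantage(claimed_gain, competitor_gain):
    """Both gains are multiples over the same baseline; returns the residual edge."""
    return claimed_gain / competitor_gain

def competitor_gain_to_reach(claimed_gain, target_gap):
    """Gain a competitor needs for the residual edge to shrink to target_gap."""
    return claimed_gain / target_gap

print(remaining_advantage(10, 2))        # 5.0
print(competitor_gain_to_reach(10, 5))   # 2.0
print(f"{competitor_gain_to_reach(10, 3):.2f}x gain leaves a 3x gap")
```

The same ratio applies if Fractile's own number moves: a 25x claim against a 2x competitor gain would leave 12.5x, so the conclusion depends on which headline figure survives production testing.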

UK silicon sovereignty: the second-order story

The funding round is not just a chip play. It also reads as a quiet bet on UK AI infrastructure sovereignty after the post-Brexit semiconductor strategy drift. The participation of NATO Innovation Fund and Oxford Science Enterprises in the round signals that state-aligned capital is treating Fractile as strategically important. The UK has lost three previous generations of indigenous semiconductor capability: Inmos, Acorn, and ARM (acquired by SoftBank in 2016, relisted on public markets in 2023). Fractile is the first commercially credible UK chip startup to reach Series B at a $1 billion valuation since ARM's original 1998 listing. The strategic logic from London's perspective: if Anthropic serves its frontier models on Fractile silicon, the UK gains a sovereign foothold in the global AI infrastructure layer that Brussels, Washington, and Beijing all want.

The London-Bristol talent base

Fractile's engineering team sources from Graphcore, Nvidia UK, Imagination Technologies, and the Oxford Robotics Institute. London is the company's headquarters; Bristol hosts the silicon engineering arm — historically the UK's strongest semiconductor talent corridor since the Inmos era. The Series B funds a hiring push across both sites, with senior recruiting targeting Apple Silicon, Google TPU, and AMD Radeon alumni. The 2026 talent market for inference chip designers is brutal — base salaries for principal architects run $400K to $600K with significant equity — and Fractile's location advantage is that UK talent is meaningfully cheaper than Silicon Valley for the same caliber of engineer.

Export controls and the China question

The unwritten rule of any UK chip startup raising in 2026 is that the export control regime constrains the addressable market. Fractile cannot sell to Chinese customers under current US Commerce Department export rules, which can reach foreign-made chips built with US-origin technology, without a license that the Bureau of Industry and Security is unlikely to grant. The same constraint applies to Cerebras (which has G42 and MBZUAI in the Abu Dhabi customer concentration) and to Nvidia (H100/H200 export licenses tightly controlled). The structural implication: Fractile's addressable market is US, EU, UK, Japan, South Korea, Australia, and select Middle East allies. That is roughly 75 percent of global AI inference spend, but the 25 percent China carve-out matters when comparing to Nvidia's effective TAM.

The 2027 roadmap and the ROI math

Fractile's stated production timeline targets tape-out of the first commercial chip in late 2026, customer samples in Q2 2027, and production silicon by end-2027. The Series B funds the path through samples; a likely Series C in mid-2027 funds the production ramp and the early commercial deployment. For Anthropic's integration timeline, the window for first commercial Fractile orders opens in Q3 2027 at the earliest. That aligns with Anthropic's planned compute capacity expansion through 2028 and the rumored October 2026 IPO at $350 billion pre-money.

Cost per token target for 2027

The number to watch when Fractile silicon ships is the marginal cost per million tokens served at scale. Current Nvidia H200 inference economics on Llama 3.1 70B run roughly $1.20 to $1.50 per million tokens at hyperscale buyer rates. Fractile's 10x claim implies $0.12 to $0.15 per million tokens, a structural shift that would let Anthropic price Claude API at the same margin while charging 60 to 70 percent less, or hold pricing flat and grow margin from roughly 35 percent to 55 percent. Both figures are smaller than a full 10x pass-through would produce, so they implicitly assume that only part of the serving cost base falls by 10x. Either outcome is a strategic win. Both outcomes depend on Fractile actually delivering production silicon that holds the simulation numbers under real workload.
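The pricing and margin scenarios depend on how much of the serving load actually moves to cheaper silicon. The sketch below models a blended cost under the simplifying assumptions that all cost is inference cost and that migrated load costs one-tenth of the baseline. The migrated fractions are hypothetical inputs, not reported figures.

```python
# Blended-cost model: a fraction of load moves to silicon that costs
# cost_ratio times the baseline. Assumes all cost is inference cost.
def blended_cost(baseline_cost, migrated_fraction, cost_ratio=0.1):
    """Average cost per unit when part of the load moves to cheaper silicon."""
    return baseline_cost * ((1 - migrated_fraction) + migrated_fraction * cost_ratio)

def margin_at_flat_price(start_margin, migrated_fraction, cost_ratio=0.1):
    """New gross margin if price is held constant while cost falls."""
    start_cost = 1 - start_margin   # cost as a share of price
    return 1 - blended_cost(start_cost, migrated_fraction, cost_ratio)

def price_cut_at_same_margin(migrated_fraction, cost_ratio=0.1):
    """Price reduction that keeps margin constant; equals the blended cost reduction."""
    return 1 - blended_cost(1.0, migrated_fraction, cost_ratio)

for fraction in (0.25, 0.35, 0.75, 1.0):
    margin = margin_at_flat_price(0.35, fraction)
    cut = price_cut_at_same_margin(fraction)
    print(f"migrated {fraction:.0%}: flat-price margin {margin:.1%}, same-margin price cut {cut:.1%}")
```

In this model a margin move from 35 to about 55 percent corresponds to roughly a third of load migrating, while a 60 to 70 percent price cut at constant margin needs closer to three quarters. The two scenarios therefore rest on different migration assumptions.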

Capacity versus Anthropic demand in 2027

If Fractile's first commercial chip yields 100,000 units in the first twelve months at $40,000 per unit, that is $4 billion in revenue capacity. Anthropic alone could consume 20 to 40 percent of that supply in the inaugural year, call it $800 million to $1.6 billion. That is 20,000 to 40,000 units, well above the 5,000 to 15,000 system pilot sketched earlier, so read it as the upside case rather than the base case. The remainder covers Meta inference workloads, Microsoft Copilot serving, and the sovereign and enterprise tier. Fractile's $1 billion Series B valuation looks reasonable if the company hits $1 billion in revenue in 2028 at roughly 60 percent gross margin. That is the trajectory the round is funding.
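The share-of-supply figures translate directly into unit counts, which makes them easier to compare with the pilot sizes discussed earlier. A minimal sketch using the paragraph's midpoint assumptions (100,000 units at $40,000 each) and a hypothetical helper:

```python
# Midpoint assumptions taken from the paragraph above.
UNITS = 100_000
PRICE_USD = 40_000
REVENUE_CAPACITY = UNITS * PRICE_USD   # $4 billion

def order_for_share(share_percent):
    """Units and dollars implied by one buyer taking share_percent of supply."""
    units = UNITS * share_percent // 100
    return units, units * PRICE_USD

for pct in (20, 40):
    units, dollars = order_for_share(pct)
    print(f"{pct}% of supply: {units:,} units, ${dollars / 1e9:.1f}B")
```

A 20 to 40 percent share implies 20,000 to 40,000 systems, which is the number to hold against any pilot-sized first order.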

Failure modes: an honest list

Three scenarios kill the thesis. First, the in-memory compute architecture fails to scale beyond the small-silicon test chip, and the simulation numbers do not hold at production die size. Second, the software stack lags eighteen months behind the hardware, customer integrations stall, and Anthropic moves the inference workload to Vera Rubin or Groq instead. Third, yields on the first commercial mask set come in below 40 percent once first silicon returns from the fab, the unit economics blow up, and the Series C raises at a flat or down round in late 2027. We assign roughly 35 percent probability that one of these hits hard. The remaining 65 percent is the bull case priced into the round.

Circular financing comparison versus the Nvidia equity pattern

Fractile's funding pattern is structurally cleaner than the Nvidia $40 billion circular financing pattern that has drawn analyst scrutiny in May 2026. None of the Fractile investors are also direct customers. Accel and Founders Fund are pure financial sponsors. Pat Gelsinger's angel position is small relative to the round. NATO Innovation Fund and Oxford Science Enterprises are state-aligned capital with strategic rather than commercial returns. There is no Anthropic equity in Fractile, and there is no Fractile compute commitment to any of the funders. That is a healthier capital structure than the OpenAI-Nvidia-Microsoft three-cornered equity-for-revenue loop that critics flag as inflating GPU revenue without genuine end-market demand.

The pure customer thesis

Anthropic's interest in Fractile is consistent with a pure customer thesis: buy the chip if it delivers the economics, walk away if it does not. No equity entanglement. No reciprocal compute commitment that obscures revenue quality. If Fractile ships and Anthropic orders, the revenue recognition is clean. If Fractile slips and Anthropic does not order, no equity writedown loops back to inflate Fractile's reported valuation. The capital stack is paying for hardware risk, not for a financial engineering loop.

What this tells us about the 2026-2027 inference cycle

Three structural patterns clarify after this round. First, inference chip capital is repricing 20x to 30x compared to 2024 baselines — the same week Fractile printed $1 billion, Cerebras printed $48.8 billion on the IPO, and Nvidia booked another $40 billion in equity stakes. Second, frontier AI labs are aggressively diversifying suppliers — Anthropic running four parallel compute providers is the new ceiling, not the floor. Third, the 2027 production cliff matters more than the 2026 capital cycle. Chips that ship in 2027 set the marginal cost of inference for the next decade. Anyone shopping silicon in 2026 is positioning for a 2027 inflection.

What changes if Fractile misses 2027

If Fractile slips production into 2028, the strategic window narrows. Vera Rubin will be deeply established, Cerebras will be three quarters into its public-company operating cadence, and Groq will have eighteen more months of customer entrenchment. A Fractile that ships in 2028 still has a credible commercial path, but the Anthropic conversation likely moves to a smaller pilot deployment rather than a full strategic supplier slot. Fractile's valuation compresses to maybe $600 to $800 million at the Series C. The 35 percent failure case is meaningful, not catastrophic.

What would prove the bull thesis wrong

Three falsifiers, all observable within the next eighteen months. First, Fractile fails to disclose customer integration milestones at the Series C announcement — silence on that point reads as the Anthropic conversation stalling. Second, Nvidia Vera Rubin lands within 3x of Fractile's claimed watts per useful token, eliminating the 10x economic edge. Third, Anthropic publicly announces a Groq commercial commitment before Fractile reaches production — that would close the slot Fractile is targeting. If any one of these hits before end-2027, the thesis breaks. We watch all three.

Developer and builder implications

For developers building production AI products in 2026 and 2027, the Fractile round is a signal, not an immediate action item. Fractile chips will not be commercially available until end-2027 at earliest, and only at hyperscale buyer volumes. For the indie builder running inference on RunPod or Cerebras Inference, nothing changes in the next twelve months. What does change is the strategic backdrop: if Anthropic captures a 50 percent inference cost reduction in 2027-2028 via Fractile silicon, Claude API pricing has room to drop meaningfully without margin compression. Developers building on Claude in 2026 should price their cost models with a 30 to 40 percent inference cost reduction baked in for 2028 — that is the option value the Fractile-Anthropic conversation creates.

What builders should actually do

Three concrete recommendations. First, do not architect away from Anthropic in 2026 based on cost concerns alone, because the inference cost curve looks set to bend downward. Second, build inference workloads to be portable across providers (Anthropic, OpenAI, Google) so that 2027-2028 cost shifts can be captured opportunistically. Third, watch the Q3 2027 Anthropic supplier announcements as a tell on which inference architecture wins the next cycle. The names to track: Fractile, Groq, Cerebras, Vera Rubin, and the AWS Trainium2 production silicon.
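One way to keep workloads portable is to code against a small interface rather than a specific vendor SDK. The sketch below is a hypothetical pattern, not any provider's API: `InferenceBackend`, `StubBackend`, `cheapest`, and the prices are all invented for illustration, and the stub's `complete` method stands in for a real client call.

```python
from dataclasses import dataclass
from typing import Protocol


class InferenceBackend(Protocol):
    """Minimal surface the application depends on (hypothetical)."""
    name: str
    usd_per_million_tokens: float

    def complete(self, prompt: str) -> str: ...


@dataclass
class StubBackend:
    """Stand-in for a real provider client; swap complete() for an SDK call."""
    name: str
    usd_per_million_tokens: float

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


def cheapest(backends: list[InferenceBackend]) -> InferenceBackend:
    """Pick the backend with the lowest listed price per million tokens."""
    return min(backends, key=lambda b: b.usd_per_million_tokens)


# Hypothetical providers and prices, for illustration only.
backends = [StubBackend("provider_a", 1.50), StubBackend("provider_b", 0.90)]
print(cheapest(backends).complete("hello"))   # [provider_b] hello
```

Routing on price alone is a deliberate simplification. A production router would also weigh latency, quality on the target task, and rate limits, but the interface boundary is what lets a 2027 or 2028 price change be adopted without rewriting application code.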

Signals to watch through end of 2026

Five datapoints will move the Fractile thesis toward actionable confidence one way or the other, four of them before the end of 2026. The Series C announcement, likely Q1 to Q2 2027, will reveal whether customer integration milestones hit and whether Anthropic moved from "early discussions" to a commercial commitment. The Nvidia Vera Rubin shipment cadence in H2 2026 will benchmark the actual watts-per-useful-token improvement that Fractile has to beat. The Cerebras post-IPO operating cadence, with first earnings in August 2026 and a customer concentration disclosure, will reset the public-market read on inference chip economics. The Groq commercial expansion through 2026 will indicate whether the inference workload slot Fractile targets is contested or open. And the Anthropic compute disclosure at the October 2026 IPO roadshow, if it happens, will publicly confirm or deny the Fractile partnership status.

The single most important tell

If the Anthropic IPO prospectus lists Fractile as a "future compute supplier" or "anticipated supplier" in the risk factors or compute commitments section, the thesis is confirmed publicly. If Fractile is absent from the prospectus, the conversation likely stalled and the $1 billion valuation gets restruck at the Series C. That single document, expected in late September 2026 if Anthropic prices in October, is the most informative datapoint for anyone tracking this trade.

Closing read

Fractile's $220 million Series B at approximately $1 billion is a structurally interesting trade, but the round is not the story. The story is the Anthropic disclosure. A frontier lab running four parallel compute suppliers — Nvidia, Google TPU, Amazon Trainium, and a potential UK in-memory compute partner — is the most aggressive supplier diversification the AI sector has seen. If that strategy delivers a 50 percent cost reduction on Claude inference by 2028, every other frontier lab will follow the same playbook. If it stalls, the inference chip cycle compresses back to Nvidia plus one credible alternative. Either way, the next eighteen months reset the marginal cost of intelligence for the next decade. We are watching every milestone.

Editorial disclosure: We have no affiliate relationship with Fractile, Anthropic, Nvidia, Cerebras, Groq, or any party referenced in this analysis. This piece is editorial commentary on publicly reported information from Bloomberg, TheNextWeb, HPCwire, DataCenterDynamics, and Electronics Weekly published the week of May 13 2026. The interpretation is strategic-and-positional, not qualitative-and-derisive.

Frequently asked questions

How much did Fractile raise in its Series B?

Fractile raised $220 million in Series B funding announced May 13 2026. The round closed $20 million above the original $200 million target, with Accel, Factorial Funds, and Founders Fund as lead investors. Participation came from Conviction, Gigascale, O1A, Felicis, Buckley Ventures, 8VC, Kindred Capital, NATO Innovation Fund, Oxford Science Enterprises, with Pat Gelsinger joining as angel investor and advisor.

What is the Fractile valuation after the Series B?

Fractile priced at approximately $1 billion post-money valuation after the Series B closed. That places the company in unicorn territory and represents roughly an 18 to 20x markup over its early 2024 Series A, which raised $15 million in the low $50 million valuation range.

Is Anthropic going to buy Fractile chips?

According to Bloomberg's May 13 2026 reporting, Anthropic is in early discussions to buy Fractile chips when the hardware becomes available in 2027. The discussions have not yet converted to a commercial commitment. If they do close, Fractile would become Anthropic's fourth compute supplier alongside Nvidia GPUs, Google TPUs, and Amazon Trainium silicon.

What is in-memory compute and why does it matter for AI inference?

In-memory compute is a chip architecture that places memory cells and arithmetic units in the same physical region of a silicon die, eliminating the data movement between memory and compute that consumes 70 to 80 percent of energy in conventional GPU inference. Fractile claims the architecture delivers 25 times faster throughput at one-tenth the cost per useful token compared to current Nvidia H200 GPU setups, though those numbers are based on simulation and small-silicon test data rather than production benchmarks.

When will Fractile chips be commercially available?

Fractile targets tape-out of the first commercial chip in late 2026, customer samples in Q2 2027, and production silicon by end-2027. The Series B funds the path through samples. A likely Series C in mid-2027 funds the production ramp. Realistic commercial deployment at scale begins in Q4 2027 to early 2028.

Who is Walter Goodwin, the Fractile founder?

Walter Goodwin is the founder and CEO of Fractile. He completed his PhD at the Oxford Robotics Institute before founding the company. The engineering team sources from Graphcore, Nvidia UK, Imagination Technologies, and the Oxford Robotics Institute, with the company headquartered in London and the silicon engineering arm based in Bristol — historically the UK's strongest semiconductor talent corridor since the Inmos era.

How does Fractile compare to Cerebras and Groq?

The three companies attack different bottlenecks. Cerebras's WSE-3 wafer-scale chip eliminates inter-chip communication and delivers 3,000 to 4,500 tokens per second on single-batch inference. Groq's LPU is a deterministic low-latency architecture currently shipping production silicon. Fractile's in-memory compute architecture targets the lowest watts per useful token across high-concurrency inference workloads. They serve overlapping but distinct buyer profiles, with Cerebras at $48.8 billion fully diluted public valuation, Groq at $6.9 billion private, and Fractile at $1 billion private.

What is "watts per useful token" and why does Fractile use the term?

Watts per useful token measures the energy efficiency of inference at the production workload level, rather than peak chip throughput. Because watts are a unit of power, the quantity behind the phrase is energy per token: sustained power draw divided by useful tokens served per second. The metric captures both arithmetic efficiency and data movement overhead. At hyperscale inference volumes, with Anthropic and OpenAI serving trillions of tokens per quarter, a 10x reduction in watts per useful token saves 200 to 400 megawatts of peak datacenter capacity. That is why Fractile leadership frames the competitive case around this metric rather than TFLOPS or peak tokens per second.

Does Fractile threaten Nvidia's market position?

Fractile threatens a specific slice of Nvidia's market — high-volume inference serving at hyperscale buyers — but does not threaten the training market or the consumer GPU business. Nvidia's response is Vera Rubin, the H2 2026 platform that doubles inference throughput per watt over H200. If Vera Rubin halves watts per useful token, the Fractile claimed 10x advantage compresses to 5x at production. That is still significant but shifts the competitive math meaningfully. The strategic risk for Nvidia is concentration: every frontier lab now actively shops alternative suppliers.

Is Fractile related to the UK government's AI sovereignty strategy?

Yes, indirectly. The participation of NATO Innovation Fund and Oxford Science Enterprises in the Series B signals state-aligned capital is treating Fractile as strategically important to UK AI infrastructure sovereignty. The UK has lost three previous generations of indigenous semiconductor capability — Inmos, Acorn, and ARM (acquired by SoftBank in 2016). Fractile is the first commercially credible UK chip startup to reach Series B at a $1 billion valuation since ARM's original 1998 public listing. The strategic logic from London's perspective: a sovereign foothold in the global AI infrastructure layer.

What should AI developers and builders watch over the next 18 months?

Five signals will resolve the Fractile thesis. First, the Series C announcement in Q1 to Q2 2027 disclosing customer integration milestones. Second, the Nvidia Vera Rubin shipment cadence in H2 2026 setting the benchmark Fractile must beat. Third, the Cerebras post-IPO operating data starting August 2026. Fourth, Groq commercial expansion through 2026 indicating whether the inference workload slot is contested. Fifth, the Anthropic IPO prospectus, expected late September 2026 if the company prices in October, which would publicly confirm or deny the Fractile partnership in its compute commitments disclosures.