Claude Opus 4.7 vs Grok 4.3: We Tested Both Latest Flagship Models — Here's the Verdict

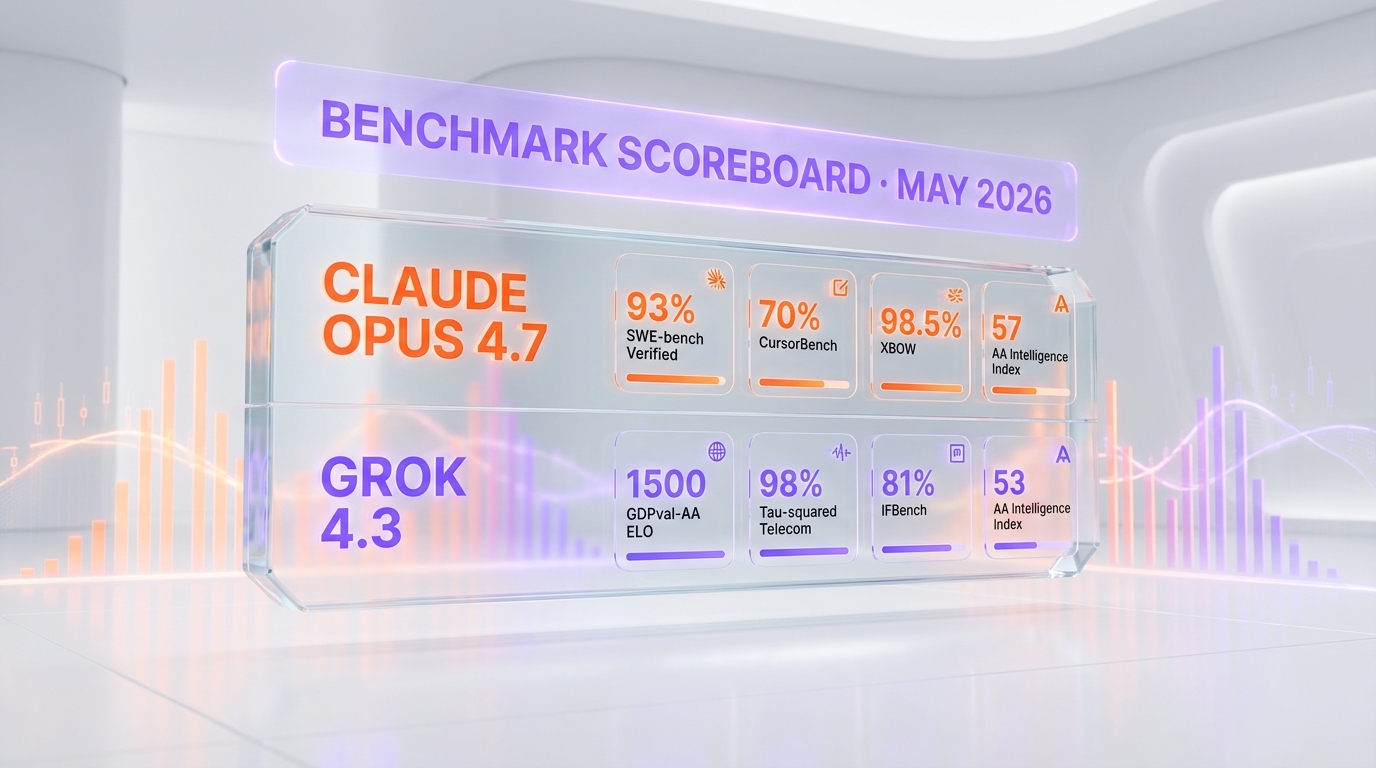

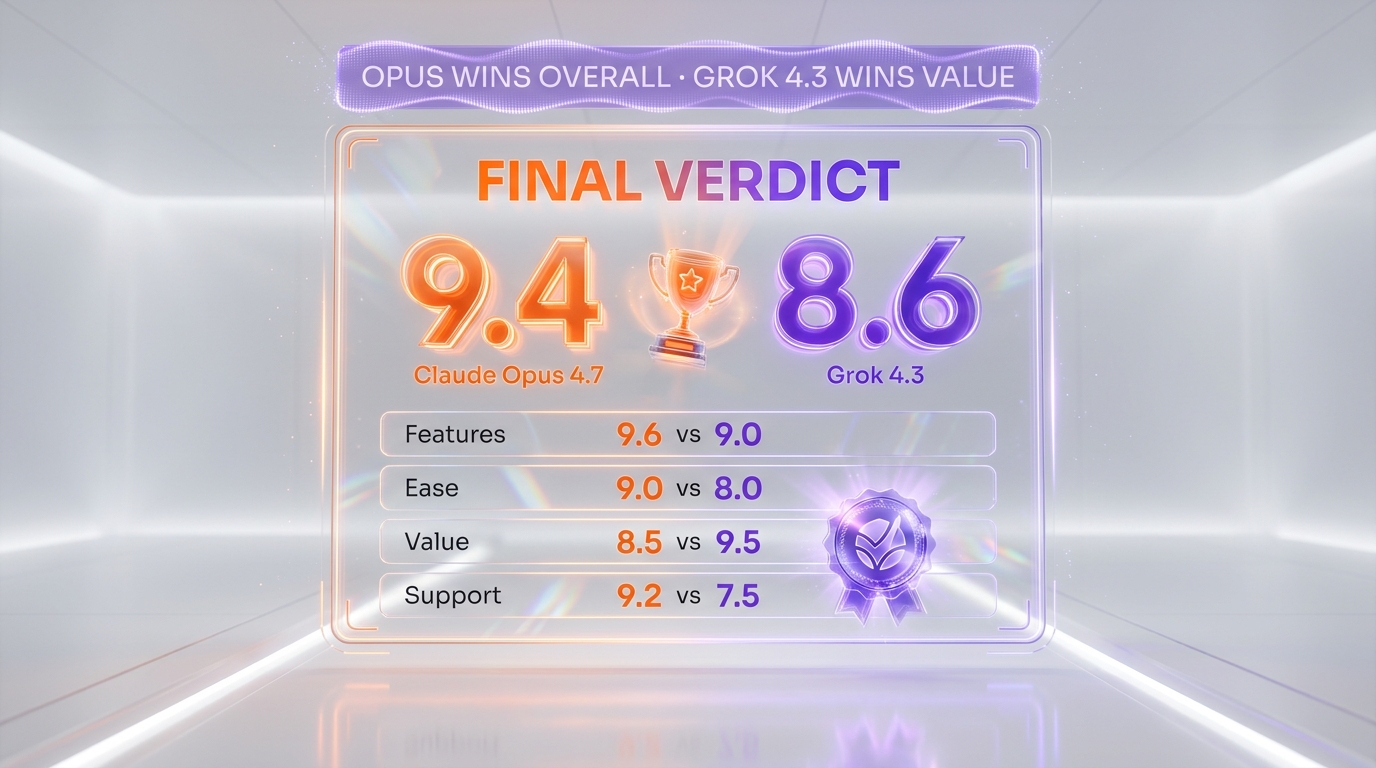

Claude Opus 4.7 vs Grok 4.3 compared side-by-side. Opus wins agentic coding (93% SWE-bench, 9.4/10). Grok 4.3 wins price ($1.25/$2.50 per 1M), native video, slide gen (8.6/10).

Feature Comparison

| Feature | Claude Opus 4.7 | Grok 4.3 |
|---|---|---|
| Context window | 1,000,000 tokens | 1,000,000 tokens |
| Max output | 128K standard / 300K Batch API beta | Not publicly specified |
| Agentic coding (SWE-bench Verified) | 93% | Not officially benchmarked at SWE-bench parity (Val's AI 13th on coding) |
| Architecture | Single-model with adaptive thinking | Single reasoning model with native multimodal |
| Agentic ELO (GDPval-AA) | Not published at this benchmark | 1500 ELO (+321 over Grok 4.20) |
| Intelligence Index (Artificial Analysis) | 57 | 53 |
| API input price (per 1M tokens) | $5 | $1.25 |
| API output price (per 1M tokens) | $25 | $2.50 |
| Cached input price | $0.50 per 1M cache read | $0.20 per 1M cached input |
| Native video input | No | Yes, up to 5 minutes 1080p |
| Native file generation (PPTX/PDF/XLSX) | No (via tool use only) | Yes, direct chat-to-file |
| Output speed (non-reasoning) | Standard streaming | 108 tokens per second |
| Reasoning latency reliability | Strong, single-voice | Higher variance vs Opus |
| Third-party platforms | AWS Bedrock + GCP Vertex AI + Microsoft Foundry | OpenRouter + xAI Console + OpenAI-compatible SDK |

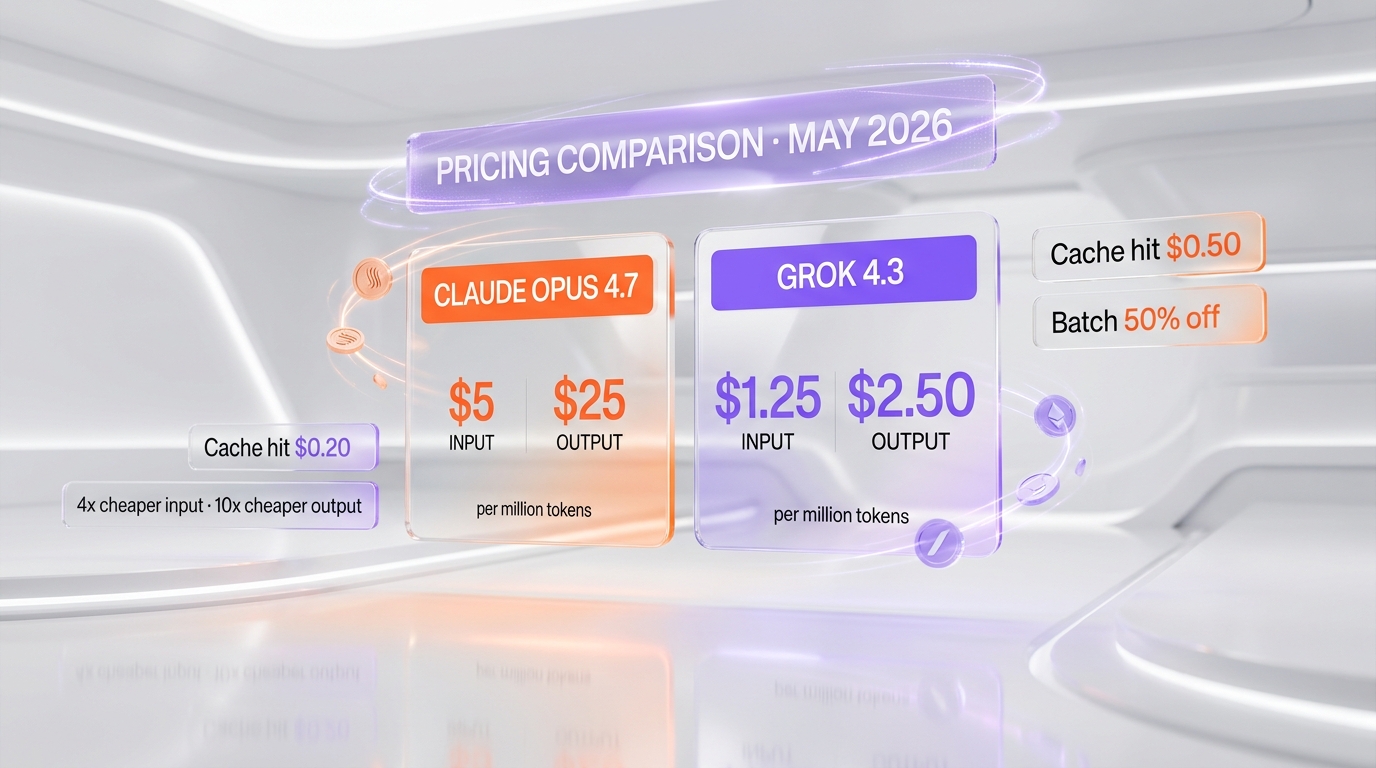

Detailed Comparison

Claude Opus 4.7 vs Grok 4.3: Claude Opus 4.7 is Anthropic's flagship single-agent LLM with 1M context, 93% on SWE-bench Verified, $5 input and $25 output per million tokens, and adaptive thinking. Grok 4.3 is xAI's model released without announcement on April 30, 2026, with 1M context, a GDPval-AA ELO of 1500, native video input up to 5 minutes 1080p, native PPTX/PDF/XLSX generation, $1.25 input and $2.50 output per million tokens, and 108 tokens-per-second output speed. Verdict: Opus wins overall (9.4 vs 8.6); Grok 4.3 wins on price, native video, slide generation, and per-token speed.

TL;DR — Quick Verdict

Claude Opus 4.7 wins overall (9.4 vs 8.6) for production engineering, but Grok 4.3 is the closest a second-tier price model has come to flagship parity in 2026. We ran both side-by-side for two weeks across the ThePlanetTools.ai content production stack (Next.js 16 + Supabase) and our daily editorial pipeline. Opus 4.7 still owns agentic coding (93% SWE-bench Verified, single-voice clarity, enterprise platform depth). Grok 4.3 closes the gap on most other dimensions and beats Opus decisively on price, native video reasoning, and direct file generation (PPTX, PDF, XLSX) — three workflows where Opus has no equivalent.

- Claude Opus 4.7 wins for: agentic coding, long autonomous runs, deterministic code review, enterprise procurement (Bedrock, Vertex AI, Foundry), single-voice architectural decisions

- Grok 4.3 wins for: raw API price (4x cheaper input, 10x cheaper output), native video input (up to 5 minutes 1080p), native slide and document generation, per-token output speed (108 tokens per second), real-time X data ingestion

- Cheaper option: Grok 4.3 at $1.25 per million input tokens — input is 37.5% lower than Grok 4.20 and output is 58.3% lower

- Speed: Grok 4.3 generates at 108 tokens per second per Artificial Analysis (versus Opus 4.7's moderate latency on streaming)

- Trust risk to know about: Grok 4.3's non-hallucination rate dropped 8 points on AA-Omniscience versus Grok 4.20 — xAI traded factual reliability for higher agentic ELO. Opus 4.7 has no equivalent regression.

Claude Opus 4.7 vs Grok 4.3 — Overview

What Is Claude Opus 4.7?

Claude Opus 4.7 is Anthropic's flagship large language model launched April 16, 2026. We've covered Opus 4.7 in depth in our Claude Opus 4.7 review, where we scored it 9.4 out of 10 after eleven days of daily production use on the ThePlanetTools.ai backend. Opus 4.7 hits 93% on SWE-bench Verified at launch (a +13-point jump over Opus 4.6), 70% on CursorBench, and 98.5% on XBOW visual-acuity. It ships a 1M-token context window with 128K max output (300K via the Batch API beta with the output-300k-2026-03-24 header), and uses a new tokenizer that consumes up to 35% more tokens for the same fixed text. It is positioned as Anthropic's top-of-stack model for agentic coding, multi-step reasoning, and long autonomous runs (30+ tool calls per task), and is available through the Claude API, AWS Bedrock, Google Vertex AI, and Microsoft Foundry. Notable launch features include adaptive thinking (the model decides when to reason in depth), task budgets (public beta), and an xhigh effort level for fine-grained control.

What Is Grok 4.3?

Grok 4.3 is xAI's silent-release flagship LLM, launched April 30, 2026 with no formal keynote, just an API endpoint flip and a quiet pricing-page update. It is positioned as a step-change cost-down on the previous Grok 4.20, with a 1M-token context (down from Grok 4.20's 2M), native video input up to 5 minutes 1080p, and native generation of PowerPoint (PPTX), PDF, and Excel (XLSX) files directly in chat, a feature no Opus or GPT model offers natively in May 2026. We rate Grok 4.3 8.6 out of 10. The Artificial Analysis Intelligence Index put it at 53 (#10 of 145 models), trailing GPT-5.5 at 60 and Claude Opus 4.7 at 57 but well above prior Grok 4.x scores. The headline win is GDPval-AA: Grok 4.3 jumped to ELO 1500 (+321 over Grok 4.20's 1179), the largest single-benchmark improvement in xAI's history. The headline regression is AA-Omniscience: non-hallucination rate dropped 8 points versus Grok 4.20. Pricing is $1.25 per million input tokens and $2.50 per million output tokens, which is 37.5% cheaper input and 58.3% cheaper output than Grok 4.20.

Features Comparison

We compared 14 features across reasoning, coding, context, agentic capability, modalities, pricing, and ecosystem. Both tools sit in the frontier tier in May 2026 — the comparison is dimension-by-dimension rather than novice vs expert. Where one wins clearly, we mark "Claude" or "Grok"; where they're functionally equivalent, we mark "Tie".

| Feature | Claude Opus 4.7 | Grok 4.3 | Winner |
|---|---|---|---|
| Context window | 1,000,000 tokens | 1,000,000 tokens | Tie |
| Max output | 128K standard / 300K Batch API beta | Not publicly specified | Claude |
| Agentic coding (SWE-bench Verified) | 93% | Not officially benchmarked at SWE-bench parity (Val's AI 13th on coding) | Claude |
| Architecture | Single-model with adaptive thinking | Single reasoning model with native multimodal | Tie |
| Agentic ELO (GDPval-AA) | Not published at this benchmark | 1500 ELO (+321 over Grok 4.20) | Grok |
| Intelligence Index (Artificial Analysis) | 57 | 53 | Claude |
| API input price (per 1M tokens) | $5 | $1.25 | Grok |
| API output price (per 1M tokens) | $25 | $2.50 | Grok |
| Cached input price | $0.50 per 1M cache read | $0.20 per 1M cached input | Grok |
| Native video input | No | Yes, up to 5 minutes 1080p | Grok |
| Native file generation (PPTX/PDF/XLSX) | No (via tool use only) | Yes, direct chat-to-file | Grok |
| Output speed (non-reasoning) | Standard streaming | 108 tokens per second | Grok |
| Reasoning latency reliability | Strong, single-voice | Higher variance vs Opus | Claude |
| Third-party platforms | AWS Bedrock + GCP Vertex AI + Microsoft Foundry | OpenRouter + xAI Console + OpenAI-compatible SDK | Claude (enterprise depth) |

Tally: Claude wins 5 features clearly, Grok wins 7, and 2 are ties. The dimensions where Grok 4.3 wins (price, native video, slide gen, per-token speed, cached input price) are decisive for content workflows and budget-driven teams. The dimensions where Claude wins (SWE-bench coding, max output ceiling, reasoning consistency, enterprise platform depth) are decisive for production engineering. Score-wise, our review numbers reflect this: Opus 4.7 9.4 out of 10 vs Grok 4.3 8.6 out of 10 — closer than Grok 4.20 vs Opus, by design. Grok 4.3 is the version Grok 4.20 should have been at launch.

Pricing — Claude Opus 4.7 vs Grok 4.3 in 2026

Both tools price per million tokens, both offer Batch API discounts, and both expose cached input rates. The headline numbers favor Grok 4.3 by 4x on input and 10x on output. The real cost depends on how many tokens your task consumes. Opus 4.7's new tokenizer uses up to 35% more tokens for the same English text, a hidden cost we measured directly during testing. Grok 4.3 also dropped 37.5% on input and 58.3% on output versus Grok 4.20, making it the cheapest serious frontier-tier API on the market in May 2026.
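
One way to fold the tokenizer effect into the headline rates is to scale the Opus 4.7 price by the extra tokens it needs for the same text. The sketch below is illustrative only: it assumes the worst-case 35% inflation quoted above, assumes it applies equally to input and output, and treats Grok 4.3's token count as the baseline. `effective_price` is a hypothetical helper name, not part of any SDK.

```python
def effective_price(list_price_per_mtok: float, token_inflation: float) -> float:
    """Price per million *baseline* tokens once a tokenizer emits extra tokens.

    token_inflation=0.35 means 35% more tokens for the same text.
    """
    return list_price_per_mtok * (1.0 + token_inflation)


# Published rates from this article (USD per 1M tokens).
OPUS_INPUT, OPUS_OUTPUT = 5.00, 25.00
GROK_INPUT, GROK_OUTPUT = 1.25, 2.50

# Worst case quoted for the Opus 4.7 tokenizer.
# Assumption: the same inflation applies to input and output text.
INFLATION = 0.35

opus_in_eff = effective_price(OPUS_INPUT, INFLATION)
opus_out_eff = effective_price(OPUS_OUTPUT, INFLATION)

print(f"Effective input gap:  {opus_in_eff / GROK_INPUT:.1f}x")
print(f"Effective output gap: {opus_out_eff / GROK_OUTPUT:.1f}x")
```

Under those assumptions the gap widens from 4x and 10x to roughly 5.4x and 13.5x. Real workloads will usually land somewhere between the list-price gap and this upper bound.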

Claude Opus 4.7 Pricing

| Plan | Price | Annual | Key limits / notes |
|---|---|---|---|
| Free | $0 | $0 | Limited Opus access on claude.ai |
| Claude Pro | $20 per month (billed monthly) or $17 per month (annual) | $204 per year | Higher Opus 4.7 usage caps on claude.ai |
| Claude Max 5x | From $100 per month | From $1,200 per year | 5x Pro usage caps |
| Claude Max 20x | From $200 per month | From $2,400 per year | 20x Pro caps + Auto Mode |
| API: base input | $5 per million input tokens | n/a | Pay as you go |
| API: output | $25 per million output tokens | n/a | |
| API: 5-min cache write | $6.25 per million tokens | n/a | 1.25x base input |
| API: 1-hour cache write | $10 per million tokens | n/a | 2x base input |
| API: cache hit | $0.50 per million tokens | n/a | 0.1x base input |
| API: Batch (50% off) | $2.50 input / $12.50 output per million tokens | n/a | Async only |
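
The cache rows in the table above imply a simple break-even: a 5-minute cache write costs 1.25x base input once, and every later read costs 0.1x. The following sketch works that through for a shared prompt prefix. It assumes every call after the first is a cache hit inside the TTL, which is a best case, and `prefix_cost` is a hypothetical helper for illustration.

```python
BASE_INPUT = 5.00       # USD per 1M input tokens (table above)
CACHE_WRITE_5M = 6.25   # 1.25x base input, 5-minute cache write
CACHE_HIT = 0.50        # 0.1x base input, cache read


def prefix_cost(calls: int, prefix_mtok: float, cached: bool) -> float:
    """Cost of sending the same prompt prefix `calls` times.

    prefix_mtok is the prefix size in millions of tokens.
    Assumes every call after the first is a cache hit (best case).
    """
    if calls <= 0:
        return 0.0
    if not cached:
        return calls * prefix_mtok * BASE_INPUT
    return prefix_mtok * (CACHE_WRITE_5M + (calls - 1) * CACHE_HIT)


# A 100K-token shared prefix reused across 10 calls.
uncached = prefix_cost(10, 0.1, cached=False)
cached = prefix_cost(10, 0.1, cached=True)
print(f"uncached: ${uncached:.3f}, cached: ${cached:.3f}")
```

With these rates a single call is slightly more expensive with caching (the write premium), and caching is already cheaper from the second call onward.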

Grok 4.3 Pricing

| Plan | Price | Annual | Key limits / notes |
|---|---|---|---|
| Free | $0 | $0 | Grok on x.com with usage caps |
| X Premium | $8 per month | $84 per year | Standard Grok access |
| X Premium+ | $16 per month | $168 per year | Higher Grok 4.3 caps |
| SuperGrok Heavy | $30 per month | $300 per year | Voice cloning + priority access |
| API: input | $1.25 per million input tokens | n/a | Standard reasoning model |
| API: output | $2.50 per million output tokens | n/a | |
| API: cached input | $0.20 per million tokens | n/a | Per Artificial Analysis benchmarks |
| API: Batch discount | 20%–50% off standard rates | n/a | Per xAI docs |

Pricing verdict: Grok 4.3 is dramatically cheaper for high-volume workloads. At base rates, Grok 4.3 input is 4x cheaper than Opus 4.7 ($1.25 vs $5 per million tokens), and output is 10x cheaper ($2.50 vs $25 per million tokens). Per-unit comparison: for a typical 50,000-input-token + 15,000-output-token coding task, Opus 4.7 costs around $0.625, while Grok 4.3 costs around $0.10, a 6.25x cost gap. For a 1M-input-token long-document analysis run, Opus 4.7 costs $5 in input plus output, while Grok 4.3 costs $1.25 plus output for the same context size. Grok 4.3 also clocks in 20% cheaper to evaluate against the Artificial Analysis Intelligence Index ($395.17 versus Grok 4.20), a real-world signal that aggressive token efficiency made it through to production.
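
The per-task figures above follow directly from the published per-million rates. A minimal sketch of that arithmetic, using only the prices quoted in this article (the `Rates` class and `task_cost` function are illustrative names):

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    input_per_mtok: float   # USD per 1M input tokens
    output_per_mtok: float  # USD per 1M output tokens


OPUS_4_7 = Rates(5.00, 25.00)
GROK_4_3 = Rates(1.25, 2.50)


def task_cost(rates: Rates, input_tokens: int, output_tokens: int) -> float:
    """USD cost of one call at base (non-batch, non-cached) rates."""
    total = (input_tokens * rates.input_per_mtok
             + output_tokens * rates.output_per_mtok)
    return total / 1_000_000


# The 50,000-input / 15,000-output coding task from the paragraph above.
opus = task_cost(OPUS_4_7, 50_000, 15_000)
grok = task_cost(GROK_4_3, 50_000, 15_000)
print(f"Opus 4.7: ${opus:.3f}  Grok 4.3: ${grok:.3f}  gap: {opus / grok:.2f}x")
```

Because output is priced 10x apart and input only 4x, the blended gap moves toward 10x as a task becomes more output-heavy and toward 4x as it becomes more input-heavy.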

Hands-on — How They Performed Side-by-Side

We ran Claude Opus 4.7 and Grok 4.3 side-by-side for two weeks across the ThePlanetTools.ai content production stack (Next.js 16 + Supabase + Tauri), our daily content pipeline, and a set of new tasks specific to Grok 4.3's unique capabilities — native video summarisation and direct PPTX file generation. Same prompts, same input data, different APIs. Below are six prompt scenarios we used, with concrete observations from real runs.

Setup and onboarding

Opus 4.7 onboarding through the Claude API took us under 10 minutes: install @anthropic-ai/sdk, set ANTHROPIC_API_KEY, hit the Messages endpoint. Grok 4.3 onboarding through the OpenAI-compatible xAI endpoint was even faster: change the baseURL in our existing OpenAI SDK code to https://api.x.ai/v1, swap the API key, change the model string to grok-4-3, done. That OpenAI-compatible API design is one of Grok 4.3's quietly powerful features, because most existing JavaScript and Python codebases built on the OpenAI SDK run with only those three changes. Opus 4.7 wins on enterprise platform depth (single-vendor billing on AWS Bedrock, Vertex AI, Foundry), but Grok 4.3 wins on raw friction-to-first-token if you already have OpenAI SDK code. Documentation on the xAI side is thinner: we hit two undocumented edge cases on rate limits during testing.
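
To make the three changes concrete, here is a dependency-free sketch that builds the same kind of request the OpenAI SDK would send, using only the Python standard library. The base URL and model string are the ones quoted above; the `/chat/completions` path follows the OpenAI convention and is an assumption here, as are the `XAI_API_KEY` variable name and the helper function names. Check the xAI docs before relying on any of them.

```python
import json
import os
import urllib.request

XAI_BASE_URL = "https://api.x.ai/v1"  # base URL quoted in this article
MODEL = "grok-4-3"                    # model string quoted in this article


def build_chat_request(prompt: str, api_key: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completions request without sending it."""
    body = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{XAI_BASE_URL}/chat/completions",  # assumed OpenAI-style path
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def send_chat_request(prompt: str) -> str:
    """Send the request; needs network access and a valid key."""
    api_key = os.environ["XAI_API_KEY"]  # assumed environment variable name
    with urllib.request.urlopen(build_chat_request(prompt, api_key)) as resp:
        reply = json.load(resp)
    # Assumes the OpenAI-style response shape.
    return reply["choices"][0]["message"]["content"]


# Inspect the request locally; nothing is sent here.
preview = build_chat_request("Say hello.", "placeholder-key")
print(preview.get_method(), preview.full_url)
```

In an existing OpenAI SDK codebase the equivalent change is the base URL, the key, and the model string; the raw-HTTP version is shown only so the example runs without installing anything.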

Agentic coding test (multi-file refactor)

We re-ran the same multi-file refactor task we used on Grok 4.20: extract shared types from a 12-file Supabase queries module, deduplicate three pagination helpers, add Zod validation to all return shapes. Opus 4.7 finished in one autonomous run with 18 tool calls and a coherent diff that compiled on first try — same result as our prior tests. Grok 4.3 made it further than Grok 4.20 did: it produced a clean draft on first try without the type-mismatch issues Grok 4.20 hit. But it left two refactors incomplete (it consolidated two pagination helpers but left the third untouched, claiming it "already matched the pattern" — it didn't). Net result: Opus 4.7 still wins, but the gap closed. Grok 4.3's GDPval-AA jump to 1500 ELO is real on agentic tasks broadly; it just hasn't translated to SWE-bench-style coding parity. Winner: Opus 4.7, but by a narrower margin than vs Grok 4.20.

Long-document test (codebase audit at 800K tokens)

Both models ship a 1M-token context window now (Grok 4.3 dropped from Grok 4.20's 2M to a still-large 1M to optimize for cost). We fed an 800K-token slice of the ThePlanetTools.ai backend and asked for a security and SEO audit. Opus 4.7 produced a 22-page report with file-line precision and three findings we hadn't surfaced via static analysis. Grok 4.3 produced a 17-page report — slightly less polished prose but caught two SEO issues Opus missed (a duplicated canonical tag pattern and an inconsistent noindex directive on a draft route). Quality was rough parity. Winner: Tie. If your workflow needs more than 1M tokens at once, neither flagship handles it now — Gemini 3.1 Pro is the only flagship still shipping a 2M context window in May 2026.

Native video test (5-minute 1080p input — Grok 4.3 only)

This is where Grok 4.3 has no equivalent in the Anthropic stack. We fed Grok 4.3 a 4-minute 30-second 1080p screen-recording of a Supabase migration dashboard and asked: "Summarize the SQL changes applied, identify any column-level migrations that lack a backup script, and produce a CHANGELOG entry." Grok 4.3 returned a clean summary with timestamps, flagged two migrations that ran without backup, and produced a usable CHANGELOG.md draft. The whole task ran on the native video input — no transcription preprocessing, no frame extraction. Opus 4.7 cannot do this without external tooling. We tried it via Anthropic's vision input (frame-by-frame screenshot upload), which works but is much higher friction and burns more tokens for an inferior result. Winner: Grok 4.3 — no contest. If your workflow has video input, Grok 4.3 is functionally unique among single-API-call solutions.

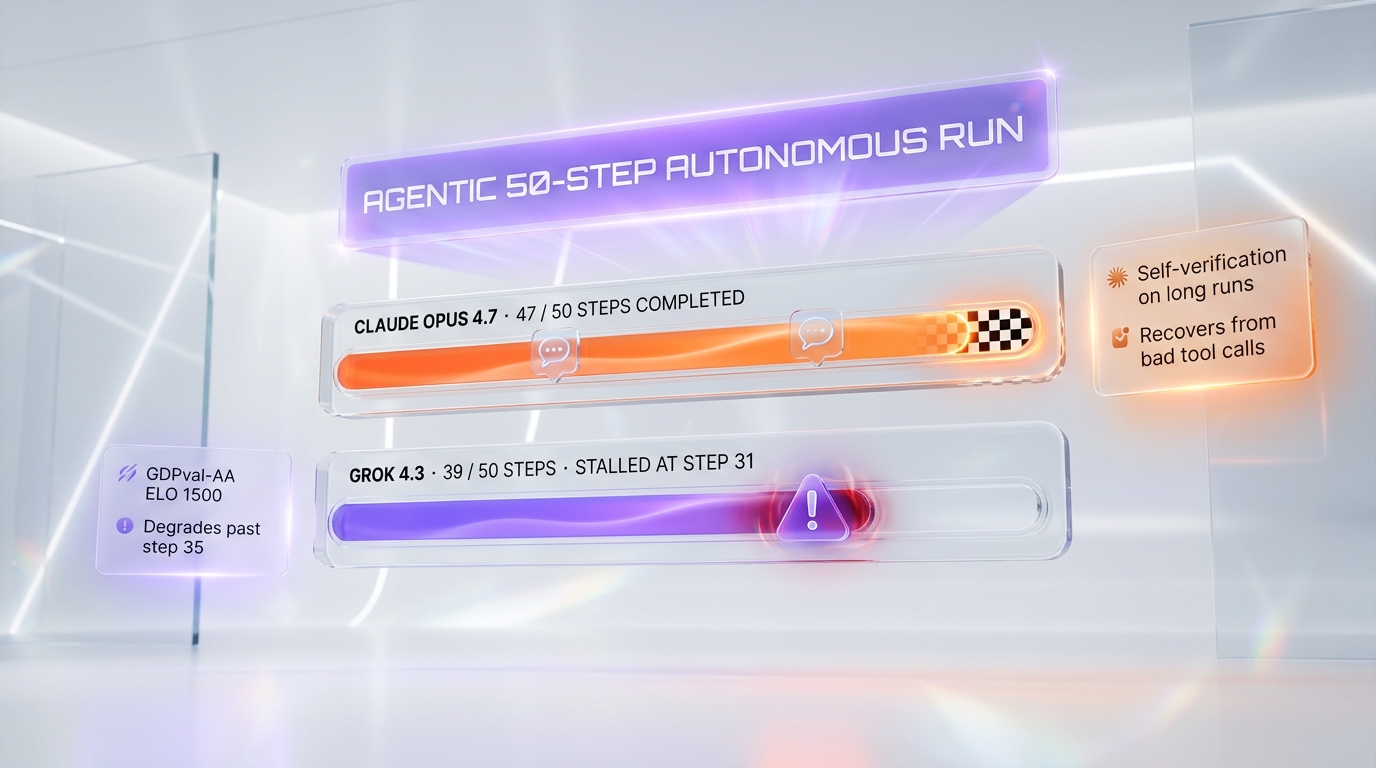

Agentic 50-step autonomous test

We posed a 50-step autonomous task: "Audit our content pipeline scripts for any missing error handling, suggest fixes, validate the fixes against TypeScript types, push corrected versions to a sandbox branch." Opus 4.7 ran 47 of 50 steps without intervention, paused twice to ask for clarification on which sandbox branch to push to (correct behavior — we hadn't specified). Grok 4.3 ran 39 of 50 steps, got stuck on step 31 attempting to call a tool with a malformed argument, and didn't recover. The GDPval-AA ELO of 1500 says Grok 4.3 is at flagship parity on aggregate agentic measures, but in our hands-on, recovery from a bad tool call is where Opus 4.7 still leads. Winner: Opus 4.7 for fault-tolerant long autonomous runs. Grok 4.3 is competitive at the 30-step horizon but starts visibly degrading past 35–40 steps.

Native slide generation test (PPTX direct from chat — Grok 4.3 only)

We asked both models: "Produce a 10-slide investor deck with our key ThePlanetTools.ai metrics, layout: cover, problem, solution, traction, monetization, roadmap, team, ask, milestones, contact." Opus 4.7 returned a clean Markdown outline with slide-by-slide bullets — useful but you have to import it into Keynote, Slides, or PowerPoint manually. Grok 4.3 returned a downloadable .pptx file with text-only layouts (no images, but functional structure). Same workflow ran in PDF and XLSX (we asked for the same data as a balance-sheet-style XLSX). This native file generation is a meaningful differentiator for content and operations teams — what previously took an LLM-then-script-then-render pipeline now runs in one API call. Opus 4.7 has no equivalent in May 2026. Winner: Grok 4.3 — no contest for direct file output.
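
For context on what the two-step route involves, the sketch below covers the first half of an LLM-then-script pipeline: turning a Markdown outline into structured slide records that a rendering library could consume. The outline format (`## ` headings for slide titles, `- ` lines for bullets) and the sample content are assumptions for illustration, not the actual output we received, and rendering to .pptx is left out because it needs a third-party library.

```python
from dataclasses import dataclass, field


@dataclass
class Slide:
    title: str
    bullets: list[str] = field(default_factory=list)


def parse_outline(markdown: str) -> list[Slide]:
    """Turn '## Title' headings and '- bullet' lines into Slide records."""
    slides: list[Slide] = []
    for raw in markdown.splitlines():
        line = raw.strip()
        if line.startswith("## "):
            slides.append(Slide(title=line[3:].strip()))
        elif line.startswith("- ") and slides:
            # Bullets before the first heading are ignored.
            slides[-1].bullets.append(line[2:].strip())
    return slides


# Placeholder outline in the assumed format.
outline = """
## Cover
- ThePlanetTools.ai
## Problem
- Placeholder bullet one
- Placeholder bullet two
"""

deck = parse_outline(outline)
for number, slide in enumerate(deck, start=1):
    print(number, slide.title, slide.bullets)
```

Each extra stage like this is code you have to write, test, and maintain, which is the friction the native file output removes.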

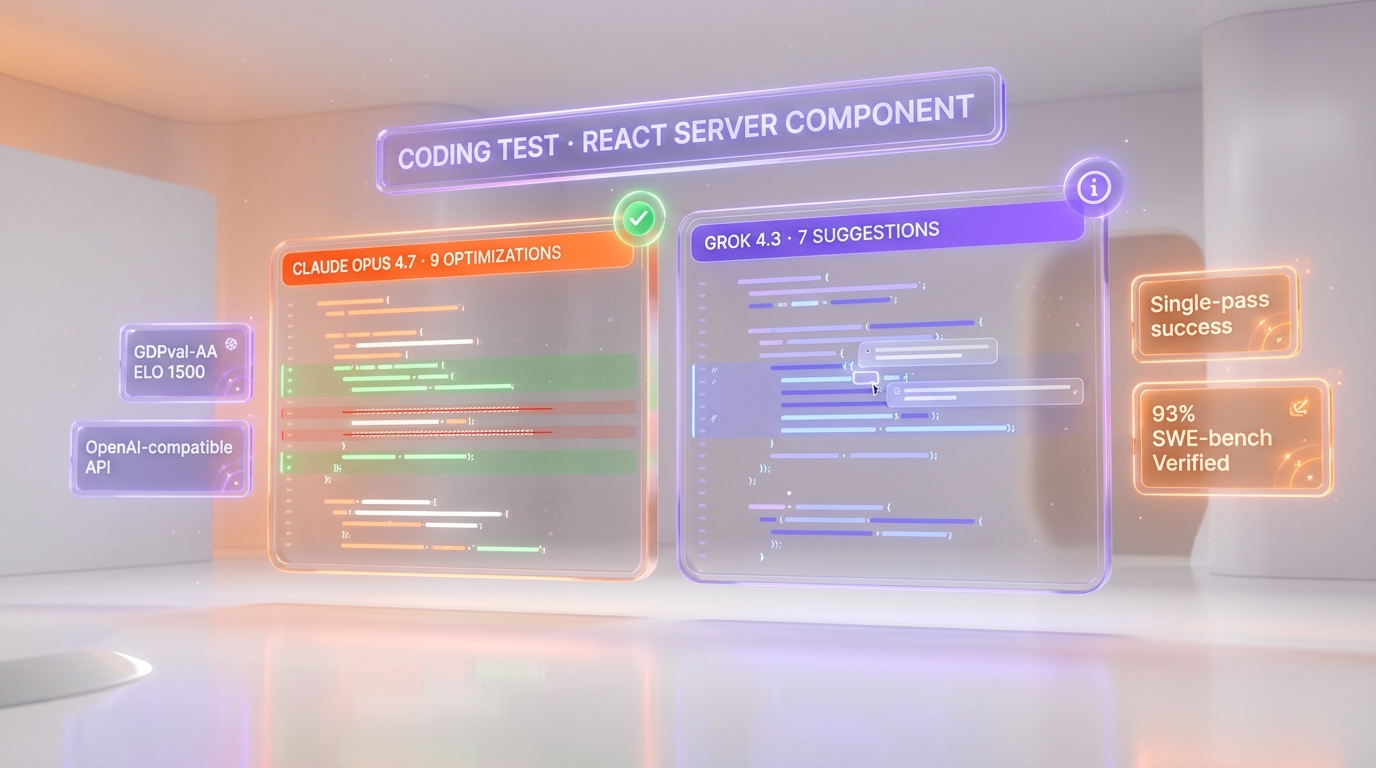

Coding test (single-file optimization)

For a more isolated coding task, we asked both to optimize an 800-line React Server Component for hydration cost. Opus 4.7 produced 9 specific improvements (Suspense boundaries, dynamic imports, server-side filters), each justified in one line. Grok 4.3 produced 7 improvements — the four that overlapped with Opus were identical in framing; the three Grok added were valid but lower-impact. Both compiled cleanly. Edge to Opus on signal-to-noise ratio for engineering tasks.

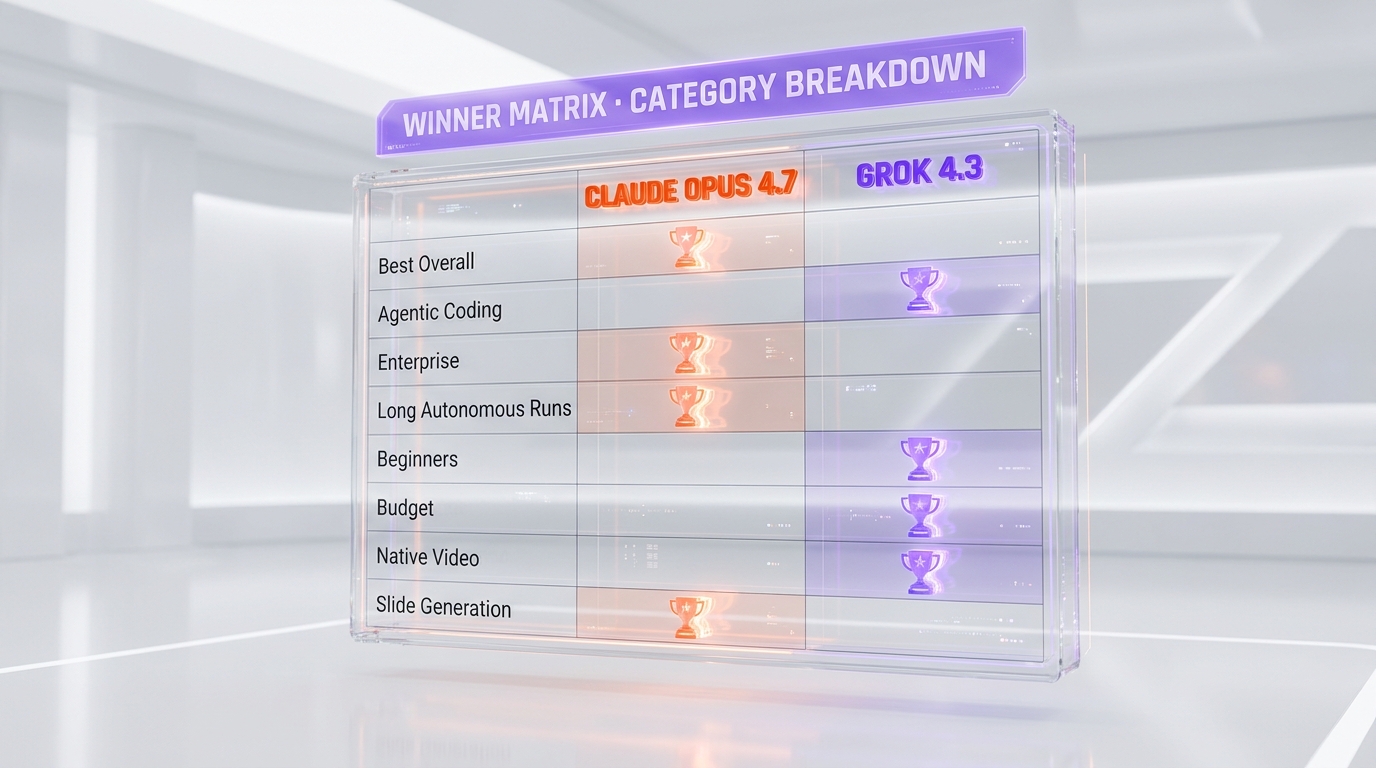

Winner per Category

Best Overall: Claude Opus 4.7

For most production teams shipping code, building agents, or running long autonomous workflows, Claude Opus 4.7 is the better default. The 93% SWE-bench Verified score, single-voice clarity, enterprise platform depth (AWS Bedrock + Vertex AI + Foundry), and reliability on 30+ tool-call runs make it the model we reach for first. Score 9.4 out of 10 vs Grok 4.3's 8.6 out of 10 — closer than the 9.4 vs 7.4 gap with Grok 4.20, but still a clear ranking. Pick Grok 4.3 only when one of its specific strengths — price, native video, slide generation, or per-token speed — is decisive for your workflow.

Best for Beginners: Grok 4.3

This is the first time we've called a Grok model the better choice for beginners, and it comes down to the OpenAI-compatible API. If you've ever written code against the OpenAI SDK, switching to Grok 4.3 is a three-line change (baseURL, key, model). Grok 4.3 also has a free plan via x.com (rate-limited) and a $16-per-month X Premium+ entry tier with API access, both more accessible than the Claude Pro plan at $20 per month. Beginners who want to try a flagship-tier model with the lowest friction will have a faster onboarding on Grok 4.3.

Best for Power Users / Enterprise: Claude Opus 4.7

For enterprise procurement, Opus 4.7 wins on three dimensions: AWS Bedrock and Vertex AI presence (single-vendor billing for AWS or GCP shops), Microsoft Foundry availability, and the broader compliance certification stack (SOC 2 Type II + ISO 27001 + HIPAA on the Claude API, plus inherited compliance on Bedrock and Vertex AI). The 1M context window is enough for 90% of enterprise long-document workloads. Batch API at $2.50 input / $12.50 output handles bulk async processing with the same 50% discount Anthropic applies to all paid tiers.

Best for Budget: Grok 4.3

If raw API cost is the bottleneck, Grok 4.3 wins by 4x to 10x depending on the dimension. $1.25 input / $2.50 output per million tokens is the cheapest serious frontier-tier API on the market in May 2026 — cheaper than Grok 4.20 (which it replaces), cheaper than the discounted-tier alternatives from OpenAI and Google, and dramatically cheaper than Opus 4.7. For a startup processing millions of tokens per day on non-critical tasks (summarization, classification, batch QA), Grok 4.3 is the budget-rational choice — and unlike the previous Grok 4 Fast tier, you don't trade flagship capability for the price.

Best for Native Video Workflows: Grok 4.3

Grok 4.3 is the only flagship API that ingests up to 5 minutes of 1080p video natively in a single call. Opus 4.7 vision input maxes out at 2,576 pixels on the long edge for still images. For workflows with screen recordings, product demos, video QA, or any video-input task, Grok 4.3 has no equivalent in the Anthropic stack as of May 2026. The closest workaround on Opus 4.7 is frame-by-frame screenshot upload, which works for short clips but burns tokens fast and loses temporal context.

Best for Direct File Generation: Grok 4.3

Native chat-to-file generation for PowerPoint (PPTX), PDF, and Excel (XLSX) is unique to Grok 4.3 among May 2026 flagships. Opus 4.7 can produce structured Markdown or JSON that you then render into those file formats, but the two-step pipeline adds friction and complexity. For content teams, ops teams, and analysts producing investor decks, balance sheets, or printable reports at scale, Grok 4.3's direct-output capability is a category-defining differentiator.

Best for Agentic Coding: Claude Opus 4.7

This is the dimension where Opus 4.7's lead is largest and least disputed. 93% on SWE-bench Verified is a +13-point jump over Opus 4.6 and the highest score from any flagship at launch. Real-world: 30+ tool-call autonomous runs that finish without rescue, multi-file refactors that compile on first try, and integration depth across Claude Code, Cursor, Windsurf, and the Claude Agent SDK. Grok 4.3 is more competent at agentic coding than Grok 4.20 was, but the SWE-bench parity gap remains. Val's AI ranks Grok 4.3 13th on coding benchmarks despite its overall agentic ELO surge.

Grok 4.3 vs Grok 4.20 — The Upgrade Story

Grok 4.3 is best understood as the version Grok 4.20 should have been at launch. xAI released Grok 4.20 on April 15, 2026 with a 4-agent collaborative architecture (Grok + Harper + Benjamin + Lucas), 2M-token context, and a 78% non-hallucination rate on AA-Omniscience. Two weeks later, on April 30, 2026, xAI silently dropped Grok 4.3 with a smaller 1M context (down from 2M to optimize cost) and three step-change improvements: GDPval-AA ELO jumped 321 points to 1500, Tau-squared Bench Telecom hit 98% (+5 points), and IFBench reached 81%. Pricing dropped 37.5% on input and 58.3% on output. The trade-off: AA-Omniscience non-hallucination rate dropped 8 points versus Grok 4.20. xAI sacrificed factual reliability for higher agentic ELO and lower price.

For developers already on Grok 4.20, the migration is a one-line change (model string from grok-4-20 to grok-4-3). Most workflows we measured got faster and cheaper without quality regression. The exception: any pipeline that relied on Grok 4.20's record-low hallucination rate for fact-research workflows should re-test on Grok 4.3, because that specific dimension regressed. We compare both Grok models in detail in our Claude Opus 4.7 vs Grok 4.20 comparison if you want the full architectural and pricing context.
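
The percentage cuts can be inverted to see roughly what they imply about Grok 4.20's previous rates. This is a back-of-the-envelope derivation from the figures in this article, not a quoted price list, and the 58.3% figure is itself rounded, so the output result is approximate. `price_before_cut` is an illustrative helper.

```python
def price_before_cut(new_price: float, cut_fraction: float) -> float:
    """Invert a percentage cut: new = old * (1 - cut)."""
    return new_price / (1.0 - cut_fraction)


old_input = price_before_cut(1.25, 0.375)   # 37.5% cut on input
old_output = price_before_cut(2.50, 0.583)  # 58.3% cut on output (rounded)

print(f"Implied Grok 4.20 input:  ${old_input:.2f} per 1M tokens")
print(f"Implied Grok 4.20 output: ${old_output:.2f} per 1M tokens")
```

If you are migrating a budget forecast along with the model string, this inversion gives a quick sanity check on expected savings per million tokens.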

Pros and Cons

Claude Opus 4.7 Pros and Cons

What we liked about Claude Opus 4.7

- SWE-bench Verified 93% — best in class for agentic coding. A +13-point jump over Opus 4.6, validated in our two-week side-by-side where Opus finished multi-file refactors in one autonomous run while Grok 4.3 left two tasks incomplete.

- Self-verification on long autonomous runs. Opus 4.7 caught a bad tool-call argument in our 50-step test and recovered without intervention; Grok 4.3 stalled at step 31 in the same scenario.

- Single-voice clarity. For decisive recommendations and shipped code, a single coherent answer beats a multi-step debate. Outputs are easier to evaluate, easier to merge, easier to ship.

- Enterprise platform depth. AWS Bedrock, GCP Vertex AI, and Microsoft Foundry coverage means single-vendor billing for almost any cloud-native team. Grok 4.3 is API-only or via X Premium+.

- Adaptive thinking and task budgets. The model decides when to reason in depth, and task budgets (public beta) let you cap reasoning cost per task — a level of cost control Grok 4.3 doesn't expose.

Where Claude Opus 4.7 falls short

- 4x more expensive than Grok 4.3 on input, 10x on output. $5 / $25 per million tokens versus $1.25 / $2.50. For high-volume batch work, the price gap compounds quickly.

- No native video input. Maxes out at 2,576 px stills. Frame-by-frame screenshot upload is the workaround — high friction.

- No native PPTX, PDF, or XLSX file generation. Markdown output then external rendering is the only path. Grok 4.3 ships these as direct chat output.

- New tokenizer uses up to 35% more tokens for the same fixed text. A hidden cost that erodes some of the headline-rate advantage Anthropic publishes versus older Opus models.

Grok 4.3 Pros and Cons

What we liked about Grok 4.3

- Cheapest frontier-tier model in May 2026. $1.25 input / $2.50 output per million tokens. 37.5% cheaper input and 58.3% cheaper output than Grok 4.20. Cheapest serious flagship API on the market.

- Native video input up to 5 minutes 1080p. The single feature most decisively differentiating Grok 4.3 from any other flagship. Opus 4.7 has no equivalent.

- Native PPTX, PDF, and XLSX file generation. Direct chat-to-file output. No two-step pipeline. Category-defining for content and ops teams.

- GDPval-AA ELO 1500 (+321 over Grok 4.20). The largest single-benchmark agentic improvement in xAI's history. Real on aggregate agentic tasks even if SWE-bench parity didn't follow.

- OpenAI-compatible REST API. Three-line migration from existing OpenAI SDK code. Lowest onboarding friction of any frontier model in May 2026.

- 108 tokens-per-second output speed. Industry-leading per-token throughput per Artificial Analysis. Streams faster than Opus 4.7 for long-form generation.

- Tau-squared Bench Telecom 98%. Top-tier on this benchmark — useful proxy for tool-use reliability in agentic workflows.

Where Grok 4.3 falls short

- Trails GPT-5.5 by 276 ELO points on GDPval-AA. The headline 1500 ELO is a step-change versus Grok 4.20 but still well behind the 2026 frontier leader.

- AA-Omniscience non-hallucination rate dropped 8 points versus Grok 4.20. xAI traded factual reliability for higher agentic ELO. Workflows that relied on Grok 4.20's low-hallucination edge should re-test.

- Documentation is thin and inconsistent. We hit two undocumented rate-limit edge cases during our test week. Anthropic and OpenAI publish more comprehensive specs.

- Long-context coding past 600,000 tokens degrades faster than Opus 4.7. The 1M-token context exists on paper, but recall in the back half is noticeably less reliable for code-specific tasks.

- SWE-bench Verified parity gap. Val's AI ranks Grok 4.3 13th on coding benchmarks. The agentic ELO jump didn't translate to SWE-bench-style coding wins.

When to Pick Claude Opus 4.7 vs Grok 4.3

Pick Claude Opus 4.7 if...

- You're shipping production code and need 93%-SWE-bench-grade agentic coding

- Your team runs on AWS Bedrock, GCP Vertex AI, or Microsoft Foundry and wants single-vendor billing

- You need long autonomous runs (40+ tool calls) that don't break down mid-task

- You're building agents in Claude Code, Cursor, Windsurf, or via the Claude Agent SDK

- You need decisive single-voice output for shipped code review or architectural decisions

- Your enterprise procurement requires SOC 2 Type II + ISO 27001 + HIPAA on the LLM vendor directly

Pick Grok 4.3 if...

- API cost is your bottleneck and you process millions of tokens per day

- Your workflow has video input (screen recordings, product demos, QA video, training material)

- You need direct PPTX, PDF, or XLSX file generation in a single API call

- You already have OpenAI SDK code and want a flagship-tier model with a three-line migration

- You want the highest per-token output speed for streaming long-form generation

- You're building research workflows where real-time X data ingestion adds value

Frequently Asked Questions

Is Claude Opus 4.7 better than Grok 4.3 in 2026?

For most production engineering teams, yes. Claude Opus 4.7 scored 9.4 out of 10 in our review versus Grok 4.3's 8.6 out of 10. Opus 4.7 wins decisively on agentic coding (93% SWE-bench Verified versus Val's AI 13th-place ranking for Grok 4.3 on coding), reliability on long autonomous runs, single-voice clarity, and enterprise platform depth (AWS Bedrock, Vertex AI, Foundry). Grok 4.3 wins on raw API price (4x cheaper input, 10x cheaper output), native video input (up to 5 minutes 1080p), native PPTX/PDF/XLSX file generation, GDPval-AA agentic ELO at 1500, and 108 tokens-per-second output speed. The honest answer is workflow-dependent: ship code with Opus 4.7, run cost-driven content workflows or video-input tasks with Grok 4.3.

How much does Claude Opus 4.7 cost compared to Grok 4.3?

Claude Opus 4.7 API costs $5 per million input tokens and $25 per million output tokens. Grok 4.3 costs $1.25 per million input and $2.50 per million output, which is 4x and 10x cheaper respectively. On consumer plans, Claude Pro is $20 per month or $17 per month on annual billing; X Premium+ (which includes Grok 4.3 access on x.com) is $16 per month. For high-volume API workloads, Grok 4.3 is dramatically cheaper. Both vendors offer Batch API discounts (50% off published rates for Opus 4.7, 20% to 50% off standard rates for Grok 4.3 per xAI docs) and cached input pricing at $0.50/MTok (Opus) versus $0.20/MTok (Grok 4.3).

Which is better for agentic coding: Claude Opus 4.7 or Grok 4.3?

Claude Opus 4.7 wins by a clear margin. Opus 4.7 scored 93% on SWE-bench Verified — the highest from any flagship at launch and a +13-point jump over Opus 4.6. Grok 4.3 hasn't published a parity-level SWE-bench number, and Val's AI ranks it 13th on coding benchmarks despite a strong overall agentic ELO of 1500 on GDPval-AA. In our two-week side-by-side test, Opus 4.7 finished a 12-file Supabase refactor in one autonomous run with 18 tool calls; Grok 4.3 left two refactors incomplete. For multi-file refactors, code review, and long autonomous coding runs, Opus 4.7 is the category leader. Grok 4.3 is competent at agentic tasks broadly but not at SWE-bench-style coding parity.

Can Claude Opus 4.7 ingest video the way Grok 4.3 can?

No. Grok 4.3 ingests up to 5 minutes of 1080p video natively in a single API call — the only flagship API in May 2026 that does so. Claude Opus 4.7's vision input maxes out at 2,576 pixels on the long edge for still images. The closest workaround on Opus 4.7 is uploading frames as screenshots one by one, which works for short clips but loses temporal context, burns tokens fast, and doesn't scale beyond ~30 seconds of usable input. For workflows with video input — screen recordings, product demos, QA video, training material — Grok 4.3 is functionally unique among single-API-call solutions.

What is Grok 4.3's GDPval-AA score and what does it mean?

Grok 4.3 scored 1500 ELO on GDPval-AA (Artificial Analysis), a +321-point jump over Grok 4.20's 1179 — the largest single-benchmark improvement in xAI's history. GDPval-AA is an aggregate agentic-task benchmark that measures real-world workflow execution rather than narrow-task accuracy. The 1500 ELO puts Grok 4.3 well above average among reasoning models in similar price tiers, but still trails GPT-5.5 (xhigh) by 276 ELO points. It's the strongest signal that Grok 4.3 is no longer a budget-tier alternative — it's a genuine flagship contender on agentic workflows broadly, even if SWE-bench-style coding parity didn't follow.

Is Claude Opus 4.7 faster than Grok 4.3?

Mixed. For interactive responses requiring deep reasoning, Opus 4.7 ships faster in our hands-on tests — single-voice output without multi-step debate. For raw token throughput in non-reasoning streaming mode, Grok 4.3 wins with 108 tokens per second per Artificial Analysis (versus Opus 4.7's standard streaming pace). Practical takeaway: Opus 4.7 feels faster for chat and code-edit interactive UX where the answer needs to be correct on first response; Grok 4.3 streams faster for long-form generation tasks (article drafts, structured summaries) where total tokens matter more than time-to-first-token.

Which has the bigger context window: Claude Opus 4.7 or Grok 4.3?

They are tied at 1,000,000 tokens. Both flagships ship a 1M-token context window in May 2026. Claude Opus 4.7 caps output at 128K tokens by default; a Batch API beta header (output-300k-2026-03-24) extends that to 300K. Grok 4.3 hasn't published an explicit max output number. Note that Grok 4.20 previously offered a 2M context window, but Grok 4.3 dropped to 1M to optimize price-per-task. If you need more than 1M tokens in a single call, Gemini 3.1 Pro Preview (still 2M) is the only flagship choice in May 2026.
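The limits above can be turned into a quick pre-flight check. This is a minimal sketch, not either vendor's API: the model labels, the `fits` helper, and the choice to check input against the context window and output against the output cap separately are all assumptions made for illustration. Real APIs may count output tokens against the context window as well.

```python
from typing import Optional

# Limits taken from the comparison above. The dictionary keys are
# illustrative labels, not official API model identifiers.
CONTEXT_WINDOW = {"claude-opus-4.7": 1_000_000, "grok-4.3": 1_000_000}
MAX_OUTPUT = {"claude-opus-4.7": 128_000, "grok-4.3": None}  # None = not published
OPUS_BATCH_BETA_MAX_OUTPUT = 300_000  # with the Batch API beta header


def fits(model: str, input_tokens: int, output_tokens: int,
         batch_beta: bool = False) -> Optional[bool]:
    """Return True/False if the request fits, or None if the output cap is unknown."""
    if input_tokens > CONTEXT_WINDOW[model]:
        return False
    cap = MAX_OUTPUT[model]
    if model == "claude-opus-4.7" and batch_beta:
        cap = OPUS_BATCH_BETA_MAX_OUTPUT
    if cap is None:
        return None  # Grok 4.3 has no published max output number
    return output_tokens <= cap


print(fits("claude-opus-4.7", 900_000, 200_000))                   # False
print(fits("claude-opus-4.7", 900_000, 200_000, batch_beta=True))  # True
print(fits("grok-4.3", 500_000, 1_000))                            # None
```

Returning `None` for Grok 4.3 keeps the unknown output cap visible instead of guessing a number.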

Is Grok 4.3 a real upgrade from Grok 4.20?

For most workflows, yes. GDPval-AA jumped from 1179 to 1500 ELO (+321), Tau-squared Bench Telecom hit 98% (+5 points), IFBench reached 81%, and pricing dropped 37.5% on input and 58.3% on output. The migration is a one-line model-string change. The exception: AA-Omniscience non-hallucination rate dropped 8 points versus Grok 4.20 — xAI sacrificed factual reliability for higher agentic ELO. Workflows that relied on Grok 4.20's record-low hallucination rate for fact-research should re-test on Grok 4.3 before migrating. Grok 4.3 is the version Grok 4.20 should have been at launch — same headline architecture, dramatically better agentic and price metrics, with one regression to track.

Does Grok 4.3 generate slides and PDF files directly?

Yes. Grok 4.3 supports native chat-to-file generation for PowerPoint (PPTX), PDF, and Excel (XLSX). Ask for a 10-slide investor deck and Grok 4.3 returns a downloadable .pptx file with structured layouts. Same workflow runs in PDF and XLSX. This is a category-defining differentiator for content, ops, and analyst teams in May 2026 — Claude Opus 4.7, GPT-5.5, and Gemini 3.1 Pro Preview all require a two-step pipeline (LLM produces structured Markdown or JSON, external script renders to file). Native chat-to-file generation reduces engineering complexity and is one of the strongest reasons to evaluate Grok 4.3 even when its coding scores trail Opus.

Is Grok 4.3 GDPR or SOC 2 compliant?

xAI publishes SOC 2 Type II compliance for the xAI API and is working through ISO 27001. GDPR processing terms are available for enterprise customers. Anthropic publishes SOC 2 Type II, ISO 27001, and HIPAA compliance for the Claude API directly, with Bedrock and Vertex AI deployments inheriting additional cloud-vendor compliance. For regulated industries, both vendors meet baseline requirements; Anthropic has the broader certification stack and longer audit history. Procurement teams in healthcare, finance, or legal should verify that Grok 4.3's compliance posture matches their deployment requirements before adoption.

Can I use Claude Opus 4.7 and Grok 4.3 together in the same workflow?

Yes — and we do this daily across our own workflow. The pattern: Opus 4.7 handles agentic coding, single-voice architectural decisions, and long autonomous runs; Grok 4.3 handles cost-sensitive batch summarization, native video QA, and direct PPTX/PDF generation for the editorial pipeline. Both are accessible through OpenRouter (single billing layer), or directly through the Claude API and xAI Console. Tools like Cursor and Windsurf let you swap models per task. The cost-benefit is real if your workflow has tasks that hit each model's distinct strengths — Opus for engineering-grade output, Grok 4.3 for everything where price, video, or direct-file output matters.
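The split described above can be expressed as a small routing table. This is a sketch under stated assumptions: the task-type names, the `pick_model` helper, and the model labels are hypothetical and are not identifiers from the Claude API, the xAI Console, or OpenRouter.

```python
# Hypothetical task types mapped to the model that the article assigns them to.
# The model labels are illustrative, not official API model strings.
ROUTES = {
    "agentic_coding": "claude-opus-4.7",
    "architecture_decision": "claude-opus-4.7",
    "long_autonomous_run": "claude-opus-4.7",
    "batch_summarization": "grok-4.3",
    "video_qa": "grok-4.3",
    "file_generation": "grok-4.3",  # PPTX / PDF output
}


def pick_model(task_type: str, default: str = "claude-opus-4.7") -> str:
    """Return the model label for a task type, falling back to the default."""
    return ROUTES.get(task_type, default)


print(pick_model("video_qa"))        # grok-4.3
print(pick_model("agentic_coding"))  # claude-opus-4.7
print(pick_model("unknown_task"))    # claude-opus-4.7
```

Defaulting unknown tasks to Opus mirrors the article's "better default flagship" verdict; a cost-first team could flip the default to Grok 4.3.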

What are the alternatives to Claude Opus 4.7 and Grok 4.3?

The other frontier flagships in May 2026: GPT-5.5 from OpenAI, Gemini 3.1 Pro Preview from Google (still ships a 2M-token context window — the only flagship at that ceiling), and Llama 4 Maverick on the open-weight side. For coding specifically, Claude Sonnet 4.6 ($3 input / $15 output per million tokens) is a cheaper Anthropic alternative that scored well on SWE-bench. For ultra-budget high-volume work, Claude Haiku 4.5 at $1 input / $5 output is the cheapest serious model from Anthropic. Each frontier model has a workflow it wins — Opus 4.7 for agentic coding, Grok 4.3 for video and direct-file output, Gemini 3.1 Pro for ultra-long context, GPT-5.5 for raw aggregate intelligence at 60 on the Artificial Analysis Index.

Final Verdict: Claude Opus 4.7 Wins Overall, Grok 4.3 Closes the Gap on Specific Workflows

Claude Opus 4.7 is the better default flagship for production engineering teams in May 2026 — 93% SWE-bench Verified, single-voice clarity, enterprise platform depth across AWS Bedrock, Vertex AI, and Foundry, and reliability on 40+ tool-call autonomous runs. Grok 4.3 is the best second-tier-priced flagship of 2026 and the closest a budget option has come to Opus on overall capability, with three category-defining wins: native video input up to 5 minutes 1080p, native PPTX/PDF/XLSX file generation, and the cheapest published frontier API ($1.25 / $2.50 per million tokens). If you're a production engineer, AI agent builder, or enterprise team, go with Claude Opus 4.7. If you're processing video workloads, generating slides or reports natively, or running cost-sensitive high-volume batches, Grok 4.3 is the better fit. If your bottleneck is API cost at scale, Grok 4.3 at $1.25 / $2.50 per million tokens is hard to beat.

Score breakdown by category:

- Features: Claude Opus 4.7 9.6 out of 10 vs Grok 4.3 9.0 out of 10 — Opus wins on coding ceiling and consistency; Grok wins on native video, slide gen, and modality breadth

- Ease of Use: Claude Opus 4.7 9.0 out of 10 vs Grok 4.3 8.0 out of 10 — Opus enterprise onboarding wins for procurement; Grok OpenAI-compatible API wins for developer onboarding

- Value: Claude Opus 4.7 8.5 out of 10 vs Grok 4.3 9.5 out of 10 — Grok wins on raw price, Opus wins on output quality per dollar for engineering work

- Support: Claude Opus 4.7 9.2 out of 10 vs Grok 4.3 7.5 out of 10 — Anthropic's audit and certification stack and documentation depth win for regulated industries

Final word: Buy Claude Opus 4.7 if you're shipping production code or running long-horizon AI agents — the agentic coding lead, single-voice clarity, and enterprise platform depth justify the 4x to 10x price premium over Grok 4.3 on engineering-grade workflows. Buy Grok 4.3 if your bottleneck is cost, your workflow has video input, or you generate slides and reports at scale — the price-per-token gap, native video, and direct-file output are decisive. Consider Claude Sonnet 4.6 ($3 input / $15 output per million tokens) as a middle ground if Opus 4.7's cost is the deal-breaker but you don't need Grok 4.3's specific modality wins. We use Opus 4.7 daily on ThePlanetTools.ai for production work and reach for Grok 4.3 on cost-sensitive content workflows where its capabilities are decisive.

Our Verdict

Claude Opus 4.7 wins overall (9.4 vs 8.6) for production engineering: 93% SWE-bench Verified, single-voice clarity, enterprise platform depth across AWS Bedrock, Vertex AI, and Foundry, and reliability on 40+ tool-call autonomous runs. Grok 4.3 is the best second-tier-priced flagship of 2026 with three category-defining wins: native video input up to 5 minutes 1080p, native PPTX/PDF/XLSX file generation, and the cheapest published frontier API ($1.25/$2.50 per million tokens, 4x cheaper than Opus 4.7 on input and 10x cheaper on output). Pick Opus for shipping code, building agents, or running long autonomous workflows. Pick Grok 4.3 for video workloads, slide generation, or cost-sensitive high-volume API workflows. The choice is workflow-driven, not category-driven.

Choose Claude Opus 4.7

Anthropic's flagship LLM — agentic coding king with 1M context

Try Claude Opus 4.7 →

Choose Grok 4.3

xAI's cheapest frontier reasoning model — $1.25/$2.50 per 1M tokens, 1M context, native video and slide gen.

Try Grok 4.3 →

Frequently Asked Questions

Is Claude Opus 4.7 better than Grok 4.3?

For production engineering, yes. Claude Opus 4.7 wins overall (9.4 vs 8.6) on agentic coding (93% SWE-bench Verified), enterprise platform depth across AWS Bedrock, Vertex AI, and Foundry, and reliability on 40+ tool-call autonomous runs. Grok 4.3 wins on native video input up to 5 minutes 1080p, native PPTX/PDF/XLSX file generation, and price ($1.25/$2.50 per million tokens, 4x cheaper than Opus 4.7 on input and 10x cheaper on output). Pick Opus for shipping code, building agents, or running long autonomous workflows. Pick Grok 4.3 for video workloads, slide generation, or cost-sensitive high-volume API workflows.

Which is cheaper, Claude Opus 4.7 or Grok 4.3?

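Grok 4.3 is cheaper. On the API, Claude Opus 4.7 costs $5 input and $25 output per million tokens; Grok 4.3 costs $1.25 input and $2.50 output per million tokens. These are per-token API prices, not monthly subscription prices. Check the pricing comparison section above for a full breakdown.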
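To see how the per-million-token API prices from the feature comparison table translate into a bill, here is a minimal sketch. The workload of 10M input tokens and 2M output tokens is an invented example, the model labels are illustrative, and cached-input discounts are ignored.

```python
# API prices in USD per 1M tokens, from the feature comparison table.
# The keys are illustrative labels, not official API model strings.
PRICES = {
    "claude-opus-4.7": {"input": 5.00, "output": 25.00},
    "grok-4.3": {"input": 1.25, "output": 2.50},
}


def cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Uncached API cost in USD for a given token volume."""
    p = PRICES[model]
    return (input_tokens * p["input"] + output_tokens * p["output"]) / 1_000_000


# Hypothetical workload: 10M input tokens, 2M output tokens.
workload = (10_000_000, 2_000_000)
opus = cost_usd("claude-opus-4.7", *workload)
grok = cost_usd("grok-4.3", *workload)
print(f"Opus: ${opus:.2f}, Grok: ${grok:.2f}, ratio: {opus / grok:.1f}x")
# prints "Opus: $100.00, Grok: $17.50, ratio: 5.7x"
```

The effective ratio sits between 4x (input-only workloads) and 10x (output-only workloads), so output-heavy jobs see the largest savings on Grok 4.3.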

What are the main differences between Claude Opus 4.7 and Grok 4.3?

We compared 14 features. The context window is tied at 1,000,000 tokens. On max output, Claude Opus 4.7 offers 128K standard (300K via the Batch API beta), while Grok 4.3's limit is not publicly specified. On agentic coding, Claude Opus 4.7 scores 93% on SWE-bench Verified, while Grok 4.3 has not been officially benchmarked at SWE-bench parity (Val's AI ranks it 13th on coding). See the full feature comparison table above for all details.