gpt-realtime

OpenAI's flagship speech-to-speech voice model — GA since August 2025, 20 percent cheaper than the gpt-4o-realtime preview, with native SIP, MCP, image input.

Quick Summary

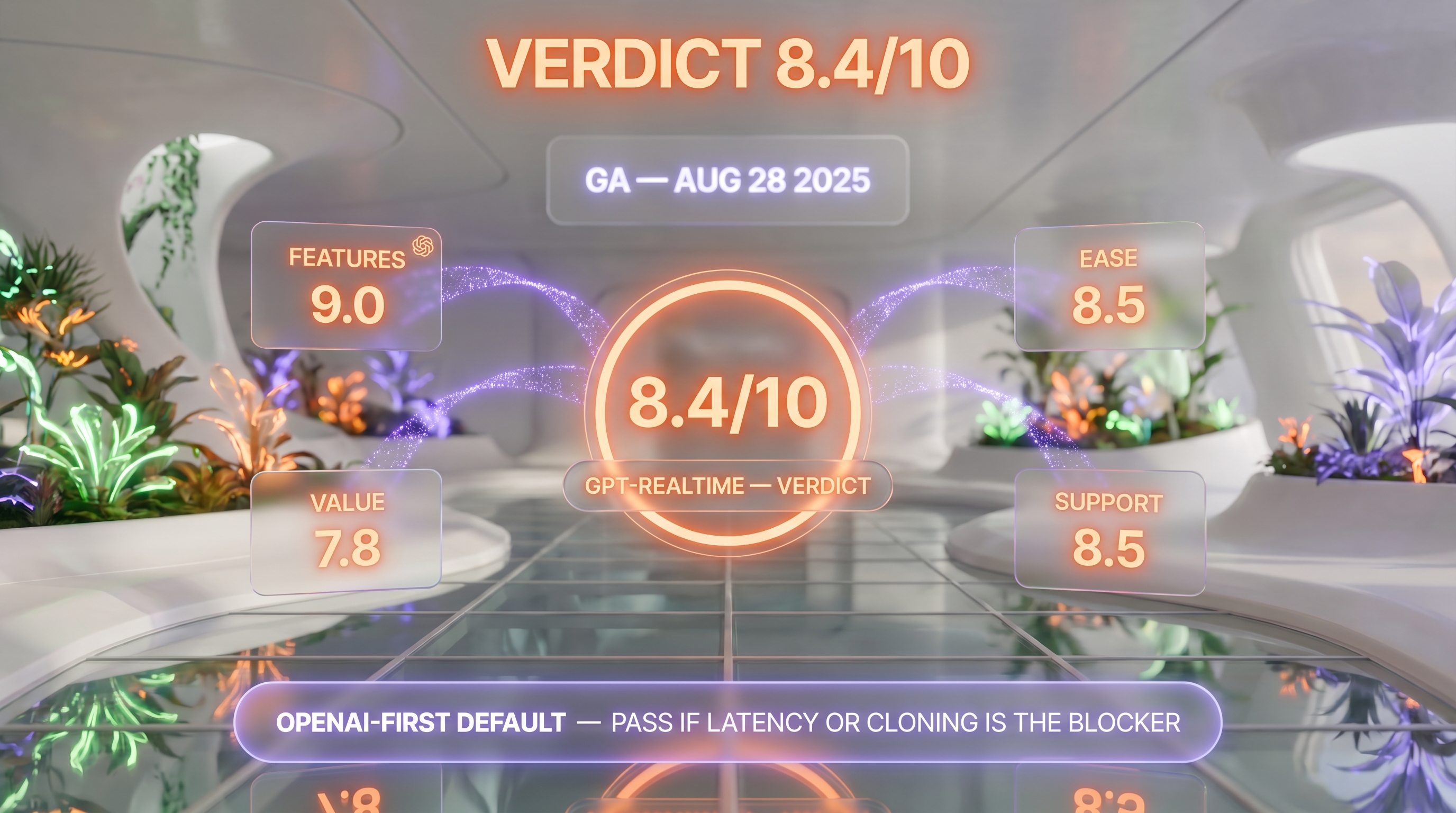

gpt-realtime is OpenAI's production speech-to-speech voice model, GA since August 28, 2025 (renamed from GPT-4o Realtime Voice). Audio: $32 per 1M input, $64 per 1M output, $0.40 per 1M cached. Text $4 per 1M input, $16 output. Score 8.4/10.

gpt-realtime is OpenAI's production speech-to-speech voice model, generally available since August 28, 2025 and rebranded from "GPT-4o Realtime Voice" to drop the 4o suffix. It powers conversational voice agents end-to-end without the speech-to-text plus LLM plus text-to-speech pipeline. Pricing: $32 per 1M audio input tokens, $64 per 1M audio output tokens, $0.40 per 1M cached input tokens, $4 text input and $16 text output per 1M. Free tier none, pay per use only. Score: 8.4/10.

TL;DR — Our Verdict

Score: 8.4/10. gpt-realtime is the most production-ready speech-to-speech model OpenAI has shipped, with native support for SIP phone calling, MCP servers, image input, and asynchronous function calling. It is the safest default if you are building a voice agent on the OpenAI ecosystem and need GA stability rather than experimentation. It is not the cheapest option — burn rate at $64 per 1M audio output tokens climbs fast on long calls — and Cartesia or ElevenLabs Conversational AI may suit you better for ultra-low-latency or voice-cloning-first workloads.

- Speech-to-speech in a single model — no STT plus LLM plus TTS pipeline to maintain

- 20 percent cheaper than the previous gpt-4o-realtime-preview, and cached input is now $0.40 per 1M tokens

- Production features — SIP phone calling, remote MCP servers, image inputs, async function calling, EU data residency

- Audio output at $64 per 1M tokens means a 30-minute call burns real money — model still expensive at scale

- Documented loop and language-identification bugs reported on the OpenAI Developer Community forum

What Is gpt-realtime?

gpt-realtime is OpenAI's speech-to-speech voice model, exposed via the Realtime API. It accepts audio (and optionally text or images) as input and produces audio (and optionally text) as output — without the traditional pipeline of a separate speech-to-text model, an LLM, and a text-to-speech model stitched together. The model preserves tone, emotion, and rhythm because it never converts speech to text in the middle.

The exact model ID is gpt-realtime, with the dated snapshot gpt-realtime-2025-08-28. The branding shift matters: OpenAI publicly retired the "GPT-4o Realtime Voice" naming when the model graduated from preview to general availability on August 28, 2025. The 4o suffix is gone — internal docs, billing dashboards, and SDKs now say gpt-realtime. We mention this because anyone searching for "GPT-4o realtime API" in 2026 lands on the same product, just under a new identifier.

OpenAI built gpt-realtime in close collaboration with enterprise customers — notably T-Mobile, Zillow, and Oscar Health, all named in the launch post — to handle real-world voice agent workloads: customer support, scheduling assistants, in-app voice tutors, and education tools. The Realtime API supports three transports: WebRTC for browser-based voice apps, WebSocket for server-side integrations, and SIP for traditional phone networks. SIP is the production-ready piece: you can route a real phone call straight into a gpt-realtime session, which is what carriers and call-center platforms now do.

Key Features

Native speech-to-speech architecture

The largest design decision: gpt-realtime processes audio end-to-end. There is no intermediate transcript that the LLM reads and then re-vocalizes. This cuts latency by hundreds of milliseconds on a typical exchange and preserves prosodic cues — laughter, hesitation, emphasis — that traditional pipelines flatten. OpenAI's published benchmark shows audio reasoning rising from 65.6% (4o-realtime-preview) to 82.8% on Big Bench Audio, and instruction-following jumping from 20.6% to 30.5% on MultiChallenge audio.

Voices — Marin, Cedar, plus six legacy

OpenAI shipped two new voices exclusive to gpt-realtime: Marin and Cedar. The launch post calls them "the most significant improvements to natural-sounding speech" and the docs explicitly recommend them for production assistants. The six legacy voices (alloy, ash, ballad, coral, echo, sage, shimmer, verse) remain available on older snapshots. A known issue (openai-agents-python issue #1746) documents Marin and Cedar occasionally ignoring agent instructions on long sessions — a regression OpenAI has acknowledged.

MCP servers, image input, and SIP phone calling

Three production capabilities arrived with the GA announcement on August 28, 2025:

- Remote MCP server support. You can hand a gpt-realtime session a Model Context Protocol server URL and the model will call its tools mid-conversation. Best when paired with async function calling so the model keeps talking while waiting for slow tool results.

- Image input. The model accepts inline images during a session — useful for "here's a screenshot of my dashboard, walk me through it" support flows.

- SIP phone calling. Direct integration with the public phone network. Carriers and call-center platforms can route a real customer call into a gpt-realtime session without a separate WebRTC bridge.

Asynchronous function calling

The previous Realtime API blocked the conversation while a tool call resolved. gpt-realtime introduces async function calling: the model continues talking — "let me check that for you, one moment" — while a long-running tool call executes in the background. No additional developer code changes required, per OpenAI's docs.

Context window and session length

The context window is 32,000 tokens with a 4,096-token maximum response. Maximum input context is 28,672 tokens, and session instructions plus tools cap at 16,384 tokens. Maximum session length is 60 minutes, doubled from the previous 30-minute cap on gpt-4o-realtime-preview. For longer interactions you orchestrate multiple sessions and pass context manually.

Interruption handling and VAD

When voice activity detection (VAD) is enabled, the Realtime API detects when a user starts speaking, cancels the model's current response, and starts a new one. This is the table-stakes feature for any voice agent — without it you get a model that talks over the user.

Language coverage

gpt-realtime supports the same broad language set as the GPT-4-class models — English, French, Spanish, German, Italian, Portuguese, Dutch, Polish, Japanese, Korean, Mandarin, Arabic, and dozens more. Heavy accents on input audio remain a known weakness: developers report the model occasionally misidentifies the speaker's language on the first turn, particularly with accented English from non-native speakers.

EU data residency

EU data residency is supported for organizations that need it. This was added at GA and matters for European deployments under GDPR Article 28 obligations.

gpt-realtime Pricing in 2026

gpt-realtime is pay-per-use only — there is no free tier, no monthly subscription, and no included quota. You pay per 1M tokens consumed, with separate rates for text and audio. We verified these rates on April 27, 2026 from OpenAI's official pricing page.

| Modality | Input | Cached input | Output |

|---|---|---|---|

| Audio | $32.00 per 1M tokens | $0.40 per 1M tokens | $64.00 per 1M tokens |

| Text | $4.00 per 1M tokens | $0.40 per 1M tokens | $16.00 per 1M tokens |

gpt-realtime-mini variant. OpenAI also lists gpt-realtime-mini on the API pricing page at $10 per 1M audio input tokens, $20 per 1M audio output tokens, and $0.30 per 1M cached audio input tokens — roughly a third of the headline gpt-realtime rate. Pick the mini variant for high-volume voice traffic where the full model's reasoning ceiling is unnecessary; pick the headline gpt-realtime when you need the deeper feature set documented above (MCP, image input, async function calling).

Two anchors to make the math intuitive. A typical voice agent session burns roughly 800 audio input tokens and 1,200 audio output tokens per minute of conversation. A 10-minute customer-support call therefore costs about $0.26 in input plus $0.77 in output — call it roughly $1 per 10-minute call at list prices, before caching. Cached input drops audio input by 98.75 percent (from $32 to $0.40 per 1M tokens), which matters if you reuse long system prompts across sessions — typical for a single voice agent product.

OpenAI dropped the price 20 percent versus gpt-4o-realtime-preview at GA, and the cached input rate is the headline win for production teams running thousands of calls per day. There is no enterprise-tier discount published — volume discounts are negotiated directly with OpenAI's sales team.

Best for: Production voice agents on the OpenAI ecosystem. Burn at $64 per 1M audio output tokens means a 60-minute support call can cost $5–$8, so this is not the cheapest option for high-volume retail voice. For latency-bound or voice-cloning-first workloads, Cartesia (Sonic 3 at 90ms time-to-first-audio) or ElevenLabs Conversational AI are competitive. We surface why in the Alternatives section.

Our Methodology for This Review

We have not run gpt-realtime in production at the cockpit. ThePlanetTools.ai runs on text generation models — Claude Opus 4.7 for content, Gemini 3.1 Pro for backup, Nano Banana Pro for image generation — and our voice work to date has been with ElevenLabs (TTS) and Speechma (research). We do operate paid OpenAI keys daily for the ChatGPT API, so we know the ChatGPT billing surface, the SDK shape, and how OpenAI ships dated snapshots — but our hands-on time on the Realtime API specifically is limited to a few exploratory sessions in early 2026.

This review compiles the OpenAI launch post (August 28, 2025), the Realtime API documentation at developers.openai.com (last verified April 27, 2026), the official pricing page (verified same date), the OpenAI Developer Community forum announcement thread (over 26 pages of developer feedback as of April 2026), Reddit r/OpenAI sentiment threads, and the openai-agents-python GitHub issue tracker (notably issue #1746 on Marin/Cedar instruction-following regressions). External community ratings on G2, Trustpilot, and Capterra for gpt-realtime specifically were not available — gpt-realtime is an API endpoint, not a standalone SaaS product, so review aggregator pages don't exist for it. OpenAI's broader portfolio scores 4.7 out of 5 on G2 across 2,293 reviews, but those cover ChatGPT and the wider product surface.

Our score reflects feature completeness against the gpt-4o-realtime-preview baseline, the GA milestone (production stability matters), the price reduction (real-world cost impact), and the documented limitations weighted against community consensus from the OpenAI Developer Forum and Reddit.

Pros and Cons After Research

What we liked

- True speech-to-speech. No STT plus LLM plus TTS pipeline. The model preserves tone, emotion, and rhythm, which translates to noticeably more natural-feeling agent interactions on the demos OpenAI publishes and on developer videos we reviewed.

- 20 percent cheaper than the preview model. Direct price reduction at GA, plus a 98.75 percent discount on cached input ($32 → $0.40 per 1M tokens for audio). For teams reusing system prompts, this is meaningful.

- Production features that matter. SIP phone calling, remote MCP servers, image input, async function calling, EU data residency, 60-minute max session length. These are the boxes enterprise voice teams check.

- GA stability. The Realtime API is officially out of beta. Pricing, rate limits, and SDK contracts are stable for production planning.

- Marin and Cedar voices. The two new voices are a tangible quality jump over the legacy six. OpenAI explicitly recommends them for production assistants.

- Three transport modes. WebRTC for browsers, WebSocket for servers, SIP for the public phone network. Most voice platforms only ship one or two of these.

Where it falls short

- Audio output remains expensive at scale. $64 per 1M output tokens means a 30-minute call costs $2 to $4 in output alone. High-volume retail voice (thousands of calls per day) makes the bill add up fast.

- Documented bugs. Developers on the OpenAI Forum report agents getting stuck in repetition loops and hitting random API errors, particularly on long sessions. The openai-agents-python issue #1746 documents Marin and Cedar voices ignoring agent instructions in some configurations.

- Language-identification weakness on heavy accents. Multiple developer reports indicate the model occasionally misidentifies the speaker's language on the first turn, particularly with non-native English speakers.

- Vendor lock-in. A closed-source API from a single provider. If OpenAI deprecates the snapshot or changes pricing, your voice agent has nowhere to fall back without a re-architecture.

- 32K context, 60-minute cap. Long-running customer service interactions, multi-turn diagnostic sessions, or extended tutoring conversations need orchestration logic to span sessions.

Real-World Use Cases

Customer support voice agents

OpenAI's launch post names T-Mobile and Oscar Health as production customers using gpt-realtime for support. SIP integration plus async function calling — the agent pulls account data while keeping the user engaged — makes this the canonical use case.

In-app voice assistants and tutors

Education products and consumer apps use gpt-realtime for hands-free interaction. The image-input feature is particularly relevant here — a learner can show a homework problem and have the agent walk them through it.

Phone-tree replacement (IVR modernization)

SIP support means traditional carrier-grade phone trees can be replaced with an LLM-powered voice agent that actually understands what the caller wants. This is the largest enterprise opportunity OpenAI is targeting.

Real-time translation and interpretation

Speech-to-speech with multi-language support enables live interpretation use cases — though the language-identification weakness on heavy accents is a known caveat.

Voice-driven scheduling and personal assistants

Calendar integration via MCP servers turns gpt-realtime into a hands-free scheduling agent. This is what Zillow built with the model, per OpenAI's launch post.

Accessibility tools

Voice-first interfaces for users with vision impairments or motor difficulties. The 60-minute session length and natural prosody are tailored for this.

Sales call coaching and live agent assist

Agent-assist tools that listen to a call in real time and prompt the human agent with suggestions. The image-input feature lets the assist tool consume a CRM screenshot.

gpt-realtime vs ElevenLabs Conversational AI vs Vapi vs Cartesia

gpt-realtime is OpenAI's first-party voice model. The competitive set splits into three tiers: voice agent platforms that orchestrate any model (Vapi), voice cloning and TTS-first platforms with conversational layers on top (ElevenLabs Conversational AI), and ultra-low-latency speech-to-speech alternatives (Cartesia). Here is how they stack up.

| Feature | gpt-realtime | ElevenLabs Conv AI | Vapi | Cartesia |

|---|---|---|---|---|

| Architecture | Native speech-to-speech | STT + LLM + TTS pipeline | Multi-model orchestrator | State Space Models speech-to-speech |

| Time-to-first-audio | ~250 ms typical | ~400-600 ms | Depends on chosen LLM | ~90 ms (Sonic 3) |

| Audio input pricing | $32 per 1M tokens | Bundled in plan | Pass-through model cost | Free tier; $5+ per month plans |

| Audio output pricing | $64 per 1M tokens | From $0.18 per minute | Pass-through | Per-character pricing |

| SIP phone calling | Native | Via Twilio integration | Native | Via integration |

| Voice cloning | No (8 fixed voices) | Yes (instant) | Use any TTS provider | Yes (3-second clone) |

| MCP servers | Yes | No | Workflow tool layer | No |

| Image input | Yes | No | Via underlying LLM | No |

| Score (ours) | 8.4/10 | 9.0/10 | 8.6/10 | 9.0/10 (draft) |

Pick gpt-realtime if: you are already on OpenAI infrastructure, you want a single vendor for the model and the voice layer, and SIP phone calling plus MCP server support matter for your enterprise use case.

Pick ElevenLabs Conversational AI if: voice cloning is non-negotiable, you want a fully managed conversational layer including telephony and analytics, or you need the largest catalog of natural-sounding voices in the industry.

Pick Vapi if: you want to mix and match LLMs (Claude for reasoning, OpenAI for voice, ElevenLabs for cloning) and orchestrate the pipeline through a single SDK and dashboard. Vapi is a platform, not a model.

Pick Cartesia if: latency is your bottleneck — Sonic 3 hits 90ms time-to-first-audio versus gpt-realtime's typical 250ms — or you need 3-second voice cloning at a fraction of OpenAI's audio token price.

Frequently Asked Questions

Is gpt-realtime free?

No. gpt-realtime is pay-per-use only and has no free tier. You pay per 1M tokens consumed, separated into audio input ($32 per 1M), audio output ($64 per 1M), cached input ($0.40 per 1M), text input ($4 per 1M), and text output ($16 per 1M). New OpenAI API accounts may receive promotional credits, but there is no recurring free quota for gpt-realtime specifically. Verified on the OpenAI pricing page on April 27, 2026.

How much does gpt-realtime cost in 2026?

Audio is $32 per 1M input tokens and $64 per 1M output tokens, with cached audio input at $0.40 per 1M tokens (a 98.75 percent discount). Text is $4 per 1M input and $16 per 1M output. A 10-minute typical voice agent call costs roughly $1 at list prices before caching. OpenAI cut the price 20 percent versus the previous gpt-4o-realtime-preview at general availability on August 28, 2025.

What is gpt-realtime and why was it renamed from GPT-4o Realtime?

gpt-realtime is OpenAI's speech-to-speech voice model — it accepts audio (and optionally text and images) and returns audio (and text) without a separate speech-to-text plus LLM plus text-to-speech pipeline. OpenAI dropped the "GPT-4o" prefix when the model graduated from preview to general availability on August 28, 2025. The exact model ID is gpt-realtime; the dated snapshot is gpt-realtime-2025-08-28. Older "GPT-4o Realtime Voice" branding is being phased out in dashboards and SDKs.

How does gpt-realtime compare to ElevenLabs Conversational AI?

gpt-realtime is a single speech-to-speech model from OpenAI; ElevenLabs Conversational AI is a managed conversational platform built on top of ElevenLabs' best-in-industry voice cloning and TTS catalog. Pick gpt-realtime if you want native MCP and image input plus SIP and you are already on OpenAI. Pick ElevenLabs if voice cloning is critical or you want the larger natural-sounding voice catalog. Time-to-first-audio is roughly 250 ms on gpt-realtime versus 400 to 600 ms on ElevenLabs' pipeline approach.

What voices does gpt-realtime support?

Two new voices ship exclusively with gpt-realtime: Marin and Cedar. OpenAI's documentation explicitly recommends them for production assistants. Eight legacy voices remain available on older snapshots: alloy, ash, ballad, coral, echo, sage, shimmer, and verse. A known issue (openai-agents-python issue #1746) documents Marin and Cedar occasionally ignoring system instructions on long sessions.

Does gpt-realtime support function calling and MCP servers?

Yes. gpt-realtime supports both synchronous function calling and asynchronous function calling — the model can keep talking ("let me check that for you") while a long-running tool resolves in the background. Remote Model Context Protocol (MCP) servers are also supported: you hand the session an MCP server URL and the model calls its tools mid-conversation. The combination of MCP and async function calling is OpenAI's recommended pattern for tool-heavy voice agents.

What is the maximum session length on gpt-realtime?

The maximum session length is 60 minutes, doubled from the 30-minute cap on the previous gpt-4o-realtime-preview model. The context window is 32,000 tokens with a 4,096-token maximum response. Maximum input context is 28,672 tokens, and session instructions plus tools cap at 16,384 tokens. For longer interactions you orchestrate multiple sessions and pass conversation history manually.

Does gpt-realtime work over the public phone network?

Yes. SIP (Session Initiation Protocol) is natively supported — you can route a real phone call straight into a gpt-realtime session without a separate WebRTC bridge. This is the production-ready transport for IVR modernization and call-center deployments. WebRTC and WebSocket transports are also supported for browser-based voice apps and server-side integrations respectively.

Can gpt-realtime accept image input?

Yes. Image input was added when the model went GA on August 28, 2025. You can send inline images during a session — useful for "here's a screenshot of my dashboard, walk me through it" support flows or visual learning use cases. Output remains text and audio only — gpt-realtime does not generate images.

What languages does gpt-realtime support?

gpt-realtime supports the broad multilingual set of GPT-4-class models — English, French, Spanish, German, Italian, Portuguese, Dutch, Polish, Japanese, Korean, Mandarin, Arabic, and dozens more. Heavy accents on input audio remain a known weakness — developers on the OpenAI Forum report the model occasionally misidentifies the speaker's language on the first turn, particularly with accented English from non-native speakers.

Is gpt-realtime better than Cartesia or Vapi?

It depends. Cartesia's Sonic 3 hits 90 ms time-to-first-audio versus gpt-realtime's typical 250 ms — Cartesia wins on raw latency and offers 3-second voice cloning gpt-realtime cannot match. Vapi is an orchestrator, not a model — pick Vapi if you want to mix Claude for reasoning, OpenAI for voice, and ElevenLabs for cloning under one SDK. gpt-realtime wins for OpenAI-first stacks needing native MCP, image input, and SIP, and for teams who prioritize a single vendor for model and voice layer.

Is EU data residency available on gpt-realtime?

Yes. EU data residency was added at general availability on August 28, 2025 and is available for organizations that need it under GDPR Article 28 obligations. You configure data residency at the organization level in your OpenAI dashboard. This was one of the key enterprise blockers OpenAI removed at GA.

Verdict: 8.4/10

gpt-realtime earns an 8.4/10 on three things it does better than every other voice option: it is the most production-ready first-party voice model OpenAI has shipped, the 20 percent price cut and 98.75 percent cached-input discount actually move the unit-economics needle, and the combination of SIP plus MCP plus async function calling plus image input plus 60-minute sessions is the most complete voice-agent feature surface in 2026. The audio output cost at $64 per 1M tokens is what holds it back from a higher score — high-volume retail voice will burn fast — and the documented loop and language-identification bugs mean production teams need monitoring and fallbacks.

Score breakdown:

- Features: 9.0/10 — speech-to-speech, MCP, image input, SIP, async tools, EU residency. Most complete voice-agent feature set in 2026.

- Ease of Use: 8.5/10 — clean SDK in Python and Node, three transports, but voice agent productionization remains a non-trivial integration effort.

- Value: 7.8/10 — 20 percent cheaper than preview and cached input is excellent, but $64 per 1M output tokens is still expensive for high-volume retail voice.

- Support: 8.5/10 — OpenAI's enterprise-tier support is solid, EU residency available, but the public Developer Forum carries a long tail of unresolved bug reports.

Final word: pick gpt-realtime if you are already on OpenAI infrastructure and need GA stability with the deepest voice-agent feature set on the market — SIP plus MCP plus image input plus 60-minute sessions is hard to match elsewhere in one model. Pass on it if Cartesia's 90 ms time-to-first-audio matters more than the OpenAI ecosystem, if voice cloning is non-negotiable (go ElevenLabs), or if you prefer to orchestrate multiple models through a platform layer (go Vapi). For most production voice agents on OpenAI, this is the default in 2026.

Key Features

Pros & Cons

Pros

- Native speech-to-speech architecture — no STT plus LLM plus TTS pipeline, preserves tone, emotion and rhythm. Big Bench Audio jumps from 65.6 percent (preview) to 82.8 percent.

- 20 percent cheaper than the previous gpt-4o-realtime-preview at GA, plus a 98.75 percent discount on cached audio input ($32 to $0.40 per 1M tokens).

- Production feature set is the most complete in 2026 — native SIP phone calling, remote MCP servers, image input, asynchronous function calling, EU data residency, 60-minute max session length.

- Marin and Cedar are tangibly better voices than the legacy six (alloy, ash, ballad, coral, echo, sage, shimmer, verse) — OpenAI explicitly recommends them for production assistants.

- Three transport modes — WebRTC for browsers, WebSocket for servers, SIP for the public phone network. Most voice platforms ship one or two of these, not three.

- Generally available since August 28, 2025 — pricing, rate limits, SDK contracts and dated snapshots are stable for production planning.

Cons

- Audio output at $64 per 1M tokens means a 30-minute call costs $2 to $4 in output alone. High-volume retail voice (thousands of calls per day) makes the bill add up fast.

- Documented bugs on the OpenAI Developer Forum — agents getting stuck in repetition loops, random API errors on long sessions. The openai-agents-python issue #1746 documents Marin and Cedar voices ignoring system instructions in some configurations.

- Language-identification weakness on heavy accents — multiple developer reports flag the model occasionally misidentifying the speaker's language on the first turn, particularly accented English from non-native speakers.

- Vendor lock-in on a closed-source API from a single provider. If OpenAI deprecates the snapshot or changes pricing, your voice agent has nowhere to fall back without a re-architecture.

- 32K context window and 60-minute session cap require orchestration logic for long-running customer service interactions, multi-turn diagnostic sessions or extended tutoring conversations.

Best Use Cases

Platforms & Integrations

Available On

Integrations

We're developers and SaaS builders who use these tools daily in production. Every review comes from hands-on experience building real products — DealPropFirm, ThePlanetIndicator, PropFirmsCodes, and many more. We don't just review tools — we build and ship with them every day.

Written and tested by developers who build with these tools daily.

Frequently Asked Questions

What is gpt-realtime?

OpenAI's flagship speech-to-speech voice model — GA since August 2025, 20 percent cheaper than the gpt-4o-realtime preview, with native SIP, MCP, image input.

How much does gpt-realtime cost?

gpt-realtime costs $32/month.

Is gpt-realtime free?

No, gpt-realtime starts at $32/month.

What are the best alternatives to gpt-realtime?

Top-rated alternatives to gpt-realtime include Claude Code (9.9/10), Cursor (9.4/10), Veo 3.1 (9.4/10), Nano Banana Pro (9.3/10) — all reviewed with detailed scoring on ThePlanetTools.ai.

Is gpt-realtime good for beginners?

gpt-realtime is rated 8.5/10 for ease of use.

What platforms does gpt-realtime support?

gpt-realtime is available on Web (WebRTC), REST API, WebSocket, SIP (phone), iOS, Android.

Does gpt-realtime offer a free trial?

No, gpt-realtime does not offer a free trial.

Is gpt-realtime worth the price?

gpt-realtime scores 7.8/10 for value. It offers good value.

Who should use gpt-realtime?

gpt-realtime is ideal for: Customer support voice agents over the public phone network (T-Mobile, Oscar Health are named OpenAI customers)., In-app voice assistants and education tutors with image input for screenshots or homework., Phone-tree replacement and IVR modernization via native SIP integration., Real-time translation and interpretation across the multilingual GPT-4-class language set., Voice-driven scheduling and personal assistants via MCP server integration with calendars (Zillow built on this)., Accessibility voice-first interfaces for users with vision impairments or motor difficulties., Sales call coaching and live agent assist tools that listen to a call and prompt the human agent in real time..

What are the main limitations of gpt-realtime?

Some limitations of gpt-realtime include: Audio output at $64 per 1M tokens means a 30-minute call costs $2 to $4 in output alone. High-volume retail voice (thousands of calls per day) makes the bill add up fast.; Documented bugs on the OpenAI Developer Forum — agents getting stuck in repetition loops, random API errors on long sessions. The openai-agents-python issue #1746 documents Marin and Cedar voices ignoring system instructions in some configurations.; Language-identification weakness on heavy accents — multiple developer reports flag the model occasionally misidentifying the speaker's language on the first turn, particularly accented English from non-native speakers.; Vendor lock-in on a closed-source API from a single provider. If OpenAI deprecates the snapshot or changes pricing, your voice agent has nowhere to fall back without a re-architecture.; 32K context window and 60-minute session cap require orchestration logic for long-running customer service interactions, multi-turn diagnostic sessions or extended tutoring conversations..

Best Alternatives to gpt-realtime

Ready to try gpt-realtime?

Get started today

Try gpt-realtime Now →