Gemini "Omni", an unannounced Google video generation model, surfaced inside the Gemini mobile app on May 11, 2026, eight days before Google I/O 2026 (May 19-20). The in-app prompt reads: "Meet our new video generation model. Remix your videos, edit directly in chat, try a template, and more." Early demos posted to Reddit show a chalkboard math proof with legible text handling and a "two men eating spaghetti at an upscale seaside restaurant" redux of the Will Smith spaghetti test that broke the internet in 2023. Google has not announced Omni. No resolution, length, audio, or modality specs have been confirmed. What we have is a leaked surface, a tagline that hints at a unified text-image-video pipeline, and a keynote calendar that puts Sundar Pichai on stage on May 19. The strategic read is sharper than the demos.

What is Gemini Omni?

Gemini Omni is an unannounced Google video generation model that appeared as an in-app prompt inside the Gemini mobile app on May 11, 2026, eight days before Google I/O 2026 (May 19-20). The leaked tagline ("remix your videos, edit directly in chat, try a template") suggests a unified text-image-video pipeline integrated directly into the Gemini chat surface rather than a standalone Veo successor. Early demos showed a chalkboard math proof and a Will Smith spaghetti redux at an upscale seaside restaurant. Google has not confirmed the model. No specs (resolution, length, audio, frame rate) have leaked. The strategic question for I/O 2026 is whether Omni replaces Veo 3.1, sits beside it as the consumer surface, or whether Google launches both, and how the unified-pipeline framing positions Google against OpenAI's discontinued Sora 2, Kling 3 Omni, and Runway Gen-4.5.

What actually leaked in the Gemini app on May 11

The leak surface is narrower than the chatter around it suggests. According to 9to5Google's May 11 report, Reddit users surfaced an in-app prompt inside the Gemini mobile app naming a new video model "Omni" and describing four use cases: remix existing videos, edit directly in chat, start from a template, and "more." The "more" is doing a lot of work in that sentence. That is the entire confirmed surface — a tagline, a name, and a button.

The early demos that followed are where the technical signal lives. The first demo making the rounds shows a video generated from a text prompt of a mathematician writing a proof on a chalkboard — the kind of text-rendering torture test that broke every video model from 2023 through 2025 because legible written text inside generated video has been one of the hardest open problems in the modality. The output, by all accounts, is "fairly realistic," with chalkboard text that holds together across frames. If the demo is real and the model is shipping at I/O, this is a significant step up from where Veo 3 and Veo 3.1 landed on legible in-frame text.

The second demo is the one that will dominate social. Two men sit at an upscale seaside restaurant eating spaghetti, a deliberate callback to the 2023 Will Smith spaghetti video that became the cultural reference point for "AI video is not ready." If Google is willing to ship that exact test as a launch demo, its product team thinks the model has cleared the bar that became internet shorthand for the modality's limitations. That is a confidence signal, regardless of whether the demo is cherry-picked.

What did not leak: resolution, clip length, frame rate, audio support, the underlying architecture, the parameter count, the training data scale, the inference cost, the API surface, the rollout schedule, or whether Omni replaces or sits beside Veo 3.1. Anyone telling you they know any of this on May 15, 2026 is guessing. The honest framing is: Google built something, soft-launched the brand into the consumer app surface, and is waiting for the keynote stage to fill in the technical sheet.

The leak anatomy — why this surface, why this week

The Gemini app prompt surfacing eight days before I/O is unlikely to be an accident. Google has a long pattern of controlled pre-keynote leaks seeded through the Pixel and Android dev channels to build organic hype without breaking embargo. The 2024 Veo 1 announcement was preceded by Android Police screenshots from the internal Gemini-Workspace integration. The 2025 Veo 3 reveal had a similar pre-leak through Workspace early access. The pattern repeats — leak the brand and a teaser surface to consumer channels, let the demos and reactions seed Reddit and Twitter for a week, then walk the full technical reveal on stage.

The choice of "Omni" as a name is the part that matters most strategically. Google did not call this "Veo 4" or "Veo Lite" or "Veo Mobile." They picked a name that explicitly does not bracket the model inside the existing Veo lineage. The naming signals a different positioning — Veo is a frontier video model. Omni is a brand that scales across modalities. The same Google product team that ships a unified Gemini chat experience across text, image, and code is signaling that the next surface is the same chat experience with video baked in as a first-class modality, not as a separate Veo tab.

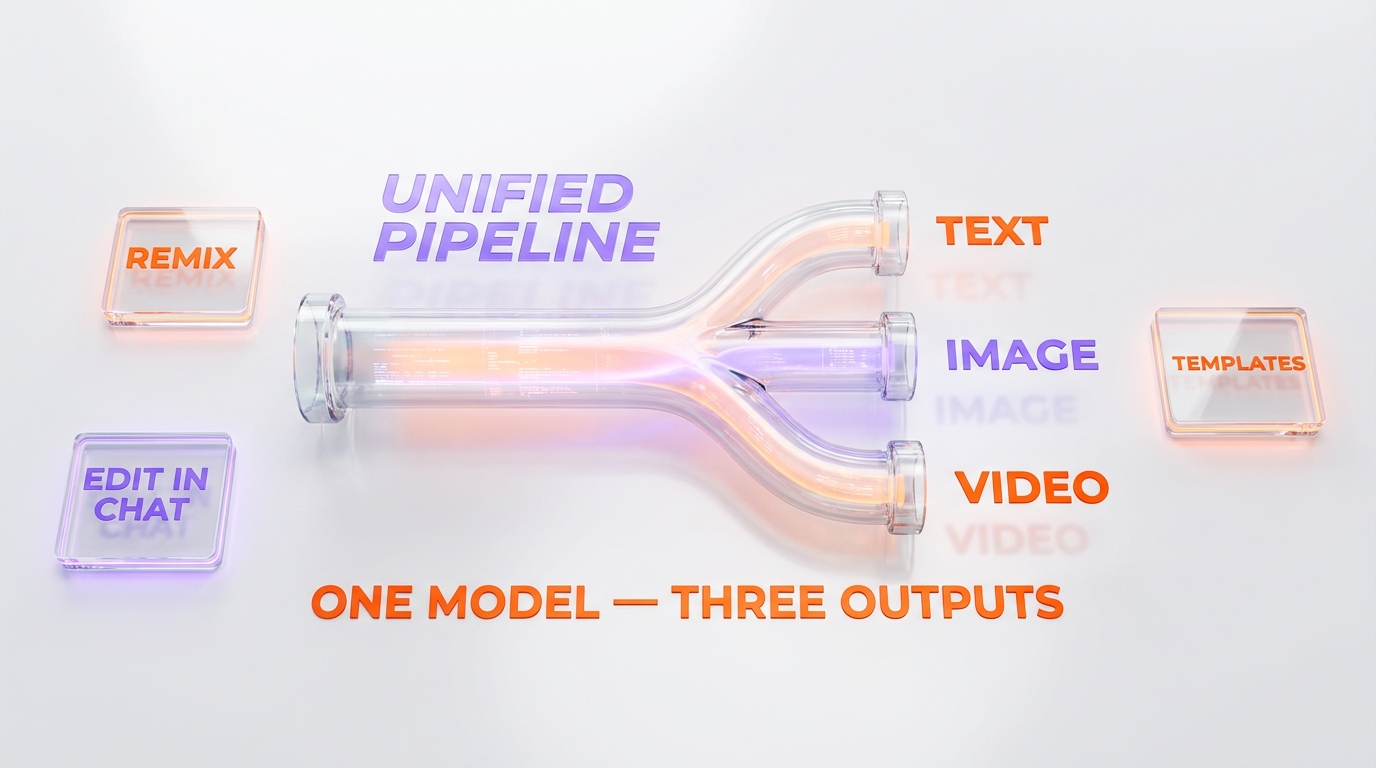

The unified pipeline read — what the tagline actually implies

The phrase that sets the architectural read is buried in the in-app prompt: "edit directly in chat." That is not the language a standalone video generation model uses. Runway Gen-4.5 launches a video editor as a dedicated surface. Veo 3.1 ships as an API endpoint and a Vertex AI deployment. Kling 3 Omni sits inside Kuaishou's separate Kling product. None of them describe the editing surface as "directly in chat" — because for them, chat is not the primary product. For Google, chat is Gemini, and Gemini is the consumer surface Google has been building for two years to compete with ChatGPT.

The unified-pipeline architectural read goes like this. A single transformer-class backbone — almost certainly trained on the same multimodal corpus as Gemini 3.1 Pro — handles text understanding, image generation, and video synthesis through a shared latent space. The user types a prompt into the Gemini chat. The model decides whether the appropriate output is text, image, video, or a chain of those, conditioned on the prompt and the conversation history. The "remix your videos" use case implies video-to-video conditioning. The "edit directly in chat" use case implies iterative refinement through dialogue, where the user says "make the lighting warmer" and the model regenerates a variant. The "try a template" use case implies style transfer with a structured prompt scaffold.
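To make that read concrete, here is a minimal sketch of how one chat turn might be routed to a generation mode. Everything in it is an assumption for illustration: the `Mode`, `Turn`, and `Session` names, the routing rules, and the idea that routing is rule-based at all. Google has disclosed nothing about how Omni works, and a real unified model would presumably learn this decision rather than hard-code it.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Mode(Enum):
    # Hypothetical modes mapped from the leaked tagline, not a Google API.
    GENERATE = "text-to-video generation"
    REMIX = "video-to-video conditioning"
    EDIT = "iterative refinement of the previous output"
    TEMPLATE = "structured prompt scaffold"


@dataclass
class Turn:
    prompt: str
    uploaded_video: bool = False      # user attached an existing clip
    template: Optional[str] = None    # user picked a named template


@dataclass
class Session:
    history: List[Mode] = field(default_factory=list)

    def route(self, turn: Turn) -> Mode:
        """Pick a (hypothetical) generation mode for one chat turn."""
        if turn.template is not None:
            mode = Mode.TEMPLATE          # "try a template"
        elif turn.uploaded_video:
            mode = Mode.REMIX             # "remix your videos"
        elif self.history:
            # A prior output exists, so a follow-up is treated as an edit.
            mode = Mode.EDIT              # "edit directly in chat"
        else:
            mode = Mode.GENERATE
        self.history.append(mode)
        return mode


session = Session()
first = session.route(Turn("a mathematician writing a proof on a chalkboard"))
follow_up = session.route(Turn("make the lighting warmer"))
print(first.value)
print(follow_up.value)
```

The point of the toy is the conversation state: the second prompt only makes sense as an edit because the session remembers that a video already exists. That dependence on history is what separates a chat capability from a stateless generation endpoint.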

If that architecture is what Google reveals on May 19 or 20, the implication for the rest of the video-model market is significant. It means Veo 3.1 was the last generation where Google treated video as a separate model family. Omni is video as a chat capability. The competitive question shifts from "which video model is best on benchmarks" to "which assistant experience can you live inside without leaving for a separate tool." That is the question OpenAI has been losing on the multimodal axis since Gemini 3 Pro shipped, and the question Anthropic does not even try to win because Anthropic's lane is coding supremacy, not consumer multimedia.

Why a unified text-image-video pipeline matters strategically

The historical analog is the 2024 shift in image generation from standalone tools (Midjourney, DALL-E as separate products) to chat-integrated image generation (ChatGPT Plus image generation, Gemini image generation in the chat surface). That shift collapsed the standalone-image-tool market for casual and prosumer users almost entirely. Midjourney survived because its quality lead and Discord community moat are durable. Most of the other standalone image tools that existed in 2023 are gone.

If Omni replicates that pattern for video, the casualties are predictable. Pika, which has been competing on accessibility and chat-style prompting, faces a direct frontal assault from a free or near-free chat-integrated Google product. Standalone video tools that sit between Runway's pro tier and the consumer chat layer have the squeezed-middle problem — too expensive to be casual, not powerful enough to compete with Runway on production work.

The labs that survive the unification wave are the ones with a clear answer to why a creative professional would leave the Gemini chat to use their tool. Runway's answer is the editor, the timeline, the LoRA infrastructure, and the Hollywood production pipeline integration. Kling's answer is the Chinese market and a different aesthetic baseline. Veo 3.1's answer, if it survives Omni's launch, is the Vertex AI enterprise compliance story. Pika's answer is — honestly — unclear if Omni ships at the price point most observers expect.

Google I/O 2026 — what we expect Google to actually announce

The keynote calendar matters. Google I/O 2026 runs May 19-20, with the main Sundar Pichai and Demis Hassabis keynote on the morning of May 19. The developer keynote on the afternoon of May 20 is where the technical specs land. That gives Google two stage moments to walk through Omni — a consumer-narrative reveal on day one, a developer-pricing-and-API reveal on day two.

Based on the pre-leak surface, the demo selection, and the pattern of every Google video model launch since Veo 1, here are the five things I expect Google to actually announce.

1. The name is Gemini Omni, not Veo 4

The leak is too well-placed to be a fake. The brand is real. Google ships Omni under that name as a chat-integrated video capability, distinct from but related to the Veo product line.

2. Veo 3.1 stays as the API and Vertex AI product

Google is unlikely to deprecate Veo 3.1 on stage. The enterprise pipeline that depends on Veo's API surface — production studios, ad tech buyers, Vertex AI procurement — needs continuity. Expect Google to position Omni as the consumer-surface evolution and Veo as the enterprise-API continuation. Veo's competitive position against Kling 3 Omni stays unchanged on the enterprise axis.

3. The consumer pricing is "free with Gemini Advanced"

The chat-integration play only works if the price point matches the rest of the Gemini consumer surface. Expect Omni to ship as a feature inside Gemini Advanced ($20 per month consumer subscription), with daily generation caps that scale with the subscription tier. The free Gemini tier likely gets limited Omni access — a few generations per day, capped resolution, watermarked output — calibrated to feel just generous enough to convert to Advanced.

4. A separate developer API at a competitive per-generation price

The day-two developer keynote ships the Omni API on Vertex AI at a price point that pressures Veo 3.1's existing tier. Expect somewhere in the range of $0.35-0.50 per generated second for the standard tier, with a Pro variant at roughly double for higher resolution and longer clips. That undercuts Runway's enterprise pricing and matches the floor Google has been setting across the Gemini 3.1 family.
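For a sense of scale, the arithmetic behind that guess is easy to sketch. The per-second range and the "roughly double" Pro multiplier below are this article's expectations, not leaked or announced numbers, and the function name is made up for illustration.

```python
from typing import Tuple

# Assumed prices: the article's expectation, not a Google price list.
STANDARD_RANGE_USD_PER_SEC = (0.35, 0.50)
PRO_MULTIPLIER = 2.0  # "roughly double" for the hypothetical Pro variant


def clip_cost_range(seconds: float, pro: bool = False) -> Tuple[float, float]:
    """Return the (low, high) estimated cost in USD for one generated clip."""
    factor = PRO_MULTIPLIER if pro else 1.0
    low, high = STANDARD_RANGE_USD_PER_SEC
    return (round(seconds * low * factor, 2), round(seconds * high * factor, 2))


standard_low, standard_high = clip_cost_range(8)
pro_low, pro_high = clip_cost_range(8, pro=True)
print(f"8 s standard: ${standard_low:.2f} to ${standard_high:.2f}")
print(f"8 s pro:      ${pro_low:.2f} to ${pro_high:.2f}")
```

Under these assumptions an 8-second clip lands between $2.80 and $4.00 on the standard tier and between $5.60 and $8.00 on Pro. If the real price sheet differs, only the two constants change.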

5. Native audio generation in the same model

The competitive bar that Google's recent Gemini 3.1 Pro family has been demonstrating is multi-modality at the architecture level, not as a bolt-on. The probability that Omni ships with native synchronized audio — voices, dialogue, ambient — is high. If Google demonstrates a one-prompt video generation with synchronized speech on stage, that is the moment the modality changes for everyone.

What I do not expect: a full deprecation of Veo on stage, a sub-$10 per month standalone Omni subscription tier, open weights or a research paper with full architecture disclosure, or a launch in regions outside the existing Gemini Advanced footprint. Google's pattern across Gemini, Veo, and Imagen has been controlled rollout over regional expansion.

Omni versus Veo 3.1, Sora 2, Kling 3 Omni, Runway — competitive positioning

The Omni leak rewrites the competitive frame for AI video generation in mid-2026. The lineup heading into Google I/O looks different than it did six months ago.

Versus Veo 3.1 — internal cannibalization or layered strategy

Veo 3.1 remains Google's frontier video model with the strongest enterprise procurement story. The question Omni raises is whether Veo 3.1 stays the frontier model and Omni sits beneath it as the consumer chat surface, or whether Omni's architecture is actually a successor that quietly retires Veo over the next 12 months. The 9to5Google leak does not answer this question. Google's pattern suggests layered strategy — Veo for enterprise, Omni for consumer — at least through 2026. The risk is that the layered story collapses if Omni's underlying model is actually stronger than Veo on the metrics that matter.

Versus Sora 2 — moot, because Sora 2 is discontinued

This is the part of the competitive map that has changed most dramatically. OpenAI discontinued Sora 2 earlier in 2026, redirecting video generation effort into the broader GPT-5.5 multimodal stack rather than a standalone video product. That means the comparison most consumer-facing tech press will reach for — "is Omni better than Sora 2" — has no live answer. Sora 2 is not a shipping product to compare against. The comparison that matters is Omni versus whatever OpenAI ships at the GPT-5.5 video tier, which has been sub-keynote since OpenAI's Daybreak announcement.

Versus Kling 3 Omni — coincidence, or naming convergence

Kling 3 Omni already exists. Kuaishou's video model has used the "Omni" branding for over six months, framing the same unified-modality positioning that Google's leak hints at. The naming convergence is either coincidence or signal — both companies independently picked the same brand metaphor for the same architectural bet. In our Veo 3.1 vs Kling 3 Omni comparison, we mapped Kling's strengths on Asian-market aesthetics, animation styles, and cheaper per-generation pricing. Google's Omni inherits the same brand metaphor with materially more distribution. The Kling-Omni-versus-Gemini-Omni naming conflict alone will eat days of press cycle confusion at I/O.

Versus Runway Gen-4.5 — different lane, no direct conflict

Runway Gen-4.5 sits in a different lane than Omni. Runway's product is the timeline editor, the LoRA training, the Hollywood production pipeline, and the cinematographer-grade controls — none of which are what a chat-integrated consumer surface tries to be. Our Runway vs Veo 3.1 comparison and Runway vs Kling AI both land on the same conclusion — Runway wins on production pipeline integration, the frontier video models win on raw generation quality. Omni does not change that. The risk for Runway is that a free-with-Gemini-Advanced consumer surface cannibalizes their lower-tier subscribers who never needed the pro features in the first place.

Versus Pika — the squeezed middle

Pika has the hardest competitive position in the post-Omni landscape. Pika's pitch is accessible, chat-style video generation at consumer pricing. That is exactly the surface Omni is built to occupy. Pika's defense is community, brand, and the fact that they have been iterating on the consumer-chat-style video experience longer than Google has shipped it inside Gemini. The strategic question for Pika is whether the early-mover advantage and creator community moat survive a Google product that ships free inside an assistant the user already pays for.

What the early demos actually tell us — and what they hide

Two demos surfaced. Both are deliberate.

Demo 1 — the chalkboard math proof

Legible written text inside generated video has been the modality's hardest problem since 2023. Every major frontier video model (Sora 1 and 2, Veo 1 through 3.1, Kling 1 through 3, Runway Gen-1 through 4.5) has shipped with text rendering as a known weakness. Models that nail static image text (Midjourney v7, Imagen 4) collapse when asked to maintain text legibility across video frames. The chalkboard demo is Google saying "we solved this." If the demo is representative of the model's actual capability and not a hand-picked best-of-50 sample, Omni has cleared a bar that consumer creators have been waiting on since 2023.

The cynical read: Google's product team picked this demo because they know it is the most defensible win. The chalkboard format is constrained — black background, white text, fixed camera, minimal motion. The harder text-rendering test is "video advertisement with logo legibility, dynamic camera, full color, and product packaging." That demo did not leak. Whether that is because Google has not solved it yet or because they are saving it for the keynote is the open question.

Demo 2 — Will Smith spaghetti redux

The two-men-at-a-seaside-restaurant demo is meme bait designed for Twitter virality. The deliberate callback to the 2023 Will Smith video is the kind of cultural-reference launch that Google's consumer marketing team has been getting better at since the Gemini 3 launch cycle. The technical content of the demo is less the food and more the multi-character interaction, the environmental consistency, and the fine-grained motor control (utensils, chewing, conversation gestures) that the 2023-era models could not maintain.

The cynical read on this demo: the seaside restaurant is a controlled environment with predictable lighting, a static camera, and limited character variation. The harder consumer test is "generate a 10-second clip of someone walking their dog through a busy street while making a phone call." That demo did not leak either. Google's product team is showing the demos that win on social — clean, viral, easy to share. That is fair launch marketing. It is not yet evidence the model handles the long tail of consumer use cases.

What could go wrong — the bear case for Omni

I have written 2,500 words on why the Omni leak is a strategic move that reshapes the video model market. The honest counter is that several scenarios would make the leak look much less significant than the early reaction implies.

Bear case 1 — the demos are cherry-picked beyond the normal range

Every video model launch ships with demos that represent the upper end of the model's capability. The relevant question is the gap between launch demo quality and median user output. Veo 3 launched with demos that were significantly better than the median user could reproduce. Kling 3 Omni followed the same pattern. If Omni's chalkboard demo is best-of-100 cherry-picked and the median user gets gibberish text 50% of the time, the actual product is much closer to Veo 3.1 than the demos suggest.
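The cherry-picking math is worth spelling out, because it shows how little one clean demo proves. The sketch below assumes each generation is an independent draw with the same chance of producing legible text. That is a simplification, and the rates are illustrative, not measurements of any model.

```python
def prob_at_least_one_clean(p_clean: float, n_samples: int) -> float:
    """Chance that a best-of-n pick contains at least one clean output,
    assuming independent samples with per-sample success rate p_clean."""
    if not 0.0 <= p_clean <= 1.0:
        raise ValueError("p_clean must be between 0 and 1")
    return 1.0 - (1.0 - p_clean) ** n_samples


# Illustrative per-sample success rates, not measured values.
for p in (0.05, 0.50):
    chance = prob_at_least_one_clean(p, 100)
    print(f"per-sample rate {p:.0%}: best-of-100 yields a clean demo {chance:.1%} of the time")
```

Under the independence assumption, a model that renders clean text only 5% of the time still produces a usable best-of-100 demo more than 99% of the time. A single polished clip therefore says almost nothing about whether the median rate is 5%, 50%, or 95%. Only hands-on sampling answers that.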

Bear case 2 — the pricing mismatch closes the access advantage

The chat-integration play works if the pricing is consumer-affordable. If Omni ships with per-generation caps that hit any meaningful creator workflow within hours and the price-per-generation outside the Advanced subscription is competitive with Runway's per-generation pricing, the "free with Gemini Advanced" narrative dissolves. The casualties — Pika, the squeezed middle — get a longer runway than the early reaction suggests.

Bear case 3 — internal cannibalization breaks the layered strategy

If Omni is genuinely the better model on the metrics that matter to Veo's enterprise customers, the Vertex AI Veo 3.1 customers migrate to Omni faster than Google's enterprise sales motion can manage. The result is a forced repricing of Veo or a forced sunset, neither of which serves Google's I/O narrative. The internal Google product politics around this layered story will matter more than the keynote framing suggests.

Bear case 4: the Kling 3 Omni naming conflict triggers a legal dispute

Kuaishou shipped Kling 3 Omni months before Google's leak. The naming overlap is not a coincidence the legal teams will ignore. Either Google paid for the brand before the leak, the two companies reach a coexistence agreement, or one of them rebrands. The most likely outcome is coexistence, but the press cycle confusion at I/O is real and dilutes the launch impact.

Bear case 5 — the regulatory response throttles the consumer surface

A free chat-integrated video generation tool with photorealistic output is the worst-case product surface for the deepfake regulatory environment. If Omni ships before Google's Trust & Safety story is bulletproof, the regulatory and political response in the EU, the UK, and the US legislatures will throttle the consumer rollout. Google has been more cautious than OpenAI on deepfake-adjacent surfaces, which is why Gemini 3.1 Flash-Lite shipped cleanly while OpenAI's recent launches have faced more scrutiny. Omni is the first product where Google's safety calculus will be tested at consumer scale.

What product buyers and creators should actually do this week

The leak does not require an immediate move. The keynotes are on May 19 and 20. The technical sheet, the pricing, the API surface, and the rollout schedule should all land then. Until May 19, the rational position is to delay any new video-model procurement decision if it can wait, and to set up monitoring for the keynote reveal.

If you are an enterprise buyer evaluating Veo 3.1

Wait. Whatever Veo's pricing or capabilities look like on May 18 will probably not match the May 20 picture. The likely outcome is that Veo gets a price adjustment, a feature update, or both alongside the Omni announcement. Signing a Veo Vertex AI contract this week is paying a premium for information that is free once the keynotes are over.

If you are a Runway Pro customer

You are not the target of the Omni launch. The Runway pro pipeline — timeline editor, LoRA, project management — is not what a chat-integrated consumer video product replaces. The honest read is that your subscription is safe through 2026, but the Runway product team needs to articulate a clearer pro-tier differentiation story than they have in the last six months.

If you are a Pika subscriber

You should watch the I/O keynote closely. The Omni pricing tier and capability bar determine whether Pika's consumer-chat positioning has a defensible moat or whether you are paying $10-30 per month for something Google ships free inside Gemini Advanced.

If you are a creator who has not yet committed to a video stack

Wait through I/O. The decision tree for creators is going to look meaningfully different on May 21 than it does on May 15.

How to actually watch the I/O 2026 keynote and what to look for

Google I/O 2026 runs May 19-20 at the Shoreline Amphitheatre in Mountain View. The main keynote (Sundar Pichai, Demis Hassabis) starts at 10 AM Pacific on May 19. The developer keynote is on the afternoon of May 20. Both stream free on YouTube via the official Google channel.

Five things to watch for in the keynotes, each of which confirms or denies part of the Omni read:

- Naming confirmation — does Google announce "Gemini Omni" as a product name, or does the model ship under a different brand?

- Veo 3.1 status — does Veo get a deprecation notice, a continuation announcement, or silence?

- Pricing structure — is the consumer access free with Gemini Advanced, or does it require a separate subscription?

- Audio: does the on-stage demo include synchronized native audio, or is the video silent?

- Resolution and length — does Google publish actual specs for clip length, frame rate, and resolution, or does the spec sheet stay vague?

The answers to those five questions are the difference between Omni being the most significant video model announcement of 2026 and Omni being a marketing rebrand that does not meaningfully change the competitive map. We will know by May 20. The leak is a tease. The keynote is the answer.

Frequently Asked Questions

What is Gemini Omni?

Gemini Omni is an unannounced Google video generation model that surfaced as an in-app prompt inside the Gemini mobile app on May 11, 2026, eight days before Google I/O 2026 (May 19-20). The leaked tagline reads "Meet our new video generation model. Remix your videos, edit directly in chat, try a template, and more." Google has not officially announced the model. No technical specs (resolution, length, audio, frame rate) have leaked. Early demos showed a chalkboard math proof with legible text handling and a two-men-eating-spaghetti redux of the 2023 Will Smith spaghetti test.

When will Google announce Gemini Omni officially?

The most likely announcement window is Google I/O 2026, which runs May 19-20 at the Shoreline Amphitheatre. The main keynote with Sundar Pichai and Demis Hassabis is on the morning of May 19. The developer keynote is the afternoon of May 20, which is the more likely moment for the technical sheet, API pricing, and Vertex AI deployment timeline.

How is Gemini Omni different from Veo 3.1?

The leak suggests Omni is positioned as a chat-integrated consumer video capability — the in-app tagline says "edit directly in chat" — while Veo 3.1 remains Google's frontier video API for enterprise Vertex AI deployments. The likely strategy is a layered product where Veo 3.1 continues as the enterprise API and Omni sits inside the Gemini consumer chat surface. Whether Omni's underlying model is the same as Veo 3.1 or a new architecture is not confirmed.

Is Gemini Omni real or just a rumor?

The brand surface is real: Reddit users surfaced the in-app prompt inside the Gemini mobile app on May 11, 2026, and 9to5Google reported on it the same day. What is unconfirmed is the underlying model architecture, pricing, rollout timing, technical specs, and whether the early demos circulating are representative of the actual model capability. Google has not officially announced anything. The honest framing is that Omni is a reported brand and a reported in-app surface, with everything else being either inference or pre-keynote leak.

How does Gemini Omni compare to OpenAI's Sora 2?

The comparison is moot in mid-2026 because OpenAI discontinued Sora 2 earlier in the year, redirecting video generation effort into the broader GPT-5.5 multimodal stack rather than maintaining Sora as a standalone product. The relevant OpenAI comparison for Omni is whatever GPT-5.5 video capability OpenAI announces at the next OpenAI launch event, which has been sub-keynote since the Daybreak announcement.

How does Gemini Omni compare to Kling 3 Omni?

Kuaishou's Kling 3 Omni has used the "Omni" branding for over six months — meaning the naming convergence with Google's leaked product is either coincidence or signal. Kling 3 Omni has strengths on Asian-market aesthetics, animation styles, and cheaper per-generation pricing. Google's Gemini Omni inherits the same brand metaphor with materially more distribution through the Gemini consumer app. The naming overlap is likely to create press confusion at I/O 2026.

How does Gemini Omni compare to Runway Gen-4.5?

Runway Gen-4.5 and Gemini Omni sit in different lanes. Runway's product is the timeline editor, LoRA training, and Hollywood production pipeline — pro creator tools. Omni is positioned as a chat-integrated consumer surface based on the leaked tagline "edit directly in chat." The lanes do not directly conflict on the pro creator axis. The risk for Runway is consumer cannibalization at the lower subscription tiers if Omni ships free with Gemini Advanced.

What pricing will Gemini Omni have?

Google has not announced pricing. The expected pricing based on Google's pattern with the Gemini 3.1 family is that Omni ships as a feature inside the $20 per month Gemini Advanced consumer subscription, with daily generation caps. The free Gemini tier likely gets limited Omni access with watermarked output. The developer API on Vertex AI is expected to land in the range of $0.35 to $0.50 per generated second for the standard tier, but this is unconfirmed inference based on Google's pricing pattern, not a leaked number.

What did the early demos actually show?

Two demos surfaced. The first is a chalkboard math proof with legible written text handled across frames, which is significant because legible text inside generated video has been one of the hardest open problems in the modality. The second is a Will Smith spaghetti redux showing two men eating spaghetti at an upscale seaside restaurant, a deliberate cultural callback to the 2023 Will Smith spaghetti video that became internet shorthand for AI video failure. Both demos are best read as selected launch material, not as evidence of median user output.

Will Gemini Omni ship with native audio?

Unconfirmed. Google has not disclosed whether Omni generates synchronized native audio in the same model. The competitive bar set by the Gemini 3.1 Pro family suggests that audio at the architecture level (not as a bolt-on) is likely, but this is inference. If Google demonstrates one-prompt video generation with synchronized speech on stage at I/O 2026, that is the moment the modality changes for the entire video model market.

Should I buy a Veo 3.1 enterprise contract this week?

Wait. Whatever Veo's pricing or capabilities look like on May 18, they will probably not match the May 20 picture after the I/O keynotes. The likely outcome is that Veo gets a price adjustment, a feature update, or both alongside the Omni announcement. Signing a Veo Vertex AI contract this week pays a premium for information that becomes free once the keynotes are over. Set up keynote monitoring instead.

What could make the Gemini Omni leak look overhyped?

Five scenarios would dilute the launch impact. The demos turn out to be heavily cherry-picked relative to median user output. The pricing closes the access advantage if per-generation caps hit creator workflows fast. Internal Veo 3.1 cannibalization breaks the layered enterprise versus consumer strategy. The naming conflict with Kuaishou's existing Kling 3 Omni triggers legal noise. Regulatory pressure on deepfake-adjacent surfaces throttles the consumer rollout in the EU, UK, and US. All five are plausible. None are guaranteed.

Sources

- 9to5Google — "Gemini 'Omni' video model shows up with some early demos" (May 11, 2026): 9to5google.com

- Google I/O 2026 official event page: io.google

- Google DeepMind — Veo product page (continuing reference): deepmind.google

- Google Gemini app (May 11, 2026 in-app prompt — original surface, Reddit-sourced screenshots)